What is a data center fabric?

A data center fabric is a modern data center network architecture in which network devices are deployed in two (and sometimes three) highly interconnected layers that together behave as a single fabric. Unlike traditional multitier architectures, a data center fabric flattens the network, reducing the number of devices that traffic must cross between endpoints within the data center. This design delivers high efficiency and low latency.

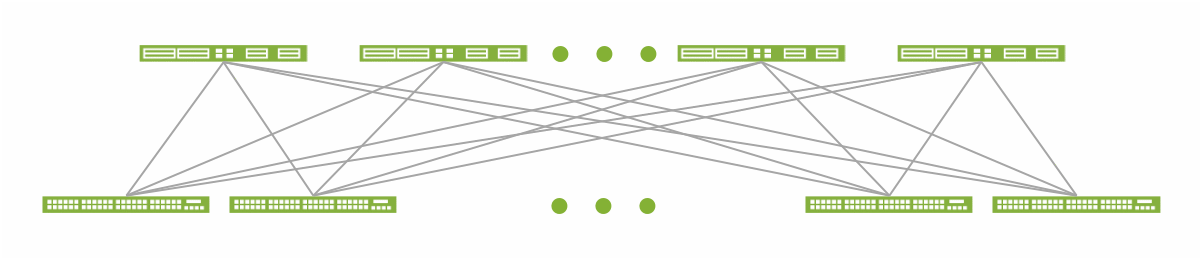

Spine-leaf data center fabric architecture.

Data center fabrics of all kinds share another design goal. They provide a solid layer of connectivity in the physical network, and move the complexity of providing network virtualization, segmentation, stretched Ethernet segments, workload mobility, and other services to an overlay that rides on top of the fabric. The fabric itself, when paired with an overlay, is called the underlay.

What Problems Do Data Center Fabrics Solve?

As applications move from monolithic to disaggregated and microservices design patterns, traffic patterns in the data center move, too. They shift from north-south, between devices within and outside the data center, to east-west, between devices within the data center.

Organizations that move beyond monolithic applications also adopt an agile IT approach, where they deploy applications more quickly in smaller steps and respond to transport requirements that can change rapidly. Also, many organizations are moving to virtualized workloads, such as containers and virtual machines, to support rapid shifts in capacity over time on a smaller set of physical servers.

Traditional hierarchical data center network designs aren’t well suited to support these requirements. So, many organizations are replacing their hierarchical networks with flatter, more agile data center fabrics.

How Does a Data Center Fabric Work?

Modern data center fabrics typically use a two-tier spine-and-leaf architecture, also known as a Clos fabric. Although the fabric has two tiers, it is usually described as having three stages, because data passes through three devices to reach its destination. For example, east-west data center traffic typically travels upstream from a given server through a leaf device to a spine device, and then downstream through another leaf device to the destination server.
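The leaf-spine-leaf path described above can be sketched in a few lines of Python. This is a minimal illustration, not a model of any particular product: the device names, the `build_fabric` and `east_west_paths` helpers, and the assumption that every leaf connects to every spine are all hypothetical choices made for the example.

```python
def build_fabric(num_spines, num_leaves):
    """Return (spines, leaves) name lists for a two-tier fabric.

    Assumption for this sketch: every leaf has one link to every spine.
    """
    spines = [f"spine{i}" for i in range(1, num_spines + 1)]
    leaves = [f"leaf{i}" for i in range(1, num_leaves + 1)]
    return spines, leaves


def east_west_paths(spines, src_leaf, dst_leaf):
    """List the device sequences between servers attached to two leaves."""
    if src_leaf == dst_leaf:
        # Both servers hang off the same leaf, so no spine is involved.
        return [[src_leaf]]
    # One candidate path per spine: leaf -> spine -> leaf (three stages).
    return [[src_leaf, spine, dst_leaf] for spine in spines]


spines, leaves = build_fabric(num_spines=4, num_leaves=8)
paths = east_west_paths(spines, "leaf1", "leaf5")
for path in paths:
    print(" -> ".join(path))
print(len(paths), "paths, each crossing", len(paths[0]), "devices")
```

In this sketch, the number of alternative paths between two leaves equals the number of spines, and each of those paths crosses exactly three devices.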

In a fabric design, there isn’t a network core, which changes the fundamental nature of the network itself.

- While intelligence can be moved to the core of a traditional hierarchical network (for instance, to implement policy), the intelligence in a spine-and-leaf fabric is moved to the edge. It’s implemented either in leaf devices (such as top-of-rack, or ToR, switches) or in endpoint devices connected to the fabric (the workloads). The spine devices merely act as a transit layer for the leaf devices.

- Spine-and-leaf fabrics readily accommodate east-west traffic flows wherever they occur in the network, which isn't the case in a traditional hierarchical design.

- All traffic in a spine-and-leaf fabric, whether east-west or north-south, is treated equally: it’s processed by the same number of devices. This uniformity greatly aids in building fabrics with strict delay and jitter requirements.

The scale of a spine-and-leaf fabric is constrained by the number of available ports.

On the leaf devices:

- Downstream ports available to connect endpoints

- Upstream ports available to connect to spine devices

On the spine devices:

- Downstream ports available to connect to leaf devices

However, adding capacity to a spine-and-leaf fabric is easy. You simply add more spine or leaf devices as needed alongside the existing devices. This approach allows a spine-and-leaf fabric to “scale out” in the same way servers and services do: by adding more devices in parallel. This contrasts with “scaling up” by adding more capacity to existing devices, as in a traditional hierarchical design.
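The port constraints and scale-out behavior described above can be made concrete with a small planning sketch. Everything here is an assumption for illustration: the `FabricPlan` class, the port counts and link speeds, and the wiring rule of one uplink from every leaf to every spine. The oversubscription ratio (endpoint-facing bandwidth divided by spine-facing bandwidth on a leaf) is a commonly used planning metric that this article does not itself define.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FabricPlan:
    spines: int
    leaves: int
    leaf_downlinks: int   # endpoint-facing ports per leaf
    leaf_uplinks: int     # spine-facing ports available per leaf
    spine_ports: int      # leaf-facing ports available per spine
    downlink_gbps: float
    uplink_gbps: float

    def validate(self):
        # Assumed wiring: one uplink from every leaf to every spine.
        if self.spines > self.leaf_uplinks:
            raise ValueError("not enough leaf uplinks to reach every spine")
        if self.leaves > self.spine_ports:
            raise ValueError("not enough spine ports to reach every leaf")

    def endpoint_ports(self):
        """Total ports available for servers and other endpoints."""
        return self.leaves * self.leaf_downlinks

    def oversubscription(self):
        """Per-leaf ratio of downstream to upstream bandwidth."""
        down = self.leaf_downlinks * self.downlink_gbps
        up = self.spines * self.uplink_gbps
        return down / up


plan = FabricPlan(spines=4, leaves=16, leaf_downlinks=48, leaf_uplinks=8,
                  spine_ports=32, downlink_gbps=25, uplink_gbps=100)
plan.validate()
print(plan.endpoint_ports(), plan.oversubscription())

# Scale out: more leaves add endpoint ports, more spines add uplink bandwidth.
more_leaves = replace(plan, leaves=32)
more_spines = replace(plan, spines=8)
for candidate in (more_leaves, more_spines):
    candidate.validate()
    print(candidate.endpoint_ports(), candidate.oversubscription())
```

With these made-up numbers, doubling the leaves doubles the endpoint ports, while doubling the spines halves the oversubscription ratio. Asking for a 33rd leaf or a 9th spine would fail validation, which is the port limit at work.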

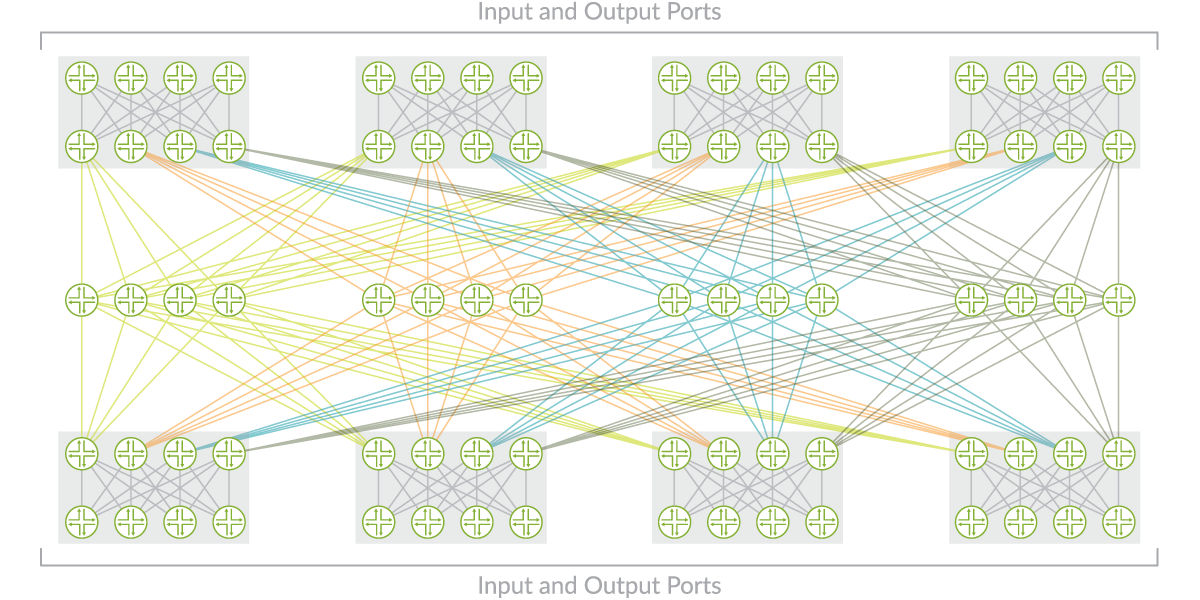

Beyond increasing port capacity, you can also increase overall fabric scalability by creating multiple spine-and-leaf fabric pods and interconnecting them with an additional spine-like layer.

Example design for a large-scale fabric.

This pod-based design has advantages in large-scale data center fabrics.

- Provides for building very large fabrics using a single device type throughout the network (a single SKU design)

- Enables generational management of hardware and software over time

- Makes it easy to steer traffic using technologies such as segment routing
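As a rough illustration of how a pod-based design grows, the sketch below lists the devices crossed between two attachment points and totals the endpoint ports across pods. The `(pod, leaf)` addressing, the device names, the single collapsed `"interconnect"` hop, and the sizing numbers are all hypothetical. A real design has many devices in each tier.

```python
def fabric_path(src, dst):
    """Return the fabric tiers crossed between two (pod, leaf) attachment points."""
    src_pod, src_leaf = src
    dst_pod, dst_leaf = dst
    src_name = f"pod{src_pod}-leaf{src_leaf}"
    dst_name = f"pod{dst_pod}-leaf{dst_leaf}"
    if src == dst:
        return [src_name]
    if src_pod == dst_pod:
        # Traffic stays inside the pod: leaf -> pod spine -> leaf.
        return [src_name, f"pod{src_pod}-spine", dst_name]
    # Traffic leaves the pod through the additional spine-like layer.
    return [src_name, f"pod{src_pod}-spine", "interconnect",
            f"pod{dst_pod}-spine", dst_name]


def total_endpoint_ports(pods, leaves_per_pod, downlinks_per_leaf):
    """Endpoint-facing ports across all pods (hypothetical sizing helper)."""
    return pods * leaves_per_pod * downlinks_per_leaf


same_pod = fabric_path((1, 1), (1, 2))
cross_pod = fabric_path((1, 1), (2, 3))
print(len(same_pod), same_pod)
print(len(cross_pod), cross_pod)
print(total_endpoint_ports(pods=4, leaves_per_pod=16, downlinks_per_leaf=48))
```

Under these assumptions, traffic within a pod still crosses three devices, traffic between pods crosses five, and adding a pod increases endpoint capacity without changing the existing pods.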

Juniper Networks Implementation

Juniper’s data center fabric solutions include two main components.

- Juniper switches provide the high-performance, high-density platforms required to build innovative data center fabrics that scale to thousands of ports.

- Juniper’s joint solution with Apstra enables you to easily manage your physical data center infrastructure. Apstra AOS enables you to automate, manage, and monitor your data center fabric — simplifying operations for Day 0 and beyond.

These solutions accommodate physical and virtualized infrastructures, enable simplified management, and meet the requirements of virtualization, cloud computing, and big data.

Data Center Fabric FAQs

What is a data center switch fabric?

A data center fabric is a system of switches and the interconnections between them that can be represented as a unified logical entity. The fabric allows for a flattened network architecture in which any attached server or storage node can connect to any other server or storage node. Similarly, any switch node can connect to any other switch, server, or storage node.

What is the difference between a data center fabric and a traditional network?

The term “fabric” describes how switch, server, and storage nodes connect to all other nodes in a mesh configuration, evoking the image of tightly woven fabric. These links provide multiple redundant communications paths and deliver higher total throughput than traditional networks.

Are there different types of data center fabric architectures?

Yes, there are both proprietary architectures and open, standards-based fabrics, such as EVPN-VXLAN.

What is EVPN-VXLAN?

EVPN-VXLAN is an overlay network that sits on top of an IP fabric underlay. It enables the extension and interconnection of Layer 2 data center domains and the placement of endpoints (such as servers or virtual machines) anywhere in the network, including across multiple data centers.
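To show what "riding on top of" the underlay means on the wire, the sketch below builds and parses the 8-byte VXLAN header defined in RFC 7348, which carries a 24-bit VXLAN Network Identifier (VNI) in front of the original Ethernet frame. The helper names are hypothetical. The outer Ethernet, IP, and UDP headers that the underlay actually routes on are omitted, and so is the EVPN control plane.

```python
import struct

VXLAN_UDP_PORT = 4789          # IANA-assigned UDP destination port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08    # "I" flag: the VNI field carries a valid value


def build_vxlan_header(vni):
    """Pack the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Byte 0: flags; bytes 1-3: reserved; bytes 4-6: VNI; byte 7: reserved.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header):
    """Extract the VNI from the first 8 bytes of a VXLAN payload."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not ((flags_word >> 24) & VXLAN_FLAG_VNI_VALID):
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


def encapsulate(inner_ethernet_frame, vni):
    """Prefix an inner Layer 2 frame with a VXLAN header (outer headers omitted)."""
    return build_vxlan_header(vni) + inner_ethernet_frame


inner = bytes(14) + b"payload"   # placeholder frame: zeroed Ethernet header + data
packet = encapsulate(inner, vni=10010)
print(len(packet) - len(inner), "bytes of VXLAN overhead, VNI", parse_vni(packet))
```

Because the underlay only sees ordinary UDP/IP packets between tunnel endpoints, the same Layer 2 segment (one VNI) can span any leaves that have IP reachability to each other.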

What data center technology, solutions, and products does Juniper offer?

Juniper offers best-in-class data center QFX Series Switches, which form a reliable switching fabric. The Juniper Apstra intent-based networking solution handles all aspects of automation, telemetry collection, and analysis of your data center network. Together, the QFX Series and Juniper Apstra deliver a fully automated EVPN-VXLAN framework for building, extending, and managing your data center fabric, including Day 0 design, Day 1 deployment with Zero Touch Provisioning (ZTP), and Day 2+ operations.