Bob Friday Talks - Coding Minds: Crafting Intelligence in Silicon

Unveil the secrets of AI development and training

Are you ready to stop viewing AI as some sort of magical black box and gain true confidence in its capabilities? In this episode, our Chief AI Officer, Bob Friday, will help demystify AI by walking you through how learning models are built and developed.

You’ll learn

How to train your AI

How to measure the effectiveness of an AI model

Transcript

0:00 hi I'm rahu and welcome to another

0:02 episode of Bob Friday Talks AI is often

0:05 regarded as a black box or magic

0:08 demystifying AI can be done by

0:10 explaining how AI is built and developed

0:13 today I'm joined by Bob Friday chief AI

0:15 officer here at Juniper today Bob will

0:18 be walking us through how to train your

0:20 AI thanks so much for being here Bob

0:22 thanks for having me let's dive right

0:24 into some fundamentals so what is a data

0:27 scientist and what's their role you

0:30 know what I always tell people data

0:32 scientists are like unicorns you know you're

0:34 looking for someone who really

0:36 understands statistics and math and

0:38 can also write production software these

0:41 are not easy people to find if you look

0:44 at Mist the first data scientist

0:46 I hired was actually a particle

0:48 physicist someone working on physics

0:50 experiments and looking for signals in

0:52 these big physics experiments very

0:54 similar to what we're doing in

0:56 networking trying to find a signal in a

0:58 lot of noise another great example is a

1:01 data scientist who is actually working

1:03 on modeling protein molecules and amino

1:06 acids and he was doing that up to the

1:08 point when Google DeepMind actually

1:09 solved that problem with deep learning

1:12 you know so these are people who

1:13 actually understand hardcore math and

1:16 statistics but can also write great

1:18 production software these are actually

1:20 very hard people to find so how

1:22 do proteins and amino acids actually relate

1:24 to the building of AI because I've heard

1:26 this come up a few times in my

1:27 conversations with other people and it's

1:29 never been quite clear to me like

1:31 how do those two things link well you

1:33 think about the protein problem right

1:34 you know they've got all these amino

1:35 acids they're trying to predict the

1:37 properties of proteins based on how

1:40 amino acids join there's billions of

1:42 different combinations here before

1:44 deep learning they were actually trying to

1:46 solve this problem with simulations you

1:48 know they were actually going to linear

1:50 accelerators to get x-rays to actually

1:52 look at these protein molecules what

1:54 Google DeepMind did was actually be

1:56 able to train these deep learning models

1:58 now to predict the properties of a

2:00 protein molecule knowing how the

2:03 amino acids join so that's a great

2:05 example where deep learning is actually

2:06 making a difference in the medical space

2:09 so tell us how do you train your AI yeah

2:12 you know when you look at AI models they

2:14 come in different flavors you know the

2:16 basic ones start with what they call

2:18 supervised training this is where you

2:20 have labeled data where you know the

2:22 answer you know and this is what OpenAI

2:24 and ChatGPT are doing when they train

2:26 ChatGPT they're taking tons of data off

2:29 the internet to predict the next word

2:31 in a sequence of words you know what

2:33 we're doing in networking is like with

2:35 Zoom data we're taking Zoom data

2:37 combined with network feature data and

2:39 we're building models now that can

2:41 predict Zoom latency and user experience

2:44 so that is an example of supervised

2:46 training self-supervised training is

2:49 where you look back on existing data

2:51 like anomaly detection you know where

2:53 we're looking back for the last week or

2:55 months to actually predict the next 10

2:57 minutes or hours based on data we've

3:00 seen in the past and that's what we

3:02 call self-supervised training and then we get

3:04 into reinforcement learning and this is

3:07 where we're basically training models

3:08 based on rewards you know where we're

3:11 basically looking to optimize the user

3:13 experience on the wireless network and

3:15 we're trying to

3:18 optimize the symmetry between the user

3:20 and the network so that's an example of

3:22 supervised self-supervised and

3:25 reinforcement learning that we're

3:26 working on how long does it take to

3:28 train the AI yeah you know training

3:30 models varies quite a bit you know you

3:32 look at what they're doing at OpenAI and

3:34 ChatGPT that's millions of dollars

3:37 weeks and months worth of training on

3:38 these big large language models if you

3:41 look at what we're doing here at Juniper

3:43 Mist really we're probably training on

3:45 the order of seconds and minutes you

3:47 know whether we're doing a big

3:48 supervised training model which may take

3:50 minutes if we're looking at all the data

3:52 across a universe of networks or we're

3:55 doing some training on a site level

3:58 where we're looking back for the last

3:59 week or months right so yeah training

4:02 can range anywhere from seconds to

4:04 minutes to weeks depending on how big

4:06 these models are that you're training so the

4:09 term I've heard a lot in conversation

4:10 come up is AI inference can you tell us

4:12 a little bit about what that is yeah

4:14 when they say AI inference what they're

4:16 really talking about is actually running

4:17 a model in production and getting an

4:19 answer you know when we train these

4:22 models for like LLMs right we may actually

4:26 spend more time and expense training the

4:28 model than actually running it in operation

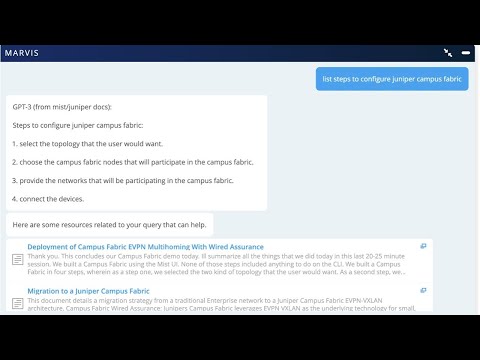

4:30 you know when you look at our LLM Marvis

4:33 chatbot you know we may have to train it

4:36 on hundreds of thousands of questions

4:39 and answers whereas when we actually put

4:41 it into our actual network we may be

4:44 dealing with only hundreds of questions

4:46 or thousands of questions per week so

4:48 you think about training and inference

4:51 inference refers to actually running the

4:53 model in a production environment how do

4:55 you measure the effectiveness of an AI

4:57 model yeah I think there's a couple

4:59 answers to this question you know when a

5:01 data scientist trains like a supervised

5:03 model they'll typically take a training

5:06 data set they'll take 70% of the data to

5:10 actually train the model then they may

5:11 save 20 or 30% to actually measure the

5:14 effectiveness and how accurate that

5:16 model is so that's one technique or

5:19 definition of effectiveness from a data

5:21 science point of view from a customer

5:23 facing point of view here at Juniper we

5:26 actually run our models in our customer

5:28 support team and we do something called

5:30 Marvis efficacy where we basically have

5:33 actual users grade our models and tell

5:35 us how often the model is getting the

5:37 right answer so you can think of

5:39 effectiveness at the data science level

5:41 when you're training your models and

5:43 then there's actually the customer

5:44 facing effectiveness how effective it is

5:46 with your actual customers or the

5:47 problem you're working on thanks so much

5:49 for your thoughts today Bob we really

5:51 appreciate your time and thank you for

5:52 listening and until next

5:58 time
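
Editor's sketch: the holdout evaluation Bob describes (train on about 70% of the labeled data, measure accuracy on the remaining 30%) could look roughly like the Python below. This is a minimal illustration and not Juniper's actual pipeline. The latency data is synthetic, and the names `train_test_split`, `fit_threshold`, `predict`, and `accuracy` are hypothetical helpers written for this example.

```python
import random


def train_test_split(rows, train_fraction=0.7, seed=0):
    """Shuffle the rows, then split them into a training part and a held-out part."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


def fit_threshold(train_rows):
    """Training: learn a latency threshold halfway between the two class means."""
    good = [x for x, label in train_rows if label == "good"]
    bad = [x for x, label in train_rows if label == "bad"]
    return (sum(good) / len(good) + sum(bad) / len(bad)) / 2


def predict(threshold, latency_ms):
    """Inference: apply the already-trained threshold to one new measurement."""
    return "bad" if latency_ms > threshold else "good"


def accuracy(threshold, rows):
    """Fraction of rows whose predicted label matches the known label."""
    correct = sum(predict(threshold, x) == label for x, label in rows)
    return correct / len(rows)


# Synthetic labeled data (an assumption for illustration only):
# (latency in ms, user-reported experience).
rng = random.Random(42)
data = [(rng.gauss(40, 10), "good") for _ in range(100)]
data += [(rng.gauss(120, 20), "bad") for _ in range(100)]

train_rows, test_rows = train_test_split(data, train_fraction=0.7)
threshold = fit_threshold(train_rows)

print(f"trained on {len(train_rows)} rows, held out {len(test_rows)} rows")
print(f"held-out accuracy: {accuracy(threshold, test_rows):.2f}")
```

The held-out rows are never seen by `fit_threshold`, so the accuracy figure estimates how the model would behave on new data. Calling `predict` on a fresh measurement corresponds to what the transcript calls inference. The customer-facing efficacy grading described in the talk is a separate, human-in-the-loop measure that this sketch does not cover.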