Bob Friday Talks: Generative Horizons, Gen AI and Juniper’s CUEL Breakthroughs

Explore how Generative AI and Juniper's pioneering AI-Native Networking Platform use LLMs to reduce support tickets and improve network operations. Bob Friday, Chief AI Officer, describes how Juniper’s continuous learning model adds value to Zoom and Teams integrations by predicting network requirements and proactively resolving network issues. Hear how an AI-Native network can dynamically adapt in real time to enhance connectivity and user satisfaction.

You’ll learn

How Juniper uses GenAI to accurately predict user experiences with Zoom and Teams.

How Juniper differentiates itself in the industry with an AI-Native Network Platform and Marvis Virtual Network Assistant.

Transcript

0:00 hello everyone my name is Aku and I'm

0:04 Juniper's head of AI evangelism today I

0:07 have the honor of being joined by our

0:09 very own Bob Friday who's our chief AI

0:12 officer well hello there Bob cool thanks

0:15 for having me this is a great time to be

0:17 in networking and it's exciting to be here

0:18 with you well thank you so much well

0:21 everyone today we are going to learn

0:23 more about GenAI and what Juniper

0:26 Networks is doing in that field also

0:28 we'll dive deeper into LLMs as well as

0:31 Microsoft Teams as well as Zoom and what

0:34 we're doing for our customers in that

0:35 space so what is the power of GenAI and

0:38 what does this mean for Juniper Networks

0:41 yeah cool start with at an industry

0:43 level GenAI ChatGPT is the next generation

0:47 of user interfaces for a lot of verticals

0:49 including networking it's really what's

0:52 going to allow people to get the data

0:54 out of their Network in a much more

0:55 natural easier way if you look what

0:57 happened in networking we've gone from

0:59 CLI to dashboards ChatGPT and LLM is

1:02 that next generation user interface for

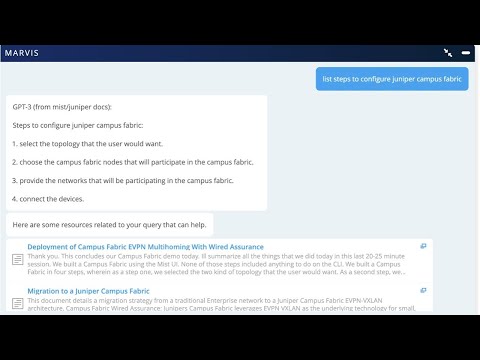

1:05 Juniper specifically in Marvis we've

1:07 been doing conversational interfaces

1:09 since 2018 and helping Enterprises

1:12 answer real-time troubleshooting

1:14 questions natural language is going to

1:16 bring that natural human interaction

1:19 into Marvis now making it really easier

1:21 for people to understand real-time

1:23 troubleshooting and what we've added

1:25 lately is that public knowledge base

1:28 documentation questions where people now

1:30 answer questions about how to configure

1:32 a switch an AP and a router and making

1:34 it easier to find that information in

1:36 public documents so I love how you

1:40 um in the Mist team reorged it to put the data

1:43 scientists and the support team side by

1:46 side to work together and I think that

1:48 is such an important partnership for the

1:50 success of the organization on that note

1:53 I would love to learn about how do LLMs

1:55 empower your customers so that they

1:57 don't open as many support tickets

2:00 when we started Mist that was the

2:02 original vision for Mist is really using

2:04 cloud and AIOps to reduce the

2:06 operational hassle of managing and

2:08 operating these networks right and with

2:10 LLMs we're continuing that journey

2:12 making it easier for customers to get

2:15 questions answered before they open the

2:16 support tickets so with LLM we've

2:19 actually added it into Marvis we

2:21 actually are using it in our support

2:23 team now every support ticket that comes

2:25 into Mist right now basically has an LLM

2:28 answer and the documents in that ticket

2:30 trying to help our support team get to

2:33 resolution faster and that same

2:35 technology is now being available to our

2:37 customers and that's how we're trying to

2:39 help prevent support tickets both inside

2:41 of our support team and in our customers

2:43 team you know as we've discussed right

2:46 when we move to Cloud support is

2:48 ultimately at the best proxy for our row

2:50 customers you know and helping them

2:52 reduce tickets is basically helping our

2:54 customers the fewer tickets they see is

2:56 the fewer tickets our customers are

2:58 sending them yeah I love love that point

3:00 Bob around how if we reduce the support

3:04 tickets of our customers that is the

3:06 best you know Testament of success yeah

3:09 and what I tell people they always ask

3:10 me you know what's the difference

3:11 between AI and whitewashing AI the first

3:14 step on that journey is really making

3:16 sure you're using your own cloud AIOps

3:19 in your support team if they're not

3:20 doing that they have not started the

3:22 journey to cloud AIOps well thanks Bob

3:25 those are really you know interesting

3:27 points and on that note we'd love to

3:28 talk more about the Zoom and the Teams

3:31 integrations and how they're adding

3:33 value to customers because I know that's

3:35 essentially very important aspect and

3:38 many customers have found a lot of value

3:40 from Zoom and Teams integrations

3:42 yeah know this is an interesting one you

3:44 know this started with a big customer

3:45 about three years ago who told us that you

3:48 know Zoom calls were in the top three

3:50 support tickets they were getting right

3:52 and the challenge was how do we help

3:53 customers with their Zoom and Teams

3:56 because when you think about it Zoom and

3:58 Teams are what I call the canary in the

3:59 coal mine right if your network has any

4:03 problems it's going to show up in those

4:04 video collaboration apps right and so

4:07 what we've done with Zoom and Teams is

4:09 basically we've taken Zoom data and

4:12 network feature data and now we build

4:14 models that can accurately predict user

4:17 experience Zoom Teams experience and

4:20 once we have these models built we can

4:22 actually use them now to use things like

4:24 Shapley to get the visibility on what is

4:27 causing a bad Zoom or Teams experience

4:30 all the way at the user level AP level

4:32 and the site level so canary in the

4:35 coal mine very important usually the top

4:38 three or four support ticket problems
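
A minimal sketch of the Shapley idea mentioned above, not Juniper's implementation. It computes exact Shapley values by enumerating feature coalitions, which is only practical for a handful of features. The model `predict_quality`, its feature names and coefficients, and the baseline values are all hypothetical stand-ins for a trained Zoom or Teams experience model.

```python
from itertools import combinations
from math import factorial

# Hypothetical stand-in for a trained experience model: maps network
# features to a predicted call-quality score (higher is better).
def predict_quality(features):
    score = 5.0
    score -= 0.02 * features["latency_ms"]
    score -= 0.5 * features["packet_loss_pct"]
    # Interaction: a weak signal hurts more when the channel is congested.
    if features["rssi_dbm"] < -75 and features["channel_util_pct"] > 70:
        score -= 1.0
    return score

def shapley_values(predict, instance, baseline):
    """Exact Shapley values: features outside a coalition take baseline values."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return predict(mixed)

    result = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                total += weight * (value(members | {name}) - value(members))
        result[name] = total
    return result

# Hypothetical "healthy" reference minute and one bad user minute.
baseline = {"latency_ms": 20, "packet_loss_pct": 0.0,
            "rssi_dbm": -55, "channel_util_pct": 30}
bad_minute = {"latency_ms": 120, "packet_loss_pct": 2.0,
              "rssi_dbm": -80, "channel_util_pct": 85}

contributions = shapley_values(predict_quality, bad_minute, baseline)
# Most negative contribution first: the feature blamed most for the bad minute.
for name, contribution in sorted(contributions.items(), key=lambda p: p[1]):
    print(f"{name}: {contribution:+.2f}")
```

The contributions sum to the gap between the bad minute's predicted score and the baseline's, which is what lets a per-user result be rolled up to the AP or site level. Production systems typically use approximations rather than full enumeration, since the number of coalitions doubles with every feature.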

4:40 yeah that sounds amazing Bob I I totally

4:43 agree just being a user of Teams and

4:45 Zoom myself it is so valuable to have

4:48 like I mean the Wi-Fi is so essential

4:51 and adding AI to the Wi-Fi that's that's

4:54 a big win yeah and you know I think the

4:56 other thing with Zoom and Teams you know

4:58 I always tell people you know we

5:00 all have been using machine learning

5:02 algorithms Zoom and Teams what we're

5:04 doing here is what I call continuous

5:06 learning you know where we're

5:07 continuously training these models with

5:09 megabytes terabytes of data right and

5:12 that is the power when we go from

5:14 machine learning to deep learning you

5:16 know machine learning is what we've been

5:17 using for years to automate these

5:19 networks deep learning is really where

5:21 we're differentiating this is your

5:23 ChatGPT experience so how does Juniper

5:26 differentiate itself from more

5:27 traditional networking companies

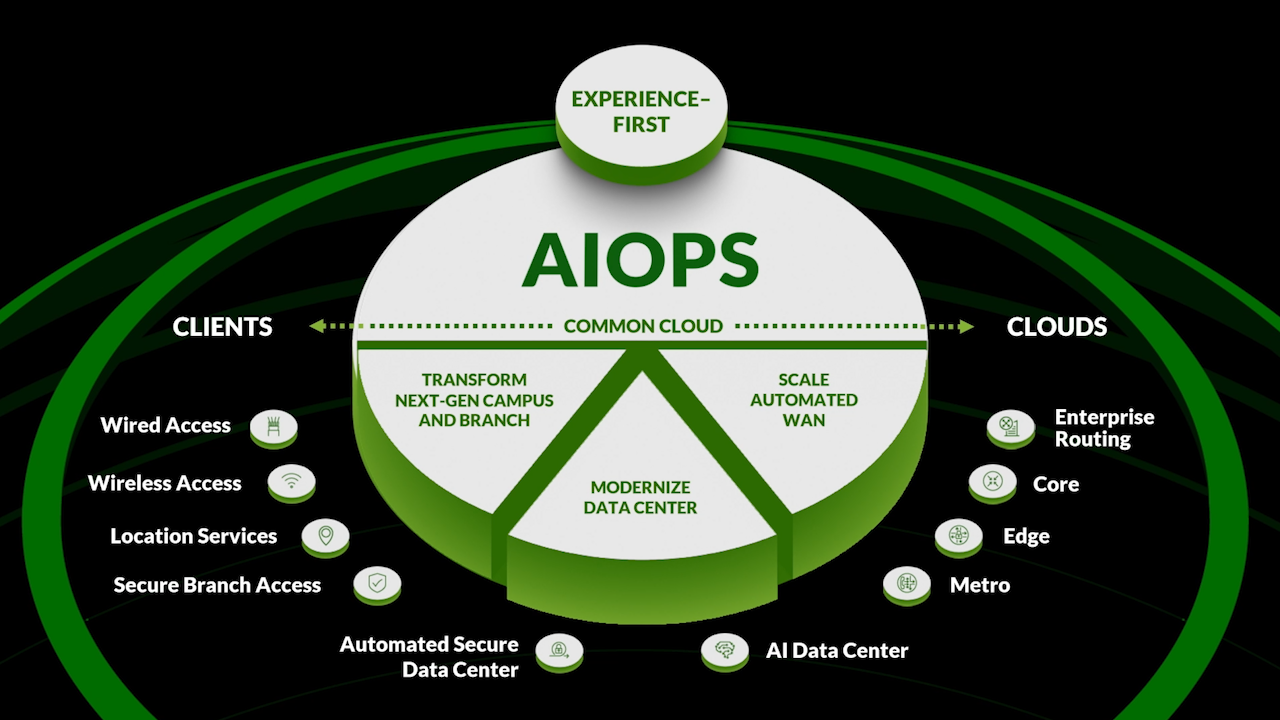

5:30 yeah you know I think this question goes

5:31 all the way back to the origins of Mist

5:33 you know when Sujai and I left Cisco we

5:36 made a big bet that basically cloud and

5:39 AI were architectural changes in the

5:41 industry right because we knew we were

5:43 not going to beat the big guys with a

5:44 feature so I think when you look what we

5:47 did at Juniper and Mist was really that

5:49 AI native blank sheet of paper story

5:52 where the BET was hey if we're going to

5:53 build a real time AI Network we're going

5:57 to have to start from scratch and

5:58 basically build something something that

5:59 can process tons of data ingest tons of

6:02 data from different networking sources

6:04 so I think that is the main

6:05 differentiation is making sure that

6:07 cloud Foundation got built and then on

6:09 top of that AI native Cloud Foundation

6:11 is really building Marvis in the AIOps

6:14 piece of it I love the the AI native and

6:17 how that that being the foundation and

6:19 Juniper really differentiating itself

6:22 as having AI at its core and Foundation

6:25 this is big you know cool I think you

6:27 know the other differentiation I think

6:29 what people have a hard time

6:30 appreciating is when you actually go

6:31 into the details of moving from kind of

6:33 a controller based software on Hardware

6:36 into these microservices Cloud

6:38 architectures not everyone fully

6:40 appreciates the difference in the

6:41 software architecture and that's what

6:43 really forced us to actually start with a

6:45 blank sheet of paper because we couldn't

6:47 simply take the software that was on our

6:48 controller and put it in a container

6:50 Cloud you really had to start from

6:52 scratch and start with this software

6:54 architecture built around microservices

6:56 and API and that is the other big

6:59 difference at Juniper is that cloud

7:01 architecture so how do you improve radio

7:04 Resource

7:05 Management yeah so in the Wireless World

7:07 radio Resource Management has been

7:08 around for decades um 20 years ago when

7:11 I was doing Airespace we were really

7:14 trying to optimize how access points saw

7:16 each other you know this time around

7:19 we're really trying to optimize the user

7:21 experience and that's really trying to

7:22 leverage reinforcement learning and

7:24 making sure that we're balancing the

7:26 connectivity between a user and the

7:28 access point you know when you look at

7:30 some of the challenges the challenges

7:32 have changed over the last 20 some years

7:34 you know 20 years ago we had you know

7:36 transmit power and channel basically you

7:38 know we look where Wi-Fi is headed with

7:40 6E and 7 now we have multiple bandwidths

7:43 to deal with we got 20 40 80 160 MHz

7:46 bandwidth and we have more bands now

7:48 right we have 2.4 5 gigs and now we're

7:51 even moving to the six gig bands so

7:53 there's a lot more knobs to adjust in

7:55 this radio resource management uh

7:57 but there's a lot more tools in the

7:58 toolbox now we have reinforcement

8:00 learning that we can apply to it well

8:02 thanks Bob yeah that makes a lot of

8:04 sense on another note one area that I'm

8:08 personally very passionate about is AI

8:10 trust and explainability and

8:13 transparency I would love to learn about

8:15 what are the measures that we're taking

8:17 in that space yeah so as we discussed

8:20 you know Zoom and Teams is a great example

8:23 where we're building models to predict user

8:25 experience we're actually using

8:26 something called mutual information

8:29 right oh mutual information and now

8:31 we're using Shapley in the first generation

8:33 it was really around mutual information

8:35 and trying to understand why a user

8:37 minute was bad now with Shapley we're

8:39 really explaining what network

8:42 feature is actually most likely to

8:44 predict an outcome right and that is

8:47 example of where explainability brings

8:49 a lot more granularity to root cause uh

8:52 if we look forward to other use cases

8:55 oops what was that go oh LLMs so if we

8:57 look forward to what we're doing with

8:59 LLMs that's almost self-explanatory

9:02 where we're starting to basically use

9:04 what they call retrieval technologies

9:06 where we're basically retrieving

9:07 documents and basically having ChatGPT

9:10 or an open source LLM answer questions

9:13 in the context of a document so in that

9:15 case there the customer customer support

9:18 they get an answer but they also get the

9:20 docs from which that answer came so

9:22 making sure we give domain specific

9:24 answers and minimize the hallucinations

9:26 you see out of

9:27 LLMs so as a woman in AI myself and

9:30 a data scientist I've spent the last 10

9:33 plus years working on AI and machine

9:35 learning and now I'm really excited to

9:38 work with Bob Friday and apply AI and

9:42 machine learning trust and ethics to the

9:44 space of networking because the world

9:47 isn't the world without the internet

9:49 right and applying AI to the Wi-Fi and

9:51 the internet makes the world much more

9:54 connected and we have every connection

9:56 counts for me you know if you look

9:58 what's going on in the industry right

9:59 now you know I tell people when you go

10:01 to your hospital and your doctor for get

10:03 C you're going to want to make sure your

10:04 doctor is using the latest and greatest

10:06 AI when he diagnoses you same with those

10:09 cars you're going to want to make sure

10:10 they use the latest and greatest AI you

10:11 know and in networking it's the same

10:13 you're going to want to make sure when

10:14 you connect to that Network that it's

10:16 using the latest and greatest AI to

10:18 provide you the best experience you know

10:20 and that's why it's exciting times to be

10:21 in networking so it's great to have you

10:23 thanks Bob for joining us today we

10:26 appreciate your time thanks and Co for

10:28 having me and look forward to meeting

10:35 more
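
The retrieval approach described around 9:02 (fetch relevant documents, answer in their context, and return the source docs with the answer) can be sketched roughly as follows. This is an illustration under stated assumptions, not how Marvis works: the document IDs and text in `DOCS` are invented, retrieval is a crude word-overlap score rather than a real search or embedding index, and the LLM call itself is left out, so the sketch stops at building the grounded prompt.

```python
import re
from collections import Counter

# Hypothetical knowledge-base snippets; real documentation would be far larger.
DOCS = {
    "switch-vlan-guide": "To configure a VLAN on a switch, define the VLAN ID "
                         "and assign it to the port profile.",
    "ap-radio-settings": "Access point radio settings include channel, "
                         "transmit power, and channel width.",
    "router-wan-setup": "Configure the router WAN interface with an IP "
                        "address and a default route.",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(question, docs, top_k=2):
    """Rank documents by word overlap with the question (crude retrieval)."""
    question_words = Counter(tokenize(question))
    scored = []
    for doc_id, text in docs.items():
        doc_words = Counter(tokenize(text))
        overlap = sum(min(count, doc_words[word])
                      for word, count in question_words.items())
        scored.append((overlap, doc_id))
    # Highest overlap first; ties broken by document ID for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for overlap, doc_id in scored[:top_k] if overlap > 0]

def build_prompt(question, docs, doc_ids):
    """Ask the model to answer only from the retrieved, labeled sources."""
    sources = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the sources below and cite their IDs. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )

question = "How do I configure a VLAN on a switch?"
doc_ids = retrieve(question, DOCS)
prompt = build_prompt(question, DOCS, doc_ids)
print(prompt)      # this text would be sent to the LLM
print(doc_ids)     # these IDs would be shown alongside the answer
```

Returning `doc_ids` with the answer is what gives the user the documents the answer came from, and restricting the model to the retrieved text is one common way to reduce hallucinations.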