IPv6 Fabric Underlay and Overlay Network Design and Implementation with EBGP

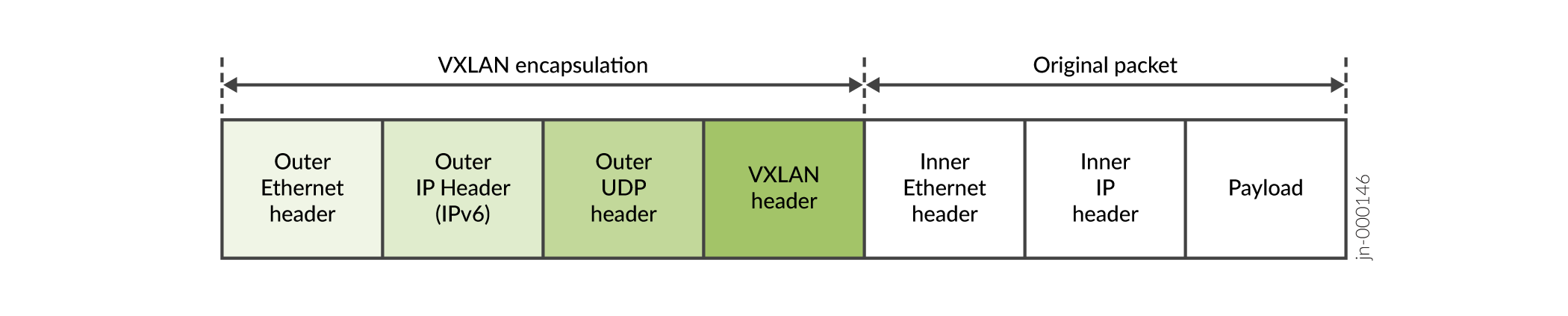

Most use cases in this guide are based on an IP Fabric that uses IPv4 and EBGP for underlay connectivity with IBGP overlay peering. On supporting platforms, starting in Junos OS Release 21.2R2-S1 and 21.4R1, you can alternatively use an IPv6 Fabric infrastructure. With an IPv6 Fabric, the VXLAN virtual tunnel endpoints (VTEPs) add an IPv6 outer header in front of the VXLAN header and tunnel the packets across the fabric using IPv6. The inner workload packets can carry either IPv4 or IPv6 payloads. See Figure 1.
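Because the outer header is IPv6 rather than IPv4, every tunneled packet carries 20 more bytes of encapsulation. The following worked calculation is a sketch, not Juniper tooling. It assumes an untagged inner Ethernet header, no IPv4 options, and no IPv6 extension headers. The function names are hypothetical.

```python
# Worked example (not Juniper tooling): VXLAN encapsulation overhead and the
# underlay IP MTU it implies. Assumes fixed-length outer headers with no IPv4
# options and no IPv6 extension headers.

INNER_ETHERNET = 14  # inner (workload) Ethernet header, untagged
INNER_DOT1Q = 4      # extra bytes if the inner frame keeps an 802.1Q tag
VXLAN_HEADER = 8
OUTER_UDP = 8
OUTER_IP = {"ipv4": 20, "ipv6": 40}  # base header length per outer family


def vxlan_overhead(outer_family: str, inner_tagged: bool = False) -> int:
    """Bytes that encapsulation adds on top of the inner IP packet,
    measured at the outer IP layer."""
    inner_l2 = INNER_ETHERNET + (INNER_DOT1Q if inner_tagged else 0)
    return inner_l2 + VXLAN_HEADER + OUTER_UDP + OUTER_IP[outer_family]


def required_underlay_ip_mtu(inner_ip_mtu: int, outer_family: str = "ipv6",
                             inner_tagged: bool = False) -> int:
    """Smallest underlay IP MTU that carries an inner packet of
    inner_ip_mtu bytes without fragmentation."""
    return inner_ip_mtu + vxlan_overhead(outer_family, inner_tagged)


for family in ("ipv4", "ipv6"):
    print(family, required_underlay_ip_mtu(1500, family))
# prints:
# ipv4 1550
# ipv6 1570
```

The 20-byte difference between the two results is the difference between the IPv6 and IPv4 base header lengths. Because IPv6 routers don't fragment packets in transit, the underlay links need at least this much headroom; check your platform documentation for how the Junos interface `mtu` statement counts Layer 2 headers before translating the number into configuration.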

An IPv6 Fabric uses IPv6 addressing, with EBGP over IPv6 for both underlay connectivity and overlay peering. You can’t mix IPv4 and IPv6 in the underlay or in the overlay peering within the same fabric.
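Because the fabric can't mix address families, a simple pre-deployment check is to confirm that every planned peering uses IPv6 on both ends. The sketch below assumes a hypothetical fabric plan expressed as a list of `Peering` records; the record type, device names, and addresses are illustrative and aren't part of any Juniper tool.

```python
# Hypothetical pre-deployment check: every underlay and overlay BGP peering in
# an IPv6 Fabric plan must use IPv6 on both ends.
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Peering:
    device: str
    layer: str     # "underlay" or "overlay"
    local: str     # local interface or loopback address
    neighbor: str  # neighbor address


def non_ipv6_peerings(plan: list[Peering]) -> list[Peering]:
    """Return the peerings that would break an IPv6-only fabric."""
    bad = []
    for p in plan:
        addrs = (ipaddress.ip_address(p.local), ipaddress.ip_address(p.neighbor))
        if any(a.version != 6 for a in addrs):
            bad.append(p)
    return bad


plan = [
    Peering("leaf1", "underlay", "2001:db8:1::1", "2001:db8:1::"),
    Peering("leaf1", "overlay", "2001:db8:ffff::11", "2001:db8:ffff::1"),
    Peering("leaf1", "overlay", "192.168.1.11", "192.168.0.1"),  # IPv4: not allowed
]
for p in non_ipv6_peerings(plan):
    print(f"{p.device} {p.layer} peering {p.local} -> {p.neighbor} is not IPv6")
```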

This section describes how to configure the IPv6 Fabric design. In this environment, you can take advantage of the larger address space and simpler header processing that IPv6 offers.

We have qualified this IPv6 Fabric in our reference architectures with:

The following routing and bridging overlay designs: bridged overlay and edge-routed bridging (ERB) overlay.

EVPN instances configured using MAC-VRF routing instances only.

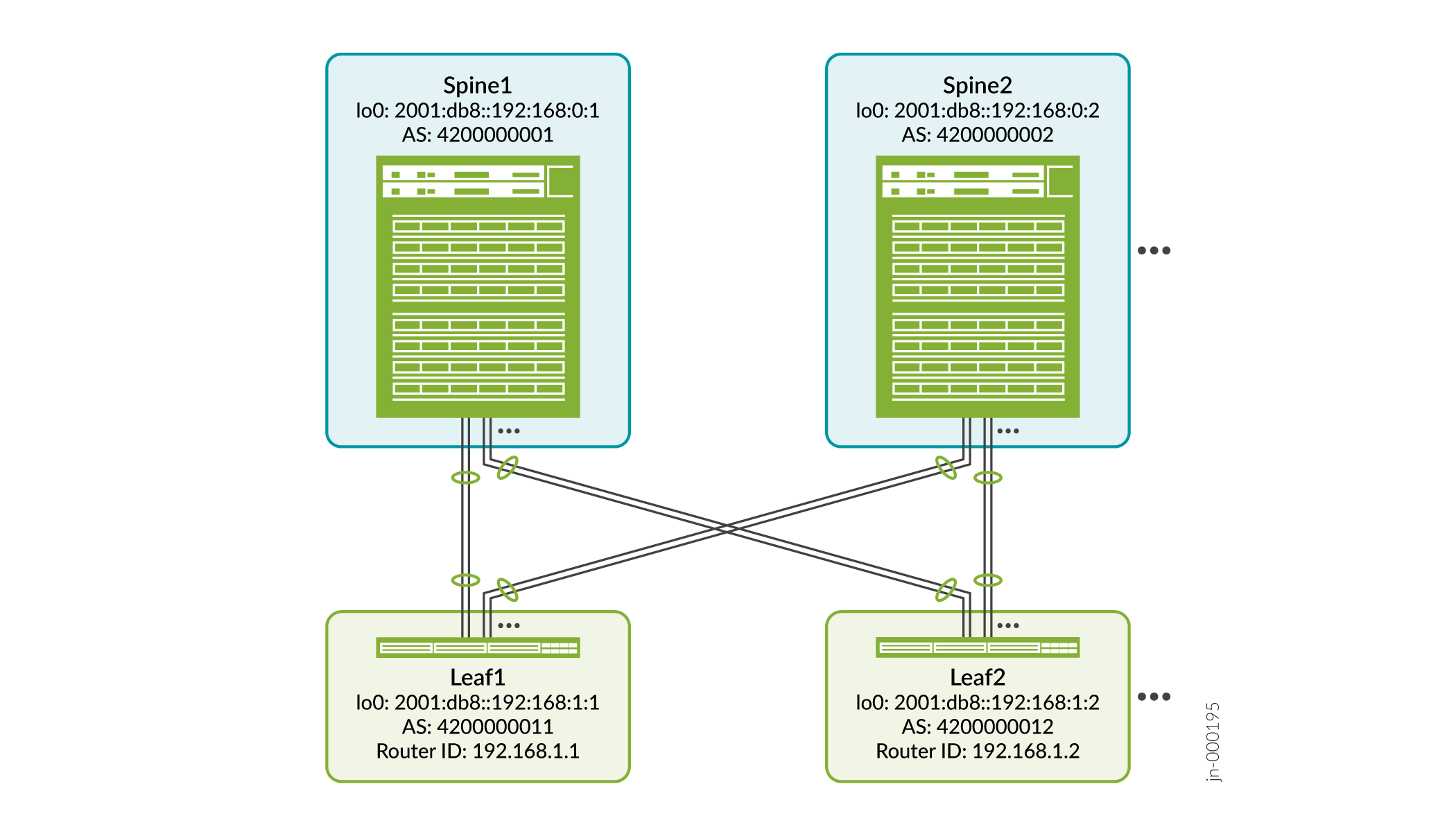

Figure 2 shows a high-level representative view of the spine and leaf devices in an IPv6 Fabric.

The topology can be the same as or similar to the topologies supported with an IPv4 Fabric.

The main differences in how you configure an IPv6 Fabric instead of an IPv4 Fabric include:

You configure IPv6 interfaces to interconnect the devices.

You assign an IPv6 address to the loopback interface on the devices serving as VTEPs.

In the EVPN routing instance, you set the VTEP source interface to the device’s loopback interface so that the VTEP uses the loopback IPv6 address rather than an IPv4 address.

You configure the underlay EBGP peering between the IPv6 interface addresses interconnecting the devices. You configure the overlay EBGP peering between the device IPv6 loopback addresses.
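The loopback and VTEP differences listed above can be sketched as Junos `set` statements. In this sketch, the instance name `MACVRF-1`, the addresses, and the router ID are hypothetical. It also sets an explicit 32-bit router ID, because a device with no IPv4 addresses has none to derive one from. A complete MAC-VRF instance also needs a service type, route distinguisher, VRF target, and VLANs, which are omitted here.

```python
# Hypothetical sketch: Junos set statements that differ for a VTEP in an IPv6
# Fabric. Instance name, addresses, and router ID are illustrative only; the
# MAC-VRF instance is incomplete (no service type, RD, VRF target, or VLANs).
import ipaddress


def ipv6_vtep_statements(lo0_address: str, router_id: str,
                         instance: str = "MACVRF-1") -> list[str]:
    lo0 = ipaddress.IPv6Interface(lo0_address)  # raises ValueError for IPv4 input
    rid = ipaddress.IPv4Address(router_id)      # BGP identifier is a 32-bit value
    return [
        # IPv6 address on the loopback that serves as the VTEP source.
        f"set interfaces lo0 unit 0 family inet6 address {lo0.with_prefixlen}",
        # With no IPv4 addresses to derive it from, set the router ID explicitly.
        f"set routing-options router-id {rid}",
        # MAC-VRF EVPN instance that sources VXLAN tunnels from lo0.0 over IPv6.
        f"set routing-instances {instance} instance-type mac-vrf",
        f"set routing-instances {instance} protocols evpn encapsulation vxlan",
        f"set routing-instances {instance} vtep-source-interface lo0.0 inet6",
    ]


print("\n".join(ipv6_vtep_statements("2001:db8:ffff::11/128", "192.168.1.11")))
```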

See Data Center EVPN-VXLAN Fabric Reference Designs—Supported Hardware Summary for the initial hardened release in which a platform supports an IPv6 Fabric design, based on the type of overlay architecture and the role the device serves in the fabric. Look for the table rows in which the device role includes “with IPv6 underlay”.

See EVPN-VXLAN with an IPv6 Underlay in the EVPN User Guide for an overview of EVPN-VXLAN feature support and limitations with an IPv6 Fabric.

For an overview of the supported IP fabric underlay and overlay models and components used in our reference architecture designs, see Data Center Fabric Blueprint Architecture Components.

Configure Interfaces and EBGP as the Routing Protocol in the IPv6 Fabric Underlay

In this design (similar to the IPv4 Fabric in IP Fabric Underlay Network Design and Implementation), you interconnect the spine and leaf devices using aggregated Ethernet interfaces with two member links. (You can alternatively use a single link, or more than two member links in an aggregated Ethernet bundle, for each spine and leaf connection.)

This procedure shows how to configure the interfaces on the leaf side toward the spines, and enable EBGP with IPv6 as the underlay routing protocol on the leaf device.

Although this procedure doesn’t show the spine side configuration, you configure the interconnecting interfaces and the EBGP underlay on the spine side in the same way as you do on the leaf device.

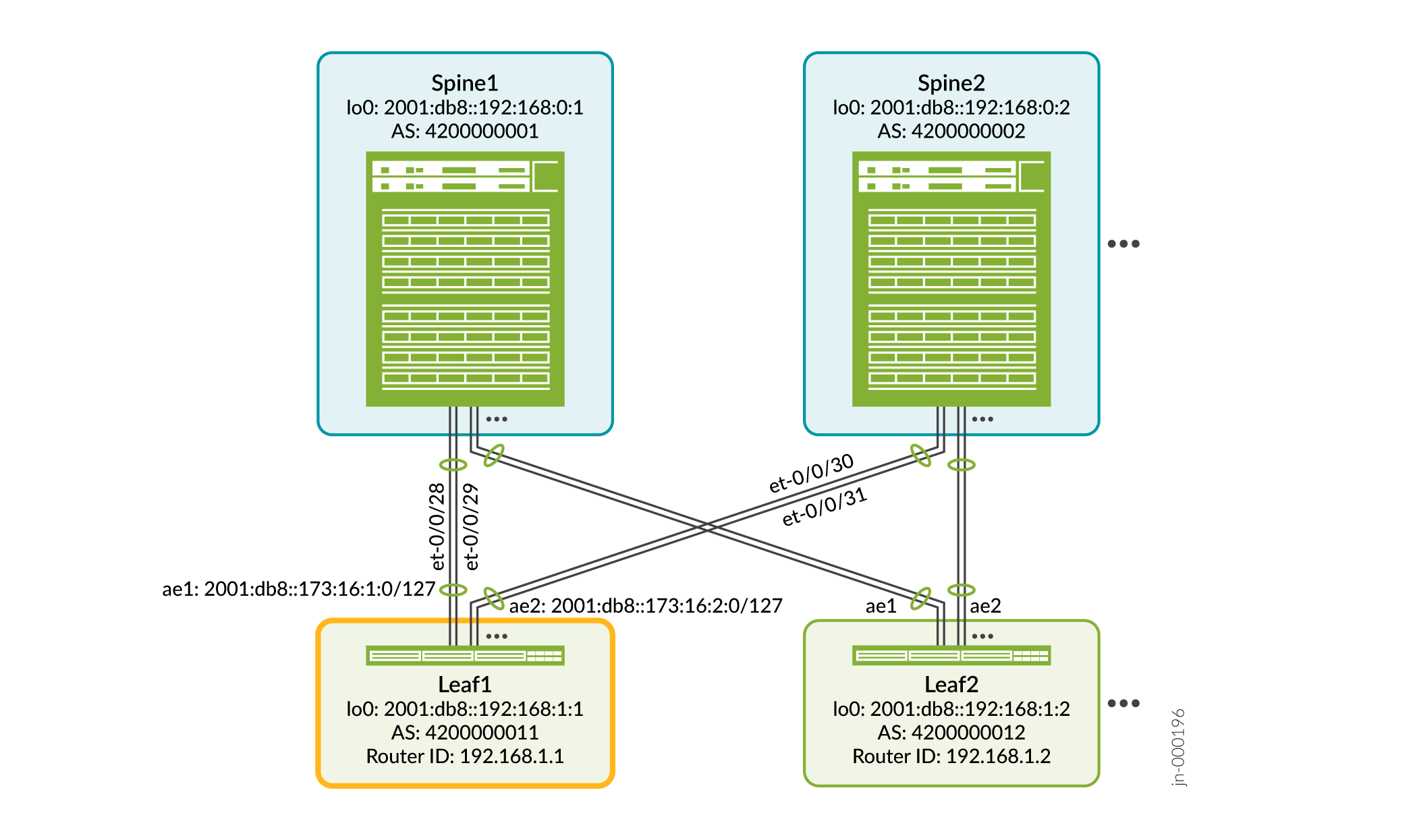

Figure 3 shows the interfaces on leaf device Leaf1 that you configure in this procedure.

To configure aggregated Ethernet interfaces and EBGP in the underlay with IPv6 on Leaf1:
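The leaf-side underlay described in this procedure (aggregated Ethernet bundles toward each spine, IPv6 addresses on the bundles, and an EBGP group that peers with the spine-side bundle addresses) can be sketched as a generator of Junos `set` statements. All interface names, /127 addresses, ASNs, and the group and policy names are hypothetical stand-ins rather than the Figure 3 values, and member links use the QFX-style `ether-options 802.3ad` statement.

```python
# Hypothetical sketch: Junos set statements for a leaf underlay. Interface
# names, addresses, ASNs, and group/policy names are illustrative only.
import ipaddress
from dataclasses import dataclass


@dataclass
class Uplink:
    ae: str             # bundle name, such as "ae1"
    members: list[str]  # member links, such as ["et-0/0/48", "et-0/0/49"]
    local: str          # local bundle address with prefix length
    peer: str           # spine-side bundle address (the EBGP neighbor)
    peer_as: int        # spine ASN


def underlay_statements(local_as: int, uplinks: list[Uplink],
                        group: str = "UNDERLAY-BGP") -> list[str]:
    # device-count N creates ae0 through ae(N-1), so size it to the highest index.
    count = max(int(u.ae[2:]) for u in uplinks) + 1
    cmds = [f"set chassis aggregated-devices ethernet device-count {count}"]
    for u in uplinks:
        local = ipaddress.IPv6Interface(u.local)
        peer = ipaddress.IPv6Address(u.peer)
        # Both ends of a point-to-point link must share the subnet.
        if peer not in local.network or peer == local.ip:
            raise ValueError(f"{peer} is not a distinct address on {local.network}")
        for member in u.members:
            cmds.append(f"set interfaces {member} ether-options 802.3ad {u.ae}")
        cmds.append(f"set interfaces {u.ae} aggregated-ether-options lacp active")
        cmds.append(f"set interfaces {u.ae} unit 0 family inet6 address "
                    f"{local.with_prefixlen}")
    bgp = f"set protocols bgp group {group}"
    cmds += [
        f"{bgp} type external",
        f"{bgp} family inet6 unicast",
        f"{bgp} local-as {local_as}",
        f"{bgp} multipath multiple-as",
        f"{bgp} export UNDERLAY-EXPORT",
    ]
    cmds += [f"{bgp} neighbor {ipaddress.IPv6Address(u.peer)} peer-as {u.peer_as}"
             for u in uplinks]
    # Export policy term that advertises the loopback (VTEP) address.
    pol = "set policy-options policy-statement UNDERLAY-EXPORT term LOOPBACK"
    cmds += [f"{pol} from interface lo0.0", f"{pol} then accept"]
    return cmds


uplinks = [
    Uplink("ae1", ["et-0/0/48", "et-0/0/49"], "2001:db8:1::1/127", "2001:db8:1::", 4200000001),
    Uplink("ae2", ["et-0/0/50", "et-0/0/51"], "2001:db8:2::1/127", "2001:db8:2::", 4200000002),
]
print("\n".join(underlay_statements(4200000011, uplinks)))
```

Because the spine side mirrors this configuration, the same function could render a spine's statements by passing its downlinks toward the leaves, with the local and peer addresses and ASNs swapped.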

Configure EBGP for IPv6 Overlay Peering

Use this procedure with an IPv6 Fabric EBGP underlay to configure the IPv6 overlay peering. Both the underlay and overlay must use IPv6, so you can’t use the overlay configuration in Configure IBGP for the Overlay (which describes configuring overlay peering in an IPv4 Fabric).

Because the overlay peering in the IPv6 Fabric also uses EBGP as the routing protocol, the overlay peering configuration is very similar to the underlay configuration in Configure Interfaces and EBGP as the Routing Protocol in the IPv6 Fabric Underlay. The main difference is that in the underlay, we specify the IPv6 addresses of the Layer 3 interfaces that connect to the EBGP neighbors (in this example, the aggregated Ethernet interface addresses). In contrast, in the overlay, we use the device IPv6 loopback addresses to specify the EBGP neighbors. Refer again to Figure 3 for the device addresses and ASN values in this example.

Another difference is that the overlay peering enables EVPN signaling, so the overlay sessions carry EVPN routes rather than IPv6 unicast routes.

To configure EBGP overlay peering with IPv6 on Leaf1 to Spine1 and Spine2:
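The overlay group differs from the underlay group in the ways described above: the neighbors are loopback addresses, so the session is multihop and sourced from the local loopback, and the address family is EVPN signaling. The sketch below renders those statements. The group name, loopback addresses, and ASNs are hypothetical, and reusing the underlay ASNs for the overlay is an assumption of this example.

```python
# Hypothetical sketch: Junos set statements for EBGP overlay peering between
# IPv6 loopbacks. Group name, addresses, and ASNs are illustrative only.
import ipaddress


def overlay_statements(local_as: int, local_lo0: str, spines: dict[str, int],
                       group: str = "OVERLAY-BGP") -> list[str]:
    """spines maps each spine loopback IPv6 address to that spine's ASN."""
    local = ipaddress.IPv6Address(local_lo0)
    bgp = f"set protocols bgp group {group}"
    cmds = [
        f"{bgp} type external",
        # Loopback peers are not directly connected, so the session is multihop.
        # no-nexthop-change keeps the BGP next hop unchanged on re-advertised
        # routes; it matters most on spines, which relay EVPN routes between
        # leaves and should leave the originating VTEP as the next hop.
        f"{bgp} multihop no-nexthop-change",
        f"{bgp} local-address {local}",
        f"{bgp} family evpn signaling",
        f"{bgp} local-as {local_as}",
        f"{bgp} multipath multiple-as",
    ]
    for addr, peer_as in spines.items():
        cmds.append(f"{bgp} neighbor {ipaddress.IPv6Address(addr)} peer-as {peer_as}")
    return cmds


spines = {"2001:db8:ffff::1": 4200000001, "2001:db8:ffff::2": 4200000002}
print("\n".join(overlay_statements(4200000011, "2001:db8:ffff::11", spines)))
```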

Verify EBGP IPv6 Underlay and Overlay Device Connectivity

After you’ve committed the underlay and overlay configurations in Configure Interfaces and EBGP as the Routing Protocol in the IPv6 Fabric Underlay and Configure EBGP for IPv6 Overlay Peering, issue the following commands:
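As an assumption, a per-peer checklist built from standard Junos operational commands can help confirm both layers: BGP session state, each neighbor's details, underlay routes to remote loopbacks, EVPN routes, and remote IPv6 VTEPs. The sketch below is not the command list from this procedure. The peer addresses are hypothetical, and the VTEP command shown is the QFX Series form.

```python
# Hypothetical checklist builder using standard Junos operational commands.
# Peer addresses are illustrative; the VTEP command is the QFX Series form.
import ipaddress


def verification_commands(underlay_peers: list[str],
                          overlay_peers: list[str]) -> list[str]:
    cmds = ["show bgp summary"]  # every session should be Established
    for peer in underlay_peers + overlay_peers:
        cmds.append(f"show bgp neighbor {ipaddress.IPv6Address(peer)}")
    cmds += [
        # Remote loopbacks (VTEP addresses) learned through the underlay.
        "show route table inet6.0 protocol bgp",
        # EVPN routes learned through the overlay.
        "show route table bgp.evpn.0",
        # Remote VTEPs, which should appear with IPv6 addresses.
        "show ethernet-switching vxlan-tunnel-end-point remote",
    ]
    return cmds


for cmd in verification_commands(["2001:db8:1::", "2001:db8:2::"],
                                 ["2001:db8:ffff::1", "2001:db8:ffff::2"]):
    print(cmd)
```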