Example: Configuring an EVPN-VXLAN Edge-Routed Bridging Fabric With a Virtual Gateway

Ethernet VPN (EVPN) is a control plane technology that enables hosts (physical [bare-metal] servers and virtual machines [VMs]) to be placed anywhere in a network and remain connected to the same logical Layer 2 (L2) overlay network. Virtual Extensible LAN (VXLAN) is a tunneling protocol that creates the data plane for the L2 overlay network.

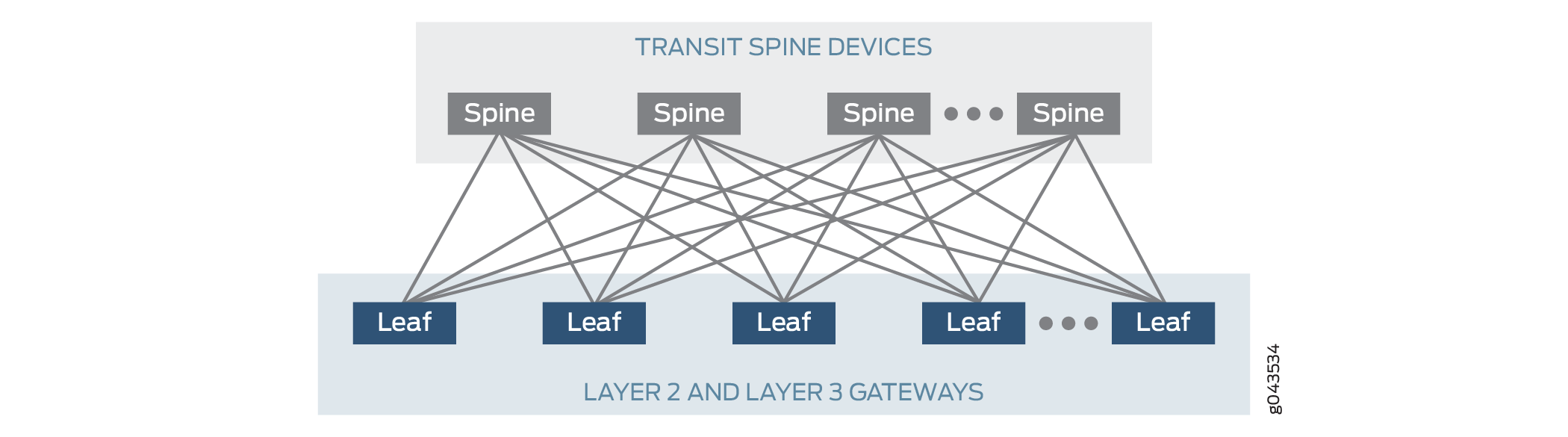

The physical underlay network over which EVPN-VXLAN is commonly deployed is a two-layer IP fabric, which includes spine and leaf devices as shown in Figure 1. A two-layer spine and leaf fabric is referred to as a 3-stage Clos.

This example details how to deploy an edge-routed bridging (ERB) architecture using a 3-stage Clos fabric. In this design, the spine devices provide only IP connectivity between the leaf devices. In this capacity they are referred to as lean spines, as they require no VXLAN functionality. The leaf devices provide the connectivity to attached workloads. When compared to a centrally routed bridging (CRB) fabric design, the ERB architecture is sometimes called a "collapsed" fabric because the L2 and L3 VXLAN gateway functions are collapsed into a single layer of the fabric—the leaf device layer.

ERB fabric leaf devices provide L2 and Layer 3 (L3) VXLAN functionality in the overlay network as follows:

- L2 gateways provide bridging within the same VLAN (intra-VLAN).
- L3 gateways handle traffic between VLANs (inter-VLAN) using integrated routing and bridging (IRB) interfaces in the tenant virtual routing and forwarding (VRF) instances.

As a best practice for configuring the IRB interfaces for intersubnet routing, we use the following model for each IRB logical interface (unit) in a VRF instance:

- Associate each IRB logical unit with one VLAN (bridge domain) and a corresponding VNI.
- Assign one unique IP subnet range to the IRB logical unit.
- Configure a default virtual gateway address for the IRB logical unit, or alternatively, an anycast gateway address.

This model supports optimal subnet routing and forwarding, and simplifies intersubnet traffic management.
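As a minimal sketch of this model, the following statements map one IRB logical unit to one VLAN, one VNI, one subnet, and one virtual gateway address. The values are the ones this example assigns to VLAN v101 on Leaf1; substitute your own addressing. The VRF instance needs further statements (instance type, route distinguisher, and route target), which appear in the customer profile procedure.

```
set vlans v101 vlan-id 101
set vlans v101 l3-interface irb.101
set vlans v101 vxlan vni 101
set interfaces irb unit 101 family inet address 10.1.101.1/24 virtual-gateway-address 10.1.101.254
set routing-instances vrf101 interface irb.101
```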

You shouldn't assign multiple IP subnet ranges and multiple virtual gateway addresses to the same IRB logical unit, which can cause issues such as:

- Inefficient routing through intermediate nodes (triangular routing).
- Dropping of intersubnet traffic.

In this example, we configure the IRB interfaces with a virtual gateway address (VGA) and follow the best practices mentioned above. For an ERB example that uses an anycast IP address on the IRBs and more information about the different methods, see Example: Configuring an EVPN-VXLAN Edge-Routed Bridging Fabric with an Anycast Gateway.

For background information on EVPN-VXLAN technology and supported architectures, see EVPN Primer.

For an example of how to configure an EVPN-VXLAN centrally routed bridging (CRB) overlay, see Example: Configure an EVPN-VXLAN Centrally Routed Bridging Fabric.

This topic provides a sample configuration of a QFX device that functions as a leaf in an ERB overlay.

Requirements

This example uses the following hardware and software components:

- Two devices that function as transit spine devices.
- Four devices running Junos OS Release 21.3R1 or later that serve as leaf devices providing both L2 and L3 VXLAN gateway functionality.

See the hardware summary for a list of supported platforms.

Overview and Topology

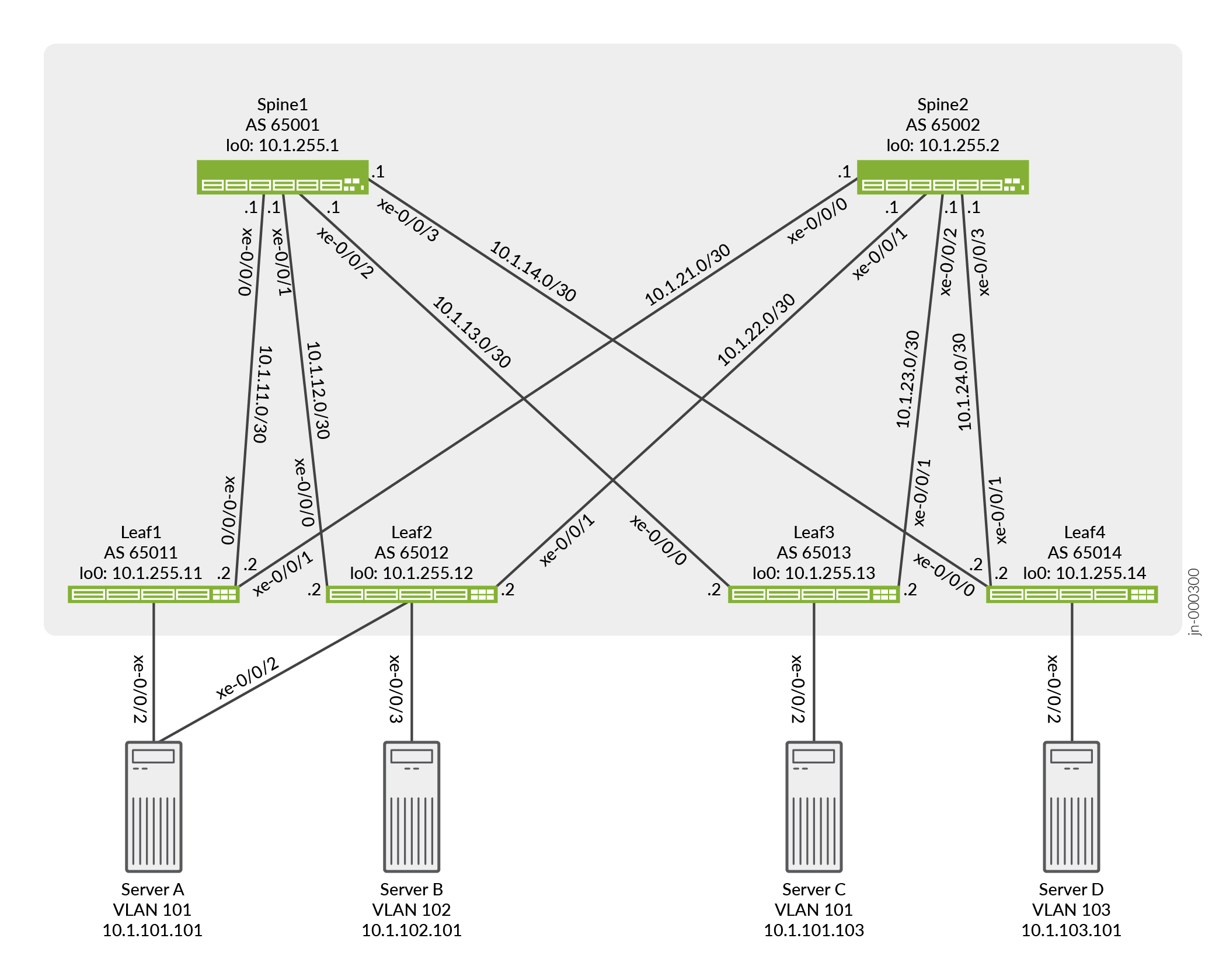

In this example, a service provider supports a site that has multiple servers. Server A and Server C communicate with each other using VLAN 101. Server B and Server D communicate using the L3 gateway. To enable this communication in the ERB overlay shown in Figure 2, you configure the key software entities in Table 1 on the switches that function as L2 and L3 VXLAN gateways, or leaf devices.

| Entities | Configuration on Leaf1, Leaf2, Leaf3, and Leaf4 |
|---|---|
| VLANs | v101, v102, v103 |
| VRF instances | vrf101, vrf102_103 |
| IRB interfaces | irb.101: 10.1.101.1/24 (IRB IP address), 10.1.101.254 (virtual gateway address) |
| | irb.102: 10.1.102.1/24 (IRB IP address), 10.1.102.254 (virtual gateway address) |
| | irb.103: 10.1.103.1/24 (IRB IP address), 10.1.103.254 (virtual gateway address) |

As outlined in Table 1, you configure VLAN v101 for Server A and Server C, VLAN v102 for Server B, and VLAN v103 for Server D on each leaf device. To segregate the L3 routes for VLANs v101, v102, and v103, you create VPN routing and forwarding (VRF) instances vrf101 and vrf102_103 on each leaf device. To route traffic between the VLANs, you configure IRB interfaces irb.101, irb.102, and irb.103. You also associate VRF instance vrf101 with IRB interface irb.101, and VRF instance vrf102_103 with IRB interfaces irb.102 and irb.103.

You configure IRB interfaces irb.101, irb.102, and irb.103 to function as default L3 gateways that handle server inter-VLAN traffic. To that end, in each IRB interface configuration, you also include a virtual gateway address (VGA), which configures an IRB interface as a default L3 gateway. In addition, this example assumes that each server is configured to use a particular default gateway. For more information about default gateways and how inter-VLAN traffic flows from a physical server to another physical server or VM in another VLAN in an ERB overlay, see Using a Default Layer 3 Gateway to Route Traffic in an EVPN-VXLAN Overlay Network.

When configuring a VGA for an IRB interface, keep in mind that the IRB IP address and VGA must be different.

Also, we require that you configure the no-gateway-community option at the [edit routing-instances EVPN-instance-name protocols evpn default-gateway] hierarchy level in each EVPN routing instance in which you configure an IRB interface with a virtual gateway address. You can alternatively configure the no-gateway-community option globally at the [edit protocols evpn default-gateway] hierarchy level on platforms that support configuration at this level. See default-gateway for details on using the no-gateway-community option.
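As a sketch, the two placements look like this. The first statement is the per-instance form, where EVPN-instance-name is the placeholder from the hierarchy above and not a literal instance name. The second statement is the global form, which is the one this example uses.

```
set routing-instances EVPN-instance-name protocols evpn default-gateway no-gateway-community
set protocols evpn default-gateway no-gateway-community
```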

As outlined in Table 1, you configure a separate VRF routing instance for VLAN v101 and VLANs v102 and v103. To enable the communication between hosts in VLANs v101, v102, and v103, this example shows how to export unicast routes from the routing table for vrf101 and import the routes into the routing table for vrf102_103 and vice versa. This feature is also known as route leaking.

Quick Configuration

CLI Quick Configuration

To quickly configure Leaf1, copy the following commands and paste them into a text file. Remove any line breaks and change any details necessary to match your network configuration. Then copy and paste the commands into the CLI at the [edit] hierarchy level.

set chassis aggregated-devices ethernet device-count 1
set interfaces xe-0/0/0 unit 0 family inet address 10.1.11.2/30
set interfaces xe-0/0/1 unit 0 family inet address 10.1.21.2/30
set interfaces xe-0/0/2 ether-options 802.3ad ae0
set interfaces ae0 esi 00:01:01:01:01:01:01:01:01:01
set interfaces ae0 esi all-active
set interfaces ae0 aggregated-ether-options lacp active
set interfaces ae0 aggregated-ether-options lacp system-id 00:01:01:01:01:01
set interfaces ae0 unit 0 family ethernet-switching interface-mode trunk
set interfaces ae0 unit 0 family ethernet-switching vlan members v101
set interfaces irb unit 101 virtual-gateway-accept-data
set interfaces irb unit 101 family inet address 10.1.101.1/24 virtual-gateway-address 10.1.101.254
set interfaces irb unit 102 virtual-gateway-accept-data
set interfaces irb unit 102 family inet address 10.1.102.1/24 virtual-gateway-address 10.1.102.254
set interfaces irb unit 103 virtual-gateway-accept-data
set interfaces irb unit 103 family inet address 10.1.103.1/24 virtual-gateway-address 10.1.103.254
set interfaces lo0 unit 0 family inet address 10.1.255.11/32
set policy-options policy-statement load-balancing-policy then load-balance per-packet
set policy-options policy-statement send-direct term 1 from protocol direct
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.11/32 exact
set policy-options policy-statement send-direct term 1 then accept
set policy-options policy-statement vrf101_vrf_imp term 1 from community vrf102_103
set policy-options policy-statement vrf101_vrf_imp term 1 then accept
set policy-options policy-statement vrf102_103_vrf_imp term 1 from community vrf101
set policy-options policy-statement vrf102_103_vrf_imp term 1 then accept
set policy-options community vrf101 members target:1001:1
set policy-options community vrf102_103 members target:1023:1
set routing-instances vrf101 instance-type vrf
set routing-instances vrf101 routing-options auto-export
set routing-instances vrf101 interface irb.101
set routing-instances vrf101 route-distinguisher 10.1.255.11:101
set routing-instances vrf101 vrf-import vrf101_vrf_imp
set routing-instances vrf101 vrf-target target:1001:1
set routing-instances vrf102_103 instance-type vrf
set routing-instances vrf102_103 routing-options auto-export
set routing-instances vrf102_103 interface irb.102
set routing-instances vrf102_103 interface irb.103
set routing-instances vrf102_103 route-distinguisher 10.1.255.11:123
set routing-instances vrf102_103 vrf-import vrf102_103_vrf_imp
set routing-instances vrf102_103 vrf-target target:1023:1
set routing-options router-id 10.1.255.11
set routing-options autonomous-system 65011
set routing-options forwarding-table export load-balancing-policy
set protocols bgp group underlay type external
set protocols bgp group underlay export send-direct
set protocols bgp group underlay multipath multiple-as
set protocols bgp group underlay neighbor 10.1.11.1 peer-as 65001
set protocols bgp group underlay neighbor 10.1.21.1 peer-as 65002
set protocols bgp group overlay type external
set protocols bgp group overlay multihop
set protocols bgp group overlay local-address 10.1.255.11
set protocols bgp group overlay family evpn signaling
set protocols bgp group overlay multipath multiple-as
set protocols bgp group overlay neighbor 10.1.255.1 peer-as 65001
set protocols bgp group overlay neighbor 10.1.255.2 peer-as 65002
set protocols evpn encapsulation vxlan
set protocols evpn default-gateway no-gateway-community
set protocols evpn multicast-mode ingress-replication
set protocols evpn vni-options vni 101 vrf-target target:101:1
set protocols evpn vni-options vni 102 vrf-target target:102:1
set protocols evpn vni-options vni 103 vrf-target target:103:1
set protocols evpn extended-vni-list all
set switch-options vtep-source-interface lo0.0
set switch-options route-distinguisher 10.1.255.11:1
set switch-options vrf-target target:1:1
set switch-options vrf-target auto
set vlans v101 vlan-id 101
set vlans v101 l3-interface irb.101
set vlans v101 vxlan vni 101
set vlans v102 vlan-id 102
set vlans v102 l3-interface irb.102
set vlans v102 vxlan vni 102
set vlans v103 vlan-id 103
set vlans v103 l3-interface irb.103
set vlans v103 vxlan vni 103

Underlay Network Configuration

CLI Quick Configuration

To quickly configure an underlay network on Leaf1, copy the following commands and paste them into a text file. Remove any line breaks and change any details necessary to match your network configuration. Then copy and paste the commands into the CLI at the [edit] hierarchy level.

set interfaces xe-0/0/0 unit 0 family inet address 10.1.11.2/30
set interfaces xe-0/0/1 unit 0 family inet address 10.1.21.2/30
set interfaces lo0 unit 0 family inet address 10.1.255.11/32
set policy-options policy-statement load-balancing-policy then load-balance per-packet
set policy-options policy-statement send-direct term 1 from protocol direct
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.11/32 exact
set policy-options policy-statement send-direct term 1 then accept
set routing-options router-id 10.1.255.11
set routing-options autonomous-system 65011
set routing-options forwarding-table export load-balancing-policy
set protocols bgp group underlay type external
set protocols bgp group underlay export send-direct
set protocols bgp group underlay multipath multiple-as
set protocols bgp group underlay neighbor 10.1.11.1 peer-as 65001
set protocols bgp group underlay neighbor 10.1.21.1 peer-as 65002

Configuring the Underlay Network

Step-by-Step Procedure

To configure an underlay network on Leaf1:

Configure the interfaces connected to the spine devices and the loopback interface on Leaf1.

user@leaf1# set interfaces xe-0/0/0 unit 0 family inet address 10.1.11.2/30
user@leaf1# set interfaces xe-0/0/1 unit 0 family inet address 10.1.21.2/30
user@leaf1# set interfaces lo0 unit 0 family inet address 10.1.255.11/32

Configure the router ID and autonomous system number, and apply the load-balancing policy for Leaf1. We show the policy configuration in a later step.

user@leaf1# set routing-options router-id 10.1.255.11
user@leaf1# set routing-options autonomous-system 65011
user@leaf1# set routing-options forwarding-table export load-balancing-policy

Configure an EBGP group that peers with both spine devices. We show the policy to advertise the loopback address for Leaf1 in a later step.

user@leaf1# set protocols bgp group underlay type external
user@leaf1# set protocols bgp group underlay export send-direct
user@leaf1# set protocols bgp group underlay multipath multiple-as
user@leaf1# set protocols bgp group underlay neighbor 10.1.11.1 peer-as 65001
user@leaf1# set protocols bgp group underlay neighbor 10.1.21.1 peer-as 65002

Configure policies to load balance traffic and to advertise the loopback address of Leaf1.

user@leaf1# set policy-options policy-statement load-balancing-policy then load-balance per-packet
user@leaf1# set policy-options policy-statement send-direct term 1 from protocol direct
user@leaf1# set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.11/32 exact
user@leaf1# set policy-options policy-statement send-direct term 1 then accept
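Once the spine devices are configured and the underlay configuration is committed, it can help to sanity-check the underlay before you build the overlay on top of it. The commands below are a suggested check and are not part of the original procedure. They assume that the spine loopback addresses are 10.1.255.1 and 10.1.255.2 (the overlay neighbor addresses used later in this example) and that the spine devices advertise those addresses into the underlay.

```
user@leaf1> show bgp summary
user@leaf1> show route 10.1.255.1
user@leaf1> ping 10.1.255.1 source 10.1.255.11 count 2
user@leaf1> ping 10.1.255.2 source 10.1.255.11 count 2
```

Sourcing the ping from the Leaf1 loopback address exercises the same path that the multihop overlay sessions and the VXLAN tunnels rely on.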

EVPN-VXLAN Overlay Network Configuration

CLI Quick Configuration

To quickly configure an overlay network, copy the following commands and paste them into a text file. Remove any line breaks and change any details necessary to match your network configuration. Then copy and paste the commands into the CLI at the [edit] hierarchy level.

set protocols bgp group overlay type external
set protocols bgp group overlay multihop
set protocols bgp group overlay local-address 10.1.255.11
set protocols bgp group overlay family evpn signaling
set protocols bgp group overlay multipath multiple-as
set protocols bgp group overlay neighbor 10.1.255.1 peer-as 65001
set protocols bgp group overlay neighbor 10.1.255.2 peer-as 65002
set protocols evpn encapsulation vxlan
set protocols evpn default-gateway no-gateway-community
set protocols evpn multicast-mode ingress-replication
set protocols evpn vni-options vni 101 vrf-target target:101:1
set protocols evpn vni-options vni 102 vrf-target target:102:1
set protocols evpn vni-options vni 103 vrf-target target:103:1
set protocols evpn extended-vni-list all
set switch-options vtep-source-interface lo0.0
set switch-options route-distinguisher 10.1.255.11:1
set switch-options vrf-target target:1:1
set switch-options vrf-target auto

Configuring an EVPN-VXLAN Overlay Network

Step-by-Step Procedure

To configure a basic EVPN-VXLAN overlay network on Leaf1:

Configure an EBGP-based overlay between Leaf1 and the spine devices, specify a local IP address for Leaf1, and add the EVPN signaling Network Layer Reachability Information (NLRI) to the BGP group.

user@leaf1# set protocols bgp group overlay type external
user@leaf1# set protocols bgp group overlay multihop
user@leaf1# set protocols bgp group overlay local-address 10.1.255.11
user@leaf1# set protocols bgp group overlay family evpn signaling
user@leaf1# set protocols bgp group overlay multipath multiple-as
user@leaf1# set protocols bgp group overlay neighbor 10.1.255.1 peer-as 65001
user@leaf1# set protocols bgp group overlay neighbor 10.1.255.2 peer-as 65002

Note: Some IP fabrics use an IBGP-based EVPN-VXLAN overlay. For an example of an IP fabric that uses IBGP for the overlay, see Example: Configure an EVPN-VXLAN Centrally Routed Bridging Fabric. Note that choosing either EBGP or IBGP for the overlay does not impact the fabric architecture. Both CRB and ERB designs support either type of overlay.

Configure VXLAN encapsulation for the data packets exchanged between the EVPN neighbors. Specify that all VXLAN network identifiers (VNIs) are part of the virtual routing and forwarding (VRF) instance. Also, specify that the MAC address of the IRB interface and the MAC address of the corresponding default gateway are advertised without the extended community option default-gateway.

user@leaf1# set protocols evpn encapsulation vxlan
user@leaf1# set protocols evpn default-gateway no-gateway-community
user@leaf1# set protocols evpn multicast-mode ingress-replication
user@leaf1# set protocols evpn vni-options vni 101 vrf-target target:101:1
user@leaf1# set protocols evpn vni-options vni 102 vrf-target target:102:1
user@leaf1# set protocols evpn vni-options vni 103 vrf-target target:103:1
user@leaf1# set protocols evpn extended-vni-list all

Configure switch options to set a route distinguisher and VRF target for the VRF routing instance, and associate interface lo0 with the virtual tunnel endpoint (VTEP).

user@leaf1# set switch-options vtep-source-interface lo0.0
user@leaf1# set switch-options route-distinguisher 10.1.255.11:1
user@leaf1# set switch-options vrf-target target:1:1
user@leaf1# set switch-options vrf-target auto
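As a suggested check that is not part of the original procedure, the following operational commands can help confirm that the overlay has come up. They assume that your platform and release support them. Remote VTEPs normally appear only after VLAN-to-VNI mappings exist, and this example configures those in the customer profile that follows, so expect the second command to show little until that configuration is committed on the leaf devices.

```
user@leaf1> show evpn instance extensive
user@leaf1> show ethernet-switching vxlan-tunnel-end-point remote
```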

Customer Profile Configuration

CLI Quick Configuration

To quickly configure a basic customer profile for Server A, Server B, Server C, and Server D, copy the following commands and paste them into a text file. Remove any line breaks and change any details necessary to match your network configuration. Then copy and paste the commands into the CLI at the [edit] hierarchy level.

set chassis aggregated-devices ethernet device-count 1
set interfaces xe-0/0/2 ether-options 802.3ad ae0
set interfaces ae0 esi 00:01:01:01:01:01:01:01:01:01
set interfaces ae0 esi all-active
set interfaces ae0 aggregated-ether-options lacp active
set interfaces ae0 aggregated-ether-options lacp system-id 00:01:01:01:01:01
set interfaces ae0 unit 0 family ethernet-switching interface-mode trunk
set interfaces ae0 unit 0 family ethernet-switching vlan members v101
set interfaces irb unit 101 virtual-gateway-accept-data
set interfaces irb unit 101 family inet address 10.1.101.1/24 virtual-gateway-address 10.1.101.254
set interfaces irb unit 102 virtual-gateway-accept-data
set interfaces irb unit 102 family inet address 10.1.102.1/24 virtual-gateway-address 10.1.102.254
set interfaces irb unit 103 virtual-gateway-accept-data
set interfaces irb unit 103 family inet address 10.1.103.1/24 virtual-gateway-address 10.1.103.254
set routing-instances vrf101 instance-type vrf
set routing-instances vrf101 interface irb.101
set routing-instances vrf101 route-distinguisher 10.1.255.11:101
set routing-instances vrf101 vrf-target target:1001:1
set routing-instances vrf102_103 instance-type vrf
set routing-instances vrf102_103 interface irb.102
set routing-instances vrf102_103 interface irb.103
set routing-instances vrf102_103 route-distinguisher 10.1.255.11:123
set routing-instances vrf102_103 vrf-target target:1023:1
set vlans v101 vlan-id 101
set vlans v101 l3-interface irb.101
set vlans v101 vxlan vni 101
set vlans v102 vlan-id 102
set vlans v102 l3-interface irb.102
set vlans v102 vxlan vni 102
set vlans v103 vlan-id 103
set vlans v103 l3-interface irb.103
set vlans v103 vxlan vni 103

Configuring a Customer Profile

Step-by-Step Procedure

To configure a basic customer profile on Leaf1:

Enable Server A to be multihomed to Leaf1 and Leaf2 by configuring an aggregated Ethernet interface, specifying an ESI for the interface, and setting the mode so that the connections to both leaf devices are active.

user@leaf1# set chassis aggregated-devices ethernet device-count 1
user@leaf1# set interfaces xe-0/0/2 ether-options 802.3ad ae0
user@leaf1# set interfaces ae0 esi 00:01:01:01:01:01:01:01:01:01
user@leaf1# set interfaces ae0 esi all-active
user@leaf1# set interfaces ae0 aggregated-ether-options lacp active
user@leaf1# set interfaces ae0 aggregated-ether-options lacp system-id 00:01:01:01:01:01
user@leaf1# set interfaces ae0 unit 0 family ethernet-switching interface-mode trunk
user@leaf1# set interfaces ae0 unit 0 family ethernet-switching vlan members v101

Note: When configuring the ae0 interface on Leaf2, you must specify the same ESI (00:01:01:01:01:01:01:01:01:01) that is specified for the same interface on Leaf1.
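For reference, the matching ae0 statements on Leaf2, taken from the Leaf2 quick configuration at the end of this example, carry the same ESI as Leaf1. They also carry the same LACP system ID, so the multihomed server should see both leaf devices as one LACP partner.

```
user@leaf2# set interfaces xe-0/0/2 ether-options 802.3ad ae0
user@leaf2# set interfaces ae0 esi 00:01:01:01:01:01:01:01:01:01
user@leaf2# set interfaces ae0 esi all-active
user@leaf2# set interfaces ae0 aggregated-ether-options lacp active
user@leaf2# set interfaces ae0 aggregated-ether-options lacp system-id 00:01:01:01:01:01
```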

Configure IRB interfaces and associated VGAs (default L3 virtual gateways), which enable the communication between servers in different VLANs. Add the optional virtual-gateway-accept-data statement to allow the VGA to respond to ping packets.

user@leaf1# set interfaces irb unit 101 virtual-gateway-accept-data
user@leaf1# set interfaces irb unit 101 family inet address 10.1.101.1/24 virtual-gateway-address 10.1.101.254
user@leaf1# set interfaces irb unit 102 virtual-gateway-accept-data
user@leaf1# set interfaces irb unit 102 family inet address 10.1.102.1/24 virtual-gateway-address 10.1.102.254
user@leaf1# set interfaces irb unit 103 virtual-gateway-accept-data
user@leaf1# set interfaces irb unit 103 family inet address 10.1.103.1/24 virtual-gateway-address 10.1.103.254

Note: When configuring a VGA for an IRB interface, keep in mind that the IRB IP address and VGA must be different.
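To illustrate, the statements below compare irb.101 on Leaf1 and Leaf2 as given in this example's quick configurations. Each leaf device has its own IRB IP address in the subnet, while the VGA is the same on both and differs from either IRB address.

```
user@leaf1# set interfaces irb unit 101 family inet address 10.1.101.1/24 virtual-gateway-address 10.1.101.254
user@leaf2# set interfaces irb unit 101 family inet address 10.1.101.2/24 virtual-gateway-address 10.1.101.254
```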

Configure a VRF routing instance for VLAN v101 and another VRF routing instance for VLANs v102 and v103. In each routing instance, associate the IRB interfaces and configure a route-distinguisher and a vrf-target.

user@leaf1# set routing-instances vrf101 instance-type vrf
user@leaf1# set routing-instances vrf101 interface irb.101
user@leaf1# set routing-instances vrf101 route-distinguisher 10.1.255.11:101
user@leaf1# set routing-instances vrf101 vrf-target target:1001:1
user@leaf1# set routing-instances vrf102_103 instance-type vrf
user@leaf1# set routing-instances vrf102_103 interface irb.102
user@leaf1# set routing-instances vrf102_103 interface irb.103
user@leaf1# set routing-instances vrf102_103 route-distinguisher 10.1.255.11:123
user@leaf1# set routing-instances vrf102_103 vrf-target target:1023:1

Configure VLANs v101, v102, and v103 and associate an IRB interface and VNI with each VLAN.

user@leaf1# set vlans v101 vlan-id 101
user@leaf1# set vlans v101 l3-interface irb.101
user@leaf1# set vlans v101 vxlan vni 101
user@leaf1# set vlans v102 vlan-id 102
user@leaf1# set vlans v102 l3-interface irb.102
user@leaf1# set vlans v102 vxlan vni 102
user@leaf1# set vlans v103 vlan-id 103
user@leaf1# set vlans v103 l3-interface irb.103
user@leaf1# set vlans v103 vxlan vni 103
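After you enter these statements, the candidate configuration for each VLAN should look roughly like the following when displayed in configuration mode. This is a sketch of the expected hierarchy for v101, derived from the set commands above and not captured from a device.

```
user@leaf1# show vlans v101
vlan-id 101;
l3-interface irb.101;
vxlan {
    vni 101;
}
```

Viewing the hierarchy this way makes it easy to confirm that each VLAN has exactly one IRB interface and one VNI, in line with the best-practice model described earlier.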

Route Leaking Configuration

At this point, based on the configuration, Server A and Server C should be able to reach each other, and Server B and Server D should be able to reach each other. To enable all servers to reach each other, we leak routes between the routing instances.

CLI Quick Configuration

To quickly configure route leaking, copy the following commands and paste them into a text file. Remove any line breaks and change any details necessary to match your network configuration. Then copy and paste the commands into the CLI at the [edit] hierarchy level.

set policy-options policy-statement vrf101_vrf_imp term 1 from community vrf102_103
set policy-options policy-statement vrf101_vrf_imp term 1 then accept
set policy-options policy-statement vrf102_103_vrf_imp term 1 from community vrf101
set policy-options policy-statement vrf102_103_vrf_imp term 1 then accept
set policy-options community vrf101 members target:1001:1
set policy-options community vrf102_103 members target:1023:1
set routing-instances vrf101 routing-options auto-export
set routing-instances vrf101 vrf-import vrf101_vrf_imp
set routing-instances vrf102_103 routing-options auto-export
set routing-instances vrf102_103 vrf-import vrf102_103_vrf_imp

Configuring Route Leaking

Step-by-Step Procedure

To configure route leaking on Leaf1:

Configure the communities that match the targets you configured for the routing instances. Configure a policy for each routing instance that matches on the vrf-target of the other routing instance.

user@leaf1# set policy-options policy-statement vrf101_vrf_imp term 1 from community vrf102_103
user@leaf1# set policy-options policy-statement vrf101_vrf_imp term 1 then accept
user@leaf1# set policy-options policy-statement vrf102_103_vrf_imp term 1 from community vrf101
user@leaf1# set policy-options policy-statement vrf102_103_vrf_imp term 1 then accept
user@leaf1# set policy-options community vrf101 members target:1001:1
user@leaf1# set policy-options community vrf102_103 members target:1023:1

In the VRF routing instances, apply the routing policies configured in the previous step. This establishes a common route target between the routing instances.

user@leaf1# set routing-instances vrf101 vrf-import vrf101_vrf_imp
user@leaf1# set routing-instances vrf102_103 vrf-import vrf102_103_vrf_imp

Configure the auto-export option, which allows routes to be shared between instances with common route targets.

user@leaf1# set routing-instances vrf101 routing-options auto-export
user@leaf1# set routing-instances vrf102_103 routing-options auto-export
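One way to check the result after you commit is to look at the route table of each VRF instance. This is a suggested check and not output from the original example. If route leaking works as intended, vrf101.inet.0 should also contain routes for the 10.1.102.0/24 and 10.1.103.0/24 subnets, and vrf102_103.inet.0 should contain a route for 10.1.101.0/24.

```
user@leaf1> show route table vrf101.inet.0
user@leaf1> show route table vrf102_103.inet.0
```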

Verification

This section describes how to verify that the example works as expected: the BGP sessions, the ESI, the EVPN database, and connectivity between the servers.

Verifying BGP

Purpose

Verify that Leaf1 has established BGP sessions with the spine devices.

Action

Display the BGP summary:

user@leaf1> show bgp summary

Threading mode: BGP I/O

Default eBGP mode: advertise - accept, receive - accept

Groups: 2 Peers: 4 Down peers: 0

Table Tot Paths Act Paths Suppressed History Damp State Pending

bgp.evpn.0

130 65 0 0 0 0

inet.0

8 8 0 0 0 0

Peer AS InPkt OutPkt OutQ Flaps Last Up/Dwn State|#Active/Received/Accepted/Damped...

10.1.11.1 65001 31797 32161 0 0 1w3d 3:51:49 Establ

inet.0: 4/4/4/0

10.1.21.1 65002 31794 32164 0 0 1w3d 3:51:48 Establ

inet.0: 4/4/4/0

10.1.255.1 65001 32506 32631 0 0 1w3d 3:51:47 Establ

bgp.evpn.0: 40/65/65/0

default-switch.evpn.0: 44/64/64/0

__default_evpn__.evpn.0: 0/1/1/0

10.1.255.2 65002 32530 32624 0 0 1w3d 3:51:43 Establ

bgp.evpn.0: 25/65/65/0

default-switch.evpn.0: 20/64/64/0

__default_evpn__.evpn.0: 1/1/1/0

Meaning

Both underlay and overlay BGP sessions are established with the spine devices.

Verifying the ESI

Purpose

Verify the status of the ESI.

Action

Display the status of the ESI:

user@leaf1> show evpn instance esi 00:01:01:01:01:01:01:01:01:01 extensive

Instance: default-switch

Route Distinguisher: 10.1.255.11:1

Encapsulation type: VXLAN

Duplicate MAC detection threshold: 5

Duplicate MAC detection window: 180

MAC database status Local Remote

MAC advertisements: 4 22

MAC+IP advertisements: 7 22

Default gateway MAC advertisements: 6 0

Number of local interfaces: 2 (2 up)

Interface name ESI Mode Status AC-Role

.local..6 00:00:00:00:00:00:00:00:00:00 single-homed Up Root

ae0.0 00:01:01:01:01:01:01:01:01:01 all-active Up Root

Number of IRB interfaces: 3 (3 up)

Interface name VLAN VNI Status L3 context

irb.101 101 Up vrf101

irb.102 102 Up vrf102_103

irb.103 103 Up vrf102_103

Number of protect interfaces: 0

Number of bridge domains: 3

VLAN Domain-ID Intfs/up IRB-intf Mode MAC-sync IM-label MAC-label v4-SG-sync IM-core-NH v6-SG-sync IM-core-NH Trans-ID

101 101 1 1 irb.101 Extended Enabled 101 Disabled Disabled 101

102 102 0 0 irb.102 Extended Enabled 102 Disabled Disabled 102

103 103 0 0 irb.103 Extended Enabled 103 Disabled Disabled 103

Number of neighbors: 3

Address MAC MAC+IP AD IM ES Leaf-label Remote-DCI-Peer

10.1.255.12 8 8 5 3 0

10.1.255.13 7 7 3 3 0

10.1.255.14 7 7 3 3 0

Number of ethernet segments: 13

ESI: 00:01:01:01:01:01:01:01:01:01

Status: Resolved by IFL ae0.0

Local interface: ae0.0, Status: Up/Forwarding

Number of remote PEs connected: 1

Remote-PE MAC-label Aliasing-label Mode

10.1.255.12 101 0 all-active

DF Election Algorithm: MOD based

Designated forwarder: 10.1.255.12

Backup forwarder: 10.1.255.11

Last designated forwarder update: Jun 02 12:46:14

Router-ID: 10.1.255.11

Source VTEP interface IP: 10.1.255.11

SMET Forwarding: Disabled

Meaning

The ESI is up and Leaf2 is the remote provider edge (PE) device and the designated forwarder.

Verifying the EVPN Database

Purpose

Verify the MAC addresses in the EVPN database.

Action

Verify the MAC addresses in the EVPN database for VLAN 101.

user@leaf1> show evpn database l2-domain-id 101

Instance: default-switch

VLAN DomainId MAC address Active source Timestamp IP address

101 00:00:5e:00:01:01 05:00:00:fd:f3:00:00:00:65:00 May 23 11:58:57 10.1.101.254

101 02:05:86:11:af:00 10.1.255.14 May 26 12:49:49 10.1.101.4

101 02:05:86:54:14:00 10.1.255.13 May 23 12:04:41 10.1.101.3

101 02:05:86:8d:d9:00 irb.101 May 23 11:58:57 10.1.101.1

101 02:05:86:c2:39:00 10.1.255.12 May 23 11:58:58 10.1.101.2

101 2c:6b:f5:01:b1:c0 00:01:01:01:01:01:01:01:01:01 Jun 02 12:46:21 10.1.101.101

101 56:04:15:00:dc:95 10.1.255.13 May 23 12:07:33 10.1.101.103

Meaning

The MAC and IP addresses for Server A are shown with an active source of the ESI, and the MAC and IP addresses for Server C are shown with an active source of Leaf3. The MAC addresses for the VGA and for each leaf IRB interface are also shown.

Verifying Connectivity

Purpose

Verify ping works between servers.

Action

Ping from Server A to the other servers.

user@serverA> ping 10.1.102.101 count 2
PING 10.1.102.101 (10.1.102.101): 56 data bytes
64 bytes from 10.1.102.101: icmp_seq=0 ttl=63 time=117.425 ms
64 bytes from 10.1.102.101: icmp_seq=1 ttl=63 time=109.663 ms

--- 10.1.102.101 ping statistics ---
2 packets transmitted, 2 packets received, 0% packet loss
round-trip min/avg/max/stddev = 109.663/113.544/117.425/3.881 ms

user@serverA> ping 10.1.101.103 count 2
PING 10.1.101.103 (10.1.101.103): 56 data bytes
64 bytes from 10.1.101.103: icmp_seq=0 ttl=64 time=311.050 ms
64 bytes from 10.1.101.103: icmp_seq=1 ttl=64 time=201.300 ms

--- 10.1.101.103 ping statistics ---
2 packets transmitted, 2 packets received, 0% packet loss
round-trip min/avg/max/stddev = 201.300/256.175/311.050/54.875 ms

user@serverA> ping 10.1.103.101 count 2
PING 10.1.103.101 (10.1.103.101): 56 data bytes
64 bytes from 10.1.103.101: icmp_seq=0 ttl=63 time=311.321 ms
64 bytes from 10.1.103.101: icmp_seq=1 ttl=63 time=367.343 ms

--- 10.1.103.101 ping statistics ---
2 packets transmitted, 2 packets received, 0% packet loss
round-trip min/avg/max/stddev = 311.321/339.332/367.343/28.011 ms

Meaning

End-to-end connectivity is working.

Quick Configuration For All Devices

CLI Quick Configuration

To quickly configure this example, copy the following commands and paste them into a text file. Remove any line breaks and change any details necessary to match your network configuration. Then copy and paste the commands into the CLI at the [edit] hierarchy level.

Leaf2

set chassis aggregated-devices ethernet device-count 1
set interfaces xe-0/0/0 unit 0 family inet address 10.1.12.2/30
set interfaces xe-0/0/1 unit 0 family inet address 10.1.22.2/30
set interfaces xe-0/0/2 ether-options 802.3ad ae0
set interfaces xe-0/0/3 unit 0 family ethernet-switching interface-mode trunk
set interfaces xe-0/0/3 unit 0 family ethernet-switching vlan members v102
set interfaces ae0 esi 00:01:01:01:01:01:01:01:01:01
set interfaces ae0 esi all-active
set interfaces ae0 aggregated-ether-options lacp active
set interfaces ae0 aggregated-ether-options lacp system-id 00:01:01:01:01:01
set interfaces ae0 unit 0 family ethernet-switching interface-mode trunk
set interfaces ae0 unit 0 family ethernet-switching vlan members v101
set interfaces irb unit 101 virtual-gateway-accept-data
set interfaces irb unit 101 family inet address 10.1.101.2/24 virtual-gateway-address 10.1.101.254
set interfaces irb unit 102 virtual-gateway-accept-data
set interfaces irb unit 102 family inet address 10.1.102.2/24 virtual-gateway-address 10.1.102.254
set interfaces irb unit 103 virtual-gateway-accept-data
set interfaces irb unit 103 family inet address 10.1.103.2/24 virtual-gateway-address 10.1.103.254
set interfaces lo0 unit 0 family inet address 10.1.255.12/32
set policy-options policy-statement load-balancing-policy then load-balance per-packet
set policy-options policy-statement send-direct term 1 from protocol direct
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.12/32 exact
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.11/32 exact
set policy-options policy-statement send-direct term 1 then accept
set policy-options policy-statement vrf101_vrf_imp term 1 from community vrf102_103
set policy-options policy-statement vrf101_vrf_imp term 1 then accept
set policy-options policy-statement vrf102_103_vrf_imp term 1 from community vrf101
set policy-options policy-statement vrf102_103_vrf_imp term 1 then accept
set policy-options community vrf101 members target:1001:1
set policy-options community vrf102_103 members target:1023:1
set routing-instances vrf101 instance-type vrf
set routing-instances vrf101 routing-options auto-export
set routing-instances vrf101 interface irb.101
set routing-instances vrf101 route-distinguisher 10.1.255.12:101
set routing-instances vrf101 vrf-import vrf101_vrf_imp
set routing-instances vrf101 vrf-target target:1001:1
set routing-instances vrf102_103 instance-type vrf
set routing-instances vrf102_103 routing-options auto-export
set routing-instances vrf102_103 interface irb.102
set routing-instances vrf102_103 interface irb.103
set routing-instances vrf102_103 route-distinguisher 10.1.255.12:123
set routing-instances vrf102_103 vrf-import vrf102_103_vrf_imp
set routing-instances vrf102_103 vrf-target target:1023:1
set routing-options router-id 10.1.255.12
set routing-options autonomous-system 65012
set routing-options forwarding-table export load-balancing-policy
set protocols bgp group underlay type external
set protocols bgp group underlay export send-direct
set protocols bgp group underlay multipath multiple-as
set protocols bgp group underlay neighbor 10.1.12.1 peer-as 65001
set protocols bgp group underlay neighbor 10.1.22.1 peer-as 65002
set protocols bgp group overlay type external
set protocols bgp group overlay multihop
set protocols bgp group overlay local-address 10.1.255.12
set protocols bgp group overlay family evpn signaling
set protocols bgp group overlay multipath multiple-as
set protocols bgp group overlay neighbor 10.1.255.1 peer-as 65001
set protocols bgp group overlay neighbor 10.1.255.2 peer-as 65002
set protocols evpn encapsulation vxlan
set protocols evpn default-gateway no-gateway-community
set protocols evpn multicast-mode ingress-replication
set protocols evpn vni-options vni 101 vrf-target target:101:1
set protocols evpn vni-options vni 102 vrf-target target:102:1
set protocols evpn vni-options vni 103 vrf-target target:103:1
set protocols evpn extended-vni-list all
set switch-options vtep-source-interface lo0.0
set switch-options route-distinguisher 10.1.255.12:1
set switch-options vrf-target target:1:1
set switch-options vrf-target auto
set vlans v101 vlan-id 101
set vlans v101 l3-interface irb.101
set vlans v101 vxlan vni 101
set vlans v102 vlan-id 102
set vlans v102 l3-interface irb.102
set vlans v102 vxlan vni 102
set vlans v103 vlan-id 103
set vlans v103 l3-interface irb.103
set vlans v103 vxlan vni 103

Leaf3

set interfaces xe-0/0/0 unit 0 family inet address 10.1.13.2/30
set interfaces xe-0/0/1 unit 0 family inet address 10.1.23.2/30
set interfaces xe-0/0/2 unit 0 family ethernet-switching interface-mode trunk
set interfaces xe-0/0/2 unit 0 family ethernet-switching vlan members v101
set interfaces irb unit 101 virtual-gateway-accept-data
set interfaces irb unit 101 family inet address 10.1.101.3/24 virtual-gateway-address 10.1.101.254
set interfaces irb unit 102 virtual-gateway-accept-data
set interfaces irb unit 102 family inet address 10.1.102.3/24 virtual-gateway-address 10.1.102.254
set interfaces irb unit 103 virtual-gateway-accept-data
set interfaces irb unit 103 family inet address 10.1.103.3/24 virtual-gateway-address 10.1.103.254
set interfaces lo0 unit 0 family inet address 10.1.255.13/32
set policy-options policy-statement load-balancing-policy then load-balance per-packet
set policy-options policy-statement send-direct term 1 from protocol direct
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.13/32 exact
set policy-options policy-statement send-direct term 1 then accept
set policy-options policy-statement vrf101_vrf_imp term 1 from community vrf102_103
set policy-options policy-statement vrf101_vrf_imp term 1 then accept
set policy-options policy-statement vrf102_103_vrf_imp term 1 from community vrf101
set policy-options policy-statement vrf102_103_vrf_imp term 1 then accept
set policy-options community vrf101 members target:1001:1
set policy-options community vrf102_103 members target:1023:1
set routing-instances vrf101 instance-type vrf
set routing-instances vrf101 routing-options auto-export
set routing-instances vrf101 interface irb.101
set routing-instances vrf101 route-distinguisher 10.1.255.13:101
set routing-instances vrf101 vrf-import vrf101_vrf_imp
set routing-instances vrf101 vrf-target target:1001:1
set routing-instances vrf102_103 instance-type vrf
set routing-instances vrf102_103 routing-options auto-export
set routing-instances vrf102_103 interface irb.102
set routing-instances vrf102_103 interface irb.103
set routing-instances vrf102_103 route-distinguisher 10.1.255.13:123
set routing-instances vrf102_103 vrf-import vrf102_103_vrf_imp
set routing-instances vrf102_103 vrf-target target:1023:1
set routing-options router-id 10.1.255.13
set routing-options autonomous-system 65013
set routing-options forwarding-table export load-balancing-policy
set protocols bgp group underlay type external
set protocols bgp group underlay export send-direct
set protocols bgp group underlay multipath multiple-as
set protocols bgp group underlay neighbor 10.1.13.1 peer-as 65001
set protocols bgp group underlay neighbor 10.1.23.1 peer-as 65002
set protocols bgp group overlay type external
set protocols bgp group overlay multihop
set protocols bgp group overlay local-address 10.1.255.13
set protocols bgp group overlay family evpn signaling
set protocols bgp group overlay multipath multiple-as
set protocols bgp group overlay neighbor 10.1.255.1 peer-as 65001
set protocols bgp group overlay neighbor 10.1.255.2 peer-as 65002
set protocols evpn encapsulation vxlan
set protocols evpn default-gateway no-gateway-community
set protocols evpn multicast-mode ingress-replication
set protocols evpn vni-options vni 101 vrf-target target:101:1
set protocols evpn vni-options vni 102 vrf-target target:102:1
set protocols evpn vni-options vni 103 vrf-target target:103:1
set protocols evpn extended-vni-list all
set switch-options vtep-source-interface lo0.0
set switch-options route-distinguisher 10.1.255.13:1
set switch-options vrf-target target:1:1
set switch-options vrf-target auto
set vlans v101 vlan-id 101
set vlans v101 l3-interface irb.101
set vlans v101 vxlan vni 101
set vlans v102 vlan-id 102
set vlans v102 l3-interface irb.102
set vlans v102 vxlan vni 102
set vlans v103 vlan-id 103
set vlans v103 l3-interface irb.103
set vlans v103 vxlan vni 103

Leaf4

set interfaces xe-0/0/0 unit 0 family inet address 10.1.14.2/30
set interfaces xe-0/0/1 unit 0 family inet address 10.1.24.2/30
set interfaces xe-0/0/2 unit 0 family ethernet-switching interface-mode trunk
set interfaces xe-0/0/2 unit 0 family ethernet-switching vlan members v103
set interfaces irb unit 101 virtual-gateway-accept-data
set interfaces irb unit 101 family inet address 10.1.101.4/24 virtual-gateway-address 10.1.101.254
set interfaces irb unit 102 virtual-gateway-accept-data
set interfaces irb unit 102 family inet address 10.1.102.4/24 virtual-gateway-address 10.1.102.254
set interfaces irb unit 103 virtual-gateway-accept-data
set interfaces irb unit 103 family inet address 10.1.103.4/24 virtual-gateway-address 10.1.103.254
set interfaces lo0 unit 0 family inet address 10.1.255.14/32
set policy-options policy-statement load-balancing-policy then load-balance per-packet
set policy-options policy-statement send-direct term 1 from protocol direct
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.14/32 exact
set policy-options policy-statement send-direct term 1 then accept
set policy-options policy-statement vrf101_vrf_imp term 1 from community vrf102_103
set policy-options policy-statement vrf101_vrf_imp term 1 then accept
set policy-options policy-statement vrf102_103_vrf_imp term 1 from community vrf101
set policy-options policy-statement vrf102_103_vrf_imp term 1 then accept
set policy-options community vrf101 members target:1001:1
set policy-options community vrf102_103 members target:1023:1
set routing-instances vrf101 instance-type vrf
set routing-instances vrf101 routing-options auto-export
set routing-instances vrf101 interface irb.101
set routing-instances vrf101 route-distinguisher 10.1.255.14:101
set routing-instances vrf101 vrf-import vrf101_vrf_imp
set routing-instances vrf101 vrf-target target:1001:1
set routing-instances vrf102_103 instance-type vrf
set routing-instances vrf102_103 routing-options auto-export
set routing-instances vrf102_103 interface irb.102
set routing-instances vrf102_103 interface irb.103
set routing-instances vrf102_103 route-distinguisher 10.1.255.14:123
set routing-instances vrf102_103 vrf-import vrf102_103_vrf_imp
set routing-instances vrf102_103 vrf-target target:1023:1
set routing-options router-id 10.1.255.14
set routing-options autonomous-system 65014
set routing-options forwarding-table export load-balancing-policy
set protocols bgp group underlay type external
set protocols bgp group underlay export send-direct
set protocols bgp group underlay multipath multiple-as
set protocols bgp group underlay neighbor 10.1.14.1 peer-as 65001
set protocols bgp group underlay neighbor 10.1.24.1 peer-as 65002
set protocols bgp group overlay type external
set protocols bgp group overlay multihop
set protocols bgp group overlay local-address 10.1.255.14
set protocols bgp group overlay family evpn signaling
set protocols bgp group overlay multipath multiple-as
set protocols bgp group overlay neighbor 10.1.255.1 peer-as 65001
set protocols bgp group overlay neighbor 10.1.255.2 peer-as 65002
set protocols evpn encapsulation vxlan
set protocols evpn default-gateway no-gateway-community
set protocols evpn multicast-mode ingress-replication
set protocols evpn vni-options vni 101 vrf-target target:101:1
set protocols evpn vni-options vni 102 vrf-target target:102:1
set protocols evpn vni-options vni 103 vrf-target target:103:1
set protocols evpn extended-vni-list all
set switch-options vtep-source-interface lo0.0
set switch-options route-distinguisher 10.1.255.14:1
set switch-options vrf-target target:1:1
set vlans v101 vlan-id 101
set vlans v101 l3-interface irb.101
set vlans v101 vxlan vni 101
set vlans v102 vlan-id 102
set vlans v102 l3-interface irb.102
set vlans v102 vxlan vni 102
set vlans v103 vlan-id 103
set vlans v103 l3-interface irb.103
set vlans v103 vxlan vni 103
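
The leaf configurations above share most of their statements and differ mainly in a handful of device-specific values. As a rough illustration, the following Python sketch derives those values from the leaf number. It assumes the addressing pattern visible in this example holds for every leaf (loopback 10.1.255.1N, AS 6501N, spine-facing links 10.1.1N.2/30 and 10.1.2N.2/30, IRB host address N in each VLAN subnet). The function names `leaf_params` and `irb_commands` and the dictionary keys are hypothetical helpers, not part of Junos OS.

```python
def leaf_params(n, vlans=(101, 102, 103)):
    """Derive device-specific values for leaf number n (1 to 4 in this example).

    Assumption: every leaf follows the numbering pattern used in this example.
    """
    loopback = f"10.1.255.1{n}"
    return {
        "loopback": f"{loopback}/32",
        "router_id": loopback,
        "asn": 65010 + n,
        # xe-0/0/0 faces Spine 1 (10.1.1N.0/30); xe-0/0/1 faces Spine 2 (10.1.2N.0/30)
        "uplinks": {
            "xe-0/0/0": f"10.1.1{n}.2/30",
            "xe-0/0/1": f"10.1.2{n}.2/30",
        },
        # Spine-side link address mapped to the spine AS number
        "underlay_neighbors": {f"10.1.1{n}.1": 65001, f"10.1.2{n}.1": 65002},
        # Unique IRB address per leaf, shared virtual gateway address per VLAN
        "irb": {v: f"10.1.{v}.{n}/24" for v in vlans},
        "virtual_gateway": {v: f"10.1.{v}.254" for v in vlans},
        "switch_rd": f"{loopback}:1",
    }


def irb_commands(n, vlans=(101, 102, 103)):
    """Build the IRB set commands for leaf n in the order used in this example."""
    p = leaf_params(n, vlans)
    cmds = []
    for v in vlans:
        cmds.append(f"set interfaces irb unit {v} virtual-gateway-accept-data")
        cmds.append(
            f"set interfaces irb unit {v} family inet address {p['irb'][v]} "
            f"virtual-gateway-address {p['virtual_gateway'][v]}"
        )
    return cmds


if __name__ == "__main__":
    print("\n".join(irb_commands(3)))
```

Calling `irb_commands(3)` yields the six IRB statements shown for Leaf3. Statements that depend on what is attached to a leaf, such as the ESI-LAG on Leaf2 or the access-port VLAN membership, do not follow the numbering pattern and are deliberately left out of the sketch.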

Spine 1

set interfaces xe-0/0/0 unit 0 family inet address 10.1.11.1/30
set interfaces xe-0/0/1 unit 0 family inet address 10.1.12.1/30
set interfaces xe-0/0/2 unit 0 family inet address 10.1.13.1/30
set interfaces xe-0/0/3 unit 0 family inet address 10.1.14.1/30
set interfaces lo0 unit 0 family inet address 10.1.255.1/32
set policy-options policy-statement load-balancing-policy then load-balance per-packet
set policy-options policy-statement send-direct term 1 from protocol direct
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.1/32 exact
set policy-options policy-statement send-direct term 1 then accept
set routing-options router-id 10.1.255.1
set routing-options autonomous-system 65001
set routing-options forwarding-table export load-balancing-policy
set protocols bgp group underlay type external
set protocols bgp group underlay export send-direct
set protocols bgp group underlay multipath multiple-as
set protocols bgp group underlay neighbor 10.1.11.2 peer-as 65011
set protocols bgp group underlay neighbor 10.1.12.2 peer-as 65012
set protocols bgp group underlay neighbor 10.1.13.2 peer-as 65013
set protocols bgp group underlay neighbor 10.1.14.2 peer-as 65014
set protocols bgp group overlay type external
set protocols bgp group overlay multihop no-nexthop-change
set protocols bgp group overlay local-address 10.1.255.1
set protocols bgp group overlay family evpn signaling
set protocols bgp group overlay multipath multiple-as
set protocols bgp group overlay neighbor 10.1.255.11 peer-as 65011
set protocols bgp group overlay neighbor 10.1.255.12 peer-as 65012
set protocols bgp group overlay neighbor 10.1.255.13 peer-as 65013
set protocols bgp group overlay neighbor 10.1.255.14 peer-as 65014
set protocols bgp group overlay neighbor 10.1.255.2 peer-as 65002

Spine 2

set interfaces xe-0/0/0 unit 0 family inet address 10.1.21.1/30
set interfaces xe-0/0/1 unit 0 family inet address 10.1.22.1/30
set interfaces xe-0/0/2 unit 0 family inet address 10.1.23.1/30
set interfaces xe-0/0/3 unit 0 family inet address 10.1.24.1/30
set interfaces lo0 unit 0 family inet address 10.1.255.2/32
set policy-options policy-statement load-balancing-policy then load-balance per-packet
set policy-options policy-statement send-direct term 1 from protocol direct
set policy-options policy-statement send-direct term 1 from route-filter 10.1.255.2/32 exact
set policy-options policy-statement send-direct term 1 then accept
set routing-options router-id 10.1.255.2
set routing-options autonomous-system 65002
set routing-options forwarding-table export load-balancing-policy
set protocols bgp group underlay type external
set protocols bgp group underlay export send-direct
set protocols bgp group underlay multipath multiple-as
set protocols bgp group underlay neighbor 10.1.21.2 peer-as 65011
set protocols bgp group underlay neighbor 10.1.22.2 peer-as 65012
set protocols bgp group underlay neighbor 10.1.23.2 peer-as 65013
set protocols bgp group underlay neighbor 10.1.24.2 peer-as 65014
set protocols bgp group overlay type external
set protocols bgp group overlay multihop no-nexthop-change
set protocols bgp group overlay local-address 10.1.255.2
set protocols bgp group overlay family evpn signaling
set protocols bgp group overlay multipath multiple-as
set protocols bgp group overlay neighbor 10.1.255.11 peer-as 65011
set protocols bgp group overlay neighbor 10.1.255.12 peer-as 65012
set protocols bgp group overlay neighbor 10.1.255.13 peer-as 65013
set protocols bgp group overlay neighbor 10.1.255.14 peer-as 65014
set protocols bgp group overlay neighbor 10.1.255.1 peer-as 65001
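
The two spine configurations differ only in their own addresses and in which device they list as the other spine. The sketch below, offered as an illustration rather than a supported tool, regenerates the BGP neighbor statements for either spine so they can be compared against the quick configuration. It assumes the numbering used in this example (spine S connects to leaf K over 10.1.SK.0/30, leaf K uses AS 6501K and loopback 10.1.255.1K). The function name `spine_bgp_commands` is hypothetical.

```python
LEAVES = (1, 2, 3, 4)


def spine_bgp_commands(s, leaves=LEAVES):
    """Return underlay and overlay BGP neighbor statements for spine s (1 or 2).

    Assumption: addressing and AS numbering follow the pattern in this example.
    """
    if s not in (1, 2):
        raise ValueError("this example fabric has only Spine 1 and Spine 2")
    other = 2 if s == 1 else 1
    cmds = []
    # Underlay: one EBGP session per directly connected leaf link address
    for k in leaves:
        cmds.append(
            f"set protocols bgp group underlay neighbor 10.1.{s}{k}.2 "
            f"peer-as {65010 + k}"
        )
    # Overlay: multihop EBGP sessions to each leaf loopback
    for k in leaves:
        cmds.append(
            f"set protocols bgp group overlay neighbor 10.1.255.1{k} "
            f"peer-as {65010 + k}"
        )
    # Overlay session to the other spine's loopback
    cmds.append(
        f"set protocols bgp group overlay neighbor 10.1.255.{other} "
        f"peer-as {65000 + other}"
    )
    return cmds


if __name__ == "__main__":
    print("\n".join(spine_bgp_commands(2)))
```

Comparing this output with the neighbor statements in a pasted configuration is a quick way to catch a mistyped peer address or AS number before committing.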