Route Server Implementation

Traditionally you would expect a physical box, for example an MX Series router running Junos 17.4R1, to fill the route server role in a network. This is certainly possible, but you could just as well run virtual appliances, such as vMX or vRR. And since Juniper containerized its routing protocol daemon (rpd), it is now also possible to run typical control plane functions like a route server off-box as a container. This chapter covers what this means, how it works, and the advantages and disadvantages of each of these options.

Junos OS Route Server Platforms

Any Juniper platform running an appropriate version of Junos can function as a route server. However, a route server often maintains a local RIB for each EBGP client, so BGP tends to require more memory than in a conventional BGP deployment. As a result, the memory capacity of a typical router may not provide a scalable solution.

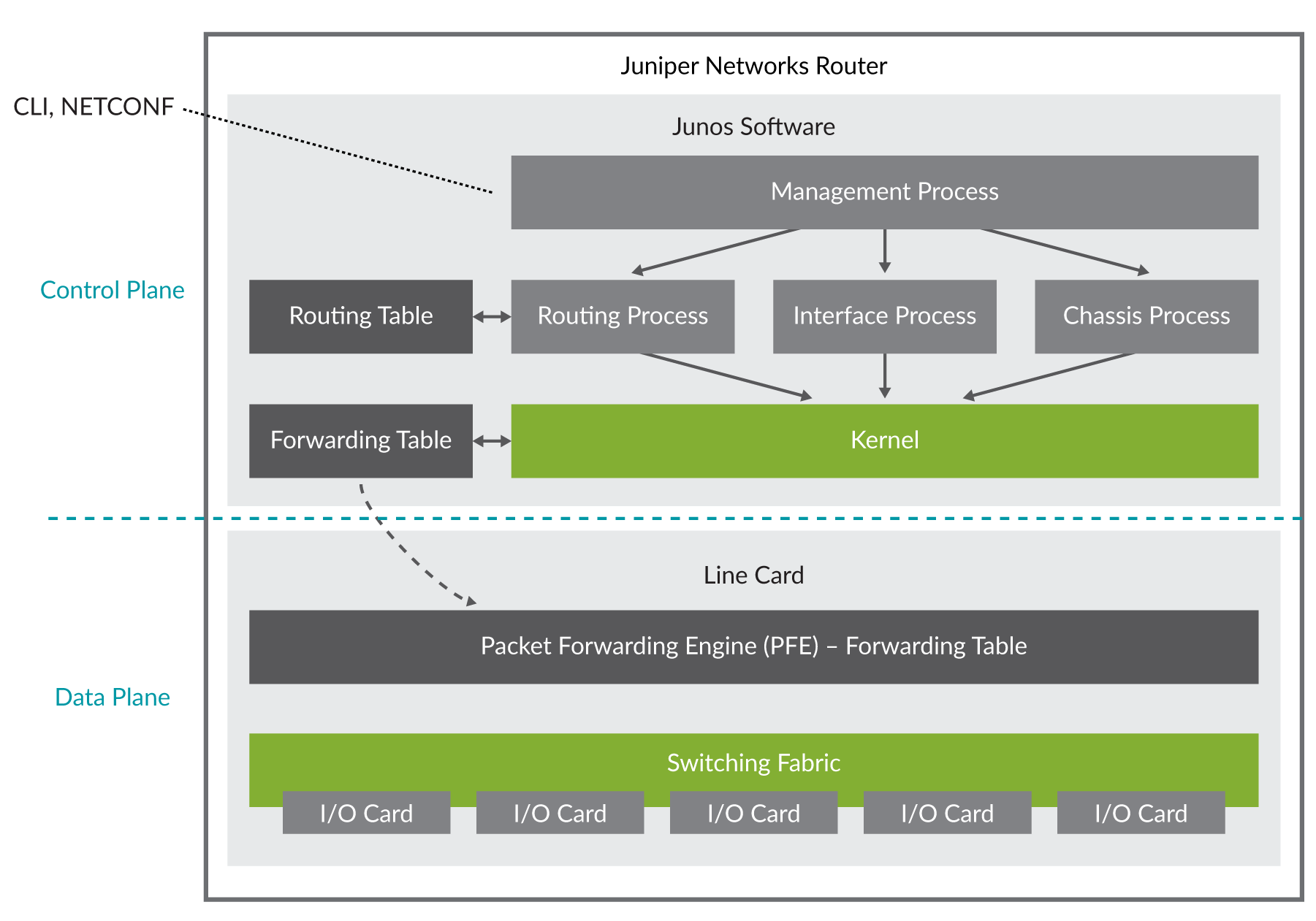

Figure 1 shows a block diagram of a typical Juniper MX Series router.

The memory capacity of a typical router is often dimensioned to meet the target deployment “role” of that router. For example, a Juniper PTX10000 Series serving as a core router doesn’t have the same feature set – and thus memory requirements – as a route server, not to mention the additional hardware in the form of line cards and packet forwarding engines that are necessary for a core router.

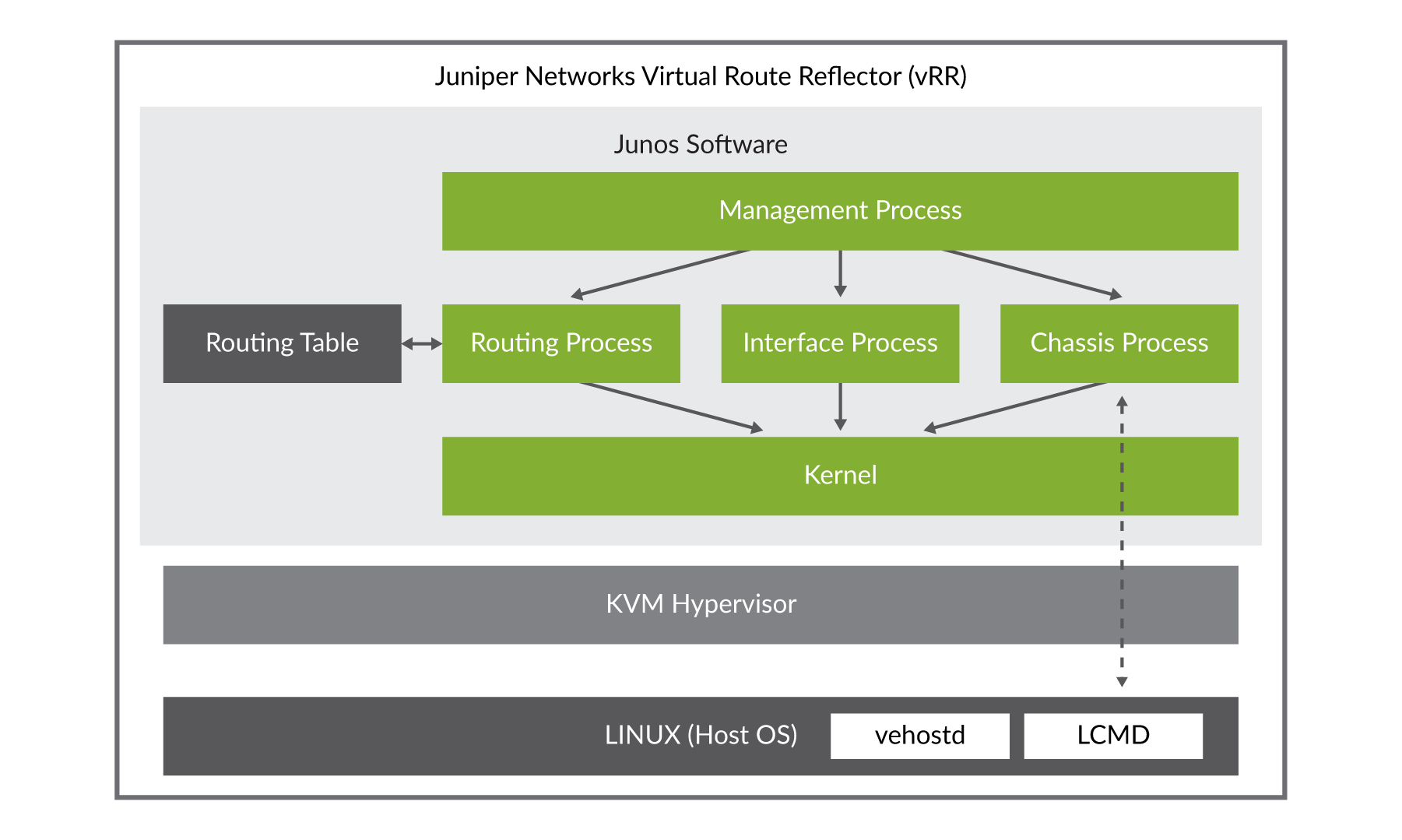

Junos OS Virtual Route Reflector (vRR)

The Junos OS virtual Route Reflector (vRR) allows you to deploy the Junos OS control plane as a general-purpose VM. That VM can run on a 64-bit Intel-based server, such as the Juniper NFX platform, or on an appliance such as the Juniper JRR200. A vRR on an Intel-based server or appliance works the same way as a route reflector on a router running BGP, and it provides a scalable, low-cost alternative to a dedicated hardware platform. The vRR has the following benefits:

Scalability: scale improves with the server hardware (CPU and memory) on which the vRR runs.

Faster and more flexible deployment: the vRR runs on an Intel server and can be managed with open source tools, which reduces typical router maintenance.

Deploying a route server on a vRR platform allows the IXP to dimension the server's CPU and memory for the target deployment. Modern server platforms have far more compute and storage capacity than a traditional Routing Engine, so a route server built this way can scale and perform well beyond what a dedicated router allows.

Junos OS Containerized RPD (cRPD)

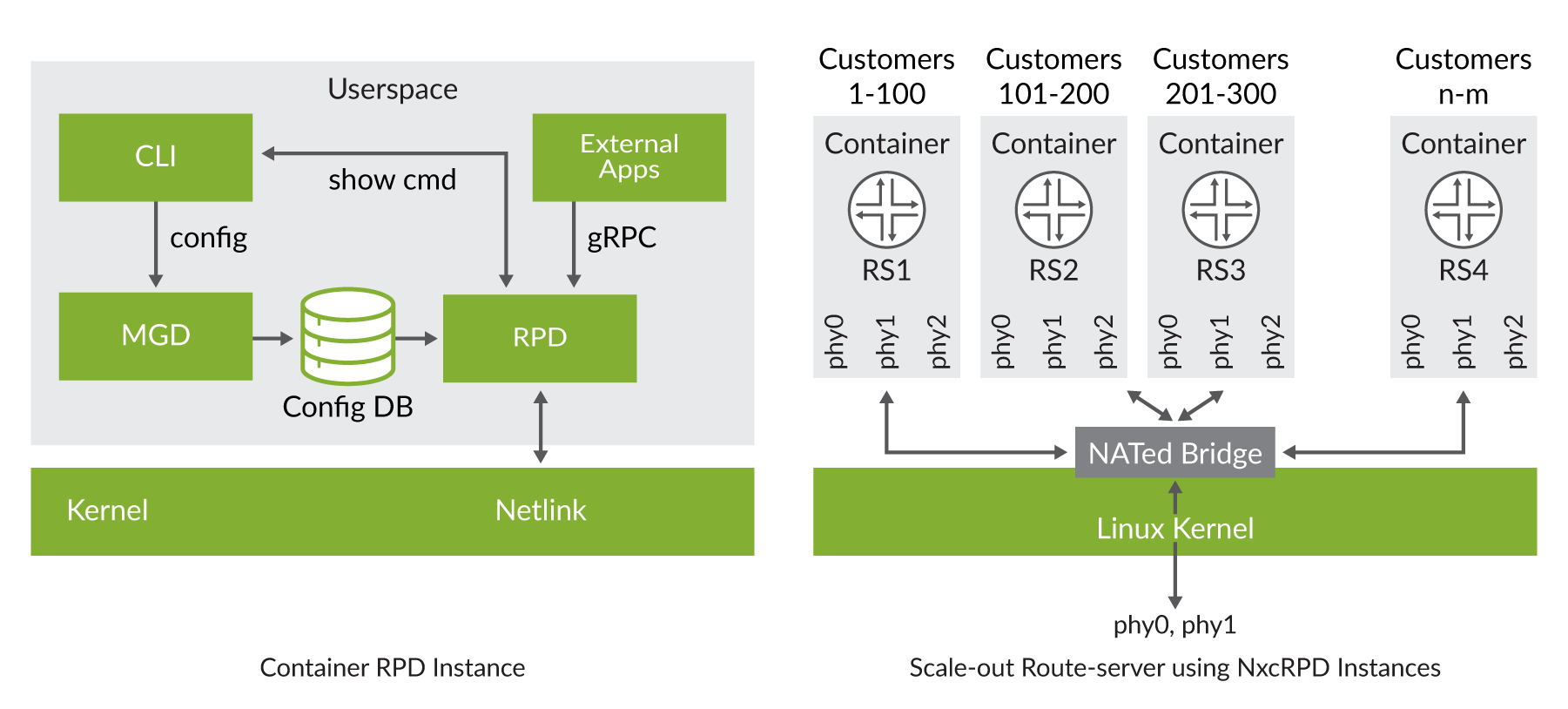

As depicted in the previous two diagrams, rpd is a core component of Junos OS. It runs the various routing protocols (OSPF, IS-IS, RIP, BGP, MPLS, etc.) that learn and distribute route state. Containerized rpd (cRPD) packages rpd as a standalone module, decoupled from the base Junos OS and able to run in Linux-based environments. These environments can be as diverse as host or server systems, VMs, containers, and network devices with separate physical or virtual forwarding planes.

cRPD provides additional options for route server deployments. The simplest is a model much like a vRR. Beyond that, cRPD opens up other avenues, such as spawning many rpd instances behind a Docker bridge. In that model, a single route server instance is presented to the IXP clients, while a cluster of rpd instances may sit behind the Docker bridge. This can lead to greater scale, new high availability models, the option of running different software versions, and, in general, a new way to think about what a route server can be. Figure 3 shows the basic cRPD instance and a high-level view of what a scaled-out route server may look like.

While specific procedures will vary, you can import the cRPD Docker image into your environment, create a container, and bring cRPD up using the following steps:

- Download cRPD from https://support.juniper.net/support/downloads/ and load the image:

  docker load -i crpd:19.2R1.tar.gz

- Create persistent storage volumes for configuration and logs (default size: 10GB):

  docker volume create crpd1-config
  docker volume create crpd1-log

- Create and launch the container:

  docker run --rm --detach --name crpd1 -h crpd1 --privileged -v crpd1-config:/config -v crpd1-log:/var/log -it crpd:19.2R1

It is important to launch cRPD in privileged mode. Failure to do so results in the daemons not starting.

To limit the amount of memory and CPU allocated to a cRPD container (for example, 256 MB and 1 CPU):

256 MB is the minimum amount of memory needed to run cRPD. For a route server, much more memory is recommended.

To launch a cRPD container in host networking mode (the container shares the host's network namespace; by default it gets a separate namespace):
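The docker run options discussed in this section are privileged mode, persistent volumes, memory and CPU limits, and host networking. The following Python sketch gathers them in one place. It only assembles the argument list and does not execute it. The helper name crpd_run_args is hypothetical; --memory, --cpus, and --network are standard Docker CLI options. Docker can reject a custom hostname combined with host networking, so the sketch omits -h in that mode.

```python
from typing import List, Optional


def crpd_run_args(name: str = "crpd1",
                  image: str = "crpd:19.2R1",
                  memory: Optional[str] = None,
                  cpus: Optional[float] = None,
                  host_network: bool = False) -> List[str]:
    """Build (but do not run) a 'docker run' command line for cRPD."""
    args = ["docker", "run", "--rm", "--detach", "--name", name]
    if host_network:
        # Share the host's network namespace; no custom hostname in this mode.
        args += ["--network", "host"]
    else:
        args += ["-h", name]
    args += [
        "--privileged",                  # the daemons do not start without it
        "-v", f"{name}-config:/config",  # persistent configuration volume
        "-v", f"{name}-log:/var/log",    # persistent log volume
    ]
    if memory is not None:
        args += ["--memory", memory]     # e.g. "256m", the cRPD minimum
    if cpus is not None:
        args += ["--cpus", str(cpus)]    # e.g. 1 CPU
    args += ["-it", image]
    return args


if __name__ == "__main__":
    # Limited to 256 MB and 1 CPU.
    print(" ".join(crpd_run_args(memory="256m", cpus=1)))
    # Host networking mode.
    print(" ".join(crpd_run_args(host_network=True)))
```

To actually launch the container, you could pass the returned list to subprocess.run(args, check=True) on a host with Docker installed.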

Basic Management of cRPD

Check the container status:

Access the cRPD CLI (launches the Junos CLI):

Access the cRPD bash shell:

Pause, resume, stop, or destroy the container:

See Appendix C for specific cRPD configuration examples and considerations.

Route Server Configuration

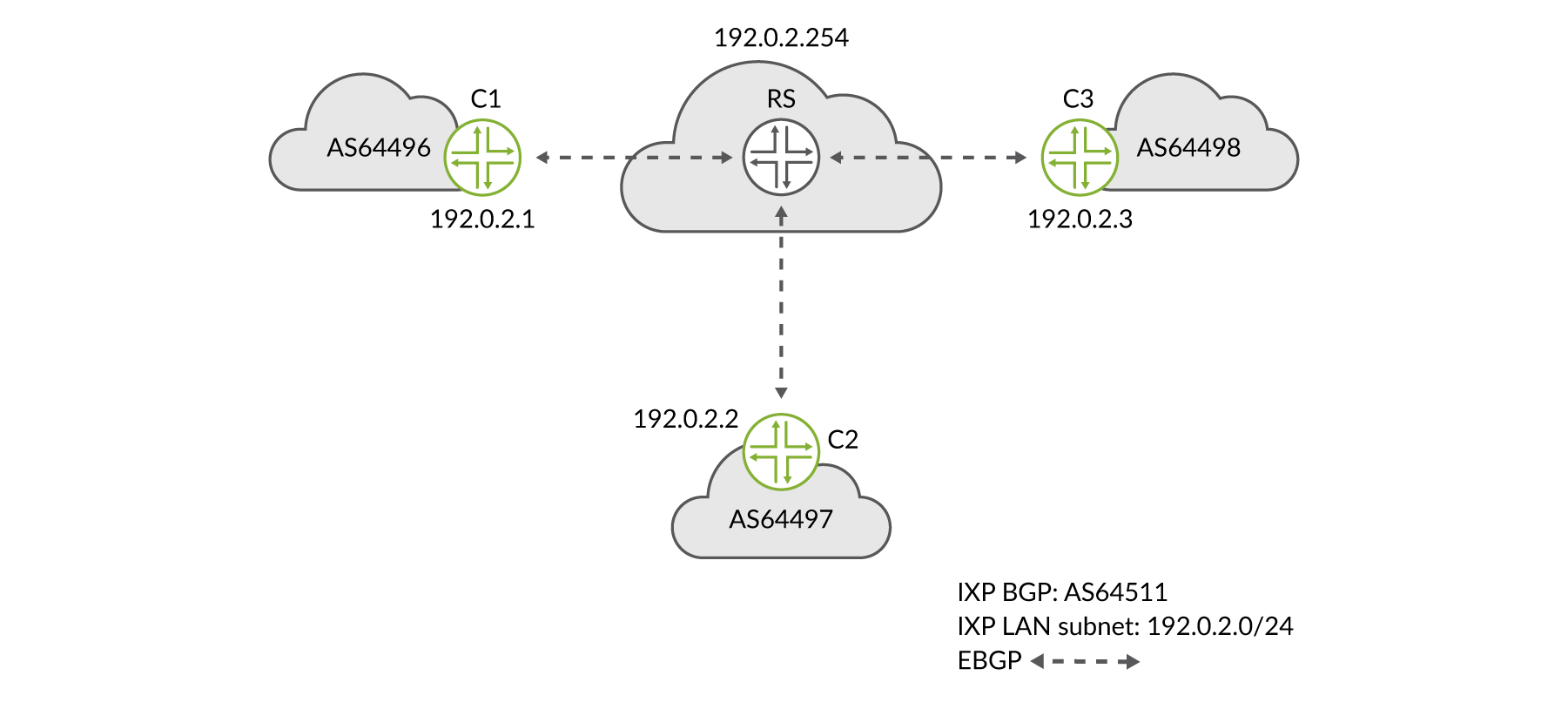

The following configuration, monitoring, and troubleshooting examples are based on the example IXP network shown in Figure 4.

Basic Junos Route-Server Client Configuration

The Junos route server supports EBGP transparency by modifying normal BGP route propagation so that neither transitive nor non-transitive attributes are stripped or modified while routes are propagated. These changes to normal EBGP behavior are enabled with the route-server-client configuration statement:
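To make the difference concrete, here is a simplified Python model (not Junos code). It contrasts normal EBGP propagation with the transparent behavior that route-server-client enables. The normal EBGP path is deliberately simplified: it prepends the local AS, sets the next hop to itself, and drops the non-transitive MED. Real EBGP speakers may, for example, keep a third-party next hop on a shared subnet. The AS numbers and addresses are hypothetical documentation values.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class Route:
    prefix: str
    as_path: Tuple[int, ...]
    next_hop: str
    med: Optional[int] = None
    communities: Tuple[str, ...] = ()


def propagate_normal_ebgp(route: Route, local_as: int, local_addr: str) -> Route:
    """Simplified normal EBGP: prepend own AS, next-hop self, drop MED."""
    return replace(route,
                   as_path=(local_as,) + route.as_path,
                   next_hop=local_addr,
                   med=None)  # MED is non-transitive: not passed to other ASes


def propagate_route_server(route: Route) -> Route:
    """Transparent route server: attributes are neither stripped nor modified."""
    return route


# Hypothetical values: C1 is AS 64496 at 192.0.2.1; the route server is
# AS 64511 at 192.0.2.254 on the IX LAN.
learned = Route("198.51.100.0/24", (64496,), "192.0.2.1", med=10,
                communities=("64496:123",))
print(propagate_normal_ebgp(learned, 64511, "192.0.2.254"))
print(propagate_route_server(learned) == learned)  # True
```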

Junos Route Server-Client Configuration using a Non-Forwarding Routing Instance

A route-server client-specific RIB is a distinct view of a BGP Loc-RIB that can contain different routes for the same destination than other views. Route-server clients, via their peer groups, may associate with one individual client-specific view or a shared common RIB.

In order to provide the ability to advertise different routes to different clients for the same destination, it is conceptually necessary to allow for multiple instances of the BGP path selection to occur for the same destination but in different client/group contexts.

Junos OS implements flexible policy control with per-client/group path selection. It uses non-forwarding routing instances to provide multiple instances of the BGP pipeline, including BGP path selection, Loc-RIB, and policy. On a Junos route server, route server clients are placed in BGP groups, and each group is configured within a separate non-forwarding routing instance. This approach leverages the fact that BGP running within a routing instance performs its own path selection and has a RIB separate from BGP running in other routing instances:
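The effect of per-client path selection can be sketched in a few lines of Python. This is a conceptual model, not Junos code. Each hypothetical ClientInstance object stands in for one non-forwarding routing instance with its own import policy and Loc-RIB. The decision process is reduced to shortest AS path with a deterministic tie-break. Client names, prefixes, and AS numbers are illustrative.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Route:
    prefix: str
    peer: str                  # client that advertised the route
    as_path: Tuple[int, ...]


def best_path(candidates: List[Route]) -> Route:
    # Greatly simplified decision process: shortest AS path, then peer name.
    return min(candidates, key=lambda r: (len(r.as_path), r.peer))


@dataclass
class ClientInstance:
    """One non-forwarding instance: its own policy, path selection, Loc-RIB."""
    client: str
    accept: Callable[[Route], bool]
    loc_rib: Dict[str, Route] = field(default_factory=dict)

    def select(self, routes: List[Route]) -> None:
        candidates: Dict[str, List[Route]] = {}
        for r in routes:
            # Skip the client's own routes and anything its policy rejects.
            if r.peer != self.client and self.accept(r):
                candidates.setdefault(r.prefix, []).append(r)
        self.loc_rib = {p: best_path(rs) for p, rs in candidates.items()}


routes = [
    Route("203.0.113.0/24", "C1", (64496,)),
    Route("203.0.113.0/24", "C3", (64498, 64499)),
]
c2 = ClientInstance("C2", accept=lambda r: r.peer != "C1")  # C2 declines C1
c4 = ClientInstance("C4", accept=lambda r: True)            # C4 accepts all
for instance in (c2, c4):
    instance.select(routes)
print(c2.loc_rib["203.0.113.0/24"].peer)  # C3
print(c4.loc_rib["203.0.113.0/24"].peer)  # C1
```

The same destination resolves to different best paths in the two instances. A single shared Loc-RIB could not express this.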

Basic Route-Server Client Configuration

The following CLI example shows a basic IXP member (client) router configuration. It is quite simple compared to the route server configuration. In the most basic sense, the client only needs to tag the routes it wants to advertise at the IXP with the required communities, and the route server does the rest:

In this example, let's assume that C1's policy is open and allows its prefixes to be advertised to all IXP members. C1 therefore attaches the standard BGP community 64496:123 to all of its advertised prefixes:

C2's peering policy, by contrast, dictates that its prefixes be advertised only to C1 and to no one else. C2 therefore advertises its prefixes with the standard BGP community 64497:64496, which tells the route server to restrict instance-import to C1's routing instance:
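The community scheme in these two examples can be modeled as a small Python function. This is a hedged sketch based only on the values shown, and it makes several assumptions. It assumes C1 is AS 64496 and C2 is AS 64497. It assumes a low-order value of 123 means "advertise to all members," and that any other low-order value names the one member AS allowed to receive the route. It also assumes only communities set by the originating member are honored. A real IXP publishes its own community definitions.

```python
from typing import Dict, Iterable, Set

ANNOUNCE_TO_ALL = 123  # assumed meaning of the low-order value in 64496:123


def recipients(origin_asn: int, communities: Iterable[str],
               members: Dict[str, int]) -> Set[str]:
    """Names of the client instances allowed to import a route (sketch)."""
    allowed: Set[str] = set()
    for community in communities:
        high, low = (int(part) for part in community.split(":"))
        if high != origin_asn:
            continue  # assumption: only the originating member's tags count
        if low == ANNOUNCE_TO_ALL:
            allowed |= {n for n, asn in members.items() if asn != origin_asn}
        else:
            allowed |= {n for n, asn in members.items() if asn == low}
    return allowed


members = {"C1": 64496, "C2": 64497, "C3": 64498}  # hypothetical member ASes
print(sorted(recipients(64496, ["64496:123"], members)))    # ['C2', 'C3']
print(sorted(recipients(64497, ["64497:64496"], members)))  # ['C1']
```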

Default EBGP Route Propagation Behavior Without Policies (RFC8212)

Junos OS does not yet natively support RFC8212 (https://tools.ietf.org/html/rfc8212), so a SLAX script is available and should be used to achieve this behavior.

Rfc8212.slax is a strict implementation: in place of native RFC8212 support, it applies a deny-all policy when no export policy is present.

A loose form of the policy can be found at: https://github.com/packetsource/rfc8212-Junos.

Apply the following configuration change:
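The default behavior that RFC8212 requires can be summarized in a short Python sketch; the script enforces this behavior for export. The sketch is a model, not Junos code. With rfc8212=True, an EBGP session with no policy configured in a given direction passes no routes. With rfc8212=False, it falls back to a permissive legacy default that passes every route. RFC8212 applies this rule to both import and export. The function name apply_ebgp_policy is hypothetical.

```python
from typing import Callable, Iterable, List, Optional

Policy = Callable[[str], bool]


def apply_ebgp_policy(prefixes: Iterable[str], policy: Optional[Policy],
                      rfc8212: bool = True) -> List[str]:
    """Filter prefixes through an EBGP import or export policy."""
    if policy is None:
        # No policy configured: RFC8212 passes nothing; legacy default passes all.
        return [] if rfc8212 else list(prefixes)
    return [p for p in prefixes if policy(p)]


routes = ["198.51.100.0/24", "203.0.113.0/24"]
print(apply_ebgp_policy(routes, None))                 # []
print(apply_ebgp_policy(routes, None, rfc8212=False))  # both prefixes
print(apply_ebgp_policy(routes, lambda p: p.startswith("198.")))
```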

Generalized TTL Security Mechanism (RFC3682)

The Generalized TTL Security Mechanism (GTSM) is designed to protect a router's IP-based control plane from CPU utilization-based attacks. GTSM relies on the fact that the vast majority of protocol peerings are established between adjacent routers, as is the case for EBGP peers on an IX LAN. Since TTL spoofing is considered nearly impossible, a mechanism based on an expected TTL value can provide a simple and reasonably robust defense against infrastructure attacks that use forged protocol packets from outside the network:
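The GTSM check itself is simple arithmetic, sketched here in Python. GTSM is now specified in RFC5082, which obsoletes RFC3682. A GTSM sender transmits with TTL 255, and each intermediate router decrements the TTL by one. The receiver accepts a packet only if its TTL is at least 255 minus the configured trust radius. A radius of 0 corresponds to a directly connected peer, such as an IX LAN neighbor. The function names are illustrative.

```python
MAX_TTL = 255  # GTSM senders always transmit with the maximum TTL


def arrival_ttl(sent_ttl: int, intermediate_routers: int) -> int:
    """Each intermediate router decrements the TTL by one."""
    return sent_ttl - intermediate_routers


def gtsm_accept(received_ttl: int, trust_radius: int = 0) -> bool:
    """Accept only packets whose TTL is >= 255 - trust_radius."""
    return received_ttl >= MAX_TTL - trust_radius


# An IX LAN peer is directly connected: no intermediate routers.
print(gtsm_accept(arrival_ttl(MAX_TTL, 0)))  # True
# A remote attacker three routers away cannot arrive with TTL 255,
# even if it sets the maximum TTL when sending.
print(gtsm_accept(arrival_ttl(MAX_TTL, 3)))  # False
```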

Maximum Prefix Limits

The BGP prefix-limit feature allows you to control how many prefixes can be received from a BGP neighbor. It is useful for ensuring that a client router does not accept more than a previously agreed upon number of prefixes from a route server. This protects the client router from being overloaded, intentionally or unintentionally, by routes that IXP members send through the route server:

Alternatively, the route server can be configured to limit the number of prefixes that are accepted from an IXP member. This may or may not be useful, depending on how the input prefix validation is handled by the IXP. For example, if invalid routes are discarded, then the number of accepted prefixes may differ from the number of advertised prefixes:
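The difference between counting advertised prefixes and counting accepted prefixes can be shown with a small Python model. Several details are hypothetical: the function evaluate_limit, the 100-prefix agreement, and the choice of which prefixes fail validation. What a real router does when the limit is exceeded (log, tear down the session, and so on) depends on its configuration.

```python
from typing import Callable, Iterable, Tuple


def evaluate_limit(advertised: Iterable[str], is_valid: Callable[[str], bool],
                   maximum: int, count_accepted: bool = True) -> Tuple[int, bool]:
    """Return (prefixes counted against the limit, whether the limit is exceeded)."""
    prefixes = list(advertised)
    counted = [p for p in prefixes if is_valid(p)] if count_accepted else prefixes
    return len(counted), len(counted) > maximum


# Hypothetical member advertising 120 prefixes, 20 of which fail validation.
advertised = [f"198.18.{i}.0/24" for i in range(120)]
valid = set(advertised[:100])
print(evaluate_limit(advertised, valid.__contains__, maximum=100))
# (100, False): only accepted prefixes count
print(evaluate_limit(advertised, valid.__contains__, maximum=100,
                     count_accepted=False))
# (120, True): every advertised prefix counts
```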

Local RIB Import/Export Policy Configuration Examples

To propagate routes between route server clients, routes need to be leaked between the RIBs of the routing-instances based on configured policies and/or well-defined community values. Configuring a full mesh of route leaking between n client-specific routing instances using instance-import and the instance qualifier requires n table-specific policies, each with n-1 policy terms, explicitly naming the routing instances from which to leak. Thus, the policy configuration explodes on the order of O(n^2).
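The growth is easy to quantify. This Python sketch counts the policy terms needed for a full mesh of n client instances, as described above. It compares that count with a scheme in which each instance uses a fixed number of terms, assumed here to be one. The function names are illustrative.

```python
def full_mesh_terms(n: int) -> int:
    """n table-specific policies, each with n-1 terms naming the other instances."""
    return n * (n - 1)


def fixed_size_terms(n: int, terms_per_instance: int = 1) -> int:
    """Total terms when each instance's policy has a constant size."""
    return n * terms_per_instance


for n in (10, 100, 500):
    print(n, full_mesh_terms(n), fixed_size_terms(n))
# 10 90 10
# 100 9900 100
# 500 249500 500
```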

As a configuration convenience, an instance-any policy qualifier is provided for use with instance-import policies. The instance-any qualifier leaks routes from all other routing instances into the instance in which instance-import is configured. The instance-import policy may include other terms that filter further to match the IXP global community policies.

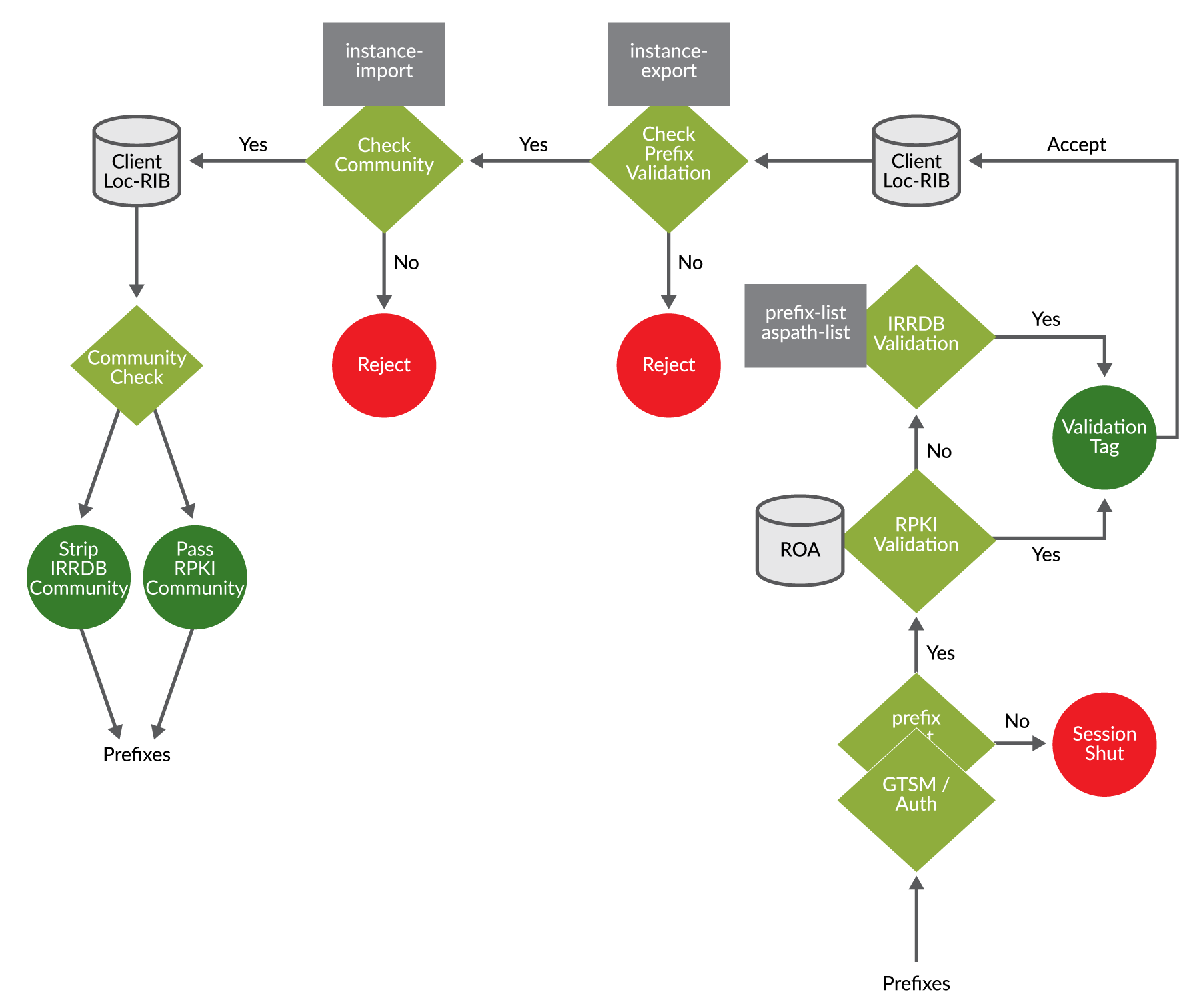

The example routing policies described next follow the workflow illustrated in Figure 5 and described in the Internet Exchange Point Overview chapter. The workflow follows routes announced from client C1 to the route server and then on to client C2.

Example Instance Import Policies

Let's first look at how to use the instance-any policy qualifier along with a standard community matching filter to import routes from all routing instances that have an open routing policy.

You can see how the policy term first uses the instance-any qualifier and then matches community 64511:111, which, for this example, represents the IXP global community for an open policy. The policy is then applied at the routing instance's instance-import attachment point:

As alluded to at the beginning of this section, an alternative way to configure a subset of instances is to use the instance-list identifier. The next example does not use the instance-any qualifier. Instead, it lists each client routing instance individually, along with the community value 0:100, which is the IXP global community used to block routing advertisements to specific route server clients:
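Both import styles can be modeled in Python to show which routes land in a given client instance. This is a conceptual sketch, not Junos policy. With sources=None, the function behaves like instance-any: it scans every other instance. With an explicit list, it behaves like naming instances one by one. In both cases it keeps only routes carrying the match community, by default the 64511:111 open-policy community. The rib layout and the function name are hypothetical, and the 0:100 blocking community is not modeled.

```python
from typing import Dict, Iterable, List, Optional

OPEN_POLICY = "64511:111"  # IXP global "open policy" community in the example


def instance_import(target: str, ribs: Dict[str, List[dict]],
                    match_community: str = OPEN_POLICY,
                    sources: Optional[Iterable[str]] = None) -> List[dict]:
    """Routes leaked into the `target` instance.

    sources=None behaves like instance-any (every other instance); an explicit
    list behaves like naming each instance individually.
    """
    if sources is None:
        names = [n for n in ribs if n != target]
    else:
        names = [n for n in sources if n != target]
    return [route for n in names for route in ribs.get(n, [])
            if match_community in route["communities"]]


ribs = {
    "C1": [{"prefix": "198.51.100.0/24", "communities": {"64511:111"}}],
    "C2": [{"prefix": "203.0.113.0/24", "communities": set()}],
    "C3": [],
}
print([r["prefix"] for r in instance_import("C3", ribs)])  # ['198.51.100.0/24']
print(instance_import("C3", ribs, sources=["C2"]))          # []
```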

Example Import Policy

This example policy accepts prefixes and tags them with a valid or invalid community value based on IRR object validation, as described in the previous prefix validation section. Third-party tool integration, described in a later section, can be used to build the route-filter-list and as-path-group lists automatically. The example policy here adds the community tags that later instance-import and instance-export policies use:
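The tagging decision can be sketched in Python. The sketch makes several simplifying assumptions. The tag values 64511:1 (valid) and 64511:0 (invalid) and the function irr_tag are hypothetical. The route-filter-list check is an exact prefix match only. The as-path-group check looks only at the origin AS, which is the last AS in the path.

```python
import ipaddress
from typing import Iterable, Set, Tuple

IRR_VALID = "64511:1"    # hypothetical IXP tag for IRR-valid routes
IRR_INVALID = "64511:0"  # hypothetical IXP tag for IRR-invalid routes


def irr_tag(prefix: str, as_path: Tuple[int, ...],
            route_filter_list: Iterable[str], allowed_origins: Set[int]) -> str:
    """Return the community an import policy would attach (simplified).

    route_filter_list: prefixes registered in the IRR (exact match only).
    allowed_origins:   origin ASes an as-path-group would permit.
    """
    registered = {ipaddress.ip_network(p) for p in route_filter_list}
    prefix_ok = ipaddress.ip_network(prefix) in registered
    origin_ok = bool(as_path) and as_path[-1] in allowed_origins
    return IRR_VALID if prefix_ok and origin_ok else IRR_INVALID


# Hypothetical IRR data for member C1 (AS 64496).
rfl = ["198.51.100.0/24"]
origins = {64496}
print(irr_tag("198.51.100.0/24", (64496,), rfl, origins))        # 64511:1
print(irr_tag("192.0.2.0/24", (64496,), rfl, origins))           # 64511:0
print(irr_tag("198.51.100.0/24", (64496, 64500), rfl, origins))  # 64511:0
```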

Example Origin Validation for BGP (RPKI)

An alternative to writing and maintaining explicit as-path-group and route-filter-list lists is to use RPKI, as described earlier in this book. The following policy checks the RPKI validation database and marks each destination with a community reflecting the returned validation state (valid, invalid, or unknown):

The format of the communities shown here follows RFC8097 Section 2. The high-order octet of the extended type field is 0x43, which indicates that the community is non-transitive, and the low-order octet, as assigned by IANA, is 0x00. The last octet of the extended community is an unsigned integer that gives the route's validation state, with the following values:

0 = Lookup result = valid

1 = Lookup result = not found

2 = Lookup result = invalid
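The lookup and the resulting community can be sketched together in Python. The validate function is a simplified take on RFC6811 route origin validation, and the ROA data is hypothetical. A route is "not found" when no ROA covers it. It is "valid" when a covering ROA matches both the origin AS and the maximum length. It is "invalid" otherwise. The encoder then builds the 8-octet RFC8097 community: type 0x43, subtype 0x00, five reserved zero octets, and the state in the last octet.

```python
import ipaddress
from typing import Iterable, Tuple

VALID, NOT_FOUND, INVALID = 0, 1, 2   # RFC8097 validation-state values

Roa = Tuple[str, int, int]            # (prefix, max_length, origin_asn)


def validate(prefix: str, origin_asn: int, roas: Iterable[Roa]) -> int:
    """Simplified route origin validation in the style of RFC6811."""
    route = ipaddress.ip_network(prefix)
    covered = False
    for roa_prefix, max_length, roa_asn in roas:
        roa = ipaddress.ip_network(roa_prefix)
        if route.version != roa.version or not route.subnet_of(roa):
            continue                   # this ROA does not cover the route
        covered = True
        if route.prefixlen <= max_length and origin_asn == roa_asn:
            return VALID
    return INVALID if covered else NOT_FOUND


def origin_validation_community(state: int) -> bytes:
    """RFC8097 extended community: type 0x43 (non-transitive), subtype 0x00,
    five reserved zero octets, and the validation state in the last octet."""
    if state not in (VALID, NOT_FOUND, INVALID):
        raise ValueError(f"unknown validation state {state}")
    return bytes([0x43, 0x00, 0, 0, 0, 0, 0, state])


# Hypothetical ROA: AS 64496 may originate 198.51.100.0/22 up to /24.
roas = [("198.51.100.0/22", 24, 64496)]
for pfx, asn in [("198.51.100.0/24", 64496), ("198.51.100.0/25", 64496),
                 ("198.51.100.0/24", 64500), ("203.0.113.0/24", 64496)]:
    state = validate(pfx, asn, roas)
    print(pfx, asn, state, origin_validation_community(state).hex())
```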

Example Routing Instance Export Policies

The next instance-export policy example rejects any prefix that has been marked invalid by the RPKI database lookup, a route-filter-list miss, or an as-path-group miss. All other prefixes are allowed and are then checked by the instance-import policies:
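The filtering step can be modeled in a few lines of Python. The route dictionaries, the rpki state strings, and the 64511:0 tag are hypothetical stand-ins for whatever an import policy has already marked. The sketch drops anything invalid and leaves the final per-client decision to instance-import.

```python
from typing import Dict, List

IRR_INVALID = "64511:0"  # hypothetical tag an import policy sets on IRR misses


def instance_export(routes: List[Dict]) -> List[Dict]:
    """Reject RPKI-invalid or IRR-invalid routes; pass the rest on, where
    instance-import policies decide which client instances receive them."""
    return [r for r in routes
            if r["rpki"] != "invalid" and IRR_INVALID not in r["communities"]]


routes = [
    {"prefix": "198.51.100.0/24", "rpki": "valid", "communities": {"64511:1"}},
    {"prefix": "198.51.100.0/25", "rpki": "invalid", "communities": {"64511:1"}},
    {"prefix": "192.0.2.0/24", "rpki": "not-found", "communities": {"64511:0"}},
    {"prefix": "203.0.113.0/24", "rpki": "not-found", "communities": {"64511:1"}},
]
print([r["prefix"] for r in instance_export(routes)])
# ['198.51.100.0/24', '203.0.113.0/24']
```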

Example Export Policy

Most, if not all, of the policy has already been implemented across the import, instance-export, and instance-import policies. The only remaining policy may be to remove the prefix validation communities before sending the prefixes on to route server clients, possibly including the RPKI valid/invalid/unknown communities. The example RPKI policy shown above is quite strict, because it drops RPKI invalid prefixes at instance-export. Alternatively, an IXP may choose merely to pass on the validation results to its members as a service and let them decide how to use those results.
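The final export step can be sketched in Python as a set operation on a route's communities. Every community name here is a hypothetical placeholder. IRR_TAGS stands for internal validation tags, and RPKI_TAGS stands for the RPKI validation-state communities. The share_rpki flag reflects the choice described above between stripping the RPKI results and passing them on as a service.

```python
from typing import FrozenSet, Set

# Hypothetical internal tags added by earlier policies.
IRR_TAGS: FrozenSet[str] = frozenset({"64511:0", "64511:1"})
# Placeholder names standing in for the RPKI validation-state communities.
RPKI_TAGS: FrozenSet[str] = frozenset({"rpki-valid", "rpki-not-found", "rpki-invalid"})


def export_communities(communities: Set[str], share_rpki: bool) -> Set[str]:
    """Strip internal validation tags before advertising to a client.
    With share_rpki=True, RPKI results are passed on as a service."""
    strip = set(IRR_TAGS) if share_rpki else set(IRR_TAGS | RPKI_TAGS)
    return set(communities) - strip


tags = {"64496:123", "64511:1", "rpki-valid"}
print(sorted(export_communities(tags, share_rpki=False)))  # ['64496:123']
print(sorted(export_communities(tags, share_rpki=True)))   # ['64496:123', 'rpki-valid']
```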

Never propagate 'RPKI invalids'; there is not a single good reason to do so. "Invalid == reject" is the only legitimate option: https://www.google.nl/search?q=Invalid+%3D%3D+reject.