Appendixes

Appendix A: Mutually Agreed Upon Norms for Routing Security (MANRS)

By now you should have an idea of how to deploy a Junos OS-based route server. Or maybe you have even built one already?

If so, did you take MANRS into account? MANRS is a global initiative, supported by the Internet Society, that provides crucial fixes to reduce the most common routing threats. There’s a special section for IXPs on the MANRS website: https://www.manrs.org/ixps/#actions.

For example, MANRS asks you to prevent the propagation of incorrect routing information and to help IXP members maintain accurate routing information in an appropriate repository (IRR and/or RPKI).
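To make "incorrect routing information" concrete, here is a minimal Python sketch of RPKI-style route origin validation as a route server's filtering logic might apply it. It is an illustration only: the `Roa` type, the `validate_origin` function, and the sample data (documentation prefixes and AS numbers) are assumptions made for this example, not part of any Junos OS or MANRS tooling.

```python
import ipaddress
from typing import NamedTuple, Sequence


class Roa(NamedTuple):
    """Hypothetical, simplified Route Origin Authorization record."""
    prefix: str       # prefix the holder has authorized
    max_length: int   # longest prefix length that may be announced
    asn: int          # AS number authorized to originate the prefix


def validate_origin(prefix: str, origin_asn: int, roas: Sequence[Roa]) -> str:
    """Classify an announcement as 'valid', 'invalid', or 'not-found'."""
    route = ipaddress.ip_network(prefix)
    covered = False
    for roa in roas:
        roa_net = ipaddress.ip_network(roa.prefix)
        # Only compare prefixes of the same address family.
        if route.version != roa_net.version:
            continue
        # A ROA is relevant only if it covers the announced prefix.
        if not route.subnet_of(roa_net):
            continue
        covered = True
        if route.prefixlen <= roa.max_length and roa.asn == origin_asn:
            return "valid"
    # Covered by at least one ROA but matched by none: reject candidate.
    return "invalid" if covered else "not-found"


# Sample data using documentation prefixes and AS numbers.
SAMPLE_ROAS = [Roa("192.0.2.0/24", 24, 64496)]
```

A route server policy would typically reject announcements that come back as "invalid" and decide locally how to treat "not-found".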

Joining MANRS, actively implementing its guidelines, and promoting them shows that you care about the stability and security of the Internet.

Appendix B: Relevant Standardization Documents

Most of what we’ve described in this book can be found in Requests for Comments (RFCs). We’re linking some of them here for reference and further reading.

RFC7947: Internet Exchange BGP Route Server https://tools.ietf.org/html/rfc7947

RFC7948: Internet Exchange BGP Route Server Operations https://tools.ietf.org/html/rfc7948

RFC1997: BGP Community Attribute https://tools.ietf.org/html/rfc1997

RFC4360: BGP Extended Communities Attribute https://tools.ietf.org/html/rfc4360

RFC3682: Generalized TTL Security Mechanism (since obsoleted by RFC5082) https://tools.ietf.org/html/rfc3682

RFC8212: Default eBGP Route Propagation Behavior Without Policies https://tools.ietf.org/html/rfc8212

BGP Maximum Prefix Limits (Draft) https://tools.ietf.org/html/draft-sa-grow-maxprefix-02

Appendix C: Emerging Technologies

Ethernet VPNs

Ethernet VPN (EVPN) enables you to connect dispersed customer sites using a Layer 2 virtual bridge. Unlike the existing virtual private LAN service (VPLS), EVPN enables control plane-based MAC learning in the core: the provider edge (PE) devices participating in an EVPN instance learn customer MAC routes in the control plane using MP-BGP.

Control plane MAC learning brings a number of benefits that allow EVPN to address the shortcomings of VPLS, including support for multihoming with per-flow load balancing.

EVPN provides the following benefits:

Integrated Services: Integrated Layer 2 and Layer 3 VPN services, L3VPN-like principles and operational experience for scalability and control, all-active multihoming and PE load balancing using ECMP, and load balancing of traffic to and from customer edge (CE) routers that are multihomed to multiple PEs.

Network Efficiency: Elimination of the flood-and-learn mechanism, fast reroute, resiliency, faster reconvergence when the link to a dual-homed server fails, and optimized delivery of broadcast, unknown-unicast, and multicast (BUM) traffic.

Service Flexibility: MPLS data plane encapsulation, support for existing and new service types (E-LAN, E-Line), peer PE auto-discovery, and redundancy group auto-sensing.

The following EVPN modes are supported:

Single-homing: This enables you to connect a CE device to one PE device.

Multihoming: This enables you to connect a CE device to more than one PE device. Multihoming ensures redundant connectivity. The redundant PE device ensures that there is no traffic disruption when there is a network failure. Following are the types of multihoming:

All-Active: In all-active mode, all the PEs attached to a particular Ethernet segment are allowed to forward traffic to and from that Ethernet segment.
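As a rough illustration of per-flow load balancing in all-active mode, the following Python sketch hashes a flow's 5-tuple to choose one of the PEs attached to an Ethernet segment. The `Flow` type, the `pick_pe` function, the PE names, and the use of SHA-256 are all assumptions made for readability; real devices use their own hardware hash functions and field selections.

```python
import hashlib
from typing import NamedTuple, Sequence


class Flow(NamedTuple):
    """Hypothetical 5-tuple identifying one traffic flow."""
    src_ip: str
    dst_ip: str
    protocol: int
    src_port: int
    dst_port: int


def pick_pe(flow: Flow, segment_pes: Sequence[str]) -> str:
    """Choose one PE of an all-active Ethernet segment for this flow.

    Packets of the same flow always hash to the same PE, so they stay
    in order, while different flows spread across all attached PEs.
    """
    if not segment_pes:
        raise ValueError("Ethernet segment has no attached PEs")
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(segment_pes)
    return segment_pes[index]


# Illustrative segment with two attached PEs (names are made up).
SEGMENT_PES = ["pe1", "pe2"]
EXAMPLE_FLOW = Flow("192.0.2.10", "198.51.100.20", 6, 49152, 179)
```

Calling `pick_pe(EXAMPLE_FLOW, SEGMENT_PES)` repeatedly returns the same PE, which is the property that keeps a single flow on one path.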

For more information, see https://www.juniper.net/uk/en/training/jnbooks/day-one/proof-concept-labs/using-ethernet-vpns/, a proof of concept straight from Juniper’s Proof of Concept Labs (POC Labs). The book supplies a sample topology, all the configurations, validation testing, and some high availability (HA) tests.

Routing in Fat Trees (RIFT)

IXPs have been steadily growing and connecting more networks within a single L2 network. Because of their topologies (traditional and emerging), IXP networks have a unique set of requirements: their traffic patterns need fast restoration and little human intervention.

Lately Clos and Fat-Tree topologies have gained popularity in data center networks as a result of a trend towards centralized data center network architectures that may deliver computation and storage services. It may be worth investigating whether such a topology could be suitable for an IXP environment as well.

The Routing in Fat Trees (RIFT) protocol addresses the demands of routing in Clos and Fat-Tree networks via a mixture of link-state and distance-vector techniques, colloquially described as link-state towards the spine and distance-vector towards the leaves. RIFT uses this hybrid approach to focus on networks with regular topologies, a high degree of connectivity, a defined directionality, and large scale.

The RIFT protocol:

Provides automatic construction of fat-tree topologies based on detection of links,

Minimizes the amount of routing state held at each topology level,

Automatically prunes topology distribution exchanges to a sufficient subset of links,

Supports automatic disaggregation of prefixes on link and node failures to prevent black-holing and sub-optimal routing,

Allows traffic steering and re-routing policies, and

Provides mechanisms to synchronize a limited key-value data-store that can be used after protocol convergence.

Nodes participating in the protocol need only very light configuration and should be able to join a network as leaf nodes simply by connecting to the network using default configuration.
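The automatic disaggregation mentioned in the list above can be pictured with a heavily simplified Python model of a two-level fabric. This is a sketch under stated assumptions, not the protocol's actual algorithm or data structures: the function name, the dictionary layout, and the node names and prefixes are invented for illustration. Each spine normally advertises only a default route towards the leaves; when one spine loses its link to a leaf, the spines that can still reach that leaf advertise its prefix specifically, so traffic is steered away from the spine that would black-hole it.

```python
from typing import Dict, Set

DEFAULT_ROUTE = "0.0.0.0/0"


def southbound_advertisements(
    spine_links: Dict[str, Set[str]],
    leaf_prefixes: Dict[str, str],
) -> Dict[str, Set[str]]:
    """Return the prefixes each spine advertises towards the leaves.

    spine_links maps a spine name to the leaves it currently reaches;
    leaf_prefixes maps a leaf name to the prefix attached to it.
    """
    all_spines = set(spine_links)
    # Normal case: every spine advertises just a default route.
    adverts = {spine: {DEFAULT_ROUTE} for spine in all_spines}
    for leaf, prefix in leaf_prefixes.items():
        reaching = {s for s, leaves in spine_links.items() if leaf in leaves}
        # If only some spines still reach the leaf, those spines add the
        # more specific prefix so leaves stop using the broken spine.
        if reaching and reaching != all_spines:
            for spine in reaching:
                adverts[spine].add(prefix)
    return adverts


# Made-up example fabric: two spines, two leaves.
LEAF_PREFIXES = {"leaf1": "192.0.2.0/25", "leaf2": "192.0.2.128/25"}
HEALTHY = {"spine1": {"leaf1", "leaf2"}, "spine2": {"leaf1", "leaf2"}}
# spine1 has lost its link to leaf2.
DEGRADED = {"spine1": {"leaf1"}, "spine2": {"leaf1", "leaf2"}}
```

With `HEALTHY` links both spines advertise only the default route; with `DEGRADED` links, `spine2` additionally advertises `192.0.2.128/25`, and longest-prefix matching on the leaves then prefers it for traffic towards `leaf2`.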

For more information on RIFT see https://datatracker.ietf.org/doc/draft-ietf-rift-rift/.

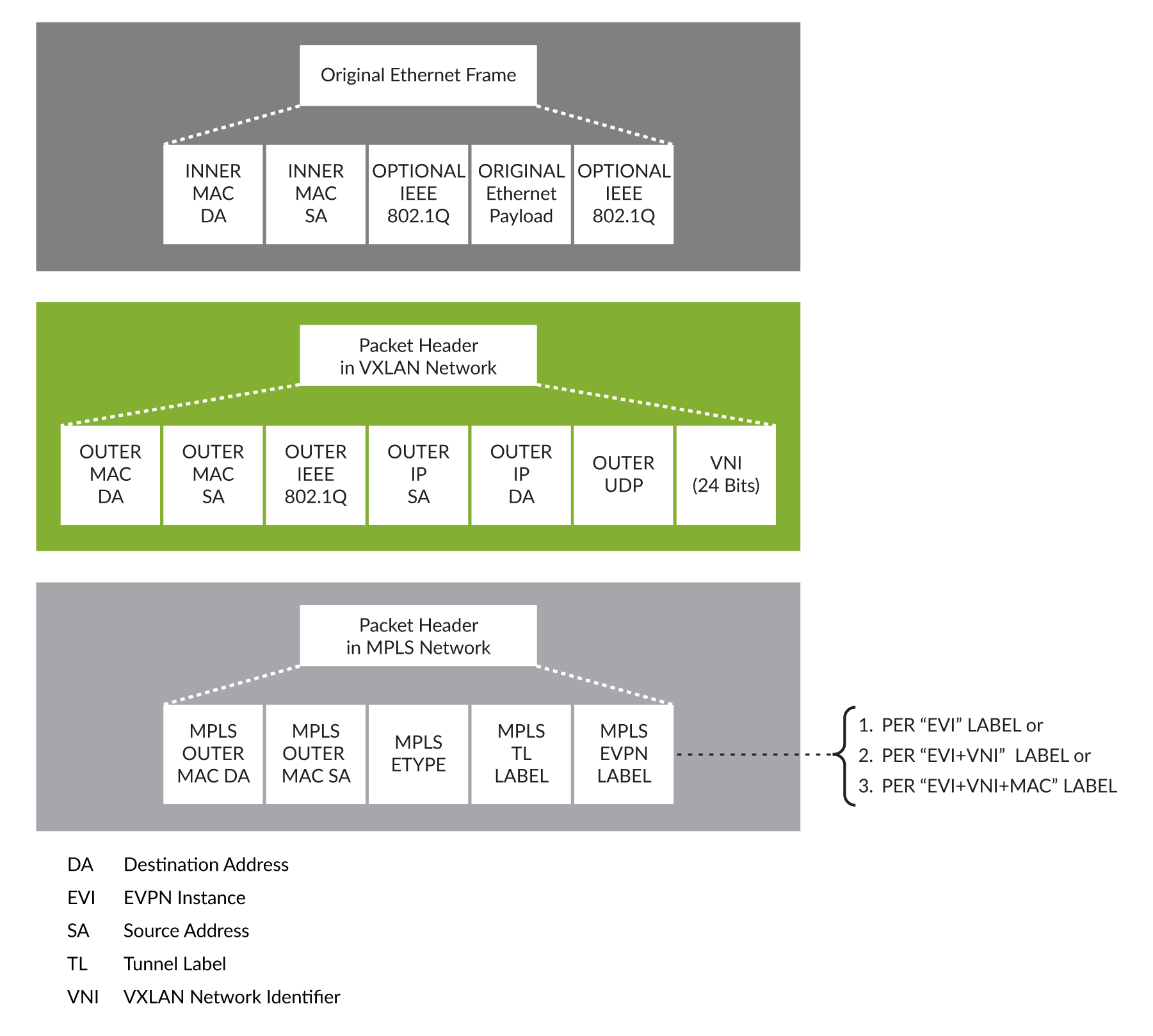

VXLAN

VXLAN is a MAC in IP/UDP (MAC-in-UDP) encapsulation technique with a 24-bit segment identifier in the form of a VXLAN ID. The larger VXLAN ID allows LAN segments to scale to 16 million in a cloud network. In addition, the IP/UDP encapsulation allows each LAN segment to be extended across existing Layer 3 networks making use of Layer 3 ECMP.

VXLAN provides a way to extend Layer 2 networks across Layer 3 infrastructure using MAC-in-UDP encapsulation and tunneling. VXLAN enables flexible workload placements using the Layer 2 extension. It can also be an approach to building a multi-tenant data center by decoupling tenant Layer 2 segments from the shared transport network.

VXLAN has the following benefits:

Flexible placement of multi-tenant segments throughout the data center.

VXLAN provides a way to extend Layer 2 segments over the underlying shared network infrastructure so that tenant workloads can be placed across physical pods in the data center.

Higher scalability to address more Layer 2 segments.

VXLAN uses a 24-bit segment ID, the VXLAN network identifier (VNI). This allows a maximum of about 16 million (2^24 = 16,777,216) VXLAN segments to coexist in the same administrative domain. (In comparison, traditional VLANs use a 12-bit segment ID that can support a maximum of 4096 VLANs.)

Utilization of available network paths in the underlying infrastructure.

VXLAN packets are forwarded through the underlying network based on their outer Layer 3 header, so the underlay can use ECMP routing and link aggregation to spread traffic across all available paths.
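One way the encapsulation helps the underlay use several paths is by varying the outer UDP source port per inner flow, so that ECMP hashing on the outer headers sees different values for different flows. The Python sketch below illustrates the idea; the function name, the choice of inner fields, and the CRC32 hash are assumptions for this example, and actual implementations choose their own hash inputs and functions.

```python
import zlib

# Dynamic/private port range commonly used for the outer source port.
PORT_LOW = 49152
PORT_HIGH = 65535


def outer_udp_source_port(
    inner_src_mac: str, inner_dst_mac: str, inner_ethertype: int
) -> int:
    """Derive a stable outer UDP source port from inner frame fields.

    The same inner flow always yields the same port (no reordering),
    while different flows tend to get different ports, which gives the
    underlay's ECMP hash something to spread on.
    """
    key = "|".join(
        [inner_src_mac.lower(), inner_dst_mac.lower(), format(inner_ethertype, "04x")]
    ).encode()
    span = PORT_HIGH - PORT_LOW + 1
    return PORT_LOW + zlib.crc32(key) % span


# Example inner frame: made-up MAC addresses, EtherType 0x0800 (IPv4).
EXAMPLE_PORT = outer_udp_source_port(
    "00:00:5e:00:53:01", "00:00:5e:00:53:02", 0x0800
)
```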

VXLAN is often described as an overlay technology because it allows you to stretch Layer 2 connections over an intervening Layer 3 network by encapsulating (tunneling) Ethernet frames in a VXLAN packet that includes IP addresses. Devices that support VXLANs are called virtual tunnel endpoints (VTEPs); they can be end hosts, network switches, or routers. VTEPs encapsulate traffic as it enters the VXLAN tunnel and de-encapsulate it when it leaves the tunnel. To encapsulate an Ethernet frame, VTEPs add a number of fields, including the following:

Outer media access control (MAC) destination address (MAC address of the tunnel endpoint VTEP)

Outer MAC source address (MAC address of the tunnel source VTEP)

Outer IP destination address (IP address of the tunnel endpoint VTEP)

Outer IP source address (IP address of the tunnel source VTEP)

Outer UDP header

A VXLAN header that includes a 24-bit field, called the VXLAN network identifier (VNI), that is used to uniquely identify the VXLAN. The VNI is similar to a VLAN ID, but having 24 bits allows you to create many more VXLANs than VLANs.
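The last item in the list, the VXLAN header itself, is small enough to sketch in a few lines of Python. The layout assumed here is the 8-byte header defined in RFC 7348: 8 flag bits (with the I flag, 0x08, marking the VNI as valid), 24 reserved bits, the 24-bit VNI, and 8 more reserved bits. The function names are made up for this example, and the outer Ethernet, IP, and UDP headers are deliberately left out.

```python
import struct

VXLAN_UDP_PORT = 4789      # IANA-assigned destination port for VXLAN
VNI_MAX = 2**24 - 1        # largest value that fits in the 24-bit VNI
FLAG_VNI_VALID = 0x08      # "I" flag: the VNI field carries a valid value


def pack_vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for the given VNI."""
    if not 0 <= vni <= VNI_MAX:
        raise ValueError("VNI must fit in 24 bits")
    # Word 0: flags in the top 8 bits, then 24 reserved bits (zero).
    # Word 1: VNI in the top 24 bits, then 8 reserved bits (zero).
    return struct.pack("!II", FLAG_VNI_VALID << 24, vni << 8)


def unpack_vni(header: bytes) -> int:
    """Extract the VNI from an 8-byte VXLAN header."""
    if len(header) != 8:
        raise ValueError("VXLAN header must be exactly 8 bytes")
    word0, word1 = struct.unpack("!II", header)
    if not (word0 >> 24) & FLAG_VNI_VALID:
        raise ValueError("I flag not set, VNI is not valid")
    return word1 >> 8


print(pack_vxlan_header(5000).hex())  # -> 0800000000138800
```

The 24-bit field is also where the segment count comes from: `VNI_MAX + 1` is 16,777,216, compared with 4096 values for a 12-bit VLAN ID.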

For more information see Understanding EVPN with VXLAN Data Plane Encapsulation: https://www.juniper.net/documentation/en_US/junos/topics/concept/evpn-vxlan-data-plane-encapsulation.html.