Overview of IP Clos Fabrics for Campus Networks

About This Network Configuration Example

This network configuration example (NCE) describes how to deploy an IP Clos architecture to support a campus networking environment. The use case shows how you can deploy a single campus fabric that uses EVPN in the control plane and VXLAN tunnels in the overlay network, integrated with Juniper Mist Access Points.

Use Case Overview

Enterprise networks are undergoing massive transitions to accommodate the growing demand for cloud-ready networks and the plethora of IoT and mobile devices. As the number of devices grows, so does network complexity with an ever greater need for scalability and segmentation. To meet these challenges, you need a network with increased scalability and operational simplification. IP Clos networks provide increased scalability and segmentation using a well-understood standards-based approach.

Most traditional campus architectures use single-vendor, chassis-based technologies that work well in small, static campuses with few endpoints. However, they are too rigid to support the scalability and changing needs of modern large enterprises.

A Juniper Networks EVPN-VXLAN fabric is a highly scalable architecture that is simple, programmable, and built on standards that are common across campuses and data centers.

The EVPN-VXLAN campus architecture uses a Layer 3 IP-based underlay network and an EVPN-VXLAN overlay network. The simple IP-based Layer 3 network underlay limits the Layer 2 broadcast domain and eliminates the need for spanning tree protocols (STP). A flexible overlay network based on VXLAN tunnels combined with an EVPN control plane efficiently provides Layer 3 or Layer 2 connectivity.

This architecture decouples the virtual topology from the physical topology, which improves network flexibility and simplifies network management. Endpoints that require Layer 2 adjacency, such as IoT devices, can be placed anywhere in the network and remain connected to the same logical Layer 2 network.

With an EVPN-VXLAN campus architecture, you can easily add core, distribution, and access layer devices as your business grows without having to redesign the network. EVPN-VXLAN is vendor-agnostic, so you can use the existing access layer infrastructure and gradually migrate to access layer switches that support EVPN-VXLAN capabilities.

Benefits of Campus Fabric: IP Clos

With an increasing number of devices connecting to the network, you need to scale your campus network rapidly without adding complexity. Many IoT devices have limited networking capabilities and require Layer 2 adjacency across buildings and campuses. Traditionally, this problem was solved by extending VLANs between endpoints using data plane-based flood and learn mechanisms. This approach is inefficient because it uses excessive network bandwidth. It is also difficult to manage because you need to manually configure and extend VLANs to new network ports. This problem multiplies when you consider the explosive growth of IoT and mobile devices.

The benefit of having an IP Clos network is that you can easily connect a number of switches in an IP Clos network or campus fabric. IP Clos extends the EVPN fabric to connect VLANs across multiple buildings by stretching the Layer 2 VXLAN network with routing occurring in the access device. The IP Clos network encompasses the distribution, core, and access layers of your topology.

An EVPN-VXLAN fabric solves these issues and provides the following benefits:

Reduced flooding and learning—Control plane-based Layer 2/Layer 3 learning reduces the flood and learn issues associated with data plane learning. Learning MAC addresses in the forwarding plane has an adverse impact on network performance as the number of endpoints grows. The EVPN control plane handles the exchange and learning of routes, so newly learned MAC addresses are not exchanged in the forwarding plane.

Scalability—Faster control plane-based Layer 2/Layer 3 learning allows the EVPN-VXLAN network to scale up to support a larger number of mobile devices.

Consistent network—A universal EVPN-VXLAN-based architecture across campuses and data centers means a consistent end-to-end network for endpoints and applications. In addition, you can enable microsegmentation and macrosegmentation with EVPN-VXLAN to minimize Layer 2 flooding, reduce security threats, and simplify the network.

Location-agnostic connectivity—The EVPN-VXLAN campus architecture provides a consistent endpoint experience no matter where the endpoint is located. Some endpoints require Layer 2 reachability, such as legacy building security systems or IoT devices. The Layer 2 VXLAN overlay provides Layer 2 reachability across campuses without any changes to the underlay network. With our standards-based network access control integration, an endpoint can be connected anywhere in the network.

Technical Overview

- Understanding VXLAN

- VXLAN Control Plane Limitations

- Understanding EVPN

- Underlay Network

- Overlay Network Control Plane

- Overlay Data Plane

- Access Layer

- Juniper Access points

- Campus IP Clos Fabric High Level Architecture

Understanding VXLAN

Network overlays are created by encapsulating traffic and tunneling it over a physical network. The Virtual Extensible LAN (VXLAN) tunneling protocol encapsulates Layer 2 Ethernet frames in Layer 4 UDP datagrams that are themselves encapsulated into IP for transport over the underlay. VXLAN enables virtual Layer 2 subnets (or VLANs) that can span the underlying physical Layer 3 network.

In a VXLAN overlay network, each Layer 2 subnet or segment is uniquely identified by a virtual network identifier (VNI). A VNI segments traffic the same way that a VLAN ID does. As is the case with VLANs, endpoints within the same virtual network can communicate directly with each other. Endpoints in different virtual networks require a device that supports inter-VXLAN routing, which is typically a router or a high-end switch.

The entity that performs VXLAN encapsulation and decapsulation is called a VXLAN tunnel endpoint (VTEP). Each VXLAN tunnel endpoint is assigned a unique IP address. Normally these VTEP addresses match the device’s loopback address.
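The encapsulation step can be illustrated with a minimal Python sketch of the 8-byte VXLAN header, assuming the layout defined in RFC 7348 (a flags byte with the VNI-valid bit, 24 reserved bits, a 24-bit VNI, and 8 reserved bits). The function names are hypothetical and the outer UDP, IP, and Ethernet headers are left to the sending stack. This is not how a Juniper switch implements a VTEP in hardware.

```python
import struct

VXLAN_UDP_PORT = 4789         # IANA-assigned destination UDP port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08   # "I" flag: the VNI field carries a valid value


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) followed by 24 reserved bits.
    # Word 2: VNI (24 bits) followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes of a VXLAN payload."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Prepend the VXLAN header; the result is the UDP payload."""
    return build_vxlan_header(vni) + inner_frame
```

A receiving VTEP would call `parse_vni` on the UDP payload to decide which virtual network the inner Ethernet frame belongs to.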

VXLAN Control Plane Limitations

VXLAN can be deployed as a tunneling protocol across a Layer 3 IP fabric data center without a control plane protocol. However, the use of VXLAN tunnels alone does not change the flood and learn behavior of the Ethernet protocol, which has inherent limitations in terms of scalability and efficiency.

The two primary methods for using VXLAN without a control plane protocol—static unicast VXLAN tunnels and VXLAN tunnels that are signaled with a multicast underlay—do not solve the inherent flood and learn problem and are difficult to scale in large multitenant environments. An EVPN control plane provides a scalable solution for the flood and learn problems with Ethernet.
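The difference between data plane learning and control plane learning can be sketched with a deliberately simplified Python model. The VTEP names and MAC address are hypothetical, and the model ignores aging, broadcast, and multicast traffic. It only shows how many remote VTEPs receive a copy of a frame when the destination MAC address is unknown versus already advertised.

```python
# Hypothetical VTEP names and MAC address, used only for illustration.
REMOTE_VTEPS = ["vtep-b", "vtep-c", "vtep-d"]
HOST_MAC = "00:00:5e:00:53:01"


def receivers(mac_table, dst_mac, remote_vteps):
    """Return the remote VTEPs that get a copy of a frame sent to dst_mac."""
    if dst_mac in mac_table:
        return [mac_table[dst_mac]]   # known MAC: one unicast tunnel
    return list(remote_vteps)         # unknown MAC: replicate to every peer


# Flood and learn: the table is empty until data traffic from the host is seen,
# so the first frame is replicated to every remote VTEP.
flood_table = {}
flooded_copy_targets = receivers(flood_table, HOST_MAC, REMOTE_VTEPS)

# EVPN-style learning: the VTEP that owns the host advertises the MAC address
# as a route, so the table is populated before the first data frame is sent.
evpn_table = {HOST_MAC: "vtep-c"}
evpn_copy_targets = receivers(evpn_table, HOST_MAC, REMOTE_VTEPS)
```

In this model the flood and learn case sends three copies of the first frame, while the control plane case sends one. The gap widens as the number of VTEPs in the segment grows.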

Understanding EVPN

Ethernet VPN (EVPN) is a standards-based protocol that provides virtual multipoint bridged connectivity between different domains over an IP or IP/MPLS backbone network. EVPN enables seamless multitenant, flexible services that can be extended on demand.

EVPN leverages BGP signaling to allow the network to carry both Layer 2 MAC and Layer 3 IP information simultaneously to optimize routing and switching decisions. This control plane technology uses Multiprotocol BGP (MP-BGP) for MAC and IP address endpoint distribution, where MAC addresses are treated as routes. EVPN enables devices acting as VTEPs to exchange reachability information with each other about their endpoints.

EVPN provides multipath forwarding and redundancy through an all-active model. The access layer can connect to two or more distribution devices and forward traffic using all of the links. If an access link or distribution device fails, traffic flows from the access layer toward the distribution layer using the remaining active links. For traffic in the other direction, remote distribution devices update their forwarding tables to send traffic to the remaining active distribution devices connected to the multihomed Ethernet segment.

The benefits of using EVPNs include:

MAC address mobility

Multitenancy

Load balancing across multiple links

Fast convergence

The technical capabilities of EVPN include:

Minimal flooding—EVPN creates a control plane that shares end host MAC addresses between VTEPs in the same EVPN segment, which minimizes flooding and facilitates MAC address learning.

Multihoming—EVPN supports multihoming for client devices. A control protocol like EVPN that enables synchronization of endpoint addresses between the distribution switches is needed to support multihoming, because traffic traveling across the topology needs to be intelligently moved across multiple paths.

Aliasing—EVPN leverages all-active multihoming to allow a remote distribution device to load-balance traffic across the network toward the access layer.

Split horizon—Split horizon prevents the looping of broadcast, unknown unicast, and multicast (BUM) traffic in a network. With split horizon, a packet is never sent back over the same interface it was received on.
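As a rough illustration of aliasing, the Python sketch below models a remote device that knows several VTEPs attached to the same multihomed Ethernet segment and spreads flows across them with a hash. The flow tuple, the VTEP names, and the use of CRC32 as the hash are assumptions for this example. Real switches use their own hardware hashing, and this sketch does not model EVPN route types.

```python
import zlib


def pick_next_hop(flow, vteps):
    """Pick one VTEP for a flow by hashing the flow tuple deterministically."""
    if not vteps:
        raise ValueError("no VTEP is advertising this Ethernet segment")
    key = "|".join(str(field) for field in flow).encode()
    candidates = sorted(vteps)  # stable order so every lookup agrees
    return candidates[zlib.crc32(key) % len(candidates)]


# Hypothetical segment reachable through two distribution-layer VTEPs.
segment_vteps = {"dist1", "dist2"}
flow = ("10.1.1.10", "10.1.2.20", 6, 49152, 443)  # src, dst, proto, sport, dport

chosen = pick_next_hop(flow, segment_vteps)

# If dist1 fails and its advertisement is withdrawn, the same flow is
# re-hashed onto the remaining VTEP.
after_failure = pick_next_hop(flow, segment_vteps - {"dist1"})
```

Because the hash is computed per flow, packets of one flow stay on one path while different flows can use different links toward the access layer.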

Underlay Network

An EVPN-VXLAN fabric architecture makes the network infrastructure simple and consistent across campuses and data centers. All the core and distribution devices must be connected to each other using a Layer 3 infrastructure. We recommend deploying a Clos-based IP fabric with a spine-leaf-based topology to ensure predictable performance and to enable a consistent, scalable architecture.

The primary requirement in the underlay network is that all core and distribution devices have loopback reachability to one another. The loopback addresses are used to establish IBGP peering relationships used to exchange EVPN routes in the overlay network.

You can use any Layer 3 routing protocol to exchange loopback addresses between the access, core, and distribution devices. BGP provides benefits like better prefix filtering, traffic engineering, and route tagging, while OSPF is relatively simple to configure and troubleshoot.

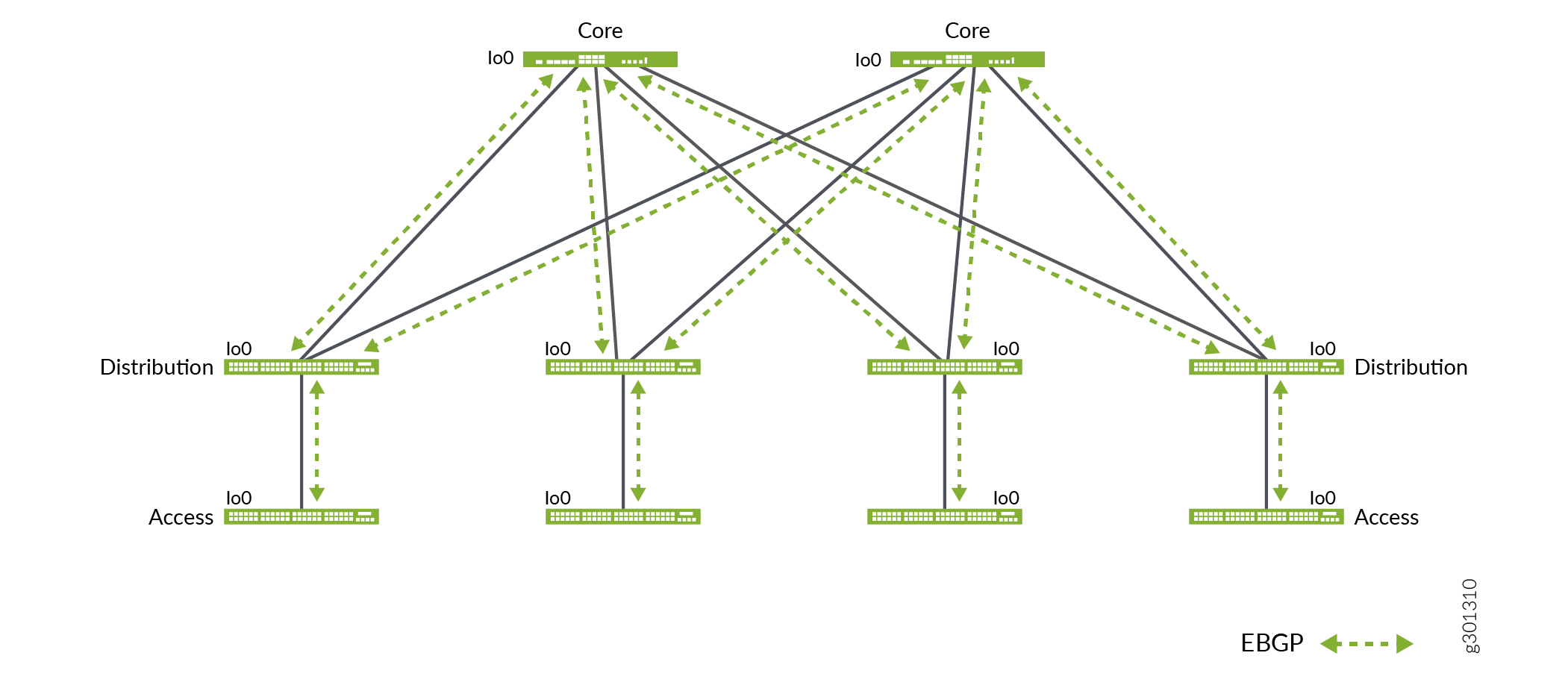

We are using EBGP as the underlay routing protocol in this example because of its ease of use. Figure 1 shows the topology of the underlay network.
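The loopback reachability requirement can be sanity-checked on paper before any routing protocol is configured. The Python sketch below assumes a hypothetical topology with two core, two distribution, and two access devices, and uses a breadth-first search to list device pairs that have no physical path between them. Physical connectivity is only a necessary condition: the underlay routing protocol still has to advertise the loopback addresses.

```python
from collections import deque

# Hypothetical Clos topology; device names are placeholders.
LINKS = [
    ("core1", "dist1"), ("core1", "dist2"),
    ("core2", "dist1"), ("core2", "dist2"),
    ("dist1", "access1"), ("dist1", "access2"),
    ("dist2", "access1"), ("dist2", "access2"),
]
DEVICES = sorted({device for link in LINKS for device in link})


def build_adjacency(devices, links):
    """Map each device to the set of its directly connected neighbors."""
    adjacency = {device: set() for device in devices}
    for a, b in links:
        adjacency[a].add(b)
        adjacency[b].add(a)
    return adjacency


def reachable_from(start, adjacency):
    """Breadth-first search: return every device reachable from start."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


def unreachable_pairs(devices, links):
    """Return device pairs with no path between them (empty list if none)."""
    adjacency = build_adjacency(devices, links)
    ordered = sorted(devices)
    pairs = []
    for i, a in enumerate(ordered):
        seen = reachable_from(a, adjacency)
        pairs.extend((a, b) for b in ordered[i + 1:] if b not in seen)
    return pairs
```

Calling `unreachable_pairs(DEVICES, LINKS)` on the full topology returns an empty list. Removing both uplinks of one access switch makes every pair that includes that switch show up in the result.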

Overlay Network Control Plane

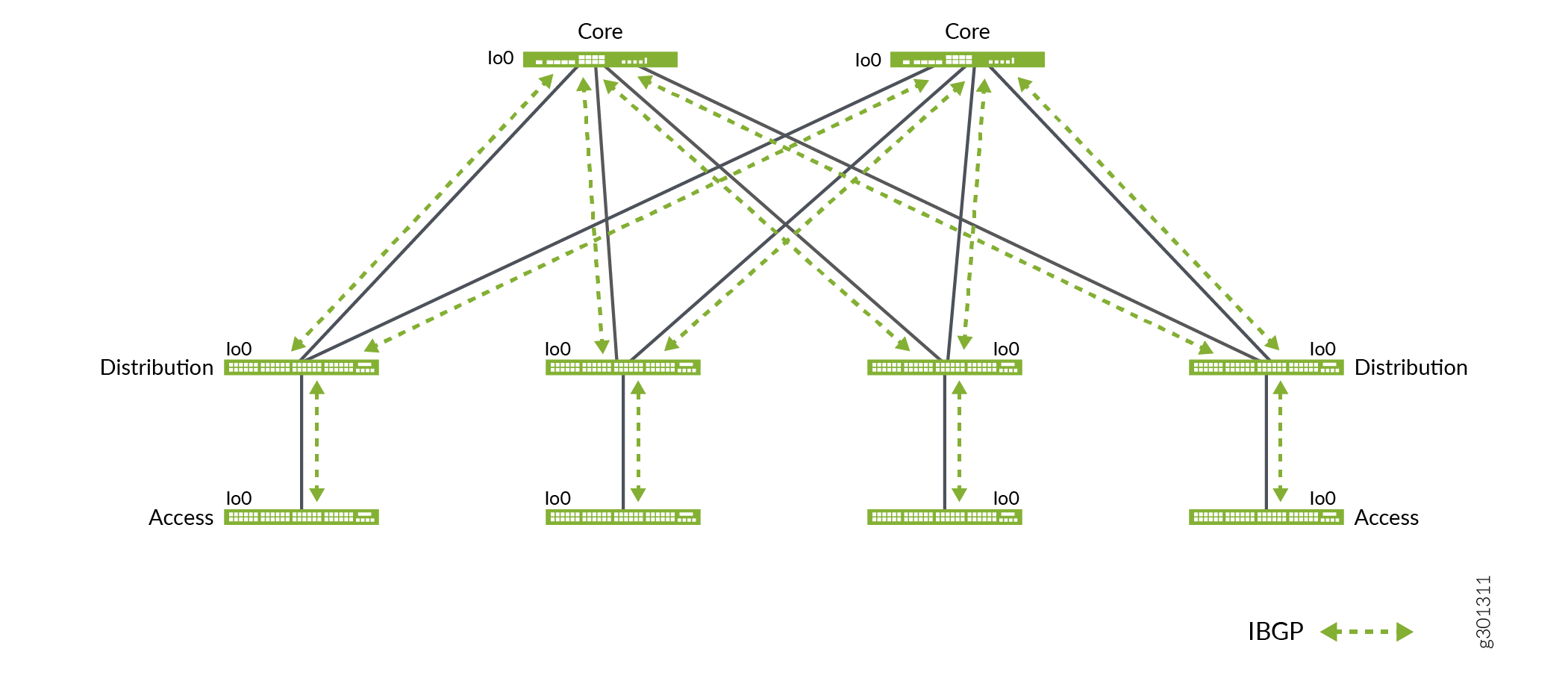

MP-BGP with EVPN signaling acts as the overlay control plane protocol. The core and distribution devices establish IBGP sessions between each other.

To eliminate the need for full mesh IBGP sessions between all devices, the core switches act as route reflectors with the access and distribution devices functioning as route reflector clients. Route reflectors enable simple and consistent IBGP configuration on all distribution switches and dramatically improve control plane scalability. In this example, we use hierarchical route reflectors. Figure 2 shows the topology of the overlay network.
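The scaling benefit of route reflection comes down to simple arithmetic: a full mesh of n IBGP speakers needs n(n-1)/2 sessions, while a route reflector design needs one session per client per reflector plus a mesh among the reflectors. The Python sketch below assumes a single tier of route reflectors for simplicity, which differs from the hierarchical design used in this example. The device counts are hypothetical.

```python
def full_mesh_sessions(speakers: int) -> int:
    """IBGP sessions needed when every speaker peers with every other one."""
    return speakers * (speakers - 1) // 2


def route_reflector_sessions(reflectors: int, clients: int) -> int:
    """Sessions with one tier of reflectors: each client peers with every
    reflector, and the reflectors are fully meshed among themselves."""
    return reflectors * clients + full_mesh_sessions(reflectors)


# Hypothetical fabric: 2 core reflectors, 4 distribution and 8 access clients.
reflectors, clients = 2, 12
mesh = full_mesh_sessions(reflectors + clients)               # 91 sessions
reflected = route_reflector_sessions(reflectors, clients)     # 25 sessions
```

Adding one more access switch to this hypothetical fabric adds 14 sessions in the full mesh case but only 2 in the route reflector case.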

Overlay Data Plane

This architecture uses VXLAN as the overlay data plane encapsulation protocol. A Juniper switch that functions as a Layer 2 or Layer 3 VXLAN gateway acts as the VTEP to encapsulate and decapsulate data packets.

Access Layer

The access layer provides network connectivity to end-user devices, such as personal computers, VoIP phones, printers, and IoT devices, as well as connectivity to wireless access points. In this IP Clos campus design, the EVPN-VXLAN network extends all the way to the access layer switches.

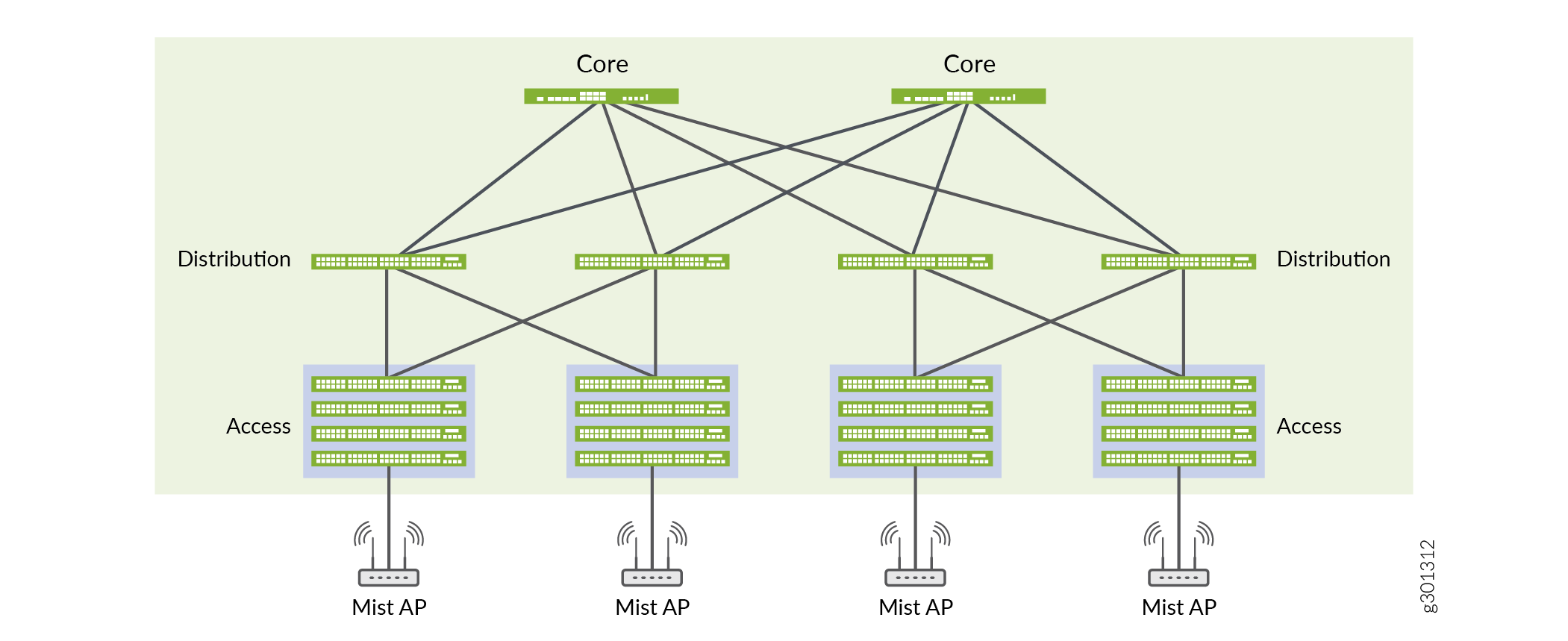

In this example, each access switch or Virtual Chassis is multihomed to two or more distribution switches. With EVPN running as the control plane protocol, any access switch or Virtual Chassis device can enable active-active multihoming on its interfaces. EVPN provides a standards-based multihoming solution that scales horizontally across any number of distribution layer switches.

Figure 3 shows the topology of the access layer devices after multihoming.

Juniper Access points

For this example, we choose Juniper access points as our preferred access point devices. They are designed from the ground up to meet the stringent networking needs of the modern cloud and smart-device era. Juniper Mist delivers unique capabilities for both wired and wireless LAN.

Wired and wireless assurance—Mist is enabled with wired and wireless assurance. Once configured, Service Level Expectations (SLE) for key wired and wireless performance metrics such as throughput, capacity, roaming, and uptime are addressed in the Mist platform. This NCE uses Mist wired assurance services.

Marvis—An integrated AI engine that provides rapid wired and wireless troubleshooting, trending analysis, anomaly detection, and proactive problem remediation.

Today’s IT departments look for a cohesive approach for managing wired and wireless networks. Juniper Networks offers a solution that simplifies and automates operations, provides end-to-end troubleshooting, and ultimately evolves into the Self-Driving Network™. The integration of the Mist platform in this NCE addresses both of these challenges. For more details on Mist integration and EX switches, see How to Connect Mist Access Points and Juniper EX Series Switches.

Campus IP Clos Fabric High Level Architecture

The campus fabric, with an EVPN-VXLAN architecture, decouples the overlay network from the underlay network. This approach addresses the needs of the modern enterprise network by allowing network administrators to create logical Layer 2 networks across one or more Layer 3 networks. By configuring different routing instances, you can enforce the separation of virtual networks because each routing instance has its own separate routing and switching table.

VXLAN is the overlay data plane encapsulation protocol that tunnels Ethernet frames between network endpoints over the Layer 3 IP network. Devices that perform VXLAN encapsulation and decapsulation for the network are referred to as VXLAN tunnel endpoints (VTEPs). Before a VTEP sends a frame into a VXLAN tunnel, it wraps the original frame in a VXLAN header that includes a virtual network identifier (VNI). The VNI maps the packet to the original VLAN at the ingress switch. After applying a VXLAN header, the frame is encapsulated into a UDP/IP packet for transmission to the remote VTEP over the IP fabric.
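Because each frame gains a VXLAN header plus outer UDP and IP headers, the underlay links need a larger MTU than the endpoints use. The Python sketch below works through that arithmetic, assuming an IPv4 underlay without IP options and standard header sizes. The function name and defaults are illustrative, and the sketch is not a recommendation for a specific MTU value on any platform.

```python
INNER_ETHERNET = 14   # inner Ethernet header carried inside the tunnel
VLAN_TAG = 4          # optional 802.1Q tag on the inner frame
VXLAN_HEADER = 8
OUTER_UDP = 8
OUTER_IPV4 = 20       # assumes IPv4 underlay with no IP options
OUTER_ETHERNET = 14   # untagged outer Ethernet header


def underlay_ip_mtu(endpoint_mtu: int = 1500, inner_vlan_tag: bool = False) -> int:
    """Smallest underlay IP MTU that carries an endpoint packet unfragmented."""
    inner_frame = endpoint_mtu + INNER_ETHERNET + (VLAN_TAG if inner_vlan_tag else 0)
    return inner_frame + VXLAN_HEADER + OUTER_UDP + OUTER_IPV4


# Total bytes added on the wire per frame, including the outer Ethernet header.
ENCAP_OVERHEAD = OUTER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER
```

With a 1500-byte endpoint MTU, `underlay_ip_mtu()` returns 1550, or 1554 if the inner frame keeps its VLAN tag. The per-frame overhead in `ENCAP_OVERHEAD` is 50 bytes.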

A campus fabric based on EVPN-VXLAN is a modern and scalable network that uses a BGP, OSPF, or IS-IS underlay from the core to the access layer switches. The access layer switches function as VTEPs that encapsulate and decapsulate the VXLAN traffic. In addition, these devices route and bridge packets in and out of VXLAN tunnels.

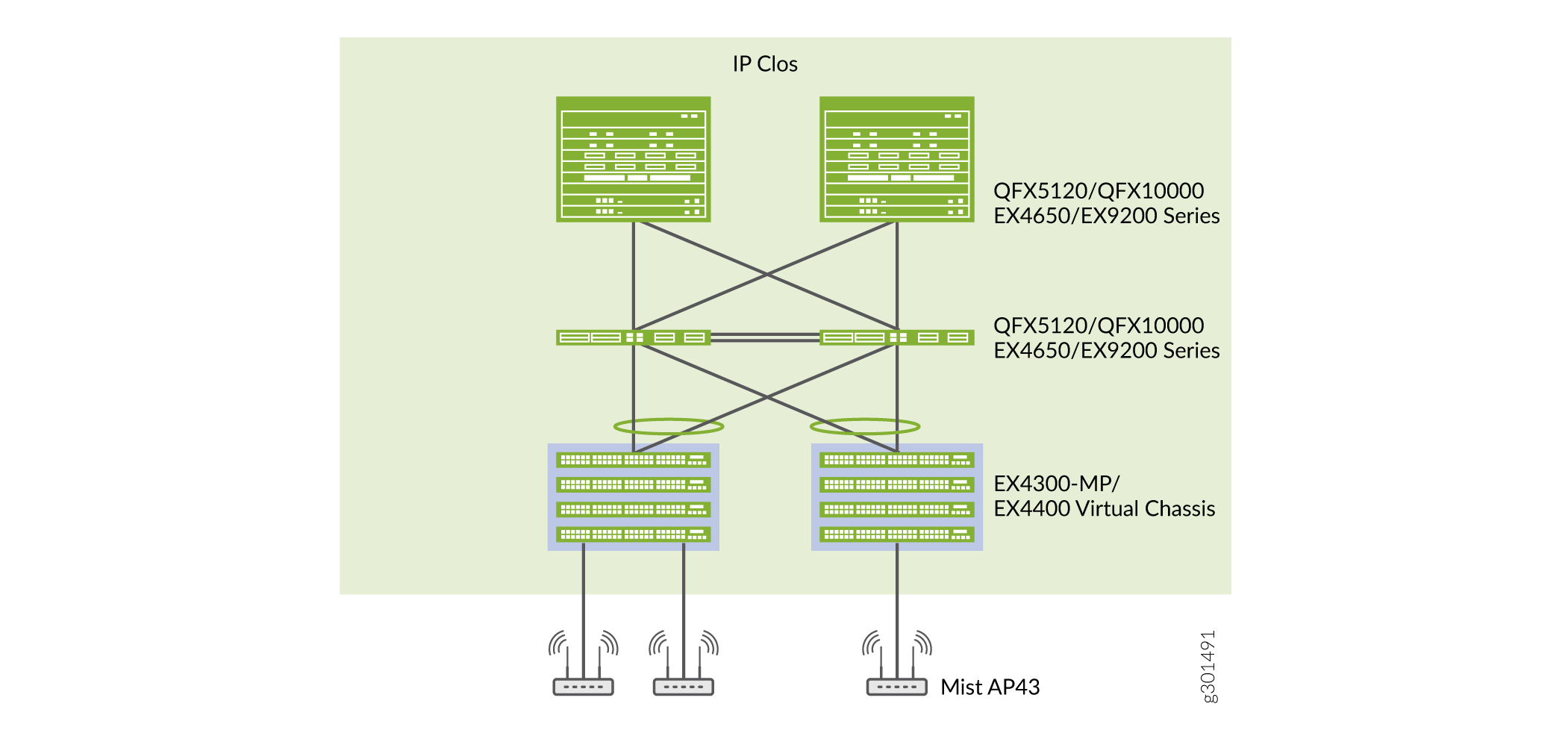

Figure 4 shows a Campus Fabric: IP Clos network with Juniper EX4300-MP, EX4650, EX9200, QFX5120, and QFX10000 switches.