Fabric Configuration Walkthrough Using Juniper Apstra

This section describes the steps to deploy the AI GPU Backend IP fabrics in the AI JVD lab, as an example of how to deploy a fabric using Juniper Apstra.

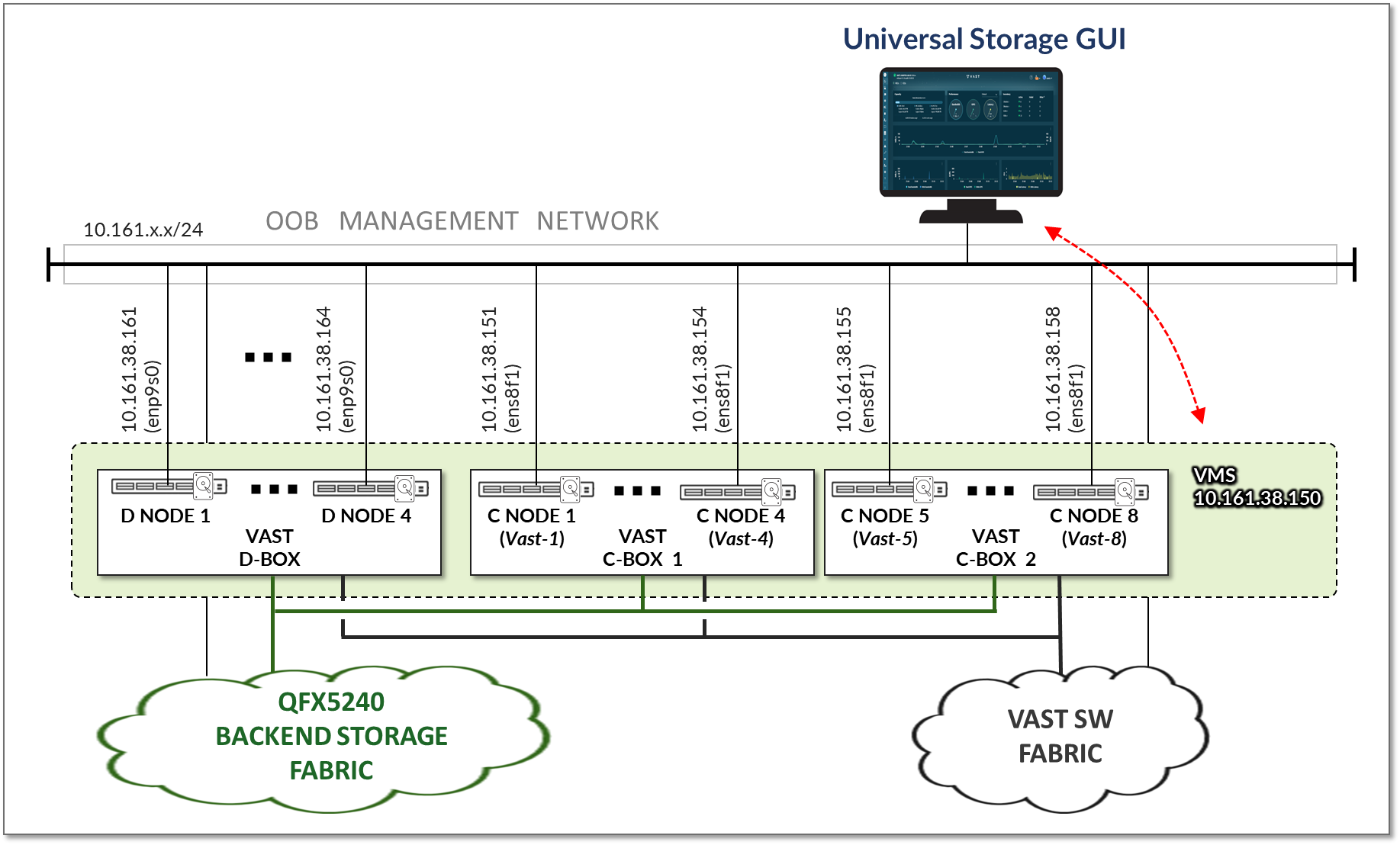

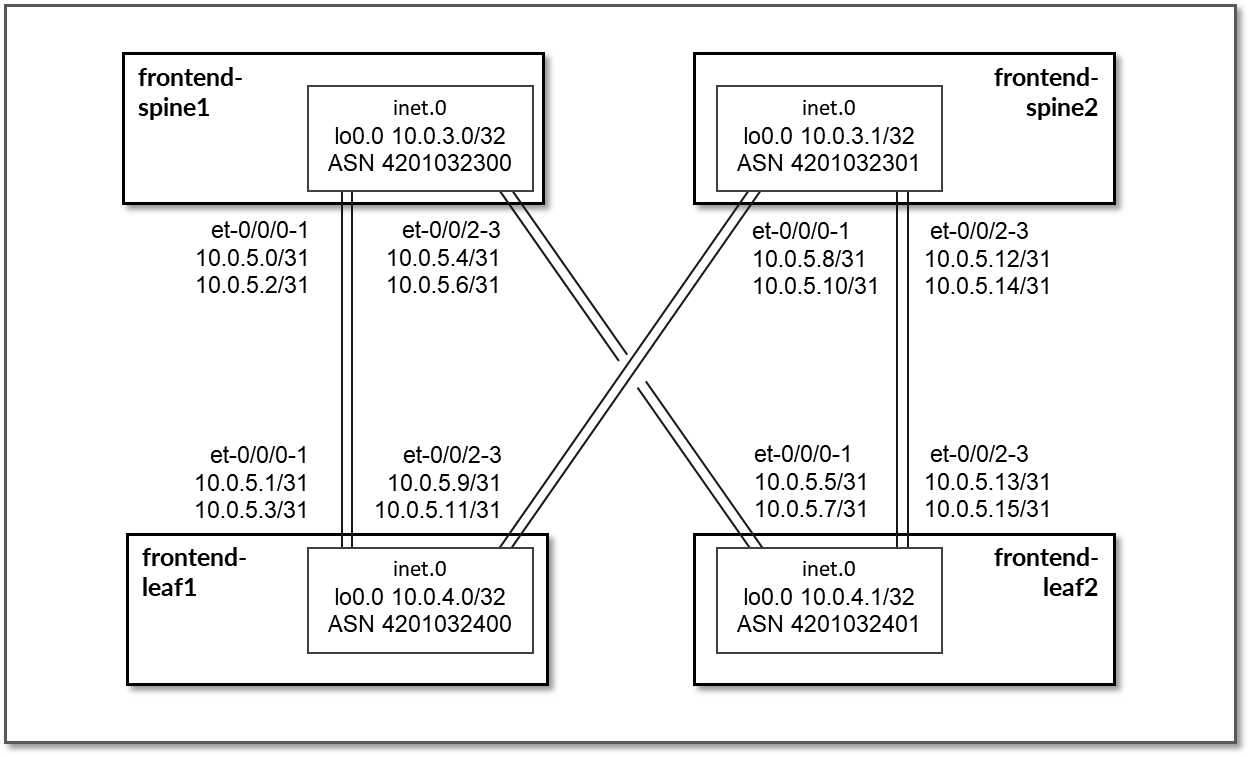

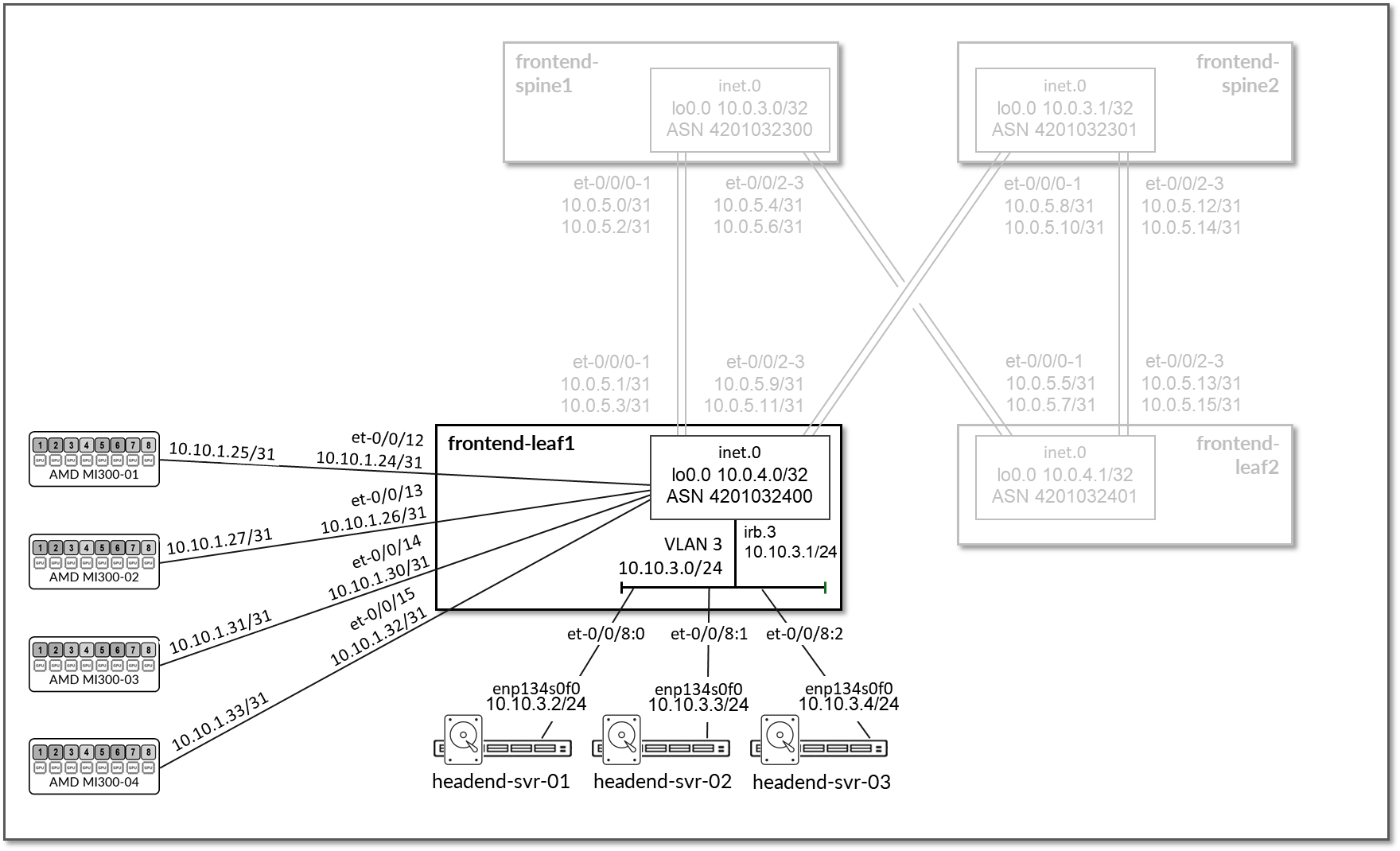

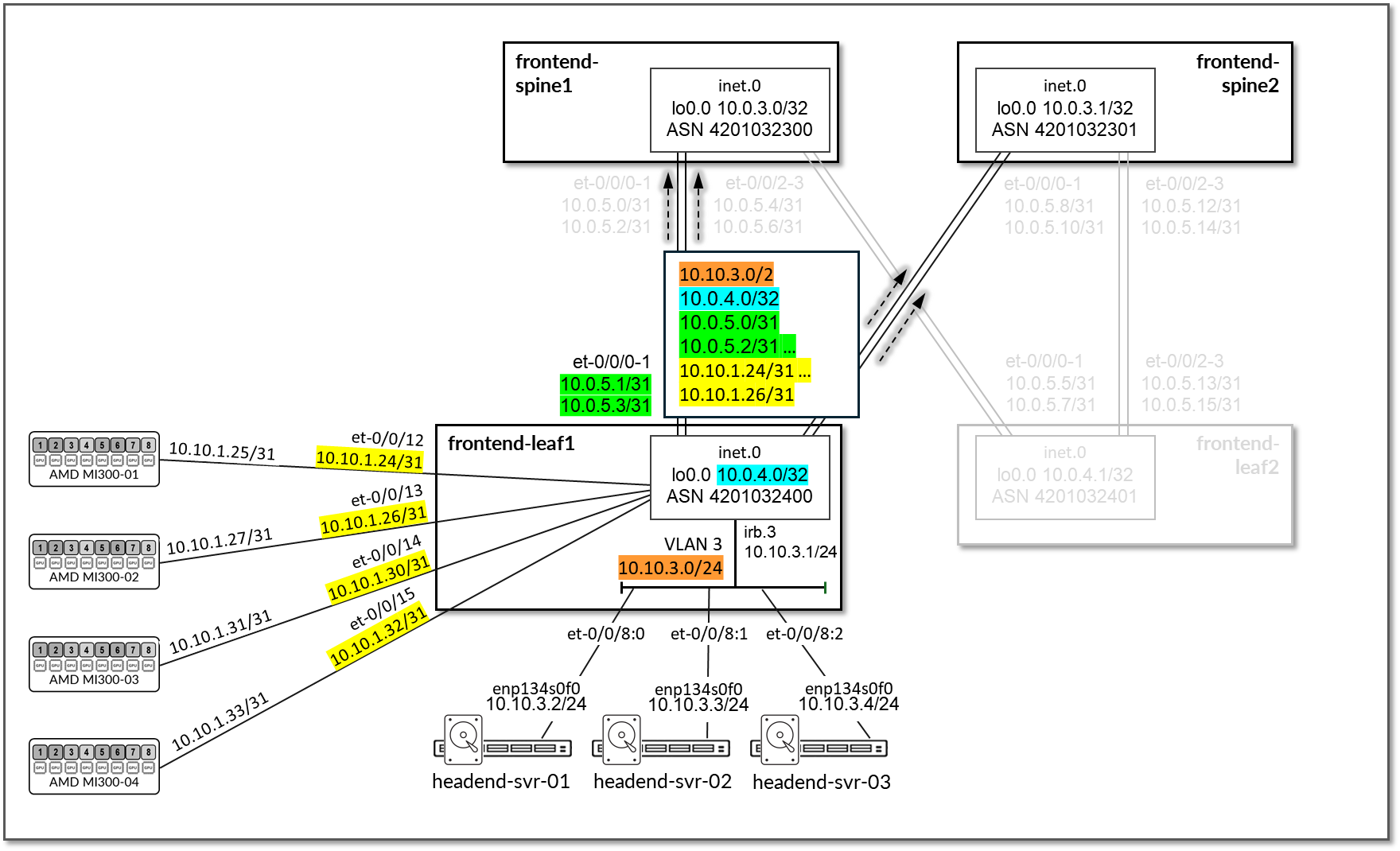

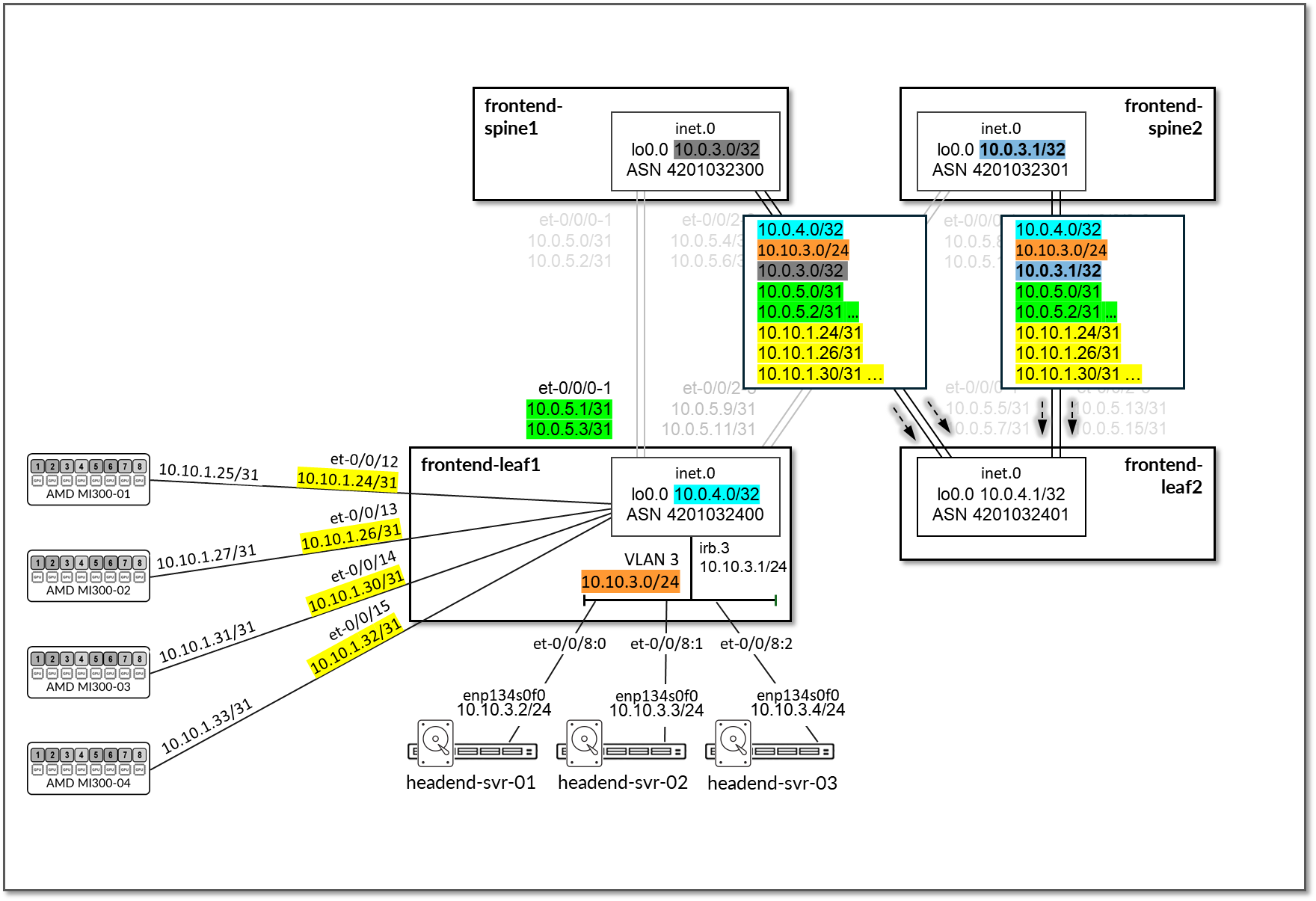

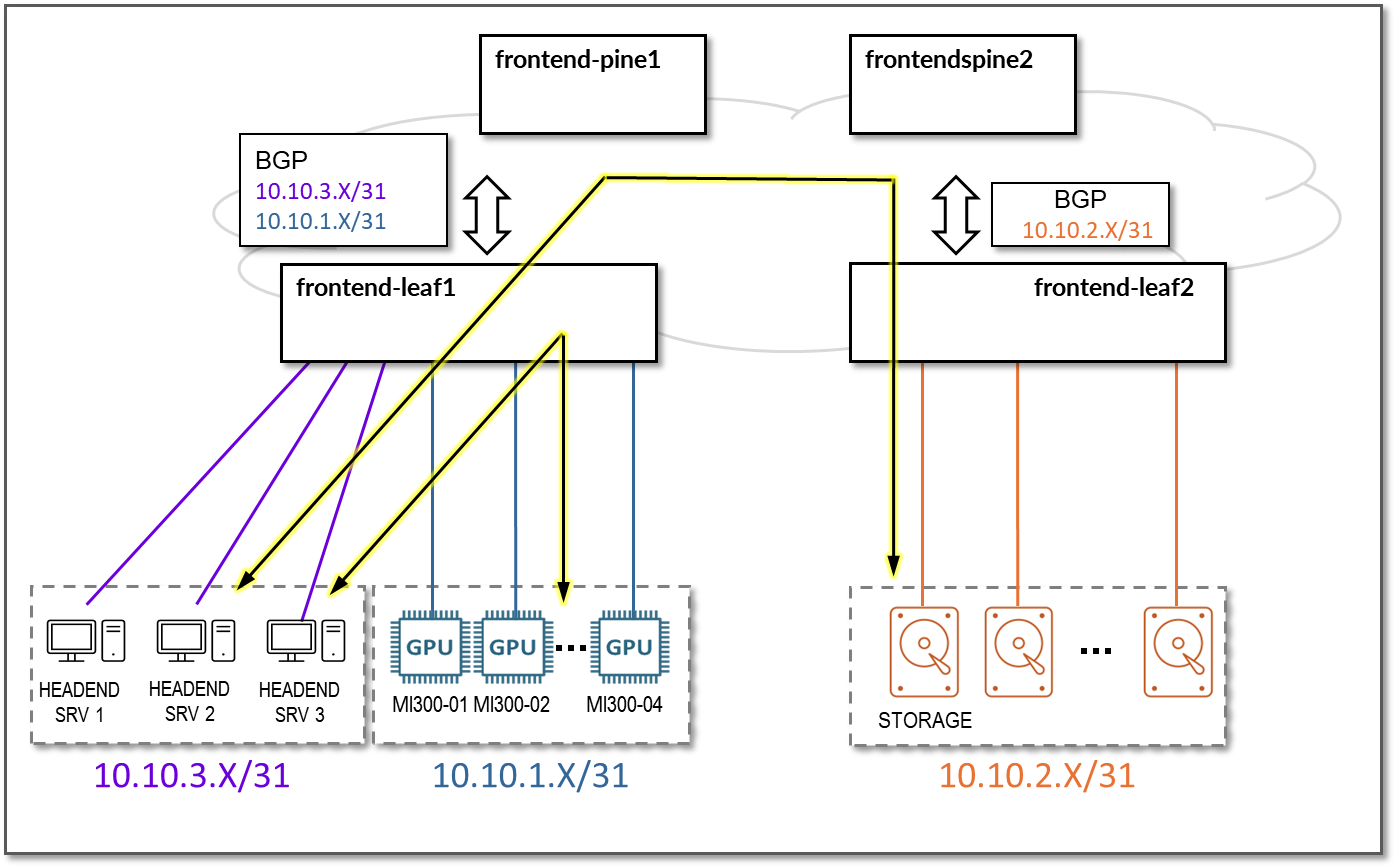

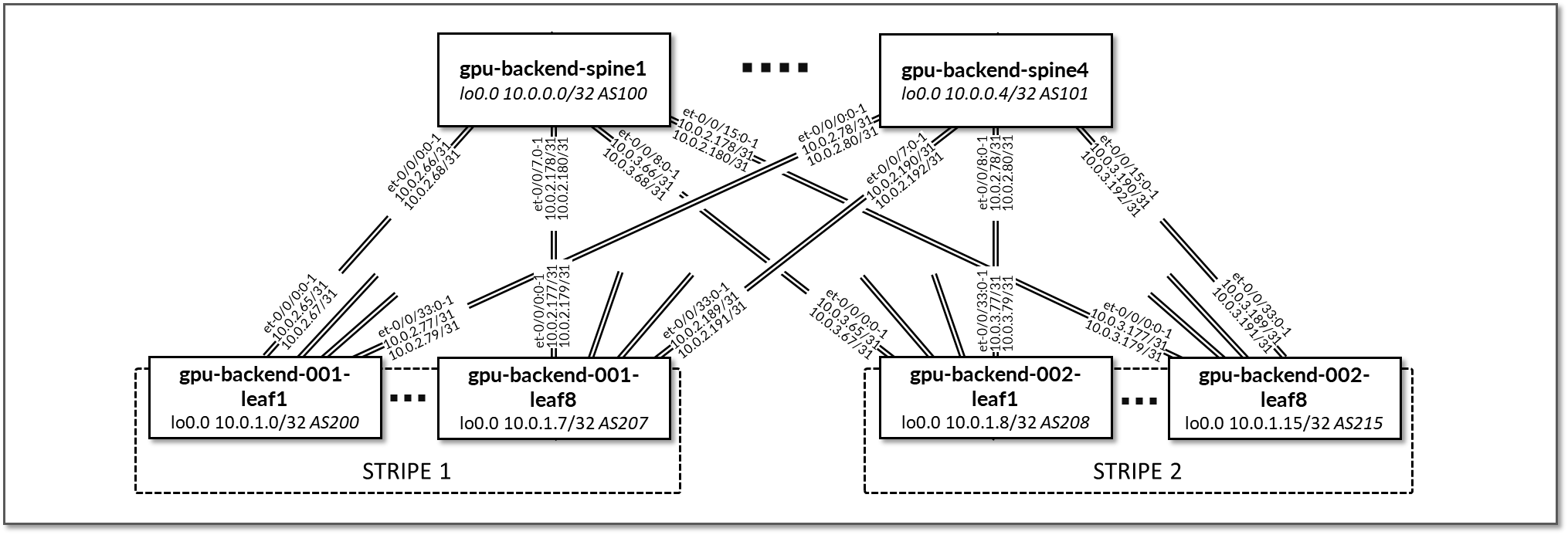

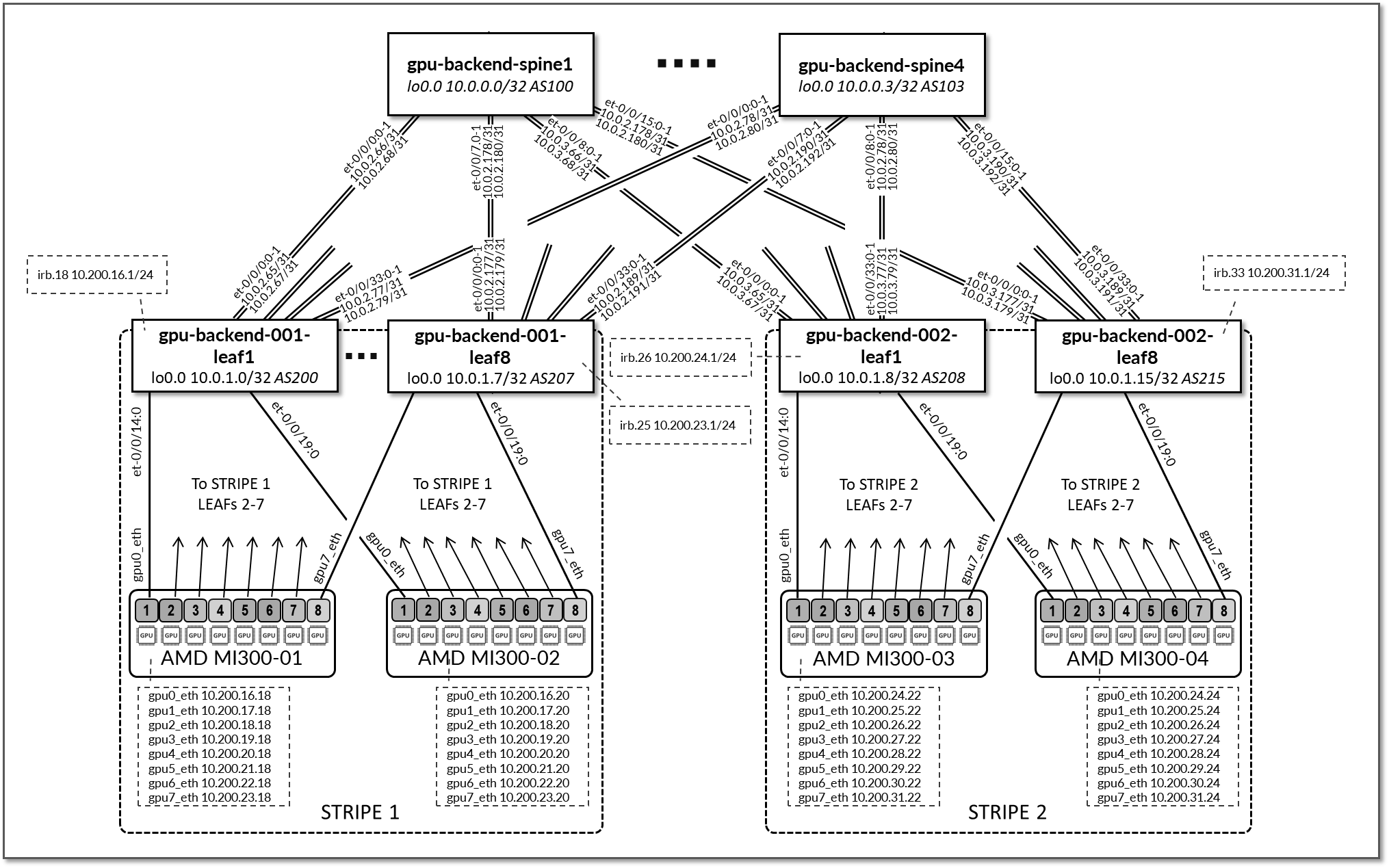

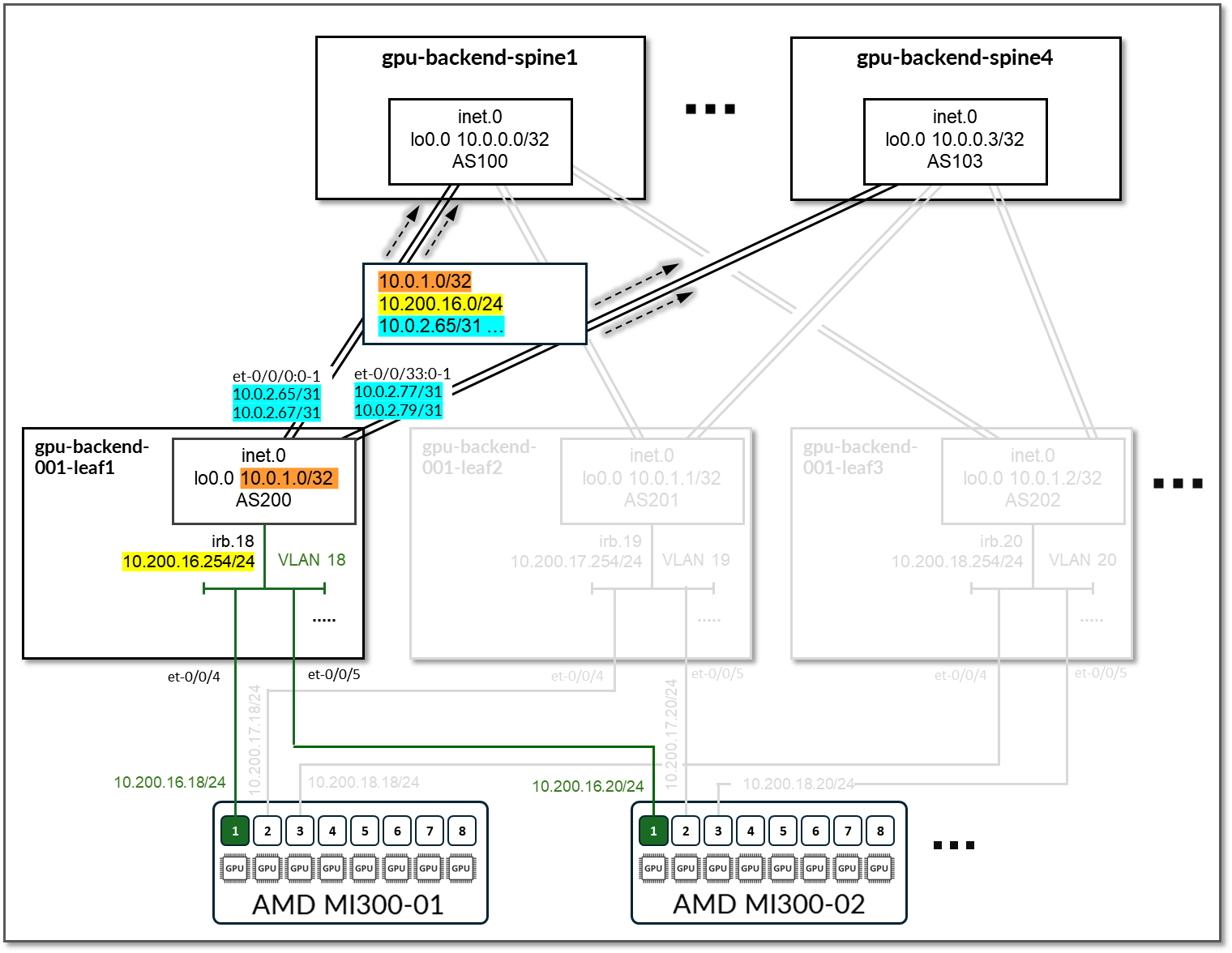

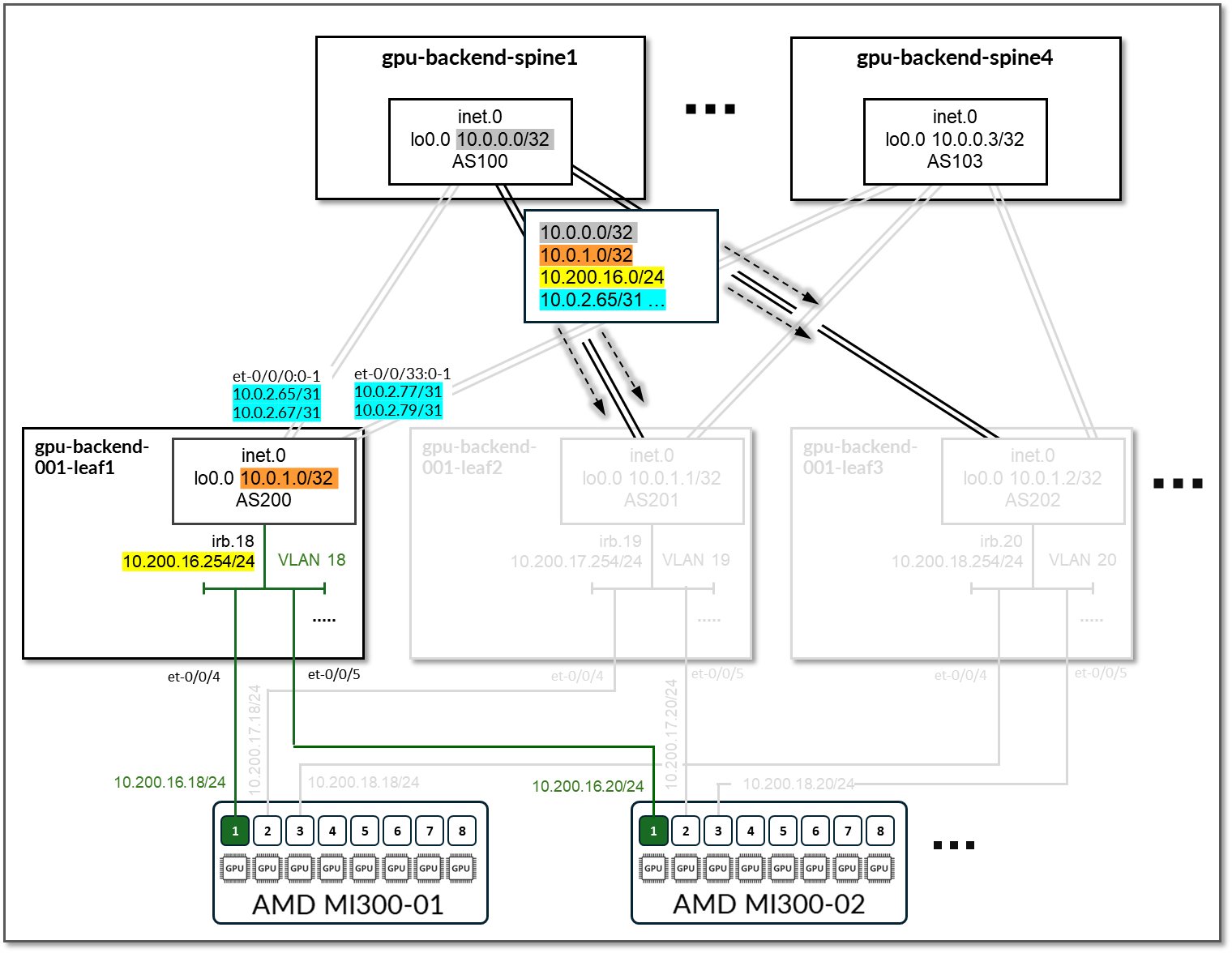

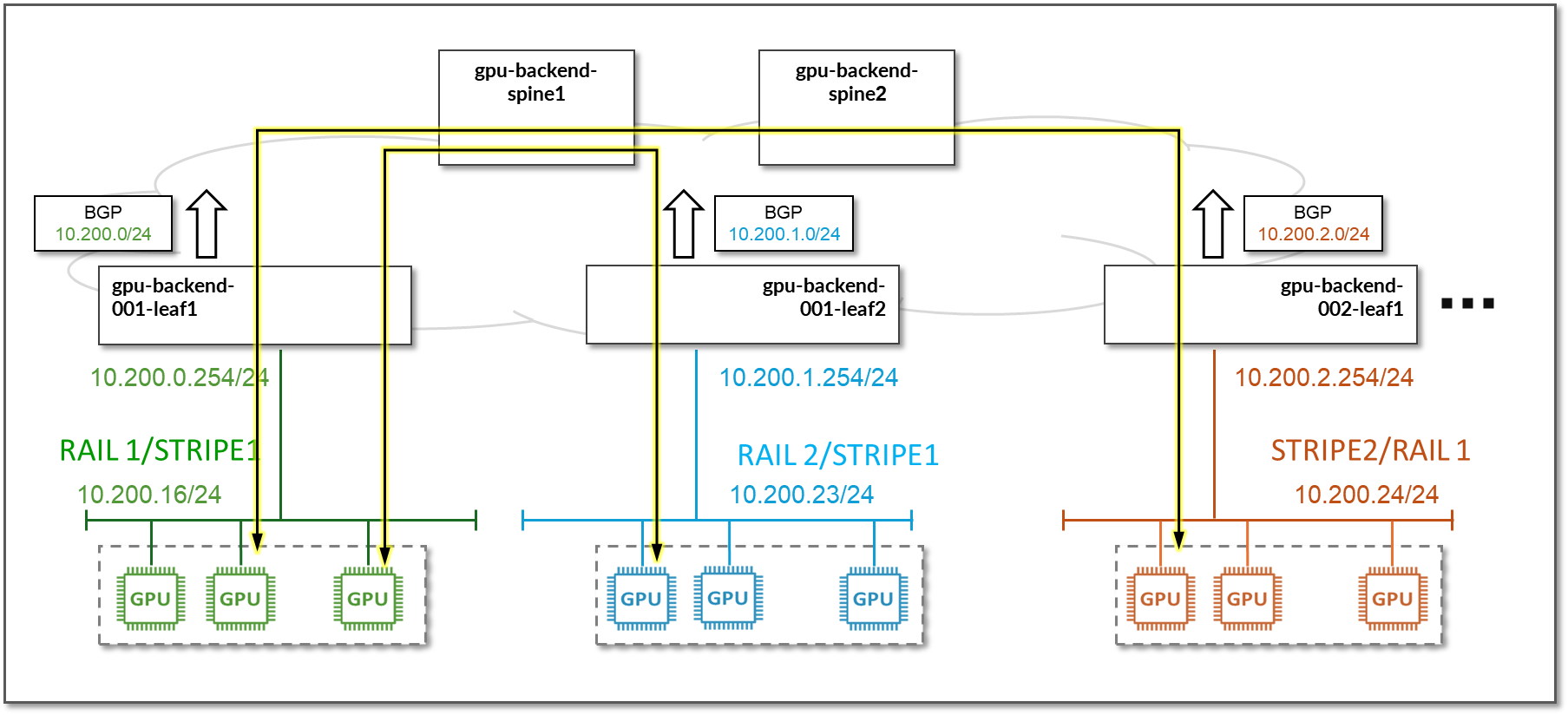

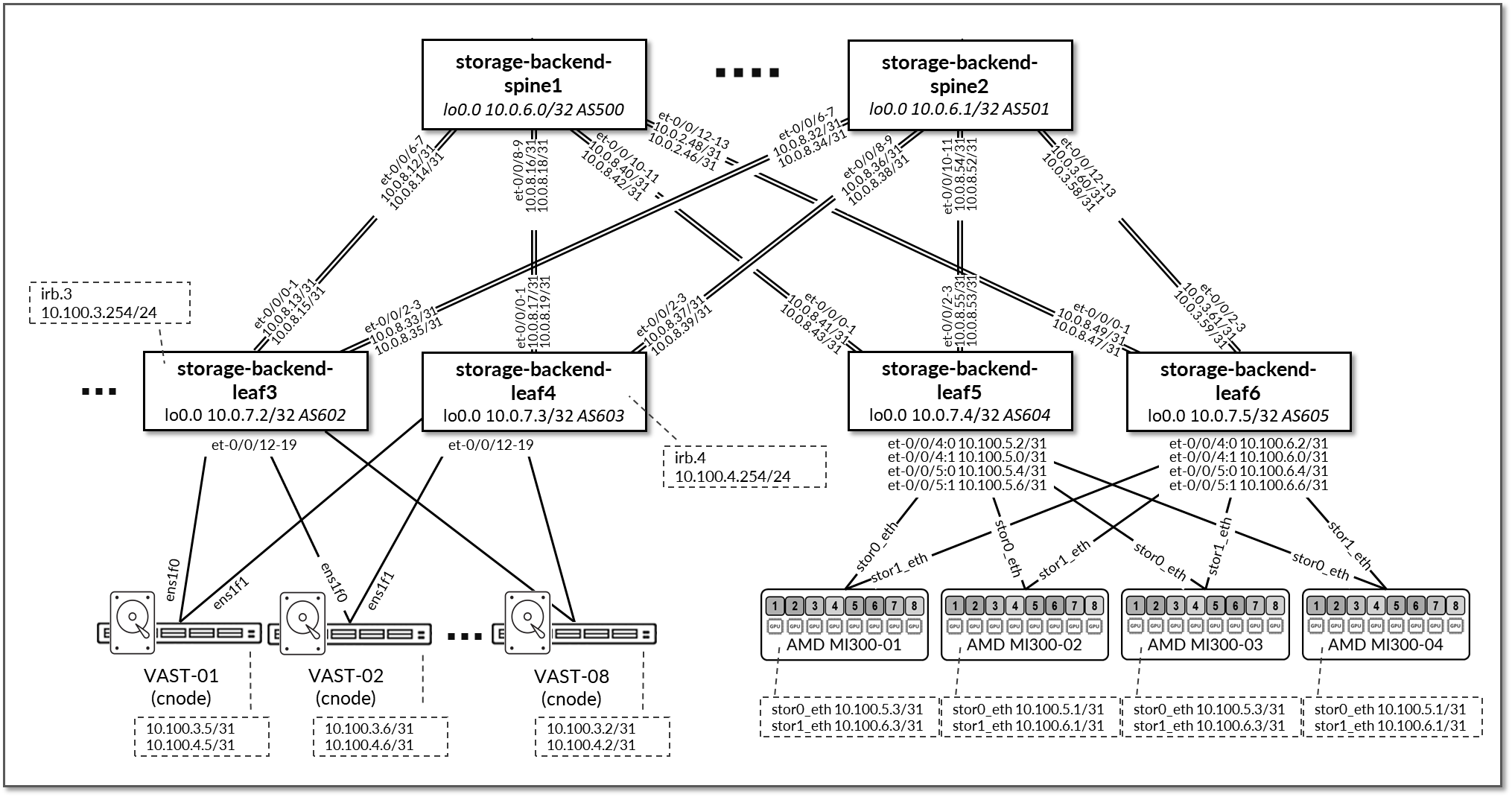

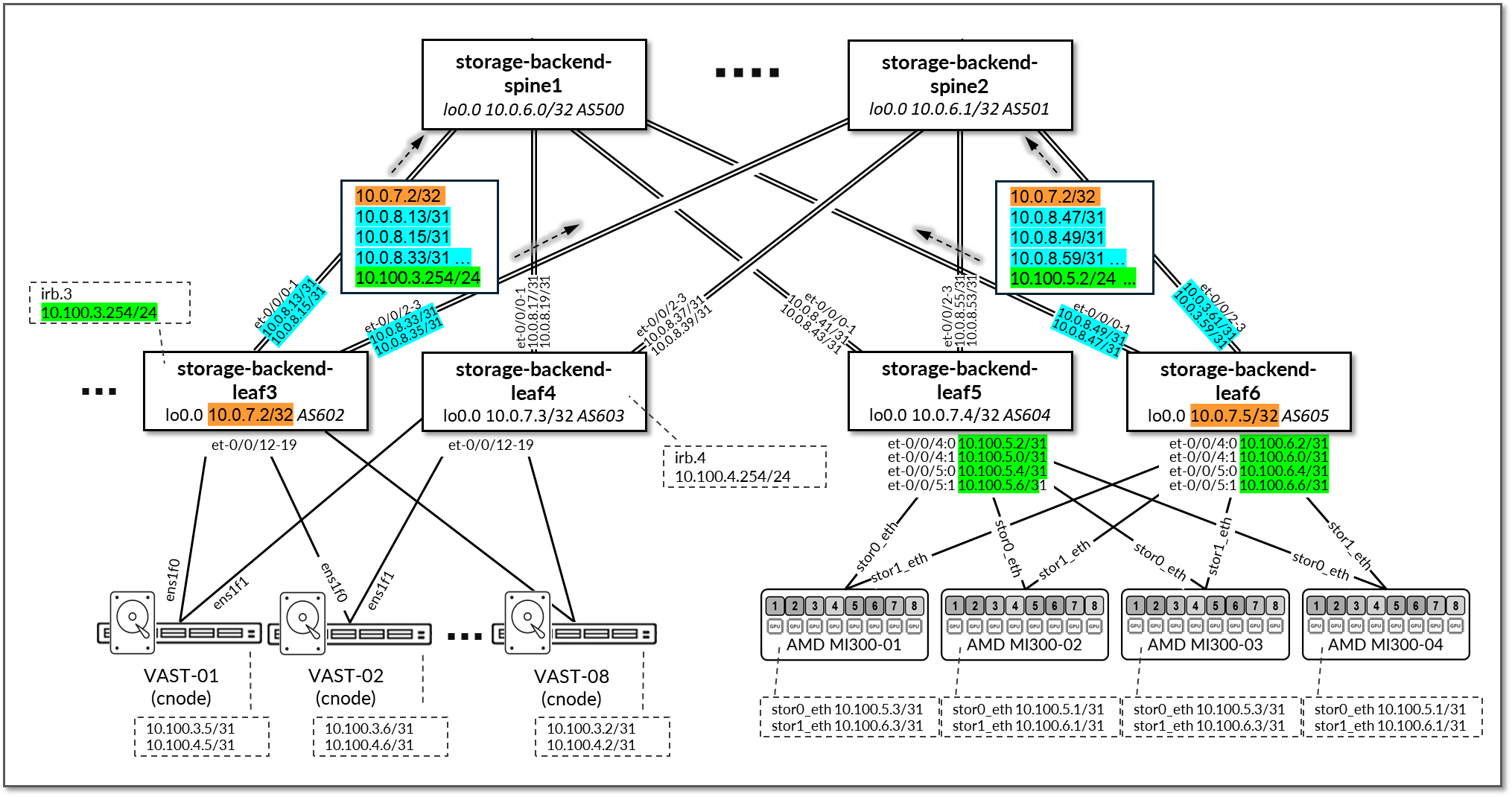

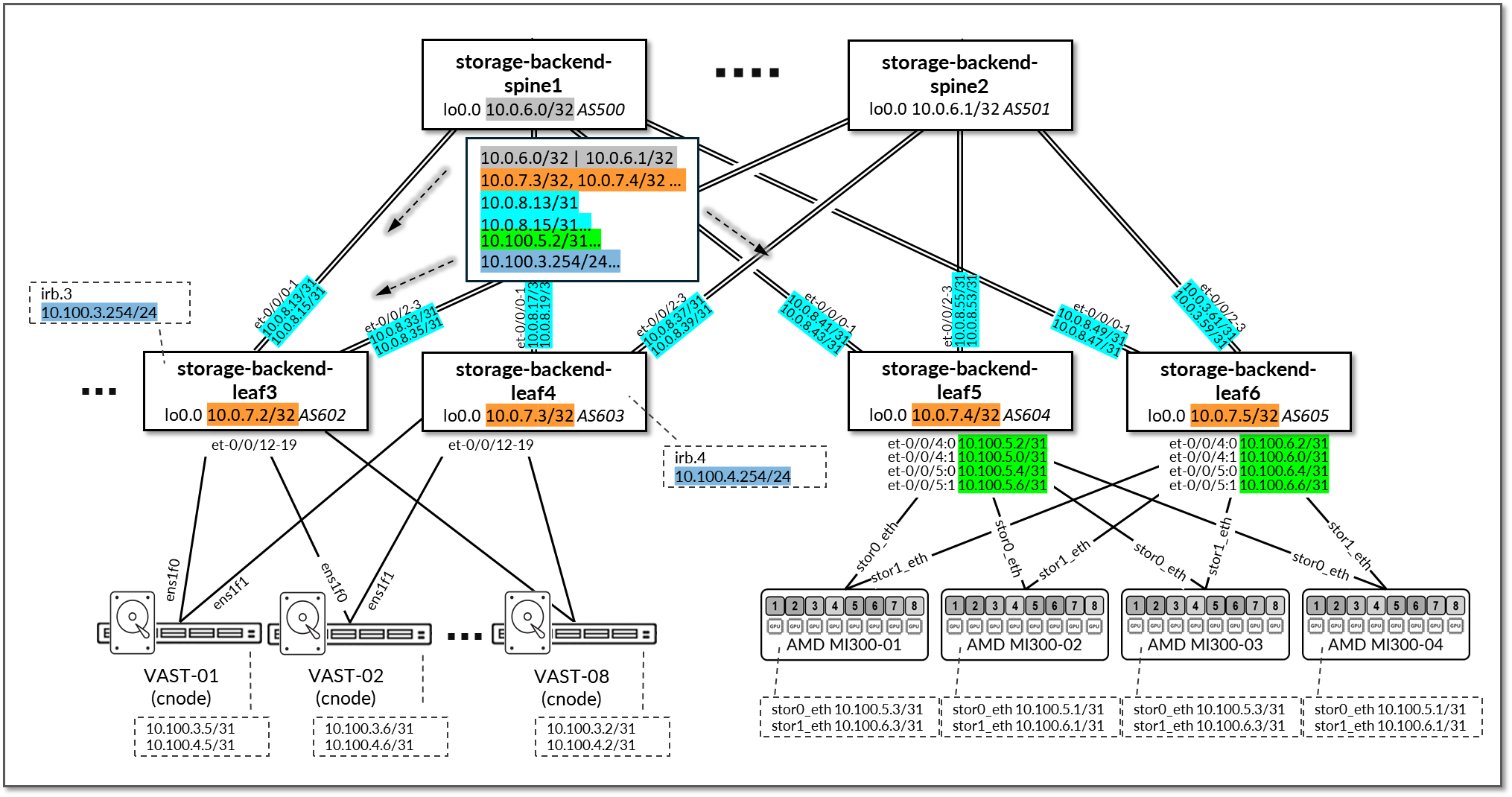

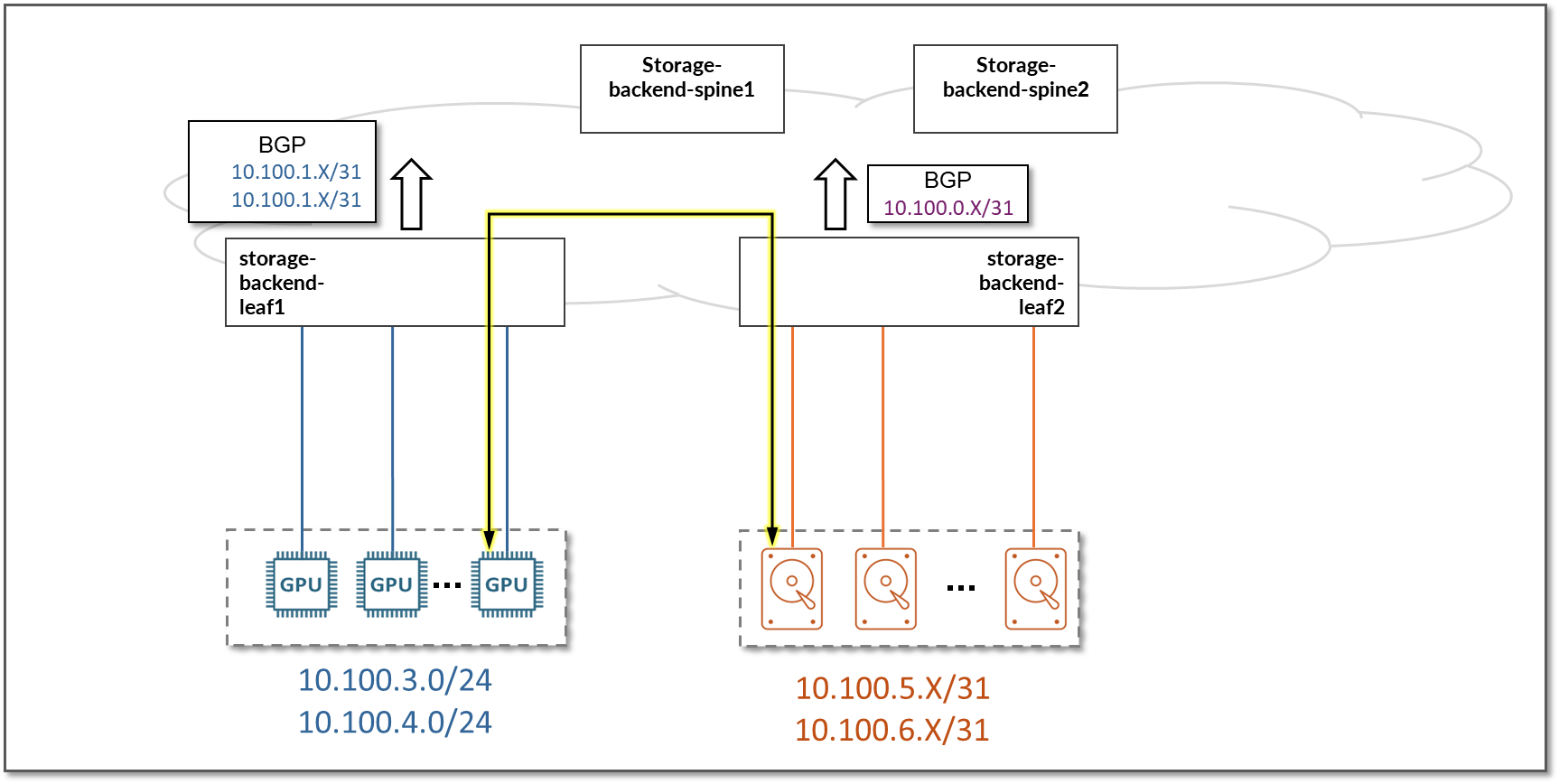

These steps cover the AI GPU Backend IP fabric built with QFX5240-64CD switches in the spine and leaf roles, along with AMD GPU servers and VAST storage devices. Follow similar steps to set up the Frontend and Storage Backend fabrics.

The section also provides configuration steps for the AMD GPU servers.

Setting up the Apstra Server

A configuration wizard launches the first time you connect to the Apstra server VM. Use it to set the passwords for the Apstra server and the Apstra UI, and to configure the server's network settings.

For more detailed information about installation and step-by-step configuration with Apstra, refer to the Juniper Apstra User Guide.

Onboarding devices in Apstra

There are two methods for adding Juniper devices into Apstra:

- Using ZTP: From the Apstra ZTP server, follow the ZTP steps described in the Juniper Apstra User Guide.

- Manually, as described in detail in the steps that follow.

Apstra imports the configuration from each device into a baseline configuration called the pristine configuration, which should be clean, minimal, and free of any pre-existing settings that could interfere with the intended network design managed by Apstra. Apstra ignores the Junos configuration ‘groups’ stanza and does not validate any group configuration listed in the inheritance model; for details, refer to the configuration groups usage guide. As a best practice, avoid configuring loopbacks, interfaces (except the management interface), routing instances (except the management instance), or any other settings as part of this baseline configuration. Apstra configures LLDP and RSTP when the device is successfully acknowledged.
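The pristine-configuration guidance above can be checked before onboarding. The following is a minimal Python sketch, not an Apstra feature: it scans `set`-format configuration lines (for example, the output of `show configuration | display set`) and flags loopback, data-interface, and non-management routing-instance statements. The names in `MGMT_INTERFACES` are assumptions, because management interface names vary by platform; `mgmt_junos` is the Junos management routing instance.

```python
# Minimal sketch: flag statements that should not be part of the pristine
# (baseline) configuration. MGMT_INTERFACES is an assumption; adjust it to
# the management interface used on your platform.
MGMT_INTERFACES = {"em0", "fxp0", "re0:mgmt-0"}  # assumed management ports
MGMT_INSTANCES = {"mgmt_junos"}  # Junos management routing instance


def pristine_violations(set_lines):
    """Return the lines that configure loopbacks, data interfaces, or
    routing instances other than the management instance."""
    violations = []
    for line in set_lines:
        words = line.split()
        # Only "set <stanza> <name> ..." statements are relevant here.
        if len(words) < 3 or words[0] != "set":
            continue
        stanza, name = words[1], words[2]
        if stanza == "interfaces" and name not in MGMT_INTERFACES:
            violations.append(line)  # catches lo0 and et-* interfaces
        elif stanza == "routing-instances" and name not in MGMT_INSTANCES:
            violations.append(line)
    return violations


baseline = [
    "set system host-name leaf1",
    "set system services netconf ssh",
    "set interfaces re0:mgmt-0 unit 0 family inet address 192.0.2.11/24",
    "set interfaces lo0 unit 0 family inet address 198.51.100.1/32",
    "set routing-instances mgmt_junos routing-options static route 0.0.0.0/0 next-hop 192.0.2.1",
]
for line in pristine_violations(baseline):
    print("remove before onboarding:", line)
```

Anything the function reports (here, only the `lo0` address) would be removed from the device before it is onboarded, leaving Apstra to render those settings itself.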

To onboard each device, follow these steps in the Apstra Web UI:

Step 1: Create Agent Profile for Junos devices.

- Navigate to Devices >> Agent Profiles

- Click on Create Agent Profile.

Figure 26. Create Agent Profile in Apstra

Note: For the purposes of this JVD, the same username and password are used across all devices. Thus, only one Junos Agent Profile is needed to onboard all the devices.

- Enter the Agent Profile name, select the platform (Junos), and enter the username and password that Apstra will use to communicate with the devices.

- The devices must be preconfigured with a root password, the username and password configured in Apstra, a management IP address (and static routes, if needed), and SSH and NETCONF over SSH, so that Apstra can access and configure them.

Figure 27: Apstra Agent Profile Parameters

- After entering the required information, click Create.

- Confirm Agent Profile creation.

Figure 28: New Apstra Agent Profile Verification
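The device prerequisites listed in Step 1 (root password, the user configured in the Agent Profile, a management IP address with optional static routing, and SSH plus NETCONF over SSH) map to a handful of Junos `set` commands. The Python sketch below only builds those command strings; the host name, user name, interface name, addresses, and password hashes are hypothetical placeholders. The optional `mgmt_instance` argument places the static route under a management routing instance such as `mgmt_junos`, assuming that instance is already enabled on the device.

```python
def preconfig_commands(hostname, user, root_hash, user_hash, mgmt_if,
                       mgmt_cidr, gateway=None, mgmt_instance=None):
    """Build Junos set commands for the pre-onboarding baseline.

    All values are hypothetical placeholders; the password hashes must be
    pre-encrypted strings, not plain-text passwords."""
    cmds = [
        f"set system host-name {hostname}",
        f'set system root-authentication encrypted-password "{root_hash}"',
        f"set system login user {user} class super-user "
        f'authentication encrypted-password "{user_hash}"',
        "set system services ssh",
        "set system services netconf ssh",
        f"set interfaces {mgmt_if} unit 0 family inet address {mgmt_cidr}",
    ]
    if gateway:
        # Default route toward the Apstra server; a more specific prefix
        # works just as well.
        prefix = f"set routing-instances {mgmt_instance} " if mgmt_instance else "set "
        cmds.append(f"{prefix}routing-options static route 0.0.0.0/0 next-hop {gateway}")
    return cmds


for cmd in preconfig_commands("leaf1", "apstra", "<root-hash>", "<user-hash>",
                              "re0:mgmt-0", "192.0.2.11/24", gateway="192.0.2.1"):
    print(cmd)
```

Keeping the baseline this small is consistent with the pristine-configuration guidance: nothing beyond management access is configured before Apstra takes over.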

Step 2: Create Agent Profile for other devices.

- Navigate to Devices >> Agent Profiles

- Click on Create Agent Profile.

Figure 29. Create Agent Profile in Apstra


- Enter the Agent Profile name, select the platform (Junos), and enter the username and password that Apstra will use to communicate with the devices.

The devices must be preconfigured with a root password, the username and password configured in Apstra, a management IP address (and static routes, if needed), and SSH and NETCONF over SSH, so that Apstra can access and configure them.

Figure 30: Apstra Agent Profile Parameters

- Click Create.

- Confirm Agent Profile creation.

Figure 31: New Apstra Agent Profile Verification

Step 3: Create Offbox Agents for QFX devices.

- Navigate to Devices >> Managed Devices

- Click Create Offbox Agents. Make sure you select Offbox Agent(s).

Figure 32: Create Offbox Agent in Apstra

- Identify the device(s) to onboard.

You can enter a comma-separated list of hostnames, individual IP addresses, or IP address ranges.

Note: An IP address range can be used to onboard multiple devices in Apstra at once. The ranges shown in the example below are for demonstration purposes only.

- Select the platform and the Agent Profile (select the profile created earlier).

Figure 33: Identifying the device(s) to onboard using a range of IP Addresses

Note: If “Set username?” and “Set password?” are unchecked, Apstra uses the credentials from the Agent Profile created earlier.

- Click Create.

- Confirm that the devices have been added for onboarding. Once the offbox agent has been created, the devices are added to the list of Managed Devices. Apstra attempts to connect to them and, if successful, populates the relevant device information.

Figure 34: New devices being onboarded
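Apstra expands the device list itself; the Python sketch below only illustrates what a comma-separated entry of hostnames, individual addresses, and ranges resolves to, which can help when planning management address blocks for onboarding. The `start-end` range syntax is an assumption for illustration, and the addresses are documentation examples.

```python
import ipaddress


def expand_targets(spec):
    """Expand 'host, a.b.c.d, a.b.c.x-a.b.c.y' into individual entries."""
    targets = []
    for item in (part.strip() for part in spec.split(",")):
        if not item:
            continue
        if "-" in item:
            start_s, end_s = (p.strip() for p in item.split("-", 1))
            try:
                start = ipaddress.IPv4Address(start_s)
                end = ipaddress.IPv4Address(end_s)
            except ipaddress.AddressValueError:
                targets.append(item)  # a hostname that contains a hyphen
                continue
            if end < start:
                raise ValueError(f"range end precedes start: {item}")
            targets.extend(str(ipaddress.IPv4Address(n))
                           for n in range(int(start), int(end) + 1))
        else:
            targets.append(item)
    return targets


print(expand_targets("192.0.2.11-192.0.2.14, spine-1, 192.0.2.21"))
```

Hostnames that contain hyphens are passed through unchanged because they do not parse as IPv4 addresses.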

Step 4: Create Onbox Agents and onboard NVIDIA servers.

- Navigate to Devices >> Managed Devices

- Click Create Onbox Agents. Make sure you select Onbox Agent(s).

Figure 35: Create Onbox Agent in Apstra

- Identify the device(s) to onboard.

You can enter a comma-separated list of hostnames, individual IP addresses, or IP address ranges.

Note: An IP address range can be used to onboard multiple devices in Apstra at once. The ranges shown in the example below are for demonstration purposes only.

- Select the platform and the Agent Profile (select the profile created earlier).

Figure 36: Identifying the device(s) to onboard using a range of IP Addresses

Note: If “Set username?” and “Set password?” are unchecked, Apstra uses the credentials from the Agent Profile created earlier.

- Click Create.

- Confirm that the devices have been added for onboarding. Once the onbox agent has been created, the devices are added to the list of Managed Devices. Apstra attempts to connect to them and, if successful, populates the relevant device information.

Figure 37: New devices being onboarded

Step 5: Acknowledge Managed Devices for Use in Apstra Blueprints.

Once the agent has been created successfully for each device, you must acknowledge the devices to complete onboarding and make them available to Apstra Blueprints. Acknowledging a device moves it from the OOS-QUARANTINE state to the OOS-READY state.

- Select device(s)

- Click the “Acknowledge selected system” button.

Figure 38: Acknowledging Managed Devices in Apstra Blueprints

Fabric Provisioning in the Apstra Web UI

To provision the fabric, follow these steps in the Apstra Web UI. The steps use the GPU Backend Fabric as an example.

Step 1: Create Logical Devices and Interface maps for leaf and spine nodes.

This example shows how to create the Logical Device and Interface Map for the PTX10008 spine nodes with LC1301 line cards.

- Navigate to Design > Logical Devices

- Click on Create Logical Device.

Figure 39: Creating a Logical Device

- Provide a name, add additional ports, and change the speed to 800G.

- Click Add Panel.

Figure 40: Creating Logical Device panel 1.

- Provide a name, add additional ports, and change the speed to 800G on the second panel.

Figure 41: Creating Logical Device panel 2.

- Click on Create Port Group for panels 1 and 2.

Figure 42: Creating Logical Device Port groups.

- Click Create.

Figure 43: Creating Logical Device

- Verify Logical Device Creation.

Figure 44: New Logical Device

- Navigate to Design > Interface Maps.

Figure 45: Creating an Interface Map

For the QFX5220 leaf nodes, the Logical Device and Interface Map are shown in Figures 46 and 47:

Figure 46: Apstra Logical Device for the QFX5220 Leaf Nodes

Figure 47: Apstra Interface Map for the QFX5220 Leaf Nodes

For the QFX5230-64CD leaf nodes, the Logical Device and Interface Map are shown in Figures 48 and 49:

Figure 48: Apstra Logical Device for the QFX5230 Leaf Nodes

Figure 49: Apstra Interface Map for the QFX5230 Leaf Nodes

For the QFX5230 spine nodes, the Logical Device and Interface Map are shown in Figures 50 and 51:

Figure 50: Apstra Logical Device for the QFX5230 Spine Nodes

Figure 51: Apstra Interface Map for the QFX5230 Spine Nodes

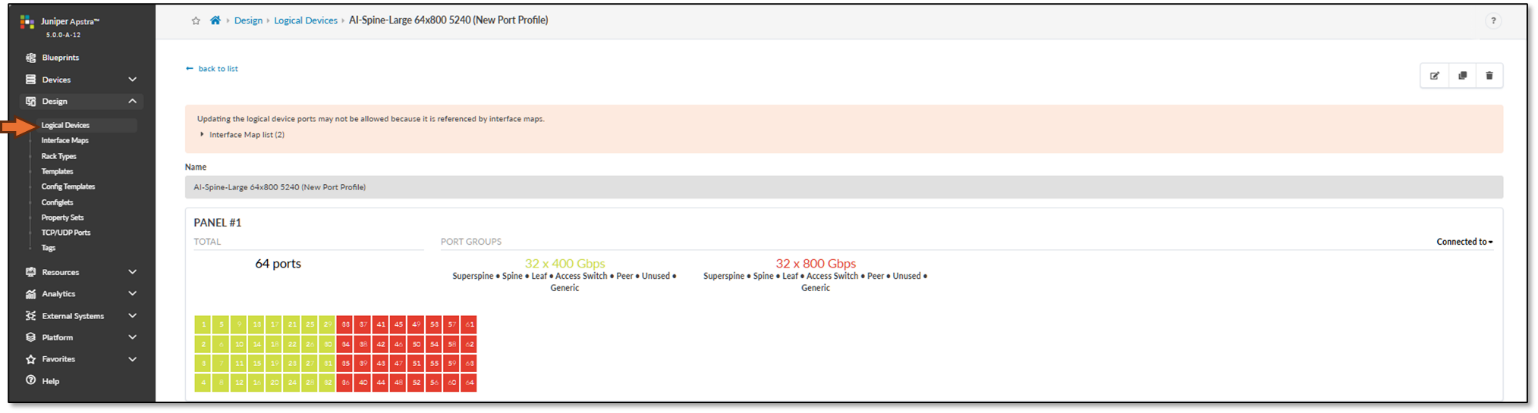

For the QFX5240 spine and leaf nodes, the Interface Maps and Logical Devices are shown in Figures 52-53 (spine) and Figures 54-55 (leaf).

The QFX5240 port numbering was modified in Junos OS Release 23.4R2. Table 24 shows the differences between the old and new port mappings.

Table 24. QFX5240-64DC port mappings

Figure 52: Apstra Interface Map for the QFX5240 Spine Nodes

Figure 53: Apstra Logical Device for the QFX5240 Spine Nodes

Figure 54: Apstra Interface Map for the QFX5240 Leaf Nodes

Figure 55: Apstra Logical Device for the QFX5240 Leaf Nodes

For the PTX10008 spine nodes with LC1201 line cards, which were also tested, the Interface Map and Logical Device are shown in Figures 56 and 57.

Figure 56: Apstra Interface Map for the PTX Spine Nodes

Figure 57: Apstra Logical Device for the PTX Spine Nodes

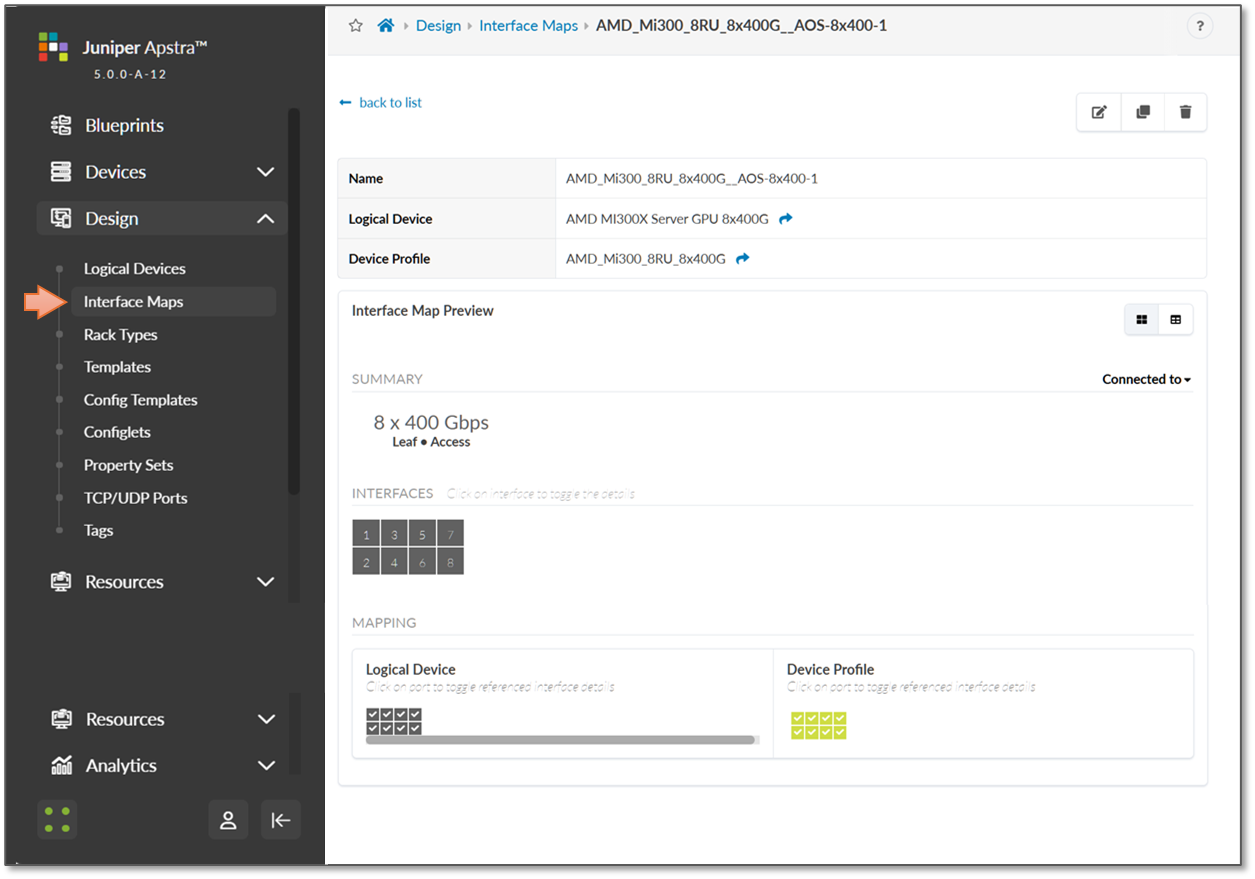

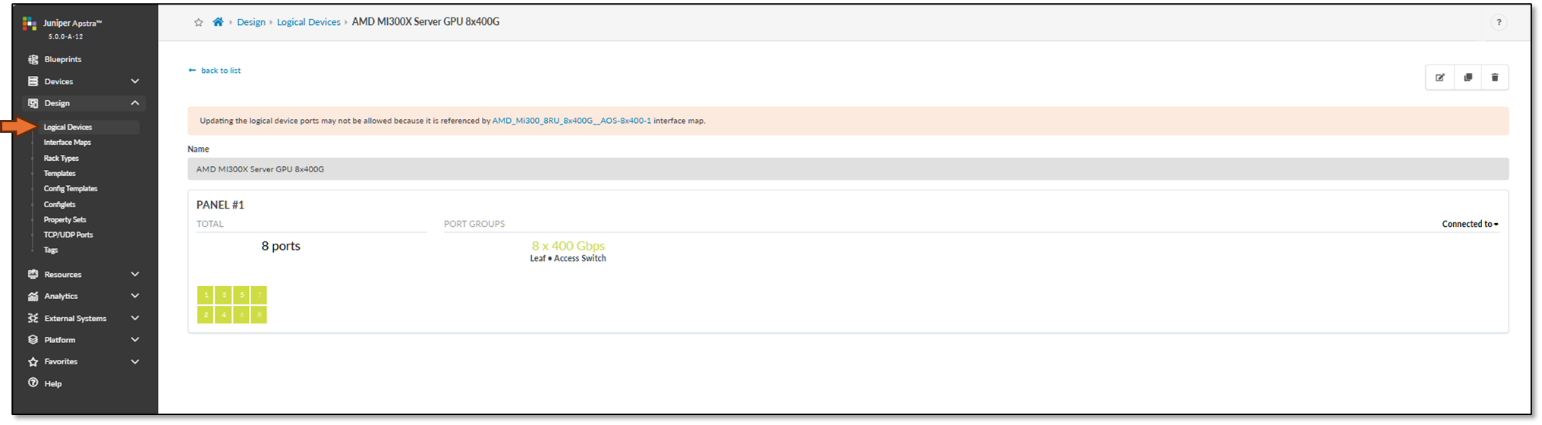

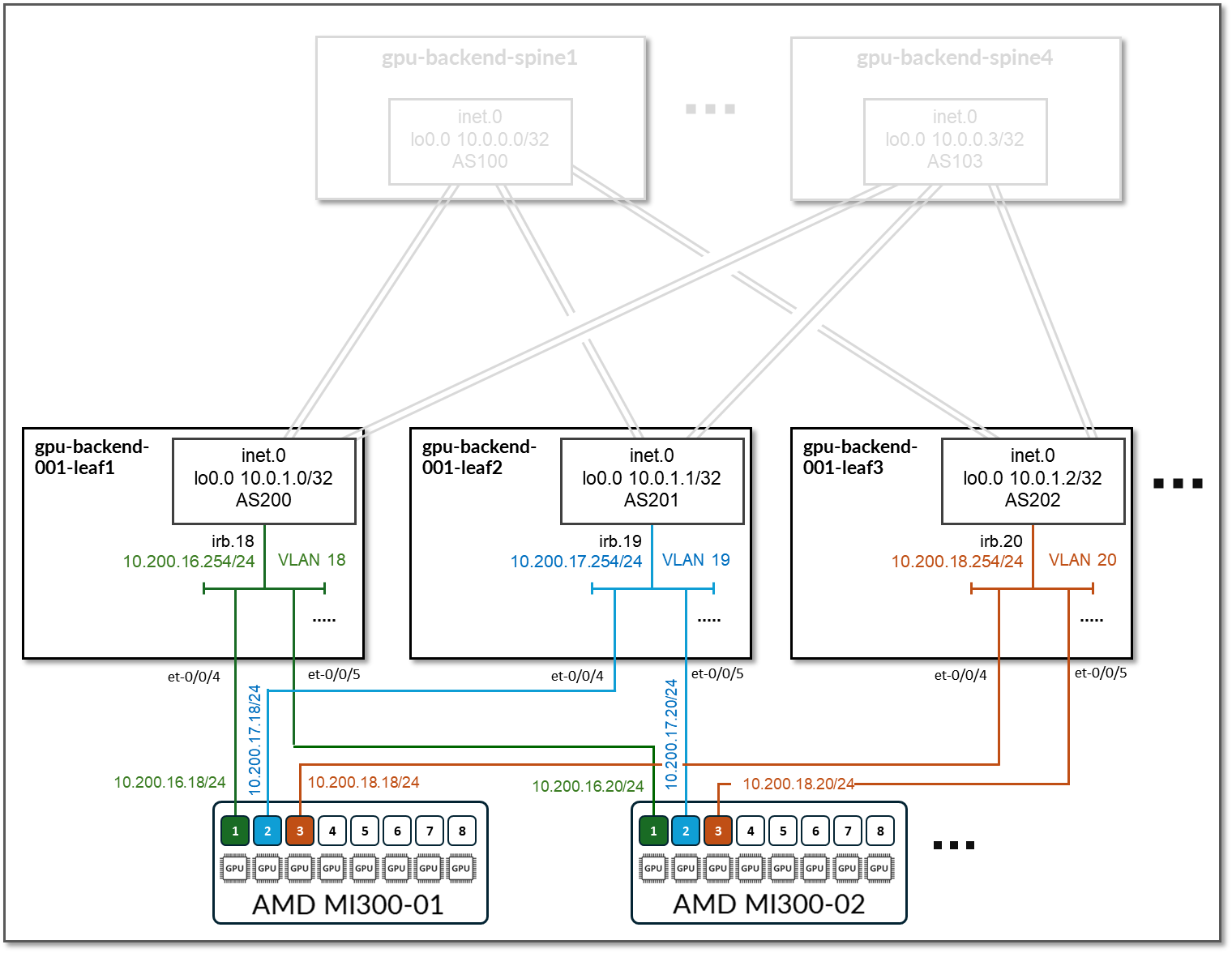

Step 2: Create Interface Map and Logical Device for the GPU servers

The Interface Map and Logical Device for the AMD MI300X GPU servers are shown in Figures 58 and 59, respectively.

Figure 58: Apstra Interface Map for the AMD Servers

Figure 59: Apstra Logical Device for the AMD Servers

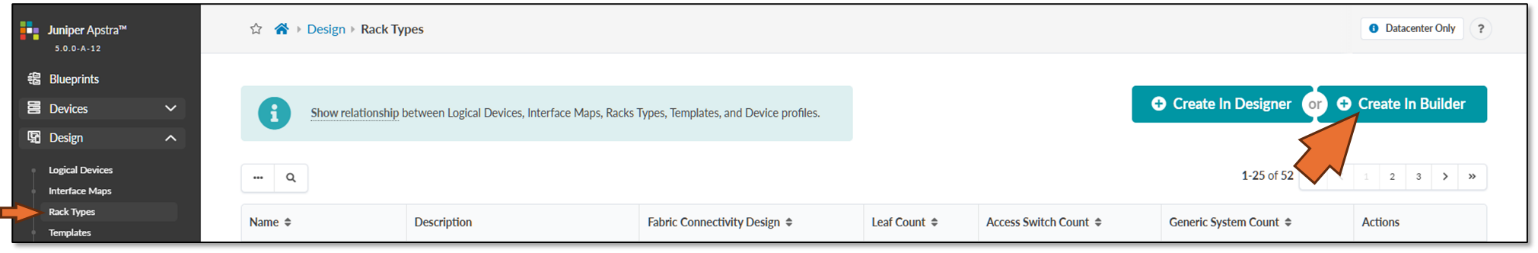

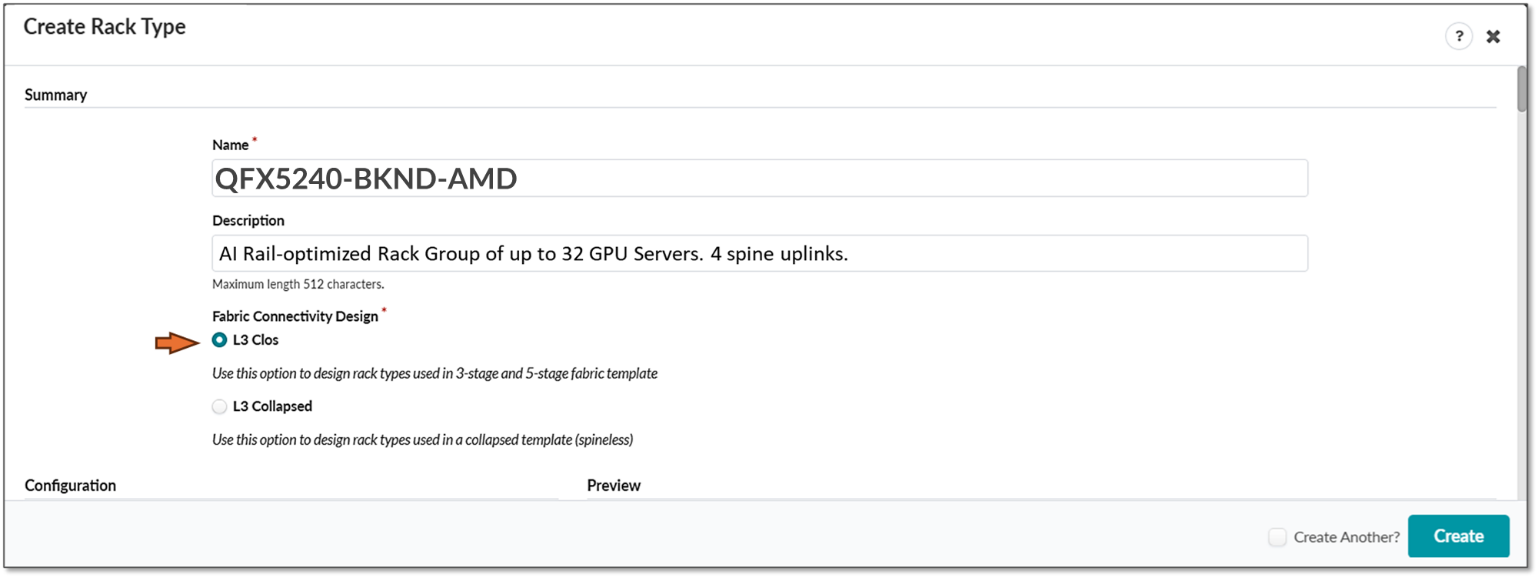

Step 3: Create a Rack Type for the GPU Backend Fabric.

- Navigate to Design → Rack Types → Create in Builder

Note: In the context of an AI fabric, an Apstra rack is equivalent to a stripe.

- Click on Create Rack Type

Figure 60: Create Rack Type in Apstra

Note: You can choose between Create In Builder and Create In Designer (graphical version). This walkthrough uses the Create In Builder option.

- Provide a name and description, and select L3 Clos.

Figure 61: Creating a Rack in Apstra using the Create In Builder option.

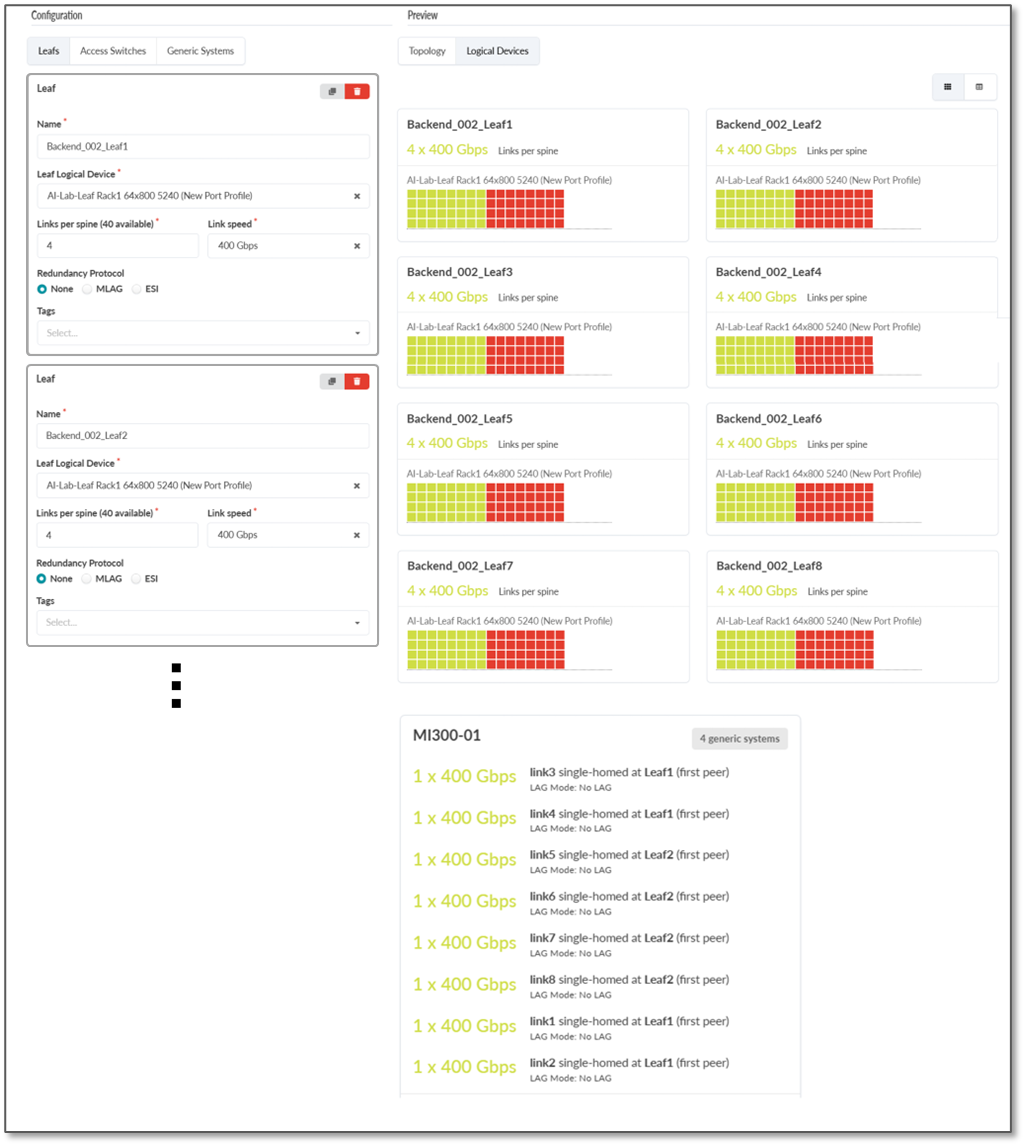

- Create the first leaf by clicking on the Add leaf button.

Figure 62: Creating Leaf nodes

Select the switch that was created and click on Manage properties of selected node(s).

Select the appropriate Logical Device.

Figure 63: Configure Leaf nodes properties

- Create additional leaf nodes by cloning the leaf created in the previous step. Repeat until a total of 8 leaf nodes have been added.

Figure 64: Creating additional leaf nodes by cloning the leaf node

Figure 65: Additional leaf nodes created

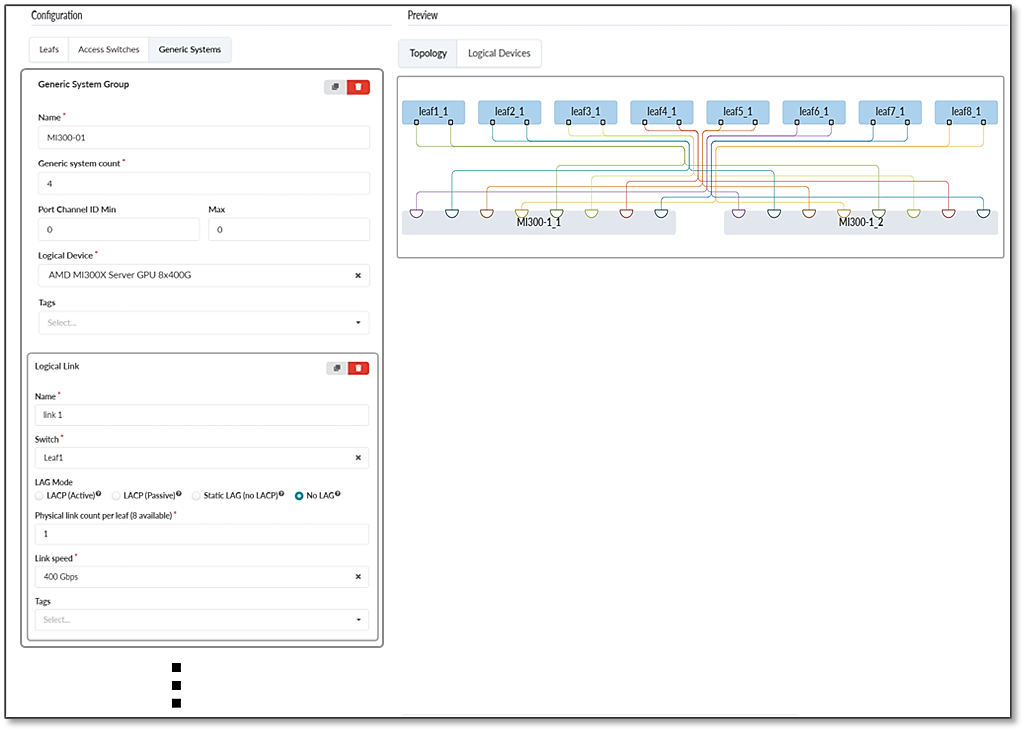

- Create the first GPU server (generic system) by clicking Add generic system.

Figure 66: Cloning leaf nodes.

Select the server that was created and click on Manage properties of selected node(s).

Provide a name for the server and select the appropriate Logical Device.

Figure 67: Creating and configuring first GPU server (generic system)

- Create the GPU server to leaf node connections.

Select the Server and the first leaf and click on Manage Links.

Make sure the box “Is a Part of the Rail” is checked.

Repeat until all connections between the server and all the leaf nodes have been created.

Figure 68: Creating connections between GPU servers and leaf nodes

Figure 69: Creating connections between GPU servers and leaf nodes

- Create additional servers by cloning the server created in the previous step. Repeat until all the servers in the stripe have been added. The server's connections are cloned along with it.

Figure 70: Creating connections between GPU servers and leaf nodes

Figure 71: Creating connections between GPU servers and leaf nodes

- Verify the Rack has been created correctly:

Figure 72: Creating connections between GPU servers and leaf nodes

Figure 73: Creating connections between GPU servers and leaf nodes

Figure 74: Creating connections between GPU servers and leaf nodes

Figure 75: Creating connections between GPU servers and leaf nodes
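Because every server is connected to every leaf in the stripe and each link is marked as part of a rail, the resulting rack follows a rail-optimized pattern. The Python sketch below generates that cabling plan, assuming 8 GPU NICs per server (one per leaf) with NIC i cabled to leaf i; the number of servers per stripe and the names `gpu-server-N`, `nicN`, and `leaf-N` are hypothetical.

```python
from collections import Counter


def rail_cabling(num_servers, num_rails=8):
    """Return (server, nic, leaf) links for a rail-optimized stripe in which
    NIC i of every GPU server connects to leaf i (rail i)."""
    return [
        (f"gpu-server-{s + 1}", f"nic{r}", f"leaf-{r + 1}")
        for s in range(num_servers)
        for r in range(num_rails)
    ]


plan = rail_cabling(num_servers=4)  # hypothetical servers per stripe
links_per_leaf = Counter(leaf for _, _, leaf in plan)
print(len(plan), links_per_leaf["leaf-1"])  # 32 links in total, 4 per leaf
```

Comparing such a plan with the links shown in the rack preview is a quick way to spot a missed or duplicated connection before the rack type is saved.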

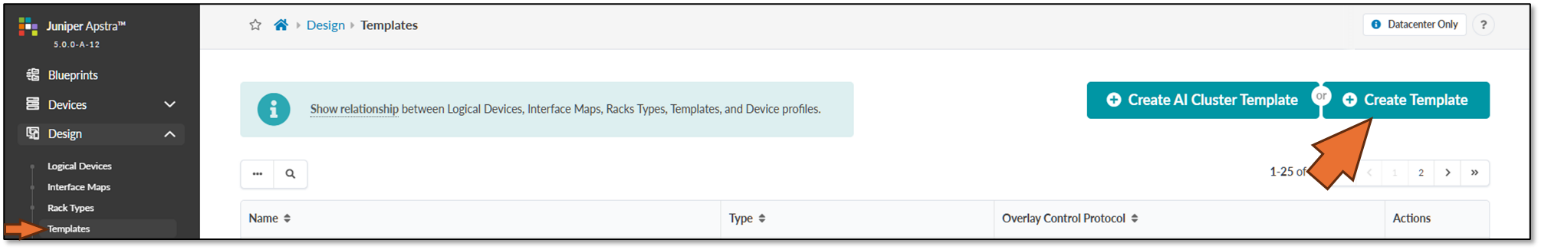

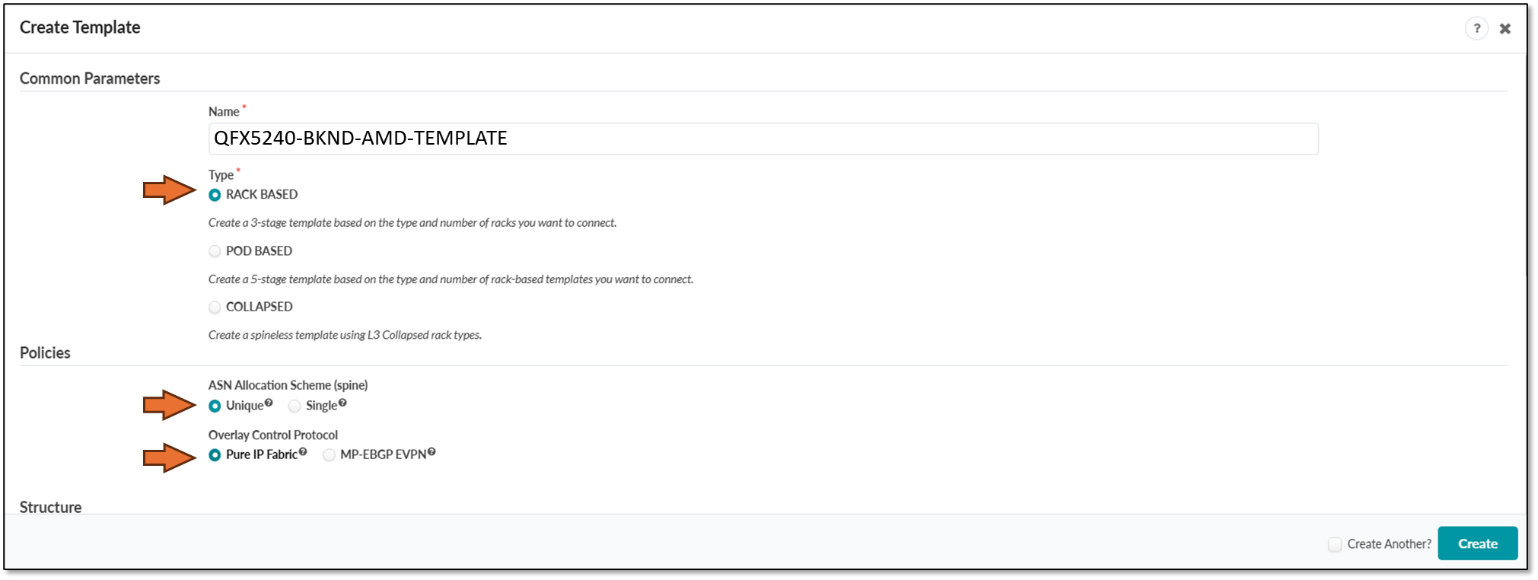

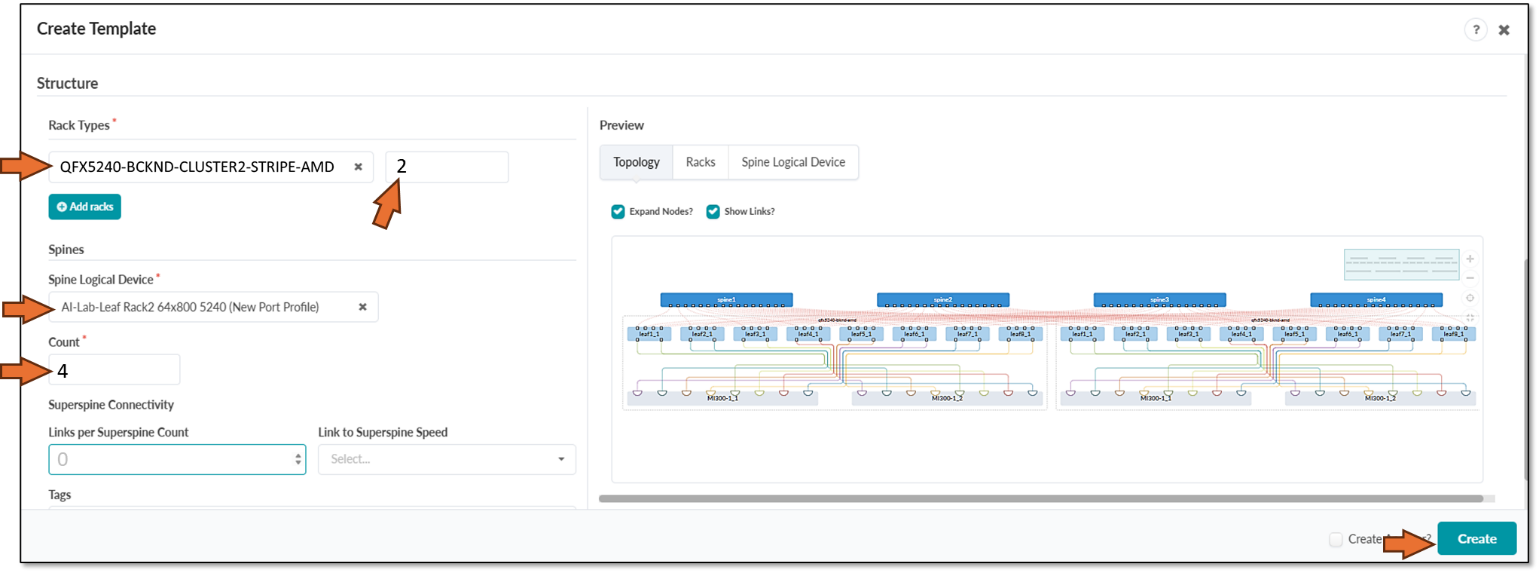

Step 4: Create a Template.

- Navigate to Design -> Templates

- Click Create Template.

Figure 76: Create Apstra Template

Note: You can choose between Create Template and Create AI Cluster Template (select from pre-existing designs). This walkthrough uses the Create Template option.

- Enter the template name, and select RACK BASED as the type, Unique as the ASN allocation policy, and Pure IP Fabric as the overlay.

Figure 77: Creating a Template in Apstra - Parameters

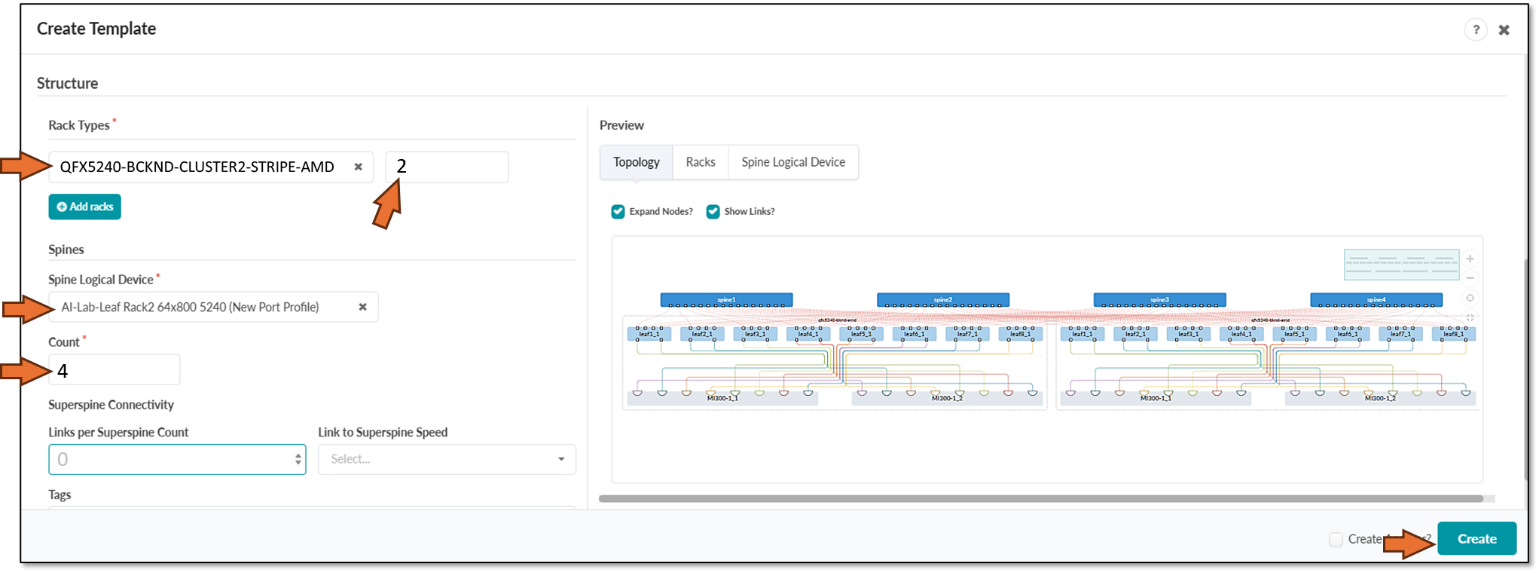

- Scroll down, select the rack type and the spine Logical Device created in the previous steps, and set the number of racks (equivalent to the number of stripes) and the number of spines. Click Create when ready, as shown in Figure 78.

Figure 78: Creating a Template in Apstra - Structure

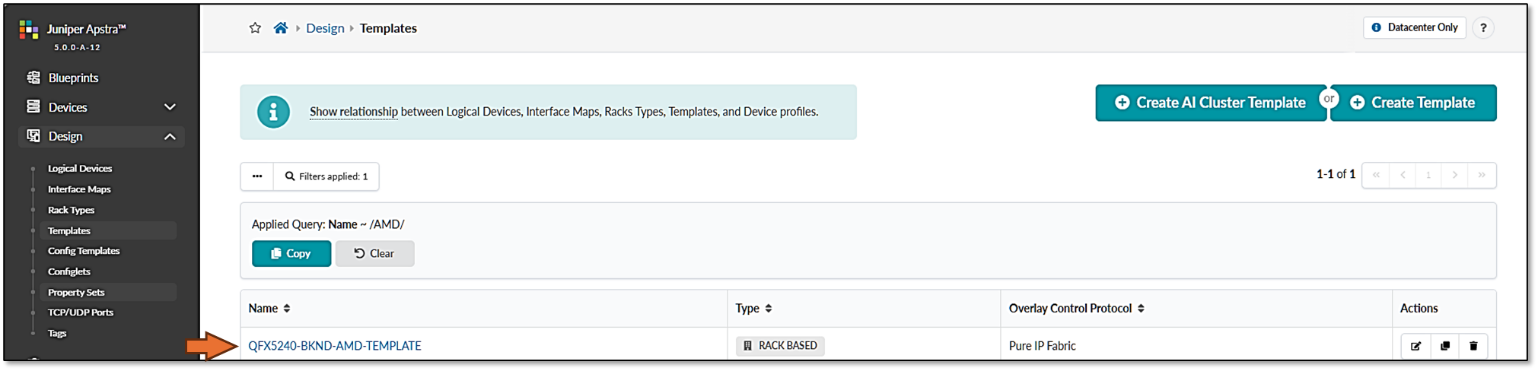

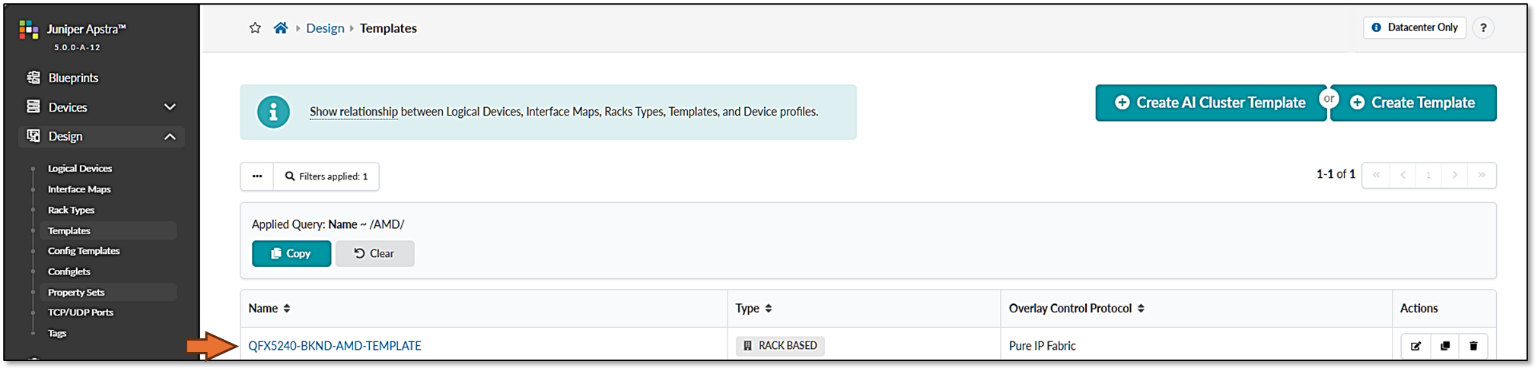

Figure 79: Verifying new template creation
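Since each rack is a stripe of 8 leaf nodes, the rack and spine counts entered in the template determine the size of the fabric, and therefore how large the resource pools in the next step must be. The Python sketch below estimates device and link counts, assuming every leaf connects to every spine with one link; the links-per-spine value and the rack and spine counts in the example are hypothetical.

```python
def fabric_size(racks, spines, leaves_per_rack=8, links_per_spine_leaf=1):
    """Count devices and spine-to-leaf links for an L3 Clos template in which
    every leaf connects to every spine."""
    leaves = racks * leaves_per_rack
    return {
        "leaves": leaves,
        "spines": spines,
        "spine_leaf_links": leaves * spines * links_per_spine_leaf,
    }


print(fabric_size(racks=2, spines=4))  # hypothetical sizing
```

With these example values, the fabric needs 20 ASNs and loopbacks (16 leaves plus 4 spines) and 64 point-to-point link subnets.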

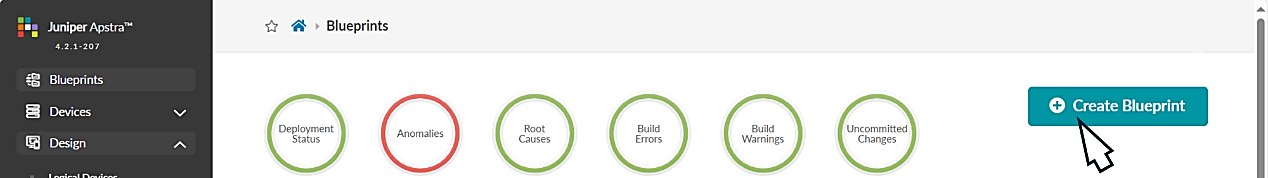

Step 5: Create the GPU Backend Fabric Blueprint.

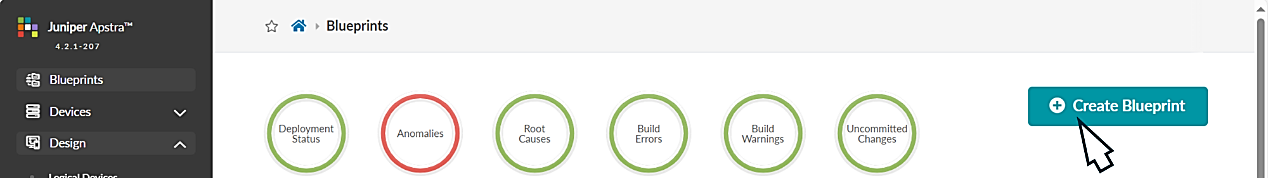

- Navigate to the Blueprints section and click Create Blueprint, as shown in Figure 80.

Figure 80: Creating a Blueprint in Apstra

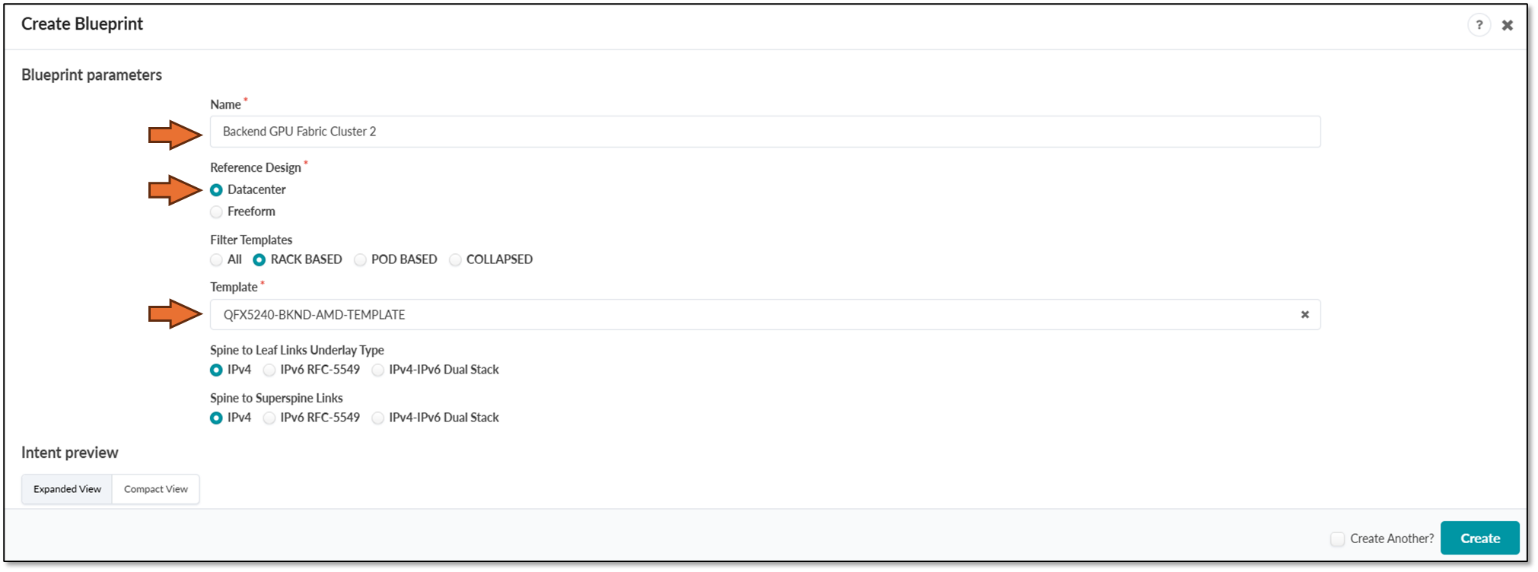

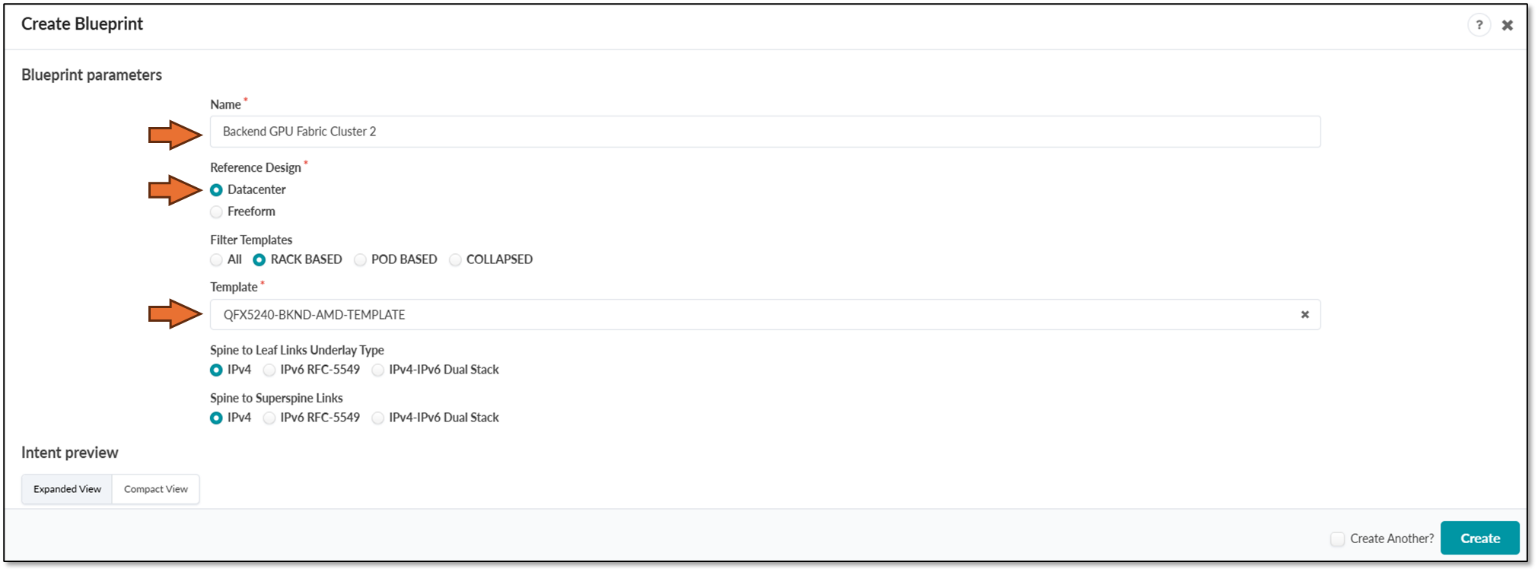

- Provide a name for the new blueprint, select Datacenter as the reference design, and select Rack-based. Then select the template created in the previous step, which includes the rack type created earlier.

Figure 81: New Blueprint Attributes in Apstra

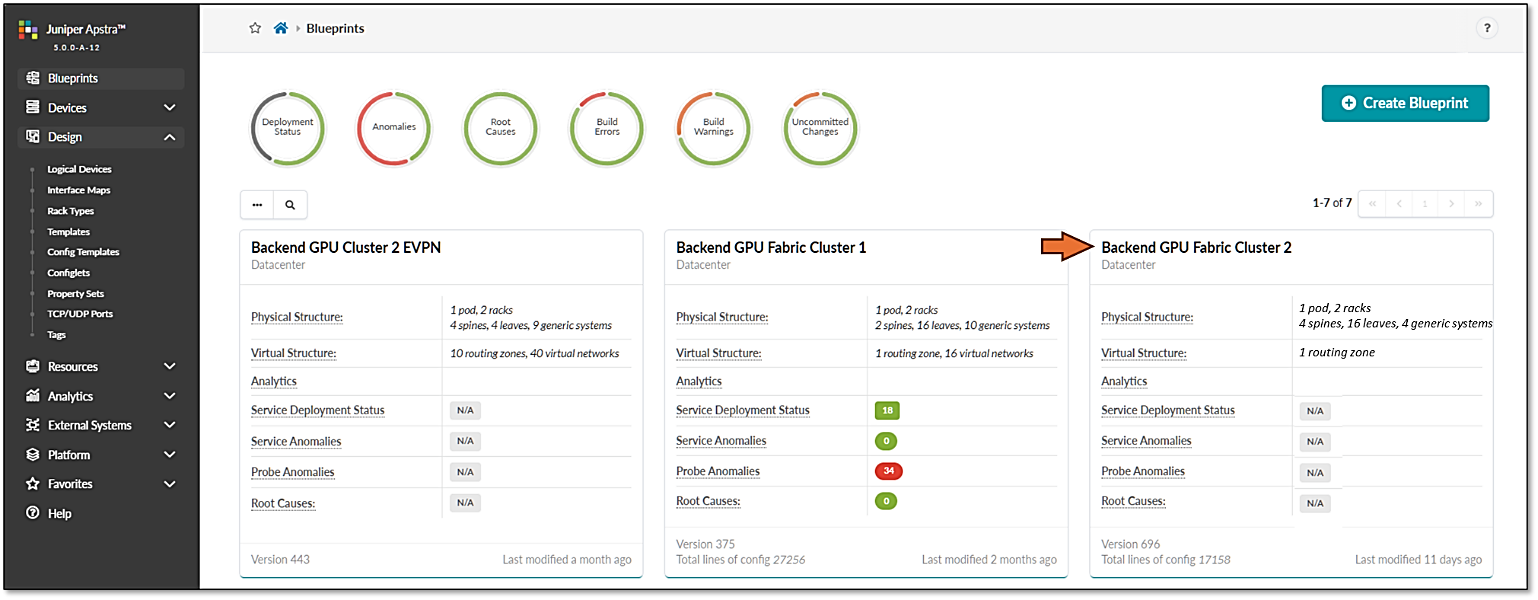

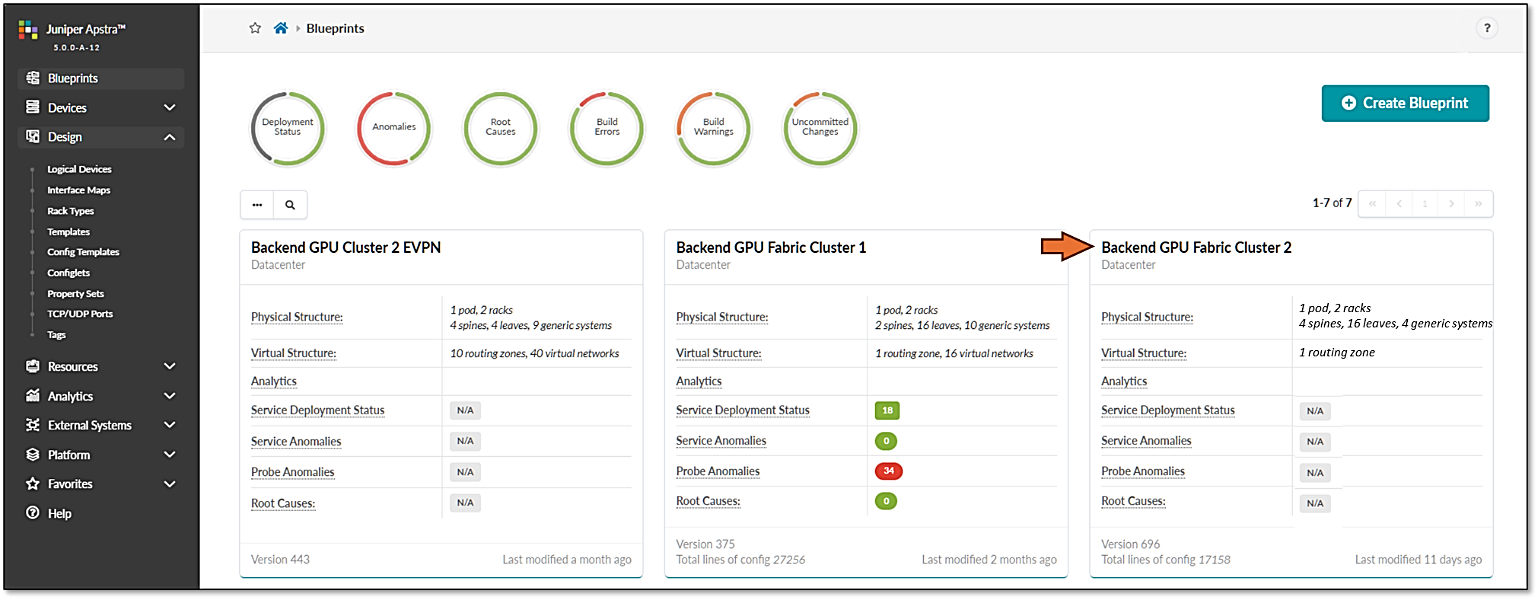

Once Apstra successfully initializes the blueprint, it appears in the Blueprint dashboard, as shown in Figure 82.

Figure 82: New Blueprint Added to Blueprint Dashboard

Notice that Deployment Status, Service Anomalies, Probe Anomalies, and Root Causes are all shown as N/A. This is because additional steps are still required, such as mapping the roles in the blueprint to physical devices and defining which interfaces will be used.

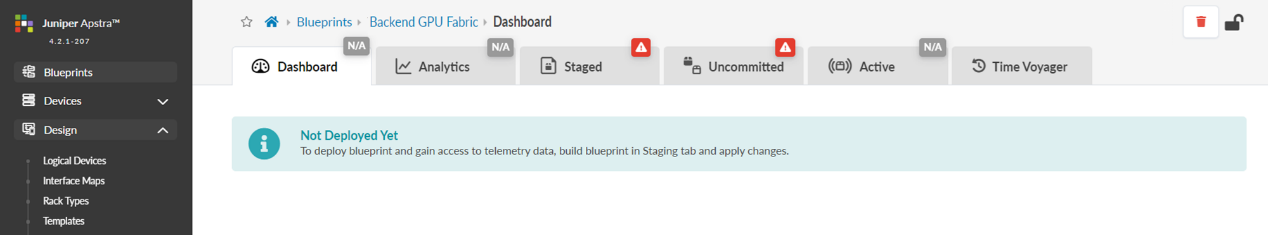

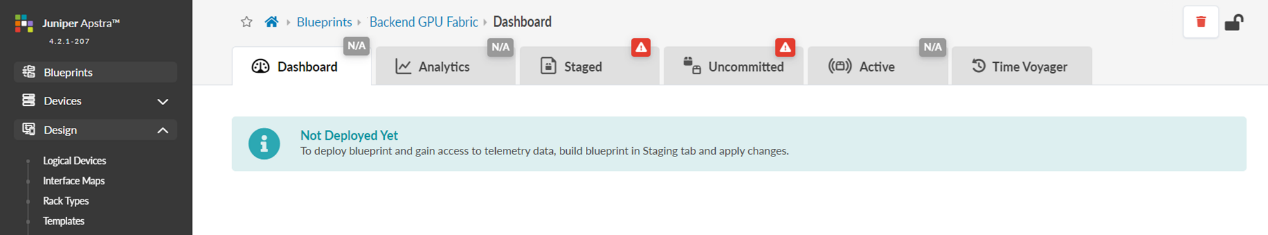

When you click the blueprint name to open the blueprint dashboard, it indicates that the blueprint has not been deployed yet.

Figure 83: New Blueprint’s dashboard

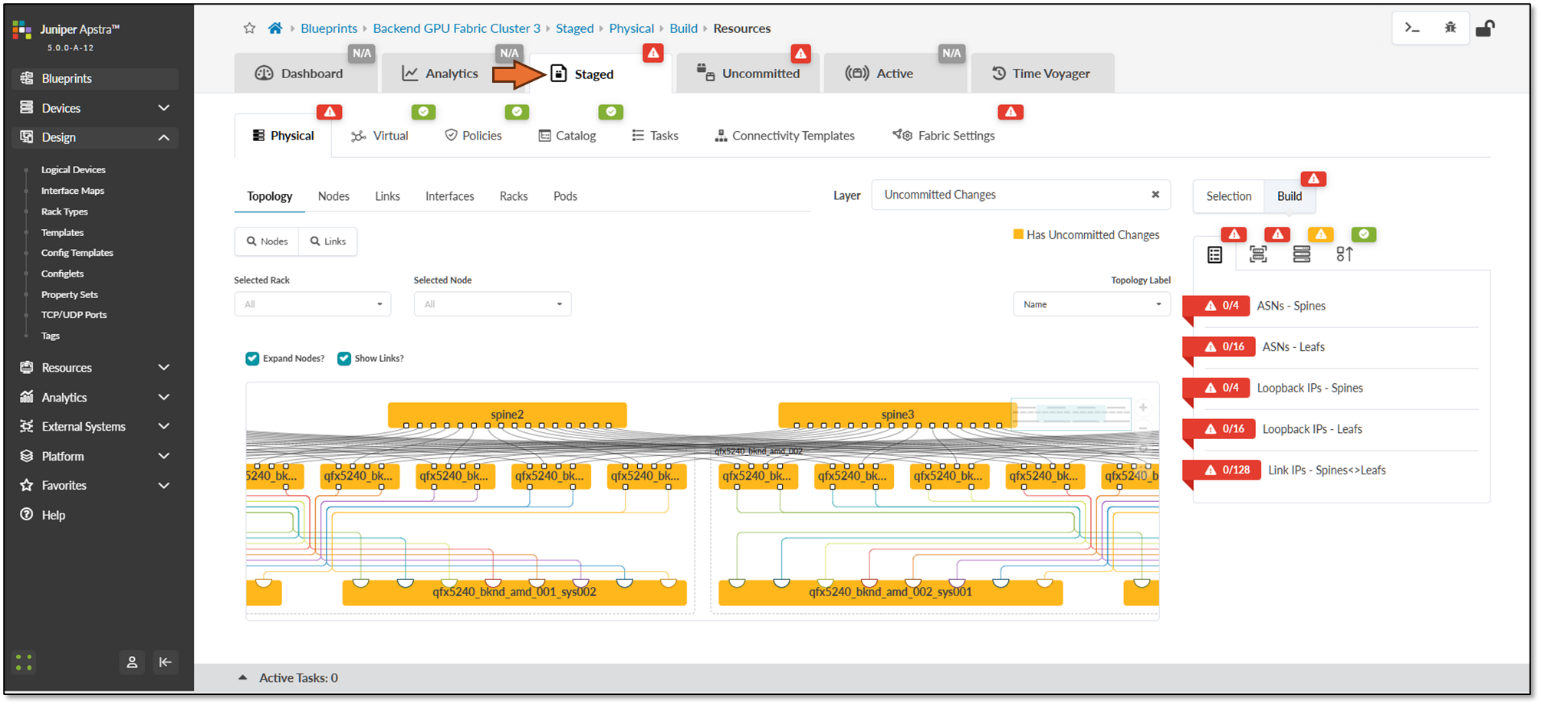

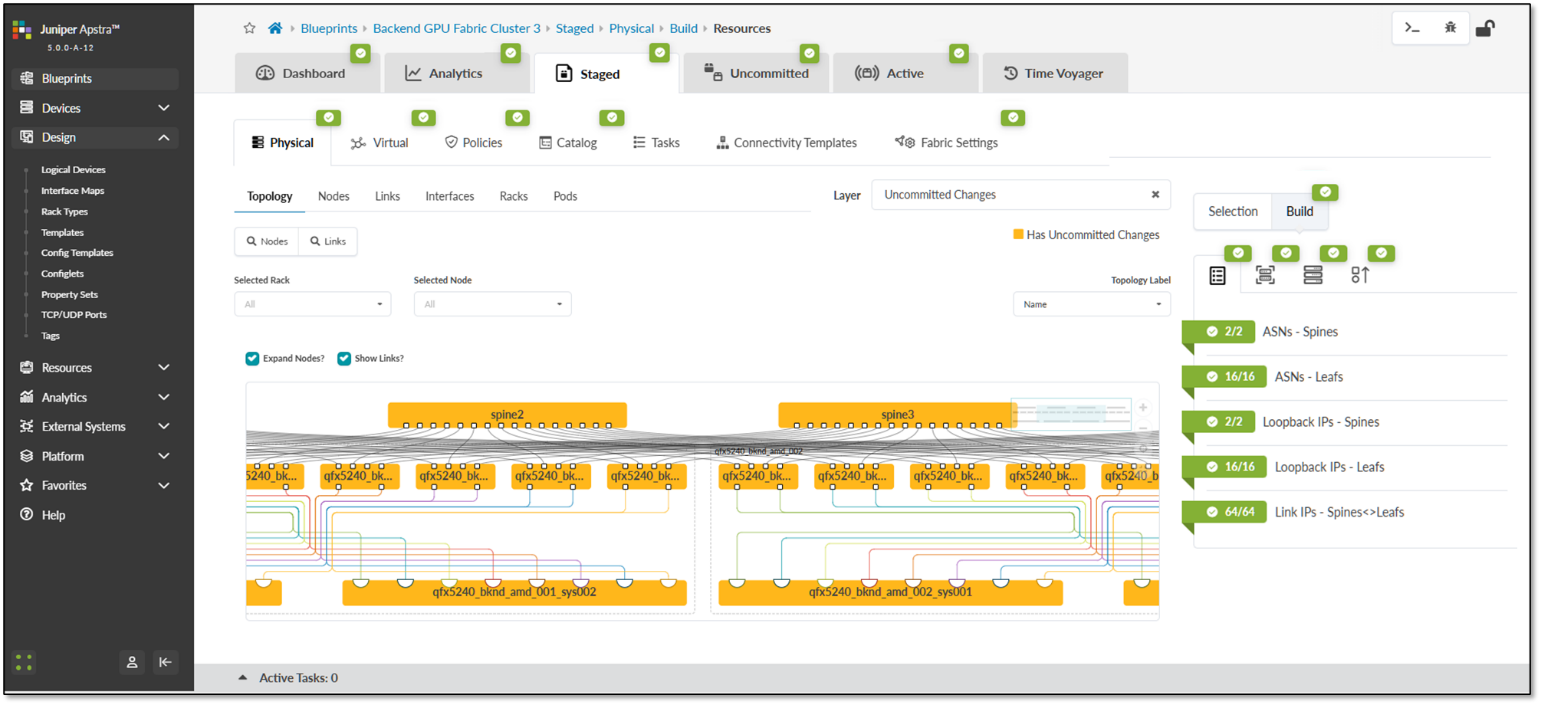

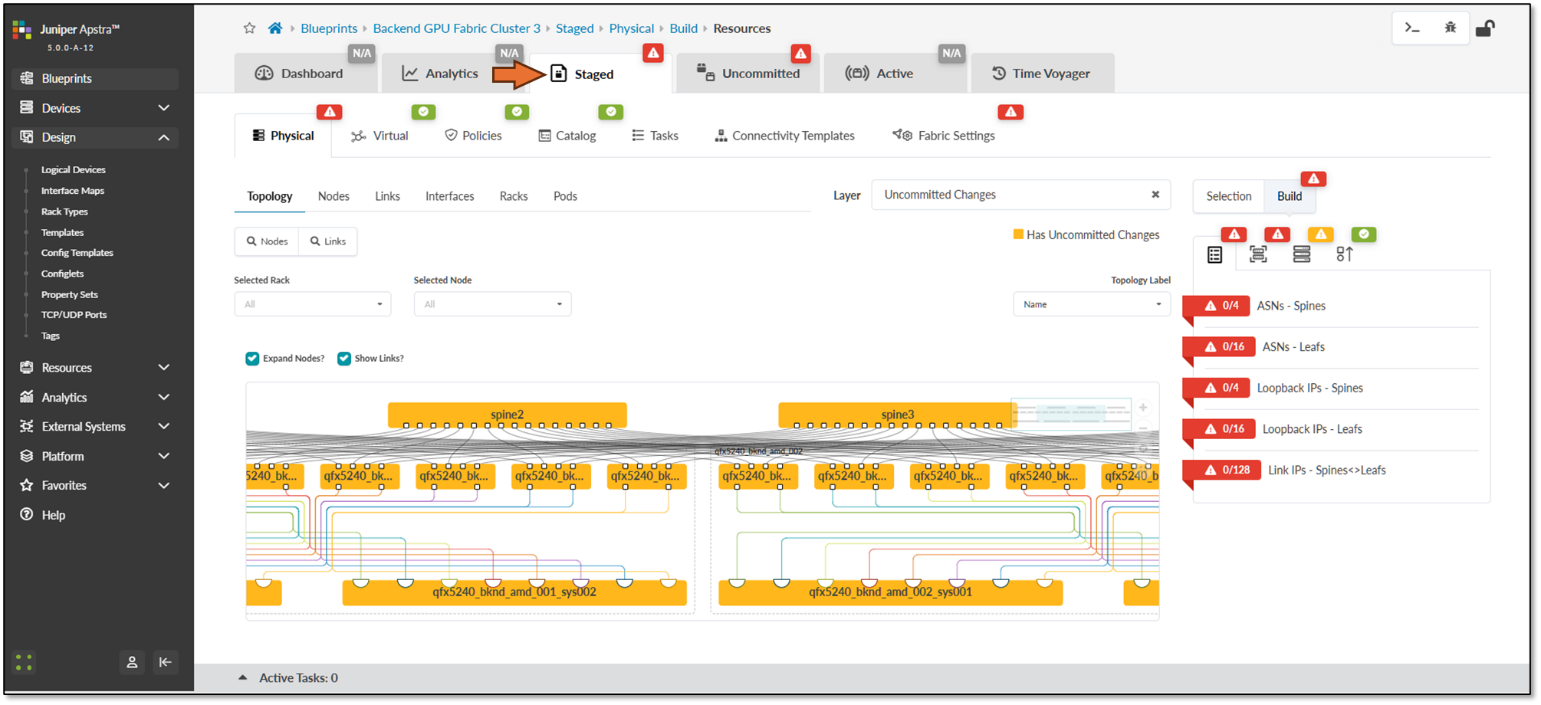

The Staged view, shown in Figure 84, confirms that the topology is correct, but mandatory attributes such as the ASNs and loopback addresses for the spine and leaf nodes, and the addressing for the spine-to-leaf links, must still be provided.

Figure 84: Undeployed Blueprint Dashboard
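Apstra allocates the spine-to-leaf link addresses from the IP pool you select. To illustrate the arithmetic, here is a minimal Python sketch that carves one /31 subnet per link from a pool, assuming /31 point-to-point addressing (a common choice for IP fabrics). The pool `10.0.0.0/24` and the device names are hypothetical, and Apstra's actual allocation order may differ.

```python
import ipaddress
from itertools import product


def allocate_p2p(pool_cidr, links):
    """Assign one /31 per (spine, leaf) link: the first address goes to the
    spine side and the second to the leaf side."""
    subnets = ipaddress.ip_network(pool_cidr).subnets(new_prefix=31)
    plan = {}
    for link in links:
        net = next(subnets, None)
        if net is None:
            raise ValueError(f"pool {pool_cidr} exhausted at link {link}")
        plan[link] = (f"{net[0]}/31", f"{net[1]}/31")
    return plan


links = list(product(["spine1", "spine2"], ["leaf1", "leaf2"]))
for link, (spine_ip, leaf_ip) in allocate_p2p("10.0.0.0/24", links).items():
    print(link, spine_ip, leaf_ip)
```

A /24 pool holds 128 such /31 subnets, so it would cover a fabric with up to 128 spine-to-leaf links.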

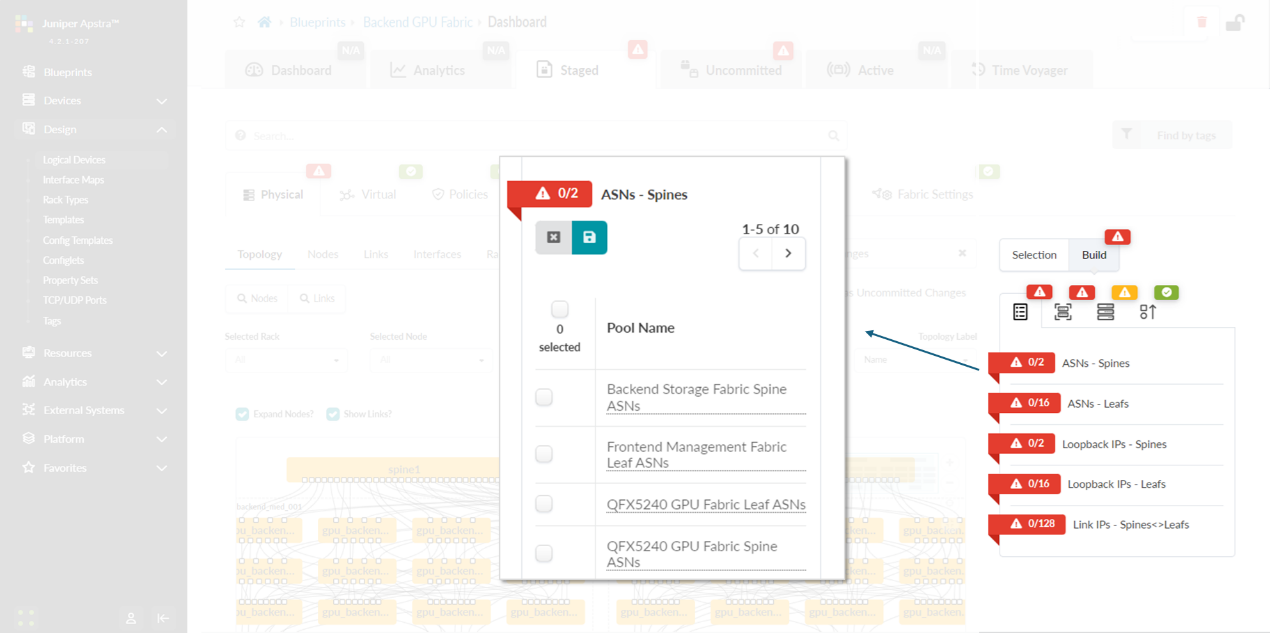

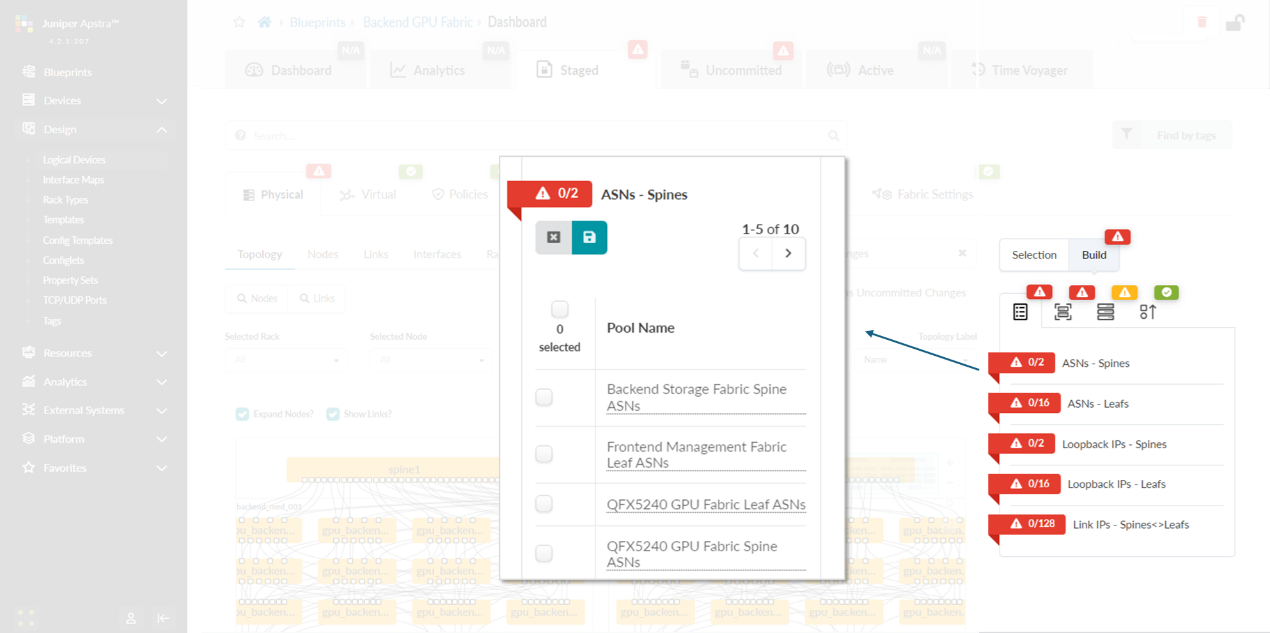

To resolve this, edit each of these attributes and select from the predefined pools of IP addresses and ASNs, as shown in the example in Figure 85.

Figure 85: Selecting ASN Pool for Spine Nodes
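The template's Unique ASN allocation policy means that each spine and leaf receives its own ASN, and each device also needs a loopback address. The Python sketch below mimics that assignment from two pools; the private-ASN range 64512-64599 and the loopback pool `10.1.0.0/24` are hypothetical values, and this is not Apstra's allocation algorithm.

```python
import ipaddress


def allocate_resources(devices, asn_first, asn_last, loopback_cidr):
    """Give each device a unique ASN and a /32 loopback from the pools."""
    asns = iter(range(asn_first, asn_last + 1))
    # hosts() may return a list for very small networks, so wrap it in iter().
    loopbacks = iter(ipaddress.ip_network(loopback_cidr).hosts())
    plan = {}
    for device in devices:
        asn = next(asns, None)
        loopback = next(loopbacks, None)
        if asn is None or loopback is None:
            raise ValueError(f"resource pool exhausted at {device}")
        plan[device] = {"asn": asn, "loopback": f"{loopback}/32"}
    return plan


devices = ["spine1", "spine2", "leaf1", "leaf2"]
for name, res in allocate_resources(devices, 64512, 64599, "10.1.0.0/24").items():
    print(name, res["asn"], res["loopback"])
```

Sizing the pools from the device count of the template avoids running out of ASNs or loopbacks when stripes are added later.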

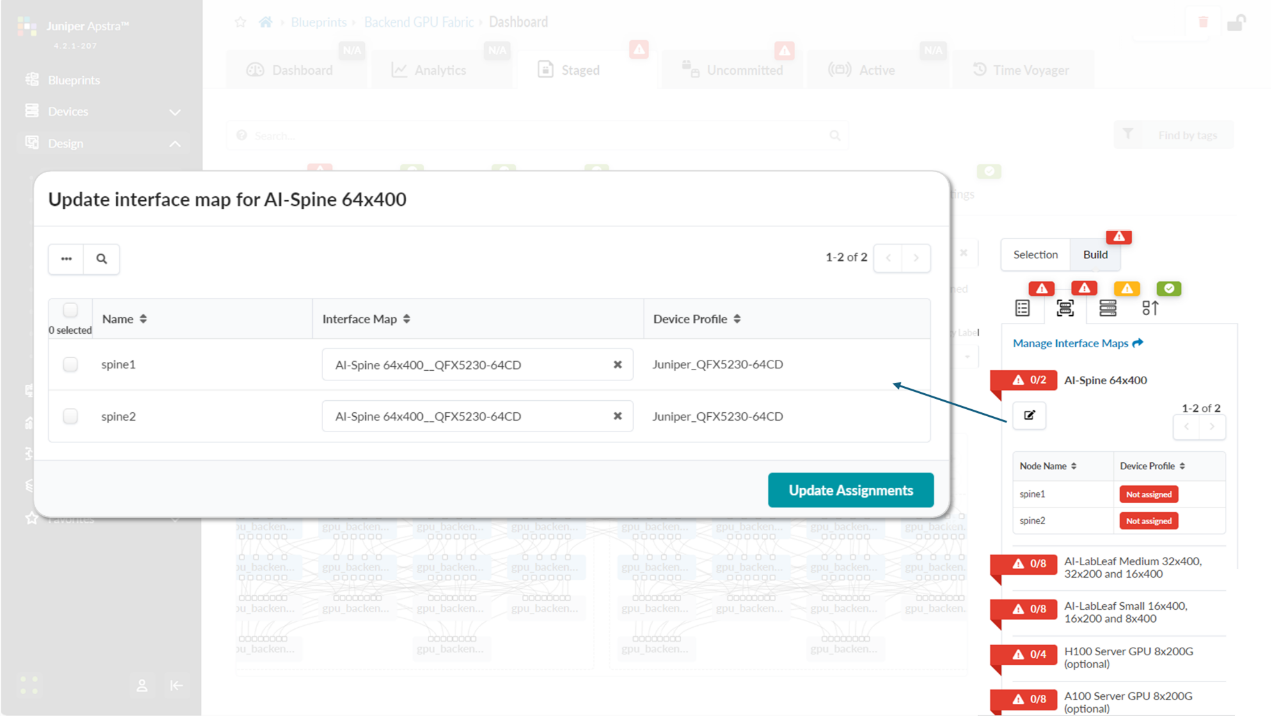

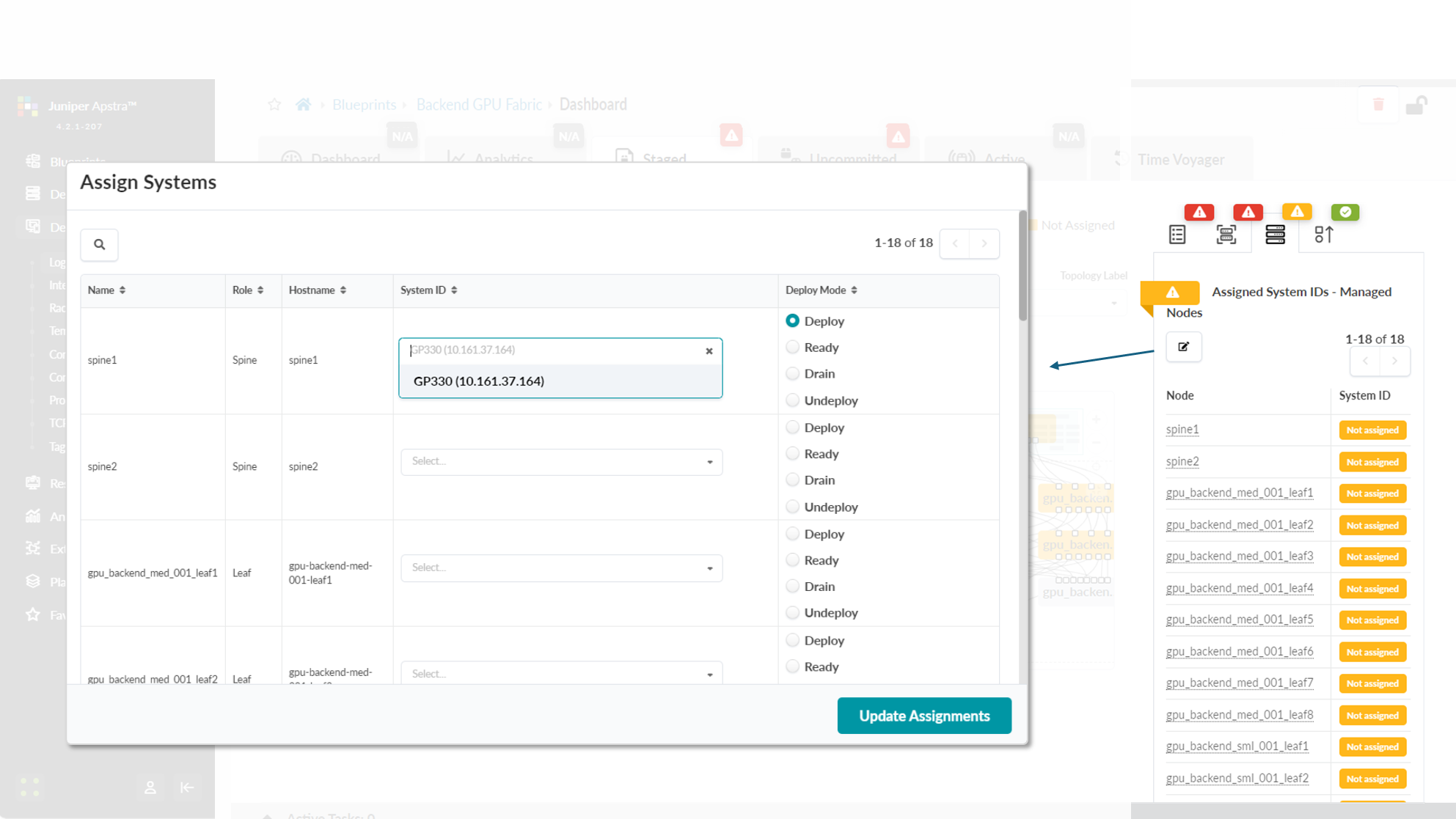

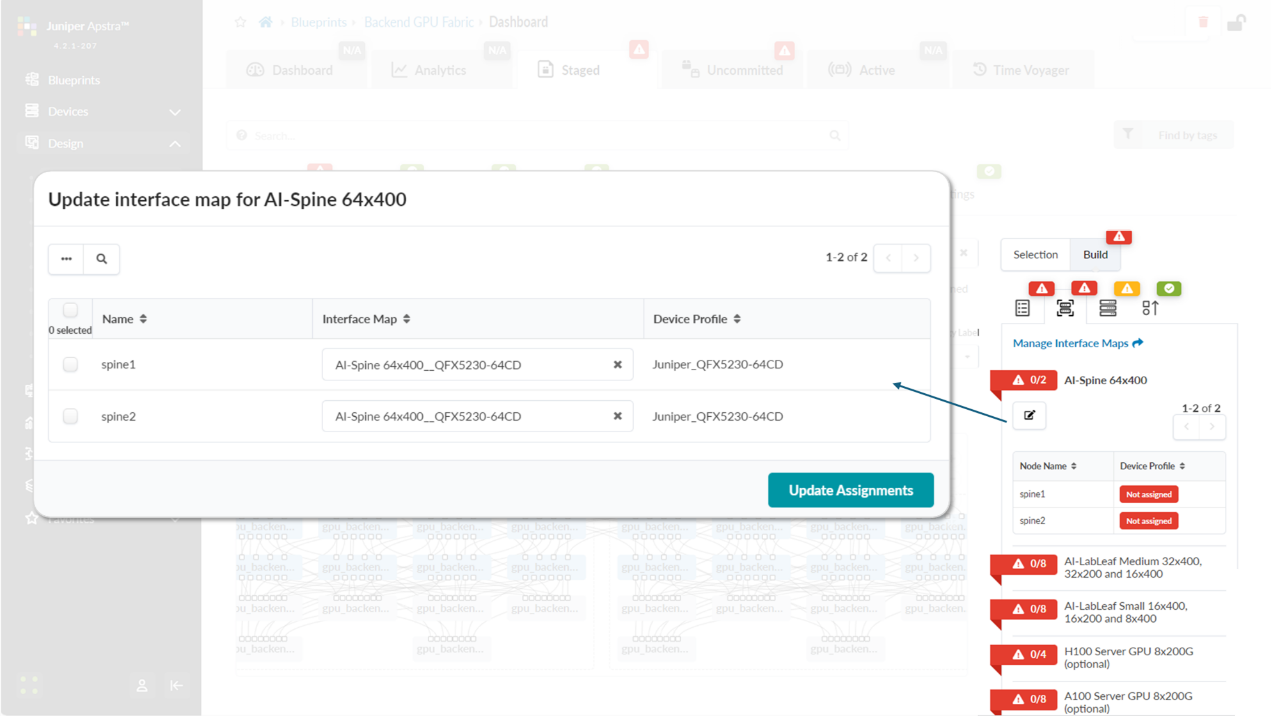

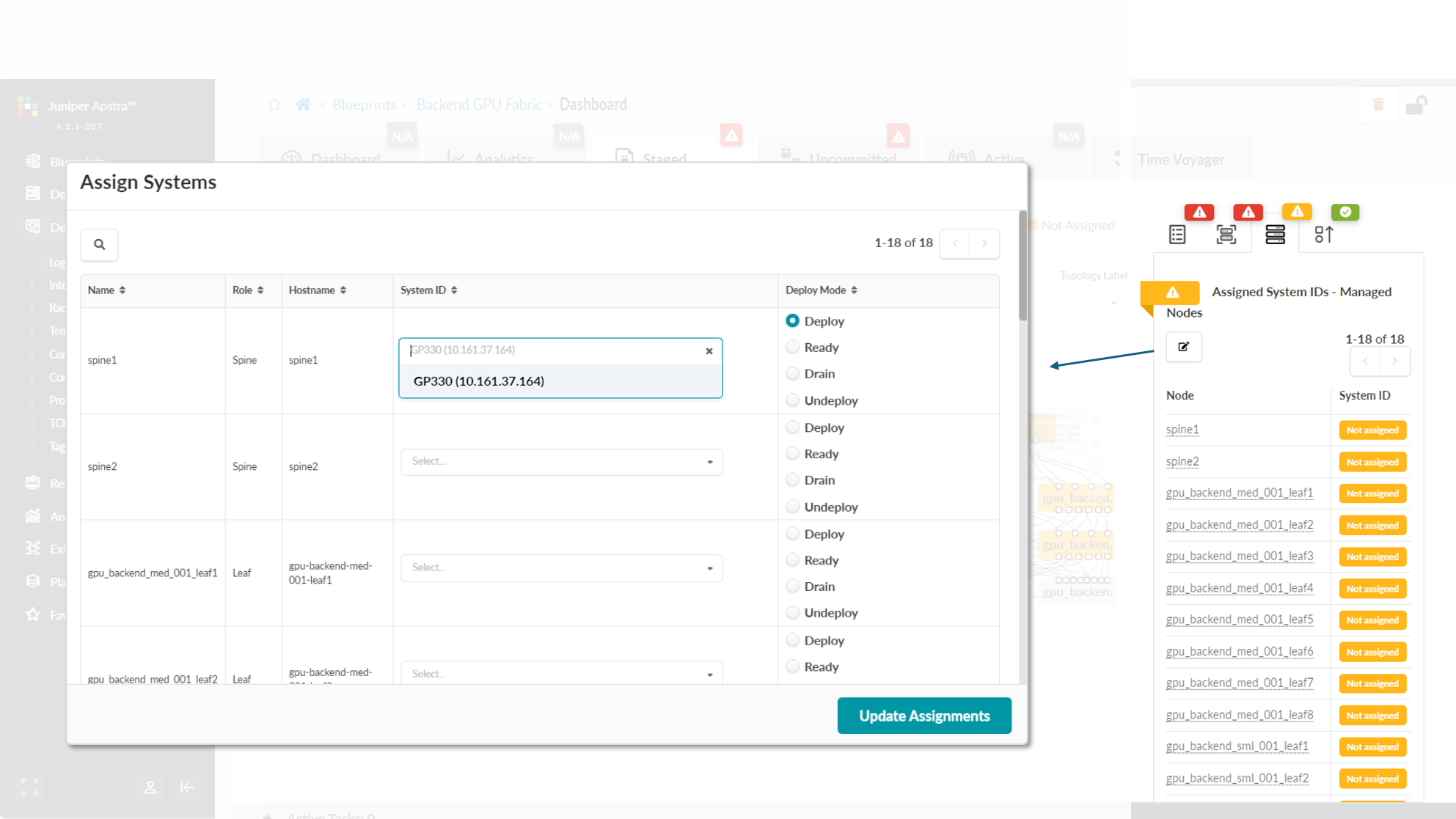

You also need to select the Interface Map for each device role and assign system IDs, as shown in Figures 86 and 87.

Figure 86: Mapping Interface Maps to Spine Nodes

Figure 87: Mapping Spine Nodes to Physical Devices (System IDs)

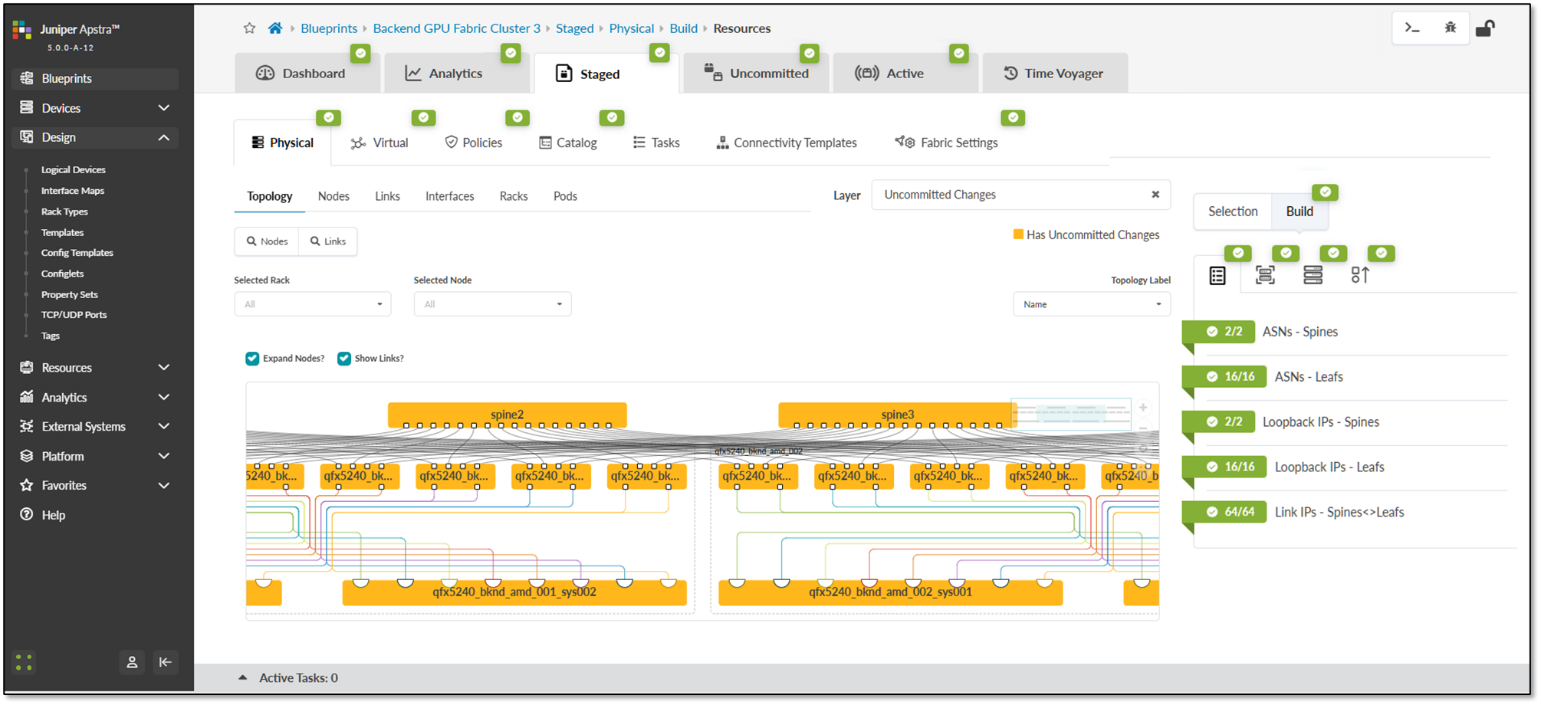

Once all these steps are completed, you can commit the changes, and Apstra will generate and push the vendor and device-type specific configurations to all devices in the blueprint. After this process is complete, the fabric should be successfully deployed, as indicated by the green checkmarks shown in Figure 88.

Figure 88: Active Blueprint.

Step 6: Create Configlets for DCQCN and DLB.

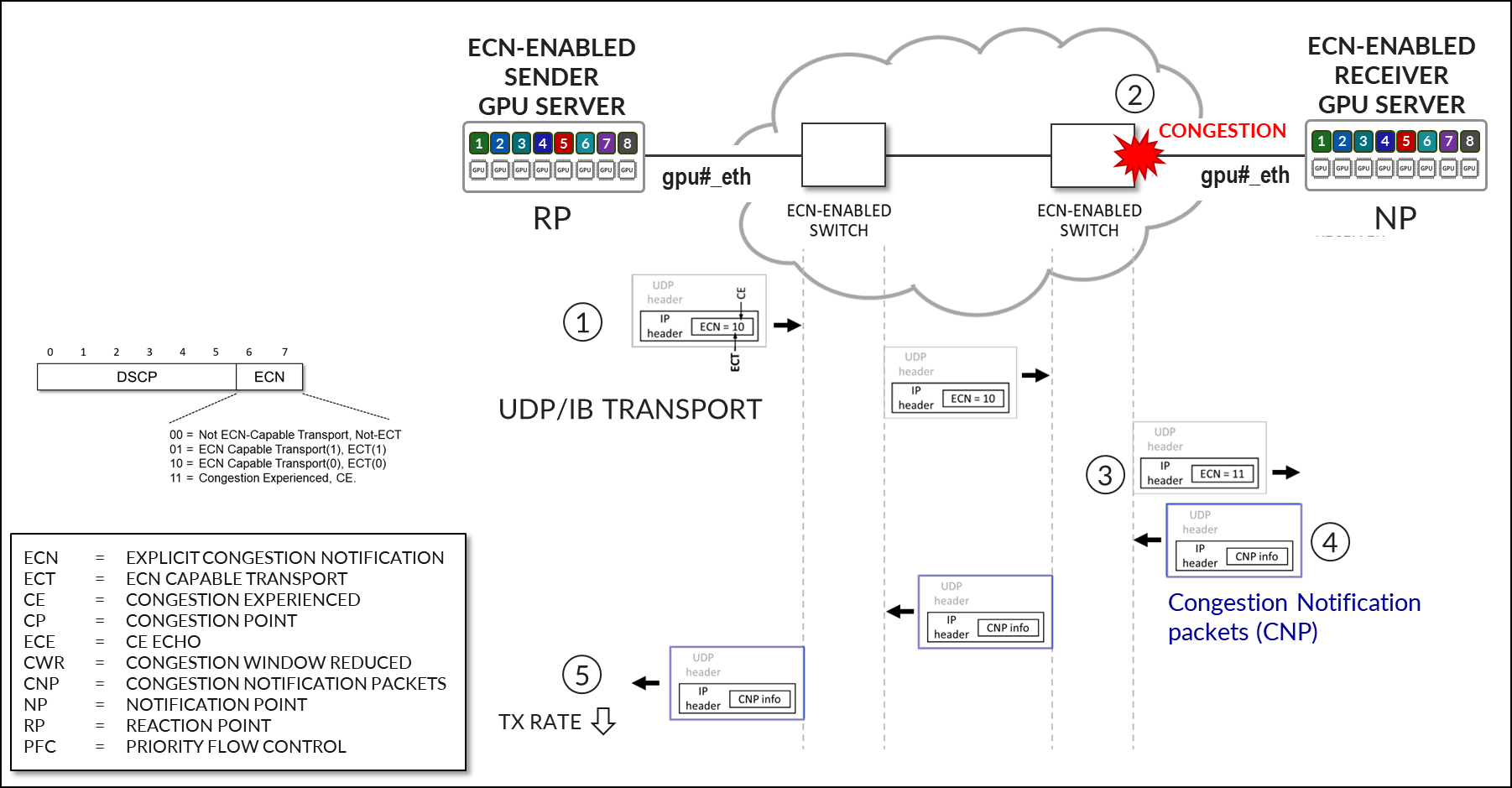

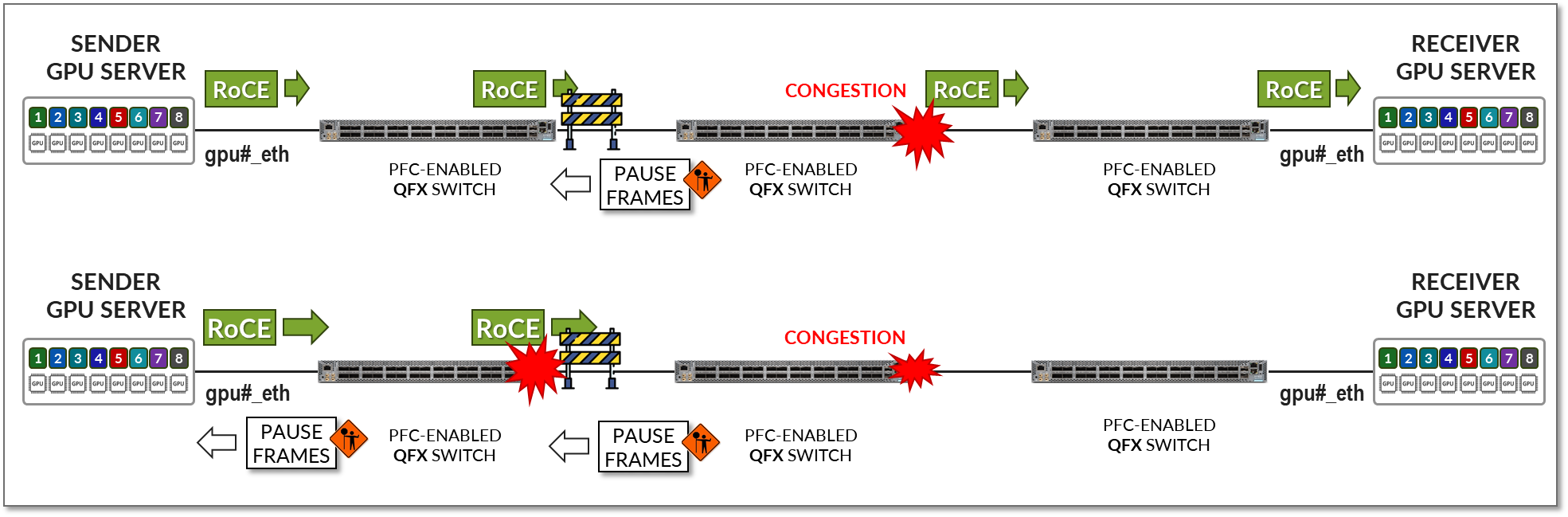

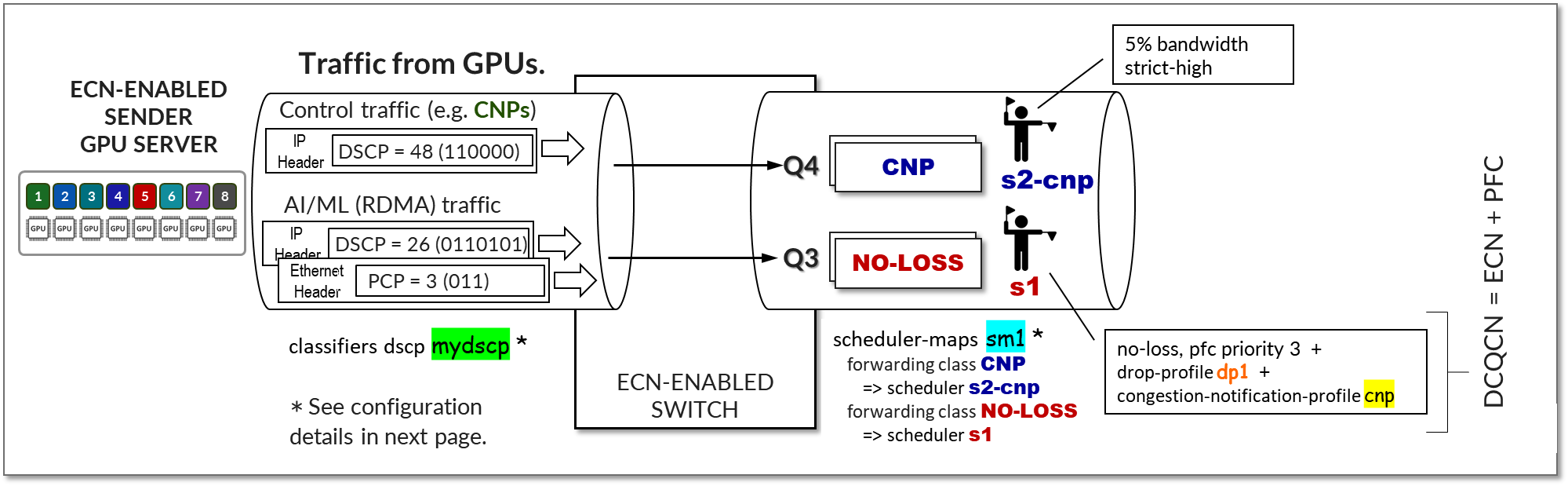

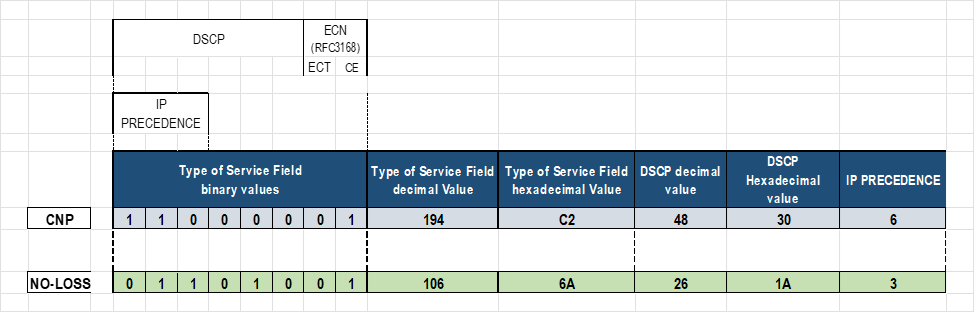

In the Apstra version used for this JVD, features such as ECN, PFC (DCQCN), and DLB are not natively available. Therefore, Apstra configlets should be used to add these features to the configurations before they are deployed to the fabric devices.
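In the QFX leaf and spine configlets in this step, drop profile `dp1` ramps from 0 percent at 55 percent buffer fill to 100 percent at 90 percent fill. Because scheduler `s1` also enables `explicit-congestion-notification`, ECN-capable packets in the NO-LOSS class are expected to be marked along that curve rather than dropped, and those marks are what drive the DCQCN rate reduction on the senders. The Python sketch below is a linear approximation of that curve; Junos builds an interpolated profile from a set of discrete points, so treat the values as illustrative.

```python
def mark_probability(fill_pct, fill_levels=(55, 90), probabilities=(0, 100)):
    """Approximate an interpolated drop profile: the probability (percent) of
    marking an ECN-capable packet, or dropping a non-ECN one, at a given
    queue fill level (percent)."""
    (f_lo, f_hi), (p_lo, p_hi) = fill_levels, probabilities
    if fill_pct <= f_lo:
        return float(p_lo)
    if fill_pct >= f_hi:
        return float(p_hi)
    return p_lo + (p_hi - p_lo) * (fill_pct - f_lo) / (f_hi - f_lo)


for fill in (40, 55, 72.5, 90, 95):
    print(f"fill {fill}% -> mark/drop probability {mark_probability(fill):.1f}%")
```

Raising the first fill level delays marking (more throughput, more queuing); lowering it marks earlier and keeps queues shorter.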

The configlet used for the DCQCN and DLB features on the QFX leaf nodes is as follows:

```
/* DLB configuration for Thor2 NIC adapter */
forwarding-options {
    hash-key {
        family inet {
            layer-3;
            layer-4;
        }
    }
    enhanced-hash-key {
        ecmp-dlb {
            flowlet {
                inactivity-interval 128;
                flowset-table-size 2048;
            }
            ether-type {
                ipv4;
                ipv6;
            }
            sampling-rate 1000000;
        }
    }
}
protocols {
    bgp {
        global-load-balancing {
            load-balancer-only;
        }
    }
}
/* DCQCN configuration */
class-of-service {
    classifiers {
        dscp mydscp {
            forwarding-class CNP {
                loss-priority low code-points 110000;
            }
            forwarding-class NO-LOSS {
                loss-priority low code-points 011010;
            }
        }
    }
    drop-profiles {
        dp1 {
            interpolate {
                fill-level [ 55 90 ];
                drop-probability [ 0 100 ];
            }
        }
    }
    shared-buffer {
        ingress {
            buffer-partition lossless {
                percent 66;
                dynamic-threshold 10;
            }
            buffer-partition lossless-headroom {
                percent 24;
            }
            buffer-partition lossy {
                percent 10;
            }
        }
        egress {
            buffer-partition lossless {
                percent 66;
            }
            buffer-partition lossy {
                percent 10;
            }
        }
    }
    forwarding-classes {
        class CNP queue-num 3;
        class NO-LOSS queue-num 4 no-loss pfc-priority 3;
    }
    congestion-notification-profile {
        cnp {
            input {
                dscp {
                    code-point 011010 {
                        pfc;
                    }
                }
            }
            output {
                ieee-802.1 {
                    code-point 011 {
                        flow-control-queue 4;
                    }
                }
            }
        }
    }
    interfaces {
        et-* {
            congestion-notification-profile cnp;
            scheduler-map sm1;
            unit * {
                classifiers {
                    dscp mydscp;
                }
            }
        }
    }
    scheduler-maps {
        sm1 {
            forwarding-class CNP scheduler s2-cnp;
            forwarding-class NO-LOSS scheduler s1;
        }
    }
    schedulers {
        s1 {
            drop-profile-map loss-priority any protocol any drop-profile dp1;
            explicit-congestion-notification;
        }
        s2-cnp {
            transmit-rate percent 5;
            priority strict-high;
        }
    }
}
```

The configlet used for the DCQCN and DLB features on the QFX spine nodes is as follows:

```
/* DLB configuration */
forwarding-options {
    hash-key {
        family inet {
            layer-3;
            layer-4;
        }
    }
    enhanced-hash-key {
        ecmp-dlb {
            flowlet {
                inactivity-interval 128;
                flowset-table-size 2048;
            }
            ether-type {
                ipv4;
                ipv6;
            }
            sampling-rate 1000000;
        }
    }
}
protocols {
    bgp {
        global-load-balancing {
            helper-only;
        }
    }
}
/* DCQCN configuration */
class-of-service {
    classifiers {
        dscp mydscp {
            forwarding-class CNP {
                loss-priority low code-points 110000;
            }
            forwarding-class NO-LOSS {
                loss-priority low code-points 011010;
            }
        }
    }
    drop-profiles {
        dp1 {
            interpolate {
                fill-level [ 55 90 ];
                drop-probability [ 0 100 ];
            }
        }
    }
    shared-buffer {
        ingress {
            buffer-partition lossless {
                percent 66;
                dynamic-threshold 10;
            }
            buffer-partition lossless-headroom {
                percent 24;
            }
            buffer-partition lossy {
                percent 10;
            }
        }
        egress {
            buffer-partition lossless {
                percent 66;
            }
            buffer-partition lossy {
                percent 10;
            }
        }
    }
    forwarding-classes {
        class CNP queue-num 3;
        class NO-LOSS queue-num 4 no-loss pfc-priority 3;
    }
    congestion-notification-profile {
        cnp {
            input {
                dscp {
                    code-point 011010 {
                        pfc;
                    }
                }
            }
            output {
                ieee-802.1 {
                    code-point 011 {
                        flow-control-queue 4;
                    }
                }
            }
        }
    }
    interfaces {
        et-* {
            congestion-notification-profile cnp;
            scheduler-map sm1;
            unit * {
                classifiers {
                    dscp mydscp;
                }
            }
        }
    }
    scheduler-maps {
        sm1 {
            forwarding-class CNP scheduler s2-cnp;
            forwarding-class NO-LOSS scheduler s1;
        }
    }
    schedulers {
        s1 {
            drop-profile-map loss-priority any protocol any drop-profile dp1;
            explicit-congestion-notification;
        }
        s2-cnp {
            transmit-rate percent 5;
            priority strict-high;
        }
    }
}
```

The configuration used for the DCQCN and ALB features on the PTX10008 spine nodes is as follows:

Note: GLB is not an option when the PTX10008 is used as a spine node; the configuration below uses adaptive load balancing (ALB) instead.

/* ALB configuration */

policy-options {

policy-statement ALB-TEST {

term 1 {

from {

route-filter 10.200.1.0/24 exact;

}

then {

load-balance adaptive;

}

}

}

}

routing-options {

forwarding-table {

export ALB-TEST;

}

}

chassis {

ecmp-alb {

tolerance 20;

}

interoperability express5-enhanced;

}

/* DCQCN configuration */

classifiers {

dscp rdma-dscp {

forwarding-class rdma-cnp {

loss-priority low code-points 110000;

}

forwarding-class rdma-data {

loss-priority low code-points 011010;

}

}

}

drop-profiles {

dp-ecn {

fill-level 3 drop-probability 100;

}

}

forwarding-classes {

class network-control queue-num 3;

class other queue-num 1;

class rdma-cnp queue-num 0;

class rdma-data queue-num 2 no-loss;

}

monitoring-profile {

myMon {

export-filters qall {

peak-queue-length {

percent 100;

}

queue [ 3 1 2 ];

}

}

}

interfaces {

et-* {

scheduler-map sched-map-aiml;

monitoring-profile myMon;

unit * {

classifiers {

dscp rdma-dscp;

}

}

}

}

scheduler-maps {

sched-map-aiml {

forwarding-class network-control scheduler sched-nc;

forwarding-class other scheduler sched-other;

forwarding-class rdma-cnp scheduler sched-rdma-cnp;

forwarding-class rdma-data scheduler sched-rdma-data;

}

}

options {

hierarchical-scheduler-disable;

}

schedulers {

sched-nc {

transmit-rate percent 1;

priority medium-high;

}

sched-other {

priority low;

}

sched-rdma-cnp {

transmit-rate percent 1;

priority high;

}

sched-rdma-data {

transmit-rate percent 97;

buffer-size temporal 400;

priority medium-high;

drop-profile-map loss-priority any protocol any drop-profile dp-ecn;

explicit-congestion-notification;

ecn-enhanced {

head-marking;

}

}

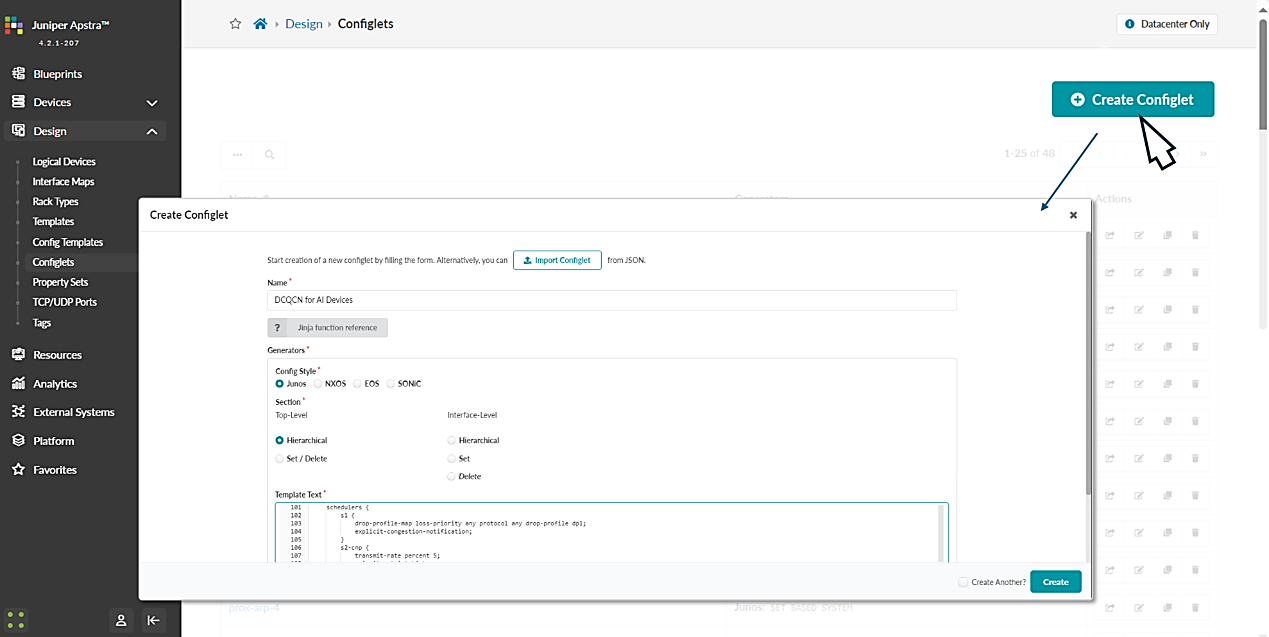

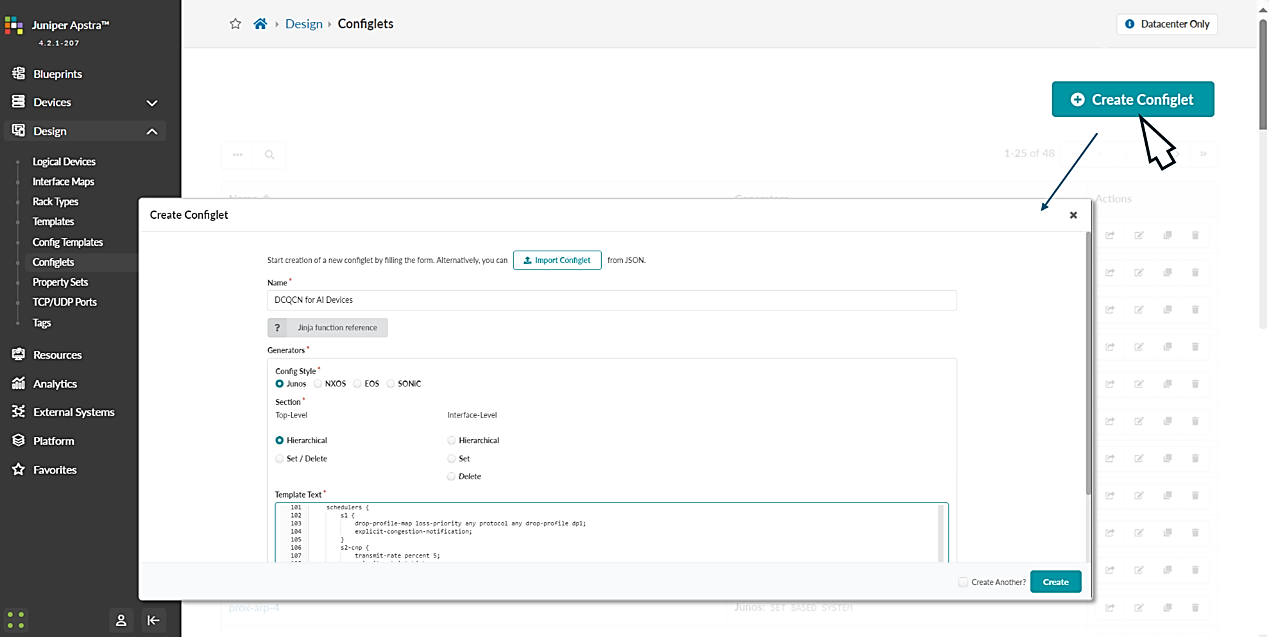

}To create these configlets:

- Navigate to Design -> Configlets and click Create Configlet.

- Provide a name for the configlet, select the operating system, vendor, and configuration mode, and paste the corresponding configuration snippet from above into the template text box, as shown in Figure 89.

Figure 89: DCQCN Configlet Creation in Apstra

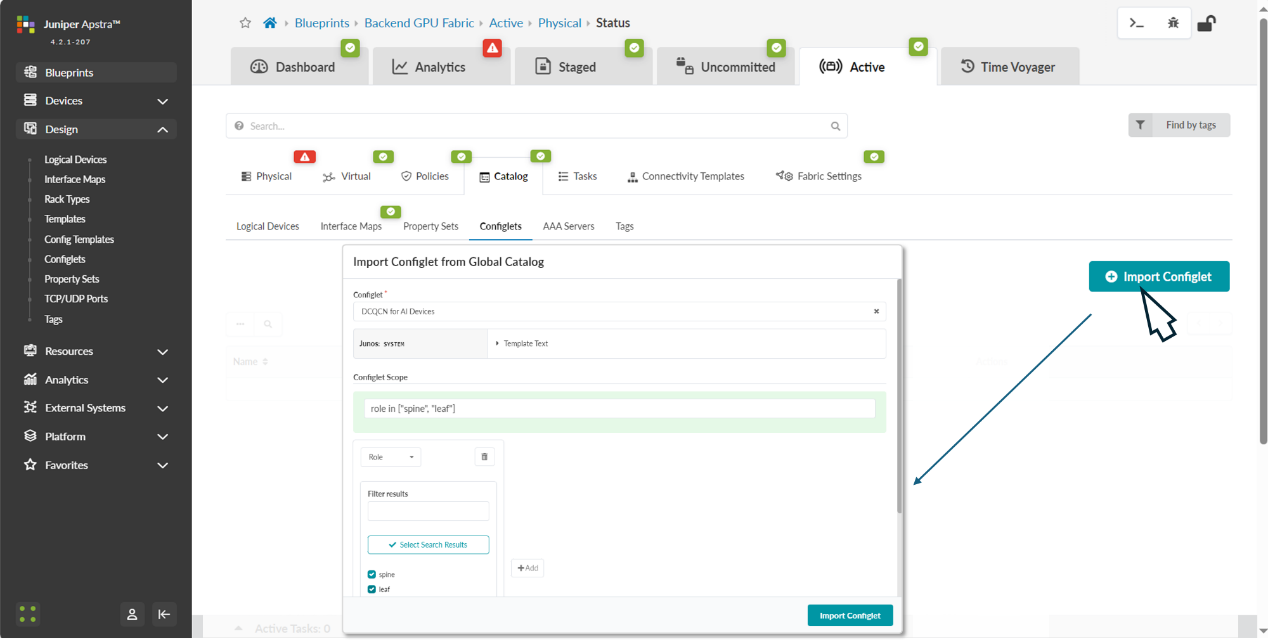

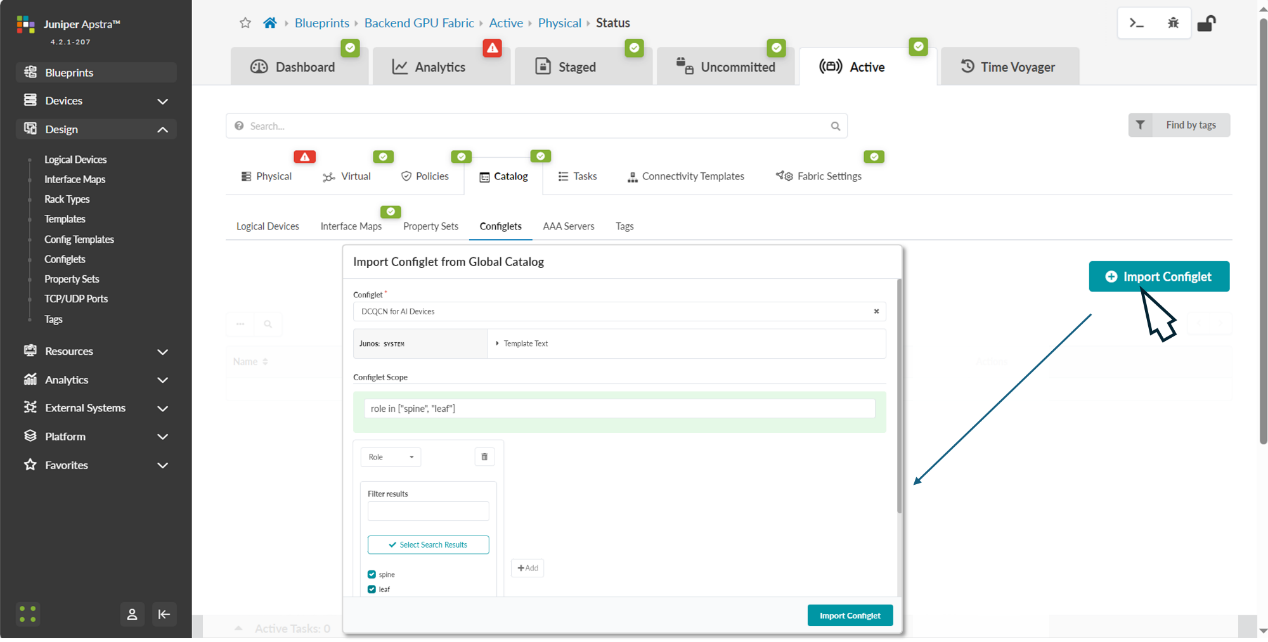
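Before the configlets are applied, it can be useful to confirm that their code points agree with one another. The Python sketch below decodes the 6-bit DSCP values used by classifier `mydscp` in the QFX leaf and spine configlets (CNP on 110000, NO-LOSS on 011010), checks that the PFC-enabled input code point in profile `cnp` matches the NO-LOSS class, and checks that the 3-bit IEEE 802.1p code point 011 matches `pfc-priority 3`. The values are copied from the configlets; the constant names and dictionary layout are only an illustration.

```python
# Values copied from the QFX leaf and spine configlets.
CLASSIFIER = {"CNP": "110000", "NO-LOSS": "011010"}  # dscp mydscp
PFC_INPUT_DSCP = "011010"  # congestion-notification-profile cnp input
PFC_OUTPUT_8021P = "011"  # cnp output ieee-802.1 code-point
NO_LOSS_PFC_PRIORITY = 3  # class NO-LOSS ... pfc-priority 3


def dscp_summary(classifier):
    """Map each forwarding class to (DSCP decimal value, IP ToS byte with
    the two ECN bits cleared)."""
    return {fc: (int(bits, 2), int(bits, 2) << 2) for fc, bits in classifier.items()}


summary = dscp_summary(CLASSIFIER)
print(summary)  # {'CNP': (48, 192), 'NO-LOSS': (26, 104)}

# PFC must be enabled on the same DSCP that the NO-LOSS class uses...
assert PFC_INPUT_DSCP == CLASSIFIER["NO-LOSS"]
# ...and the 802.1p priority must match the class's pfc-priority.
assert int(PFC_OUTPUT_8021P, 2) == NO_LOSS_PFC_PRIORITY
```

The decimal values (DSCP 48 for CNP and DSCP 26 for lossless data) are the form usually needed when matching these classes elsewhere in the fabric.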

Step 7: Apply the configlets previously created to the Blueprint.

Apply each configlet to the devices in the corresponding role (leaf or spine) within the blueprint.

Navigate back to the blueprint dashboard, and then go to Staged -> Catalog -> Import.

Select the configlet you want to apply, and the device role where you want to apply it.

Figure 90: Applying DCQCN Configlets to Devices in Apstra

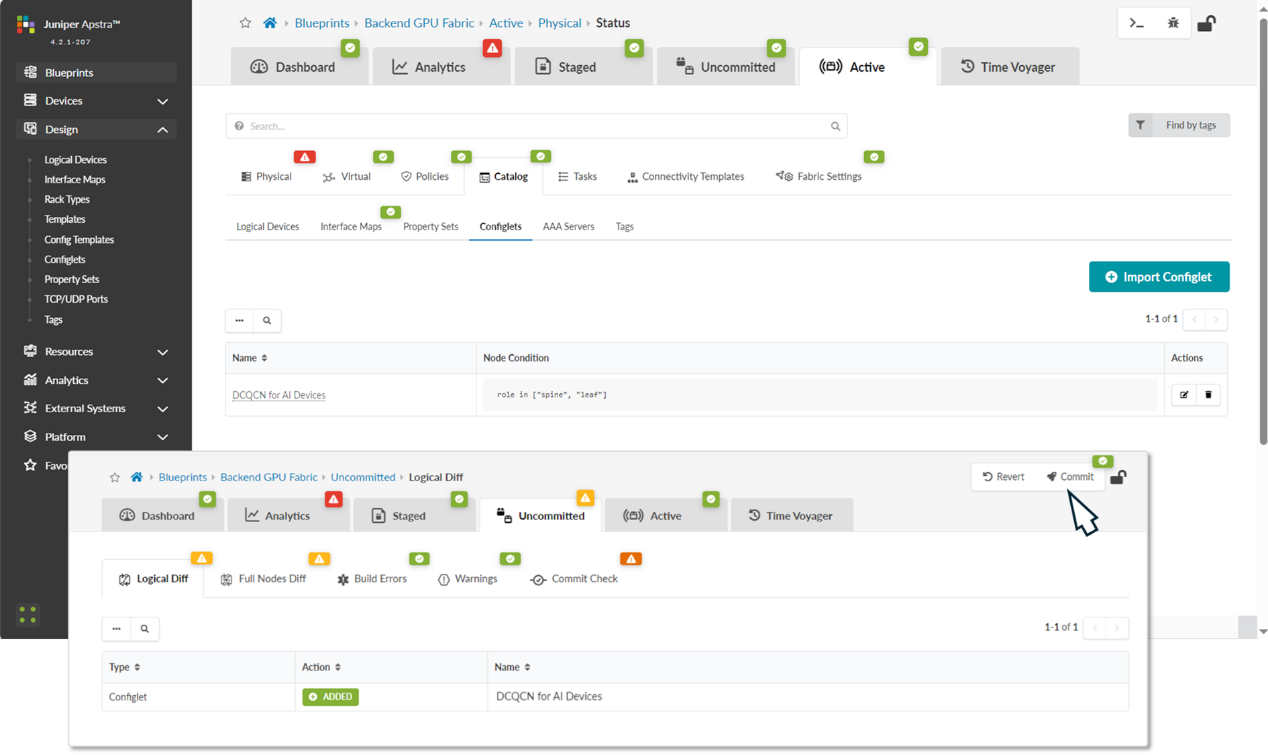

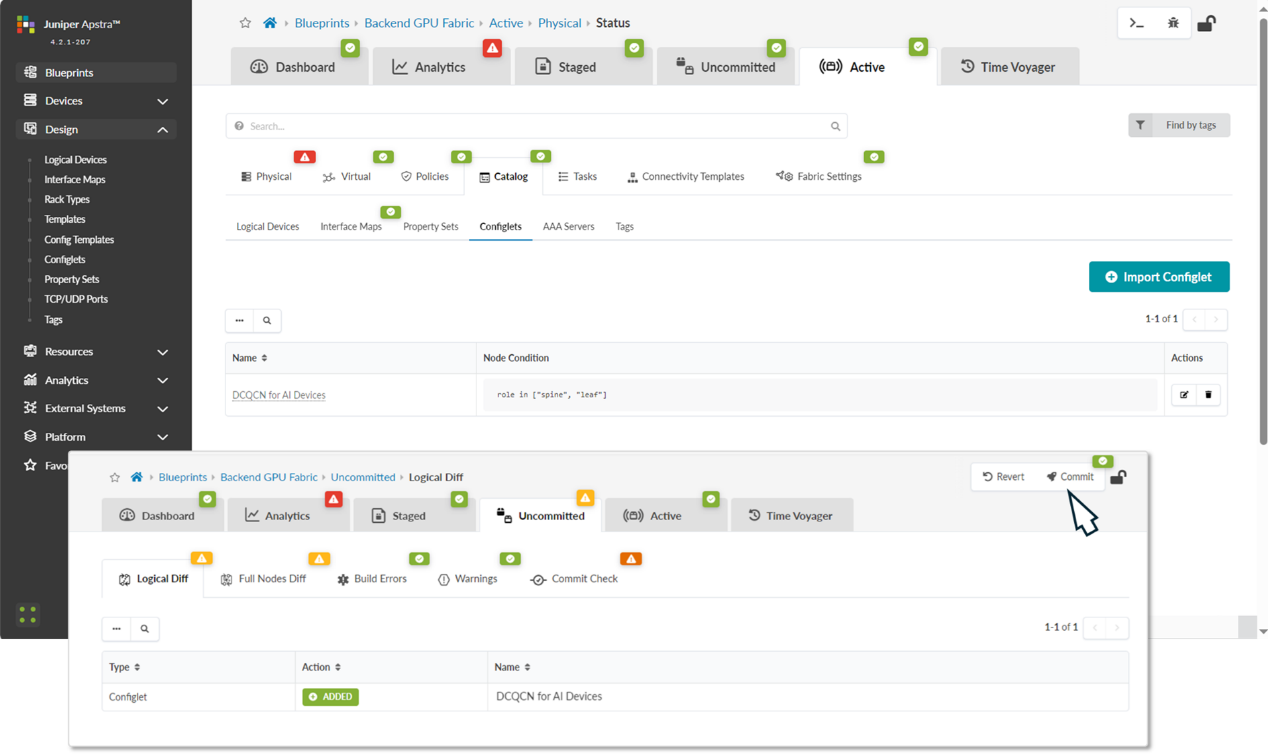

After successfully importing the configlet into the blueprint, it should be listed in the catalog. You need to commit the changes for the configuration to be deployed to the devices.

Figure 91: Applying DCQCN Configlets to Devices in Apstra

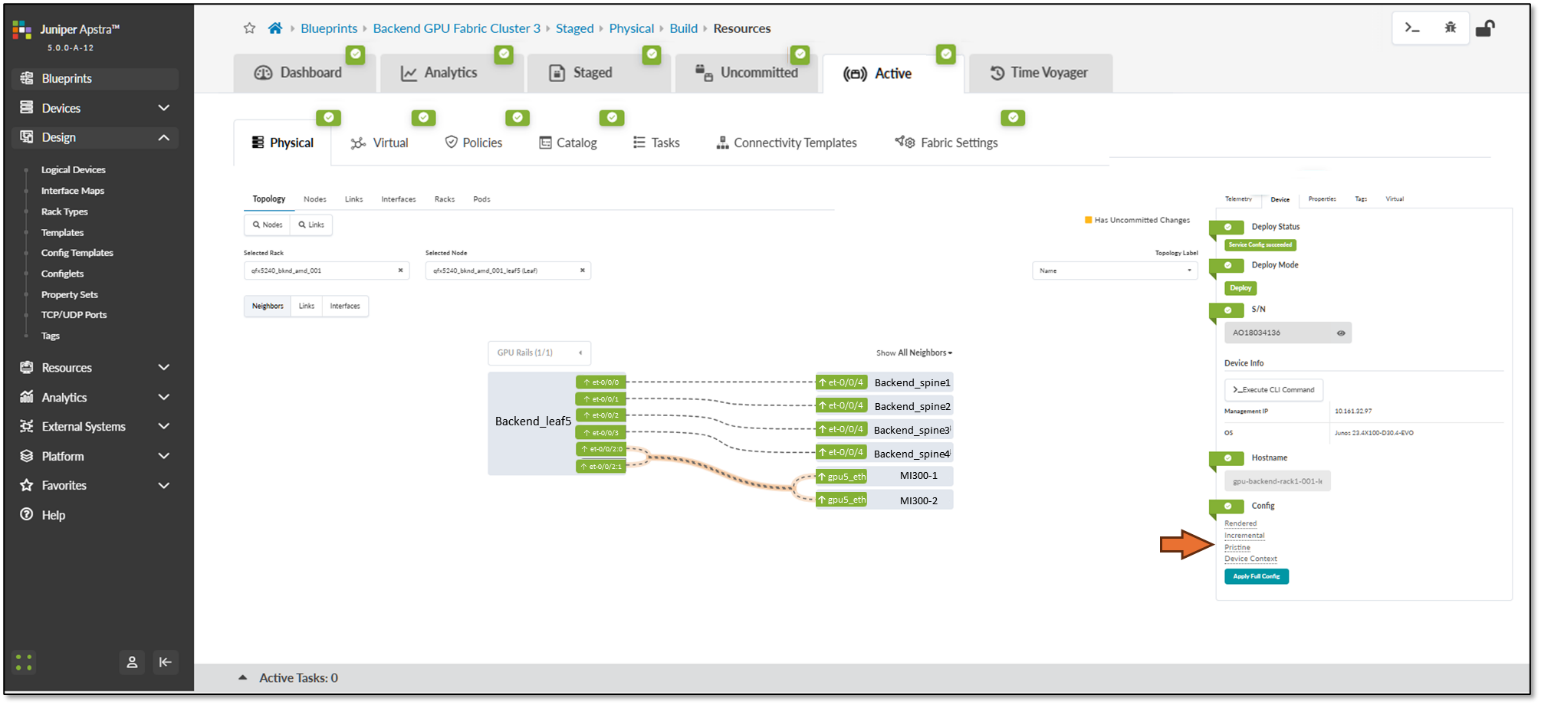

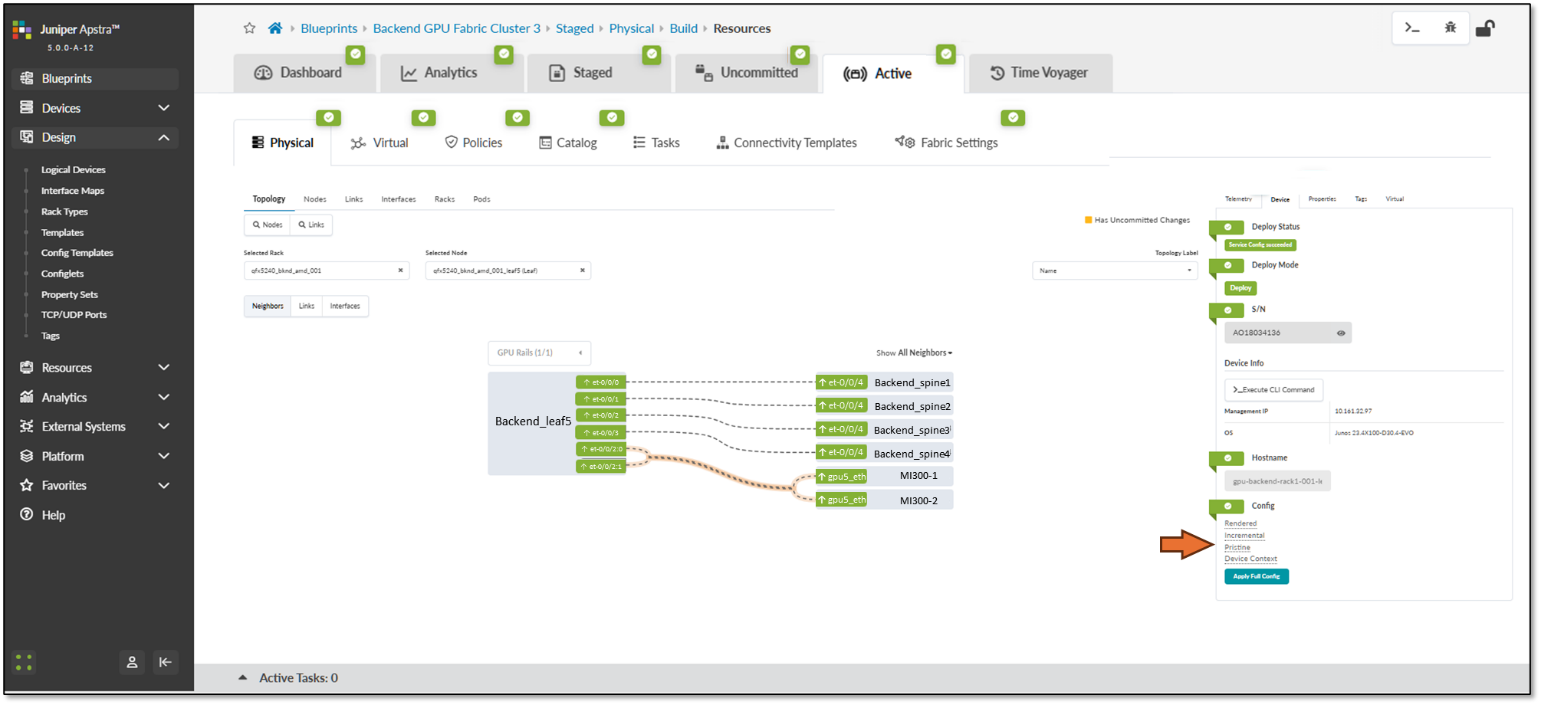

You can quickly check the status and the deployed configuration by clicking on each device in the Active tab and selecting the rendered config under the Device tab on the right side.

Figure 92: Device configuration verification in Apstra
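When many devices need checking, the rendered configuration can also be reviewed programmatically. The Python sketch below assumes you have copied a device's rendered configuration (hierarchical format) as text and reports which expected statements from the leaf configlet do not appear as a line. The `LEAF_EXPECTED` list is a hypothetical selection, and this is a plain text search, not an Apstra API call.

```python
LEAF_EXPECTED = [
    "load-balancer-only;",
    "congestion-notification-profile cnp;",
    "scheduler-map sm1;",
    "explicit-congestion-notification;",
]


def missing_statements(rendered_config, expected):
    """Return the expected statements that do not appear as a line."""
    present = {line.strip() for line in rendered_config.splitlines()}
    return [stmt for stmt in expected if stmt not in present]


sample = """
protocols {
    bgp {
        global-load-balancing {
            load-balancer-only;
        }
    }
}
"""
print(missing_statements(sample, LEAF_EXPECTED))
```

An empty result means every listed statement was rendered; anything reported points to a configlet that was not imported for that role or was not committed.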

https://rocm.blogs.amd.com/software-tools-optimization/amd-smi-overview/README.html