Juniper Mist NAC Architecture

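Watch the videos to get familiar with the architecture behind Juniper Mist Access Assurance. Learn more about microservices and how Juniper Mist uses them to provide high availability and scalability.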

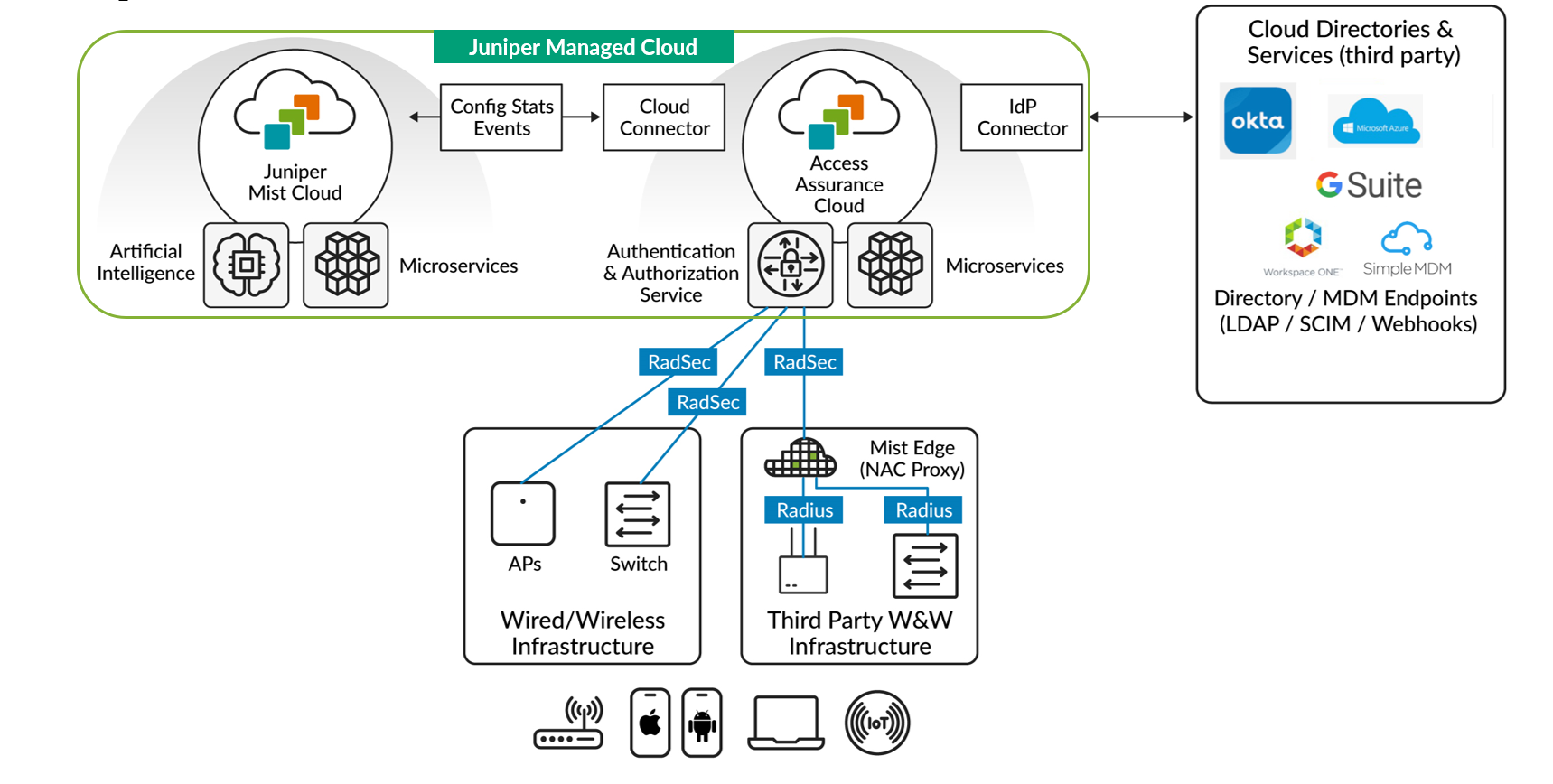

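Juniper Mist Access Assurance uses a microservices architecture. This architecture prioritizes uptime, redundancy, and automatic scaling, which enables optimized network connectivity across wired, wireless, and WAN deployments.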

Watch the following video to learn about the Mist Access Assurance architecture:

Architecture. So what we've done is actually we've separated the Authentication Service from the Mist cloud that you all know. We now have authentication service as its own separate cloud, actually spread out around the globe in different pods or points of presence, so we'll talk about that a little bit later on.

But what we have here is an authentication service cloud that has its own set of microservices, where each and every feature, each and every component has its own pool of microservices, whether it's responsible for enforcing policies, for actually doing the user device authentication, keeping state of sessions, keeping the databases of all the endpoints, records, or having identity providers' connectors or cloud connectors back to the Mist cloud.

All the authentication requests, if they're coming from the Mist managed infrastructure, whether it's a Mist AP or a Juniper EX switch, they're automatically wrapped into a secure TLS-encrypted RadSec tunnel going back to our authentication service.

And from there, once the authentication has happened, the Mist authentication service will have connections to third-party identity sources like Okta or Azure AD or Ping Identity. Or it could be an MDM provider, such as Microsoft Intune or Jamf, just to get more context, more visibility in terms of who is trying to connect and what type of device we're dealing with.

And what the Mist authentication service cloud will do is it will actually do all the heavy lifting, all the authentication. But it will send all the visibility metadata, all the session information, all the events, all the statistics back to the Mist cloud. So this is how you get all the visibility, all the end-to-end connection experience in one place, and you can manage everything from there.

In addition to that, when we're dealing with third-party network infrastructure-- say you have a Cisco wired switch. You have an Aruba controller or a third-party vendor AP or a switch. The way we would integrate there is we would leverage our Mist Edge application platform that would function as the authentication proxy component.

What you could do is you could take your third-party infrastructure point, point it via RADIUS to Mist Edge. And from there, Mist Edge will convert it automatically to secure RadSec and then perform the authentication. This way, if you look at this architecture, we're really bringing the microservices architecture down to the authentication service.

All of that gives you the performance that is generally associated with microservices clouds. It also gives you the capability of getting feature updates on a biweekly basis and security patches as and when they're needed, without any downtime to your network or to the functionality that this brings.

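Juniper Mist Access Assurance enhances its authentication service by integrating external directory services (such as Google Workspace, Microsoft Entra ID, and Okta Identity) and mobile device management (MDM) providers (such as Jamf and Microsoft Intune). This integration helps identify users and devices accurately, and it strengthens security by granting network access only to verified, trusted identities.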

Figure 1 shows the framework of Mist Access Assurance network access control (NAC).

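The Juniper Mist authentication service is separate from the Juniper Mist cloud and runs as an independent cloud service. The authentication and authorization services are distributed across points of presence around the globe to improve performance and reliability.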

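The Juniper Mist authentication service uses a microservices approach. That is, a dedicated group, or pool, of microservices manages the function of each service component, such as policy enforcement or user and device authentication. Likewise, individual microservices manage each additional task, such as session management, endpoint database maintenance, and connectivity to the Juniper Mist cloud.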

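Devices managed by the Juniper Mist cloud, such as Juniper® Series of High-Performance Access Points or Juniper Networks® EX Series Switches, send authentication requests to the Juniper Mist authentication service. These requests are automatically encrypted using RADIUS over TLS (RadSec) and sent to the authentication service through a secure Transport Layer Security (TLS) tunnel.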

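The Mist authentication service processes these requests and then connects to external directory services (Google Workspace, Microsoft Entra ID (formerly Azure AD), Okta Identity, and others) and to PKI and MDM providers (Jamf, Microsoft Intune, and others). The purpose of these connections is to further verify the users and devices that are trying to connect to the network and to provide context about them.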

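In addition to handling authentication, the Juniper Mist authentication service relays key metadata, session information, and analytics data back to the Juniper Mist cloud. This data sharing gives users end-to-end visibility and centralized management.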

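We use the Juniper Mist Edge platform as an authentication proxy to integrate third-party network infrastructure with Juniper Mist Access Assurance. The third-party infrastructure communicates with the Juniper Mist Edge platform through RADIUS. In turn, the Juniper Mist Edge platform uses RadSec to secure the communication and then performs the authentication.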
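The proxy step described above can be illustrated with a minimal sketch, assuming only what the RADIUS and RadSec standards define: the Access-Request packet layout from RFC 2865 and TCP port 2083 from RFC 6614. The function names `build_access_request` and `forward_over_radsec` are hypothetical and are not part of any Juniper Mist API. A real Access-Request would also carry credential or EAP attributes, and RadSec deployments commonly use mutual TLS with client certificates, which this sketch omits.

```python
import os
import socket
import ssl
import struct

RADSEC_PORT = 2083      # TCP port registered for RadSec (RFC 6614)
ACCESS_REQUEST = 1      # RADIUS packet code for Access-Request (RFC 2865)
ATTR_USER_NAME = 1      # RADIUS attribute type for User-Name (RFC 2865)


def encode_attribute(attr_type: int, value: bytes) -> bytes:
    """Encode one RADIUS attribute as type, length, value."""
    if len(value) > 253:
        raise ValueError("attribute value too long")
    # The length byte counts the two header bytes plus the value.
    return struct.pack("!BB", attr_type, len(value) + 2) + value


def build_access_request(identifier: int, user_name: str) -> bytes:
    """Build a minimal Access-Request: 20-byte header plus User-Name."""
    attributes = encode_attribute(ATTR_USER_NAME, user_name.encode("utf-8"))
    authenticator = os.urandom(16)  # random 16-byte Request Authenticator
    length = 20 + len(attributes)
    header = struct.pack("!BBH", ACCESS_REQUEST, identifier, length)
    return header + authenticator + attributes


def forward_over_radsec(packet: bytes, host: str, port: int = RADSEC_PORT) -> bytes:
    """Send one RADIUS packet through a TLS session and return the reply.

    Hypothetical helper: server verification uses the system trust store,
    and no client certificate is presented.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=5) as raw:
        with context.wrap_socket(raw, server_hostname=host) as tls:
            tls.sendall(packet)
            return tls.recv(4096)
```

The point of the sketch is the split of responsibilities: the RADIUS packet itself is unchanged, and the proxy only changes the transport from plain UDP to a TLS session.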

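This cloud-native microservices architecture enhances the authentication and authorization services, and it supports regular feature updates and necessary security patches while minimizing network downtime.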

Watch the following video to learn about the Mist Access Assurance high-availability architecture:

Design of the Mist Access Assurance service. What we've done is we've placed various access assurance clouds that will do the authentication in various regions around the globe, such that you have a POD on the West Coast, on the East Coast, in Europe, in Asia-Pacific, et cetera.

The way that high availability works, which is actually a very nice and tidy way of doing things, is by looking at the physical location of the site where you have your Mist APs or Juniper switches. Based on the geo-affinity, based on the geo-IP of that site, the authentication traffic, the RadSec channels that these are forming, will automatically be redirected to the nearest access assurance cloud available around the globe.

So if you have a site, as you can see in this example, on the West Coast in the United States, it will automatically be redirected to the Mist Access Assurance cloud on the West Coast as well. Similarly, if you have a site on the East Coast, that authentication traffic will be redirected to the East Coast POD as well.

This will provide you with optimal latency, no matter where your physical sites are, while they're still managed by the same Mist dashboard of your choice.

In addition to that, it also serves as the redundancy mechanism. So should anything happen to one of the access assurance clouds or one of the components of the access assurance cloud which would result in a service disruption, all the authentication traffic will be automatically redirected to the next nearest access assurance cloud available around the globe.
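The selection and failover behavior described in this video can be sketched as "pick the nearest healthy pod". This is only an illustration under stated assumptions: the pod names and coordinates are made up, great-circle distance stands in for whatever geo-affinity metric the service actually uses, and `select_pod` is a hypothetical function.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt


@dataclass(frozen=True)
class Pod:
    name: str
    lat: float
    lon: float
    healthy: bool = True


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points in kilometres."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * 6371.0 * asin(sqrt(a))


def select_pod(site_lat: float, site_lon: float, pods):
    """Return the nearest healthy pod, or None if no pod is available."""
    candidates = [p for p in pods if p.healthy]
    if not candidates:
        return None
    return min(candidates, key=lambda p: haversine_km(site_lat, site_lon, p.lat, p.lon))


# Hypothetical pod locations, chosen only for illustration.
PODS = [
    Pod("us-west", 37.4, -122.1),
    Pod("us-east", 39.0, -77.5),
    Pod("europe", 50.1, 8.7),
]
```

With these assumed locations, a site in Seattle (47.6, -122.3) would be served by `us-west`. If that pod were marked unhealthy, the same call would fall through to `us-east`, which mirrors the "next nearest" redundancy behavior described in the transcript.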

Watch the following video to learn about the Mist Access Assurance workflow:

Mist Assurance authentication service.

So what does it do?

First of all, it's there to do the identification of what or who is trying to connect to our network. Whether it's a wired or wireless device, we want to understand the identity of that user or that device, whether it's using secure authentication, it's using a certificate to identify itself, or it's using credentials to authenticate, or it's just a headless IoT device that just has a MAC address that we can look at.

We want to understand the security posture of the device. We want to understand where it belongs to in a customer organization, or where it's located physically, which site it belongs to.

Once we have the user fingerprint, once we understand what type of user or device is trying to connect, we want to assign a policy. We want to say, OK, if it's an employee, a device that's managed by IT that's using a certificate to identify itself, it's a fairly secure device.

We want to assign a VLAN that has fewer restrictions and that provides full access to the network resources. We can optionally assign a group-based policy tag, or we can assign a role to apply a network policy for that given user.
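The "fingerprint, then policy" step described here can be sketched as an ordered rule list where the first match wins. Everything in this example is an assumption made for illustration: the `Identity` and `Policy` fields, the rule set, the VLAN numbers, and the role names are hypothetical and do not reflect the actual Mist policy schema.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Identity:
    auth_method: str                 # "certificate", "credentials", or "mac"
    managed_by_it: bool = False
    group: Optional[str] = None


@dataclass(frozen=True)
class Policy:
    name: str
    allow: bool
    vlan: Optional[int] = None
    role: Optional[str] = None


# Hypothetical rules, evaluated top to bottom; the first match wins.
RULES: List[Tuple[Callable[[Identity], bool], Policy]] = [
    (lambda i: i.auth_method == "certificate" and i.managed_by_it,
     Policy("corp-managed", allow=True, vlan=10, role="employee")),
    (lambda i: i.auth_method == "credentials",
     Policy("byod", allow=True, vlan=20, role="restricted")),
    (lambda i: i.auth_method == "mac" and i.group == "iot",
     Policy("iot", allow=True, vlan=30, role="iot")),
]

DEFAULT_DENY = Policy("default-deny", allow=False)


def assign_policy(identity: Identity) -> Policy:
    """Return the first matching policy, or deny when nothing matches."""
    for matches, policy in RULES:
        if matches(identity):
            return policy
    return DEFAULT_DENY
```

In this sketch, an IT-managed device that authenticates with a certificate lands on the least restricted VLAN, while a MAC-only device with no known group falls through to the default deny.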

Lastly, but most importantly, we want to validate that end-to-end connectivity experience across the full stack. We don't care just about the authentication and authorization process. We want to combine that whole connection stage end to end.

So we want to answer the question of whether the user or a device is able to authenticate, and if not, why not exactly? Is it an expired certificate? Is it an account that's been disabled?

Is it the policy that's explicitly denying the specific device to connect? We want to answer the question, which policy is assigned to the user?

And after that authentication, we want to confirm and validate that the user is getting a good experience, so it's able to associate with the AP or connect to the right port, get the right VLAN assigned, get the IP address, validate DHCP, resolve ARP and DNS, and finally get access to the network and move packets left and right.

We want to validate that whole path, not just some parts of it. And this is where Marvis anomaly detection and the Marvis conversational interface would help us with troubleshooting.
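Validating the whole path, rather than authentication alone, amounts to walking the connection stages in order and reporting the first one that did not succeed. The sketch below assumes a hypothetical stage list that follows the order used in the transcript; the stage names and the `first_failure` helper are illustrative only and do not describe how Marvis works internally.

```python
from typing import Dict, List, Optional

# Hypothetical stage names, in the order the transcript walks through them.
STAGES: List[str] = [
    "authentication",
    "association",
    "vlan_assignment",
    "dhcp",
    "arp",
    "dns",
]


def first_failure(results: Dict[str, bool]) -> Optional[str]:
    """Return the first stage that failed or was never reached, else None."""
    for stage in STAGES:
        # A stage with no recorded result is treated as not reached.
        if not results.get(stage, False):
            return stage
    return None
```

A client that authenticates and associates but never gets a lease would be reported at the `dhcp` stage, which points troubleshooting at the network service instead of the NAC policy.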

Watch the following video to learn about scaling the Mist Access Assurance architecture:

And now let's take a look a little bit, and let's add scale into perspective. Let's take a look at a typical NAC deployment in a production environment.

When you're looking at any type of scale, obviously one box will not be enough just from a redundancy perspective. But more so from a scaling perspective because you'll need to distribute the load, you'll need to load balance your authentications, your endpoint databases, and things like that.

So you're typically looking at deploying a clustered solution, where you would have your policy or authentication nodes, or your brains of the solution, somewhere closer to your NAS devices, your APs and switches, so that they will authenticate clients, they will do the heavy lifting. And at the top, you would have a pair of management nodes. This is where you would configure your NAC policy. This is where you have the visibility logs and things like that.

So that's the cluster deployment you're going to look at. And obviously, in front of that, you'll need to put a load balancer to actually load balance the authentication requests, the RADIUS requests that are coming in from various devices, so they would hit all these policy nodes respectively.

And today, this whole deployment is customer managed. It's a customer problem to solve. You need to design for it. You need to scale for it. You need to make sure that you can change that when you need to add scale. You need to make sure that you will maintain this during software upgrades, maintenance windows, and things of that nature.

So that becomes a main challenge. These solutions are becoming complex very, very quickly.

And with those solutions, they're still lacking insights and end-to-end visibility. Because we're still looking at a standalone NAC deployment, which is an overlay to your current network infrastructure.

So even vendors who have infrastructure and NAC from the same vendor, they will not give you end-to-end user connectivity experience visibility in one place. You'll troubleshoot your NAC in one place. You'll troubleshoot your network, your controller switch, your AP or whatever, in a totally different place.

And obviously, think of what will happen if you need to do maintenance, or if you just want to get a new feature. How would you upgrade this kind of deployment? That requires a lot of investment from the customer side.

Now, just as an example, I'll put the reference here.

We'll take ClearPass as an example, but it's the same for other vendors.

ClearPass has a clustering tech note which is 50 pages long just on how to set up a cluster.

Nothing else, not about the scaling-- nothing else-- it's just about clustering. Think about the complexity here.

Watch the following video to learn about the microservices-based architecture:

What do we want from a NAC solution today, if we would do it from a clean sheet of paper?

First of all, the architecture has to be microservices based. Ideally, it should be a cloud NAC offering.

It should be managed by the vendor. It should be highly available. Feature upgrades should just come periodically automatically, without requiring any downtime whatsoever. Most importantly, the architecture should be API-based and should allow for cross-platform integration.

Secondly, it has to be IT-friendly. We know that existing solutions have been so complex that you needed to have an expert on the IT team dedicated just to NAC.

We want that new NAC product to be tightly integrated into the network management and operations. So you want that single pane of glass that manages your whole full stack network, as well as all of your network access control rules, and provides end-to-end visibility.

Lastly, with AI being there to help us solve network-specific problems, we want to extend that and make sure that we can capture that end-to-end user connectivity experience and answer questions. Will this affect my end user network experience?

Is it the client configuration problem? Is it the network that is to blame, or network service that is to blame? Is it my NAC policy that is causing users to have a bad experience and have connectivity issues throughout the day?